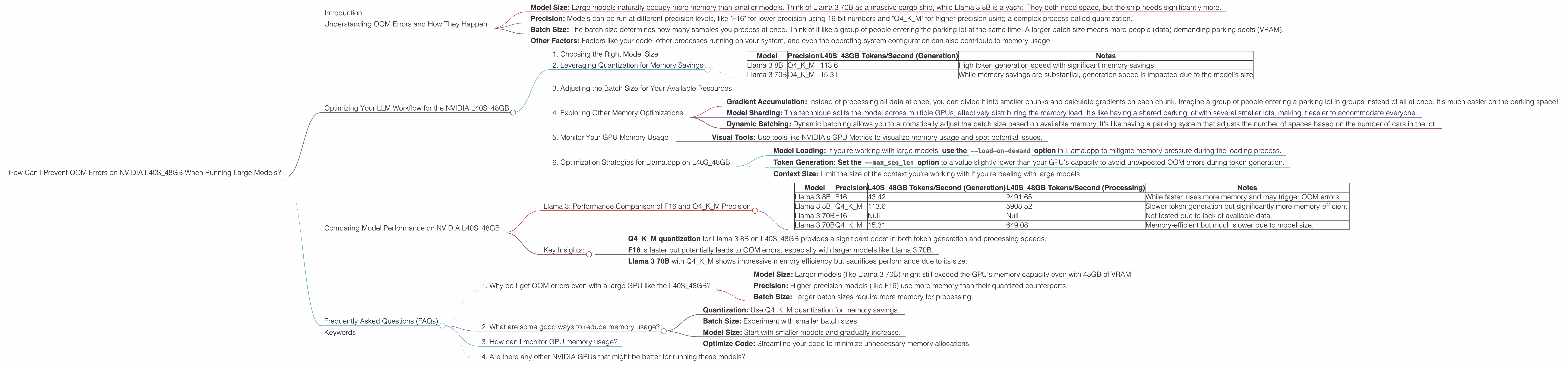

How Can I Prevent OOM Errors on NVIDIA L40S 48GB When Running Large Models?

Introduction

In the ever-evolving landscape of large language models (LLMs), the quest for optimal performance is a constant pursuit. As models grow more sophisticated, they demand more computational resources, which can lead to "out-of-memory" (OOM) errors. The NVIDIA L40S_48GB GPU offers a powerful way to ease these memory constraints. But even with 48GB of VRAM, you can still run into OOM errors, especially with larger models like Llama 3 70B. This article walks through the most effective techniques for preventing OOM errors on your NVIDIA L40S_48GB while running large LLMs.

Understanding OOM Errors and How They Happen

Think of your GPU's VRAM like a limited supply of parking spaces. Every time you load a model, it's like taking a whole bunch of cars to park, and if you don't have enough spaces (VRAM), you get a parking error (OOM). The more complex the model, the more parking spaces (VRAM) it needs.

OOM errors can be a real head-scratcher, but understanding their root cause can help you tackle them. Here's a simplified breakdown:

- Model Size: Large models naturally occupy more memory than smaller models. Think of Llama 3 70B as a massive cargo ship, while Llama 3 8B is a yacht. They both need space, but the ship needs significantly more.

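- Precision: Models can be run at different precision levels. "F16" is the higher-precision option and stores each weight as a 16-bit floating-point number. "Q4_K_M" is a quantized, lower-precision format that stores weights in roughly 4 bits each, trading a little accuracy for a much smaller memory footprint.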

- Batch Size: The batch size determines how many samples you process at once. Think of it like a group of people entering the parking lot at the same time. A larger batch size means more people (data) demanding parking spots (VRAM).

- Other Factors: Factors like your code, other processes running on your system, and even the operating system configuration can also contribute to memory usage.
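To make the model-size and precision factors concrete, here is a rough back-of-the-envelope sketch. It assumes nominal parameter counts (8 billion and 70 billion) and an assumed average of about 4.85 bits per weight for Q4_K_M; both are approximations, and the estimate covers the weights only, not the context cache or other buffers.

```python
GIB = 1024 ** 3

# Approximate bits stored per weight. The Q4_K_M figure is an assumed
# average (the format mixes block types), not an exact constant.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_memory_gib(n_params: float, precision: str) -> float:
    """Estimate memory needed for the weights alone, in GiB."""
    bits = BITS_PER_WEIGHT[precision]
    return n_params * bits / 8 / GIB


if __name__ == "__main__":
    # Nominal parameter counts; real checkpoints differ slightly.
    for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
        for precision in BITS_PER_WEIGHT:
            gib = weight_memory_gib(n_params, precision)
            print(f"{name} {precision}: ~{gib:.1f} GiB of weights")
```

Under these assumptions, the 70B weights at F16 come out far above 48GB, while the quantized version lands below it, which is why precision matters so much on this card.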

Optimizing Your LLM Workflow for the NVIDIA L40S_48GB

1. Choosing the Right Model Size

While you might be tempted to run the biggest model available, remember that bigger isn't always better when it comes to memory. Start small and gradually increase the model size as you become more comfortable.

Example: You might start with Llama 3 8B and, once that runs comfortably, tackle the mighty 70B model.

2. Leveraging Quantization for Memory Savings

Quantization is like squeezing your model's data into smaller packages. It converts the floating-point numbers (F16) used by the model to lower-precision numbers, significantly reducing memory usage.

Think of it like compressing a high-quality photo to a lower-resolution version for sharing. You lose some detail, but the file becomes much smaller.
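The squeeze-and-restore idea can be sketched in a few lines. This is a simplified illustration using one shared scale per block of weights (symmetric "absmax" quantization); it is not the actual scheme llama.cpp uses, and the function names are hypothetical.

```python
def quantize_block(values, bits=4):
    """Quantize a block of floats to signed integers that share one scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quants = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


if __name__ == "__main__":
    block = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.45, 0.02]
    scale, quants = quantize_block(block)
    restored = dequantize_block(scale, quants)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("quantized:", quants)
    print(f"worst-case error: {worst:.4f} (bounded by scale/2 = {scale / 2:.4f})")
```

Each weight now needs only 4 bits plus a small share of the block's scale, at the cost of a rounding error no larger than half a quantization step.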

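- Q4_K_M Quantization: This llama.cpp format stores most weights in about 4 bits each. The "K" refers to the k-quant family, which groups weights into blocks that share scaling factors, and the "M" marks the medium variant, which keeps some tensors at higher precision to protect accuracy. This can lead to impressive savings while maintaining decent accuracy.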

| Model | Precision | L40S_48GB Tokens/Second (Generation) | Notes |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 113.6 | High token generation speed with significant memory savings |
| Llama 3 70B | Q4_K_M | 15.31 | While memory savings are substantial, generation speed is impacted due to the model's size |

Key takeaway: If you're running a large model like Llama 3 70B and are concerned about memory, Q4_K_M quantization is a must-have in your arsenal.

3. Adjusting the Batch Size for Your Available Resources

Batch size is a double-edged sword. Larger batches usually improve throughput for both training and inference, but they also consume more memory. You need to find the sweet spot that balances performance and memory usage.

Example: If you're struggling with OOM errors, try reducing your batch size. Start with smaller batches and gradually increase them until you find the maximum value that doesn't cause OOM errors.
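The start-small-and-grow approach can be automated. The sketch below doubles the batch size until a step fails and returns the last size that worked. Here `run_batch` and `make_fake_runner` are hypothetical stand-ins, and `MemoryError` stands in for whatever out-of-memory exception your framework actually raises.

```python
def find_max_batch_size(run_batch, start=1, limit=1024):
    """Double the batch size until run_batch raises MemoryError or limit is hit.

    Returns the largest size that completed without error.
    """
    best = None
    size = start
    while size <= limit:
        try:
            run_batch(size)
        except MemoryError:
            break
        best = size
        size *= 2
    if best is None:
        raise RuntimeError(f"batch size {start} already runs out of memory")
    return best


def make_fake_runner(max_fitting_size):
    """Hypothetical stand-in for a real inference or training step."""
    def run_batch(size):
        if size > max_fitting_size:
            raise MemoryError(f"simulated OOM at batch size {size}")
    return run_batch


if __name__ == "__main__":
    print(find_max_batch_size(make_fake_runner(max_fitting_size=20)))
```

In practice it is worth leaving some headroom below the value this finds, since memory use can vary from one batch to the next.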

4. Exploring Other Memory Optimizations

- Gradient Accumulation: This applies to training rather than inference. Instead of processing a large batch at once, you split it into smaller micro-batches, compute gradients on each, and add them up before updating the weights. Imagine a group of people entering a parking lot in groups instead of all at once. It's much easier on the parking space!

- Model Sharding: This technique splits the model across multiple GPUs, effectively distributing the memory load. It's like having a shared parking lot with several smaller lots, making it easier to accommodate everyone.

- Dynamic Batching: Dynamic batching allows you to automatically adjust the batch size based on available memory. It's like having a parking system that adjusts the number of spaces based on the number of cars in the lot.
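Gradient accumulation is the easiest of these to show in miniature. The sketch below uses a toy one-parameter model (y = w * x with mean squared error), chosen purely for illustration, to show that averaging gradients over equal-sized micro-batches gives the same result as one large batch while only a micro-batch is processed at a time.

```python
def mse_grad(w, xs, ys):
    """Gradient of mean squared error for the toy model y = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Average gradients over equal-sized micro-batches."""
    if len(xs) % micro_batch_size != 0:
        raise ValueError("data length must be a multiple of micro_batch_size")
    steps = len(xs) // micro_batch_size
    total = 0.0
    for i in range(steps):
        lo = i * micro_batch_size
        hi = lo + micro_batch_size
        # Scale each micro-batch gradient so the sum matches the full-batch mean.
        total += mse_grad(w, xs[lo:hi], ys[lo:hi]) / steps
    return total


if __name__ == "__main__":
    xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
    ys = [2.1, 3.9, 6.2, 7.8, 10.1, 12.2, 13.8, 16.1]
    full = mse_grad(0.5, xs, ys)
    accumulated = accumulated_grad(0.5, xs, ys, micro_batch_size=2)
    print(f"full batch: {full:.6f}, accumulated: {accumulated:.6f}")
```

The two numbers agree up to floating-point rounding, so the peak memory drops with the micro-batch size while the weight update stays the same.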

5. Monitor Your GPU Memory Usage

Keeping an eye on your GPU memory usage is crucial. You can use tools like NVIDIA's nvidia-smi command or visual monitoring tools to see how much VRAM your model is using.

- Visual Tools: Use tools like NVIDIA's GPU Metrics to visualize memory usage and spot potential issues.
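If you want memory readings inside a script rather than in a terminal, one option is to call nvidia-smi and parse its CSV output. This is a minimal sketch that assumes nvidia-smi is installed and on your PATH; the helper names are hypothetical.

```python
import subprocess

# Ask nvidia-smi for used and total memory per GPU, in MiB, as plain CSV.
QUERY = [
    "nvidia-smi",
    "--query-gpu=memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_memory(output: str):
    """Parse nvidia-smi CSV lines into (used_mib, total_mib) tuples, one per GPU."""
    result = []
    for line in output.strip().splitlines():
        used, total = (int(field.strip()) for field in line.split(","))
        result.append((used, total))
    return result


def gpu_memory():
    """Run nvidia-smi and return one (used_mib, total_mib) tuple per GPU."""
    completed = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_memory(completed.stdout)
```

Calling gpu_memory() before and after loading a model shows how much VRAM the model itself takes, and polling it during generation shows how usage grows with context.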

6. Optimization Strategies for Llama.cpp on L40S_48GB

Llama.cpp is an excellent choice for running LLMs locally. Here's a quick overview of memory optimization tips for this framework using L40S_48GB GPUs:

- Model Loading: By default, llama.cpp memory-maps the model file, so weights are paged in as needed rather than copied up front. Use the --n-gpu-layers (-ngl) option to control how many layers are offloaded to the GPU; lowering it keeps the remaining layers in system RAM when VRAM is tight.

- Token Generation: Set the --ctx-size (-c) option no higher than you need. The key/value cache grows with the context length, so an oversized value can cause unexpected OOM errors during token generation.

- Context Size: Limit the size of the context you're working with if you're dealing with large models.
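To see why context size matters, it helps to estimate the key/value cache that the model keeps for each token in the context. The sketch below assumes a 16-bit cache and the published Llama 3 70B shape (80 layers, 8 key/value heads, head dimension 128); treat both as assumptions and check them against your model's metadata.

```python
GIB = 1024 ** 3


def kv_cache_gib(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Estimate key/value cache size in GiB for a single sequence."""
    # Factor of 2: one tensor for keys and one for values in every layer.
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value
    return total_bytes / GIB


if __name__ == "__main__":
    # Assumed Llama 3 70B shape: 80 layers, 8 KV heads, head dimension 128.
    for ctx in (2048, 8192):
        print(f"context {ctx}: ~{kv_cache_gib(80, 8, 128, ctx):.2f} GiB of KV cache")
```

The cache grows linearly with context length, so it comes on top of the weights and can be the difference between fitting in 48GB and running out.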

Comparing Model Performance on NVIDIA L40S_48GB

Llama 3: Performance Comparison of F16 and Q4_K_M Precision

| Model | Precision | L40S_48GB Tokens/Second (Generation) | L40S_48GB Tokens/Second (Processing) | Notes |
|---|---|---|---|---|
| Llama 3 8B | F16 | 43.42 | 2491.65 | Slower than Q4_K_M in these results and uses more memory, so it is more likely to trigger OOM errors. |
| Llama 3 8B | Q4_K_M | 113.6 | 5908.52 | Faster token generation and significantly more memory-efficient. |
| Llama 3 70B | F16 | N/A | N/A | Not tested due to lack of available data; the F16 weights alone (about 140 GB) exceed 48GB. |
| Llama 3 70B | Q4_K_M | 15.31 | 649.08 | Memory-efficient but much slower due to model size. |

Key Insights:

- Q4_K_M quantization for Llama 3 8B on L40S_48GB provides a significant boost in both token generation and processing speeds compared with F16 (roughly 2.6x and 2.4x in the table above).

- F16 keeps full 16-bit precision, but it is slower in these results and uses far more memory, which can lead to OOM errors, especially with larger models like Llama 3 70B.

- Llama 3 70B with Q4_K_M shows impressive memory efficiency but sacrifices performance due to its size.
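The ratios behind these insights can be computed directly from the table. The snippet below simply restates the table's tokens/second figures; the dictionary layout and function name are only for illustration.

```python
# Tokens/second from the table above: (generation, processing).
RESULTS = {
    ("Llama 3 8B", "F16"): (43.42, 2491.65),
    ("Llama 3 8B", "Q4_K_M"): (113.6, 5908.52),
    ("Llama 3 70B", "Q4_K_M"): (15.31, 649.08),
}

GENERATION, PROCESSING = 0, 1


def speed_ratio(faster, slower, column):
    """Return how many times faster one configuration is than another."""
    return RESULTS[faster][column] / RESULTS[slower][column]


if __name__ == "__main__":
    q4_8b = ("Llama 3 8B", "Q4_K_M")
    f16_8b = ("Llama 3 8B", "F16")
    q4_70b = ("Llama 3 70B", "Q4_K_M")
    print(f"8B Q4_K_M vs F16, generation: {speed_ratio(q4_8b, f16_8b, GENERATION):.2f}x")
    print(f"8B Q4_K_M vs F16, processing: {speed_ratio(q4_8b, f16_8b, PROCESSING):.2f}x")
    print(f"8B vs 70B at Q4_K_M, generation: {speed_ratio(q4_8b, q4_70b, GENERATION):.2f}x")
```

On these numbers, the quantized 8B model is the fastest configuration measured, and moving from 8B to 70B at the same precision costs several times the generation speed.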

Frequently Asked Questions (FAQs)

1. Why do I get OOM errors even with a large GPU like the L40S_48GB?

You might experience OOM errors even with a powerful GPU for a few reasons:

- Model Size: Larger models (like Llama 3 70B) can still exceed the GPU's memory capacity even with 48GB of VRAM. At F16, the 70B weights alone need about 140 GB.

- Precision: Higher precision models (like F16) use more memory than their quantized counterparts.

- Batch Size: Larger batch sizes require more memory for processing.

2. What are some good ways to reduce memory usage?

- Quantization: Use Q4_K_M quantization for memory savings.

- Batch Size: Experiment with smaller batch sizes.

- Model Size: Start with smaller models and gradually increase.

- Optimize Code: Streamline your code to minimize unnecessary memory allocations.

3. How can I monitor GPU memory usage?

Use tools like nvidia-smi or visual monitoring tools to track GPU memory usage. This helps you identify potential memory bottlenecks.

4. Are there any other NVIDIA GPUs that might be better for running these models?

While the L40S_48GB is a powerful GPU, its memory may be limited for some of the most massive models. Consider exploring GPUs with even higher memory capacity, such as the 80GB versions of the NVIDIA A100 or H100, if you're working with the largest LLMs.

Keywords

NVIDIA L40S_48GB, LLM, OOM Error, Memory Optimization, Quantization, Batch Size, Llama 3, F16, Q4_K_M, Token Generation, GPU Memory Monitoring, Llama.cpp