How Can I Prevent OOM Errors on NVIDIA A100 PCIe 80GB When Running Large Models?

Introduction

Ever get that dreaded "Out of Memory" (OOM) error when trying to run a large language model (LLM) on your fancy new NVIDIA A100 PCIe 80GB? It's a common frustration for developers and geeks who want to explore the power of these incredible models but hit a wall when their hardware can't keep up.

OOM errors are like trying to cram way too much stuff into a suitcase. It's inevitable that something's going to spill out. In our case, the model's weights plus the working memory it needs while running add up to more than the GPU can hold, and the run fails.

This article is your guide to understanding and preventing OOM errors on your NVIDIA A100 PCIe 80GB. We'll dive into the juicy details of how different LLM sizes and settings affect memory consumption and give you practical tips and tricks to avoid those dreaded OOM errors.

The A100 PCIe 80GB: A Beast with Boundaries

The NVIDIA A100 PCIe 80GB is a powerhouse of a GPU, boasting 80GB of HBM2e memory. That's a lot of memory! However, even this beast has its limits. The size of the LLM you want to run and the specific settings you choose can easily push the limits of the A100 PCIe 80GB's memory.

Understanding the Memory Challenge: A Tale of Tokens and Sizes

Imagine you're trying to fit a giant Lego set into a small box. You've got all these intricate pieces, but they don't quite fit. That's essentially what happens when you try to run a big LLM model on a GPU with limited memory.

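LLMs, like that giant LEGO set, are made up of smaller pieces called "parameters" (or weights): the numbers the model learned during training. The bigger the model, the more parameters it has, and the more memory it needs. "Tokens" are something different: they are the chunks of text the model reads and writes. Each token represents a word, punctuation mark, or even part of a word, like "un-" in "unhappy." The more tokens you feed in at once, the more working memory the model needs on top of its weights.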

But wait, there's more! The numeric format you store the model's weights in (its "quantization") also plays a role in memory consumption. Imagine the LEGO set now comes in different sizes. One set uses large bricks, taking up more space. Another uses smaller bricks, fitting more in the box.

In LLMs, quantization is like choosing the "brick size." It lets you trade off some accuracy for a smaller memory footprint.

Practical Tips to Combat OOM Errors

Choosing Your Model Wisely: It's All About Size

Not all LLMs are created equal. Some are compact like a pocket-sized LEGO set, while others are gargantuan. The "size" of your LLM directly impacts your GPU's memory consumption.

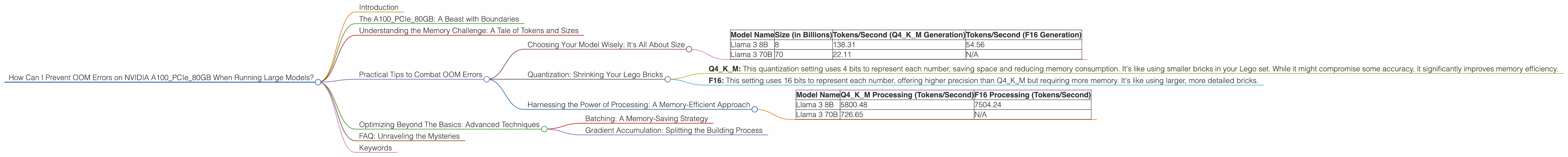

Here's a breakdown of some common LLM sizes and their performance on the A100 PCIe 80GB, based on the available data:

| Model Name | Parameters (Billions) | Generation, Q4_K_M (Tokens/Second) | Generation, F16 (Tokens/Second) |
|---|---|---|---|
| Llama 3 8B | 8 | 138.31 | 54.56 |
| Llama 3 70B | 70 | 22.11 | N/A |

Note: The available data for the A100 PCIe 80GB has no F16 result for Llama 3 70B. The likely reason is memory: at 16 bits (2 bytes) per parameter, 70 billion parameters need roughly 140 GB for the weights alone, well beyond the card's 80 GB, so an OOM error is the expected outcome.

Key Takeaway: Smaller models, like Llama 3 8B, leave plenty of headroom at either precision, making them far less likely to cause OOM errors. Larger models, like Llama 3 70B, push the limits of your A100 PCIe 80GB: they run with Q4_K_M quantization but are too big for the card at F16.
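The arithmetic behind this takeaway can be sketched in a few lines. This is a rough estimate, not a measurement: it counts weights only, the bits-per-weight values are nominal (real 4-bit Q4_K_M files come out somewhat larger), and `headroom_gb` is a hypothetical allowance for everything else the GPU has to hold while the model runs.

```python
# Rough weight-memory estimate: billions of parameters x bytes per parameter = GB.
GPU_MEMORY_GB = 80  # A100 PCIe 80GB

# Nominal bits per weight (assumption: real Q4_K_M files average a bit more than 4).
BITS_PER_WEIGHT = {"Q4_K_M": 4, "F16": 16}

# Parameter counts in billions, taken from the table above.
MODELS = {"Llama 3 8B": 8, "Llama 3 70B": 70}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_on_gpu(params_billion: float, bits_per_weight: float,
                gpu_gb: float = GPU_MEMORY_GB, headroom_gb: float = 4.0) -> bool:
    """True if weights plus a hypothetical working-memory allowance fit on the GPU."""
    return weight_memory_gb(params_billion, bits_per_weight) + headroom_gb <= gpu_gb


for model, params in MODELS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_on_gpu(params, bits) else "likely OOM"
        print(f"{model} at {fmt}: ~{size:.0f} GB of weights -> {verdict}")
```

Treat the result as a first filter. A model that passes can still run out of memory with very long prompts or large batches, so leave yourself more headroom than the bare minimum.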

Quantization: Shrinking Your Lego Bricks

Quantization is like switching the brick sizes in your LEGO set. It allows you to use different precision levels for your model, trading off some accuracy for a smaller memory footprint.

Think of it this way: Imagine you're building a LEGO car. You can use large, detailed bricks for intricate details, but it takes up more space. Or, you can use smaller bricks to build the car in a more compact way, sacrificing some detail.

Quantization does the same for LLMs.

- Q4_K_M: This quantization setting stores each weight in roughly 4 bits (slightly more on average once per-block scaling factors are counted), saving space and reducing memory consumption. It's like using smaller bricks in your LEGO set. While it might compromise some accuracy, it significantly improves memory efficiency.

- F16: This setting stores each weight as a 16-bit floating-point number. Strictly speaking it is the unquantized baseline rather than a quantization level. It offers higher precision than Q4_K_M but needs three to four times as much memory for the weights. It's like using larger, more detailed bricks.

Key Takeaway: Quantization offers a powerful way to control the memory usage of your LLM. Choosing the right setting is a balance between accuracy and memory efficiency.
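To make the accuracy-for-memory trade concrete, here is a toy sketch of 4-bit quantization. It is deliberately simplified: one shared scale for a small block of made-up weights, with each value rounded to an integer between -8 and 7. It is not the actual scheme used by any particular 4-bit file format, and the function names are illustrative only.

```python
def quantize_4bit(values):
    """Map floats to integers in [-8, 7] using one shared scale for the block."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the 4-bit codes."""
    return [c * scale for c in codes]


# Made-up example weights (assumption: real models have billions of these).
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

bytes_f16 = len(weights) * 16 / 8   # 2 bytes per weight
bytes_4bit = len(weights) * 4 / 8   # half a byte per weight, scale not counted

print("4-bit codes:", codes)
print(f"largest rounding error: {max_error:.4f}")
print(f"storage: {bytes_f16:.0f} bytes at 16 bits vs {bytes_4bit:.0f} bytes at 4 bits")
```

The restored values are close to the originals but not identical. That small rounding error is the "lost detail" in the brick analogy, and the reduction in bytes is the space you get back.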

Harnessing the Power of Processing: A Memory-Efficient Approach

Processing speed, meaning how fast the GPU reads through your prompt, is the other number worth watching. Think of it as how quickly you can sort through your LEGO pieces before you start building. Speed on its own doesn't free up space on your workbench (your GPU's memory), but the two are linked: a model only reaches these speeds when it fits entirely in GPU memory, and the quantization setting you pick changes both.

The A100 PCIe 80GB is capable of incredibly high processing speeds. Note that for Llama 3 8B, F16 actually processes prompts faster than Q4_K_M, even though Q4_K_M is faster at generation and uses far less memory. Here's a comparison of processing speeds based on the available data:

| Model Name | Processing, Q4_K_M (Tokens/Second) | Processing, F16 (Tokens/Second) |
|---|---|---|
| Llama 3 8B | 5800.48 | 7504.24 |
| Llama 3 70B | 726.65 | N/A |

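Key Takeaway: Processing speed doesn't lower your model's memory needs. Pick a model size and quantization setting that fit in memory first, then use the speed figures to decide whether the result is fast enough for you.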
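To turn the tokens-per-second figures into something you can feel, the sketch below estimates wall-clock time for a single request from the processing and generation numbers quoted in this article. It assumes throughput stays constant regardless of prompt length, which is a simplification, and the function name is illustrative only.

```python
# Throughput figures copied from the tables in this article (tokens/second).
THROUGHPUT = {
    ("Llama 3 8B", "Q4_K_M"): {"processing": 5800.48, "generation": 138.31},
    ("Llama 3 8B", "F16"): {"processing": 7504.24, "generation": 54.56},
    ("Llama 3 70B", "Q4_K_M"): {"processing": 726.65, "generation": 22.11},
}


def estimate_seconds(model: str, fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, in seconds."""
    rates = THROUGHPUT[(model, fmt)]
    return prompt_tokens / rates["processing"] + output_tokens / rates["generation"]


# Example request (assumption): a 1000-token prompt and a 200-token reply.
for (model, fmt) in THROUGHPUT:
    seconds = estimate_seconds(model, fmt, prompt_tokens=1000, output_tokens=200)
    print(f"{model} at {fmt}: about {seconds:.1f} s")
```

For a typical chat-style request, generation dominates the total, which is why the 8B model at Q4_K_M comes out ahead of F16 here despite its slower prompt processing.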

Optimizing Beyond The Basics: Advanced Techniques

We've covered the basics of preventing OOM errors. Now, let's explore some advanced techniques that can further optimize your GPU's memory use:

Batching: A Memory-Saving Strategy

Batching is like dividing your LEGO instructions into smaller sets. Each set focuses on building a particular section of the car. By focusing on one section at a time, you can manage the number of pieces on your workbench, reducing clutter and improving efficiency.

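In the context of LLMs, the batch size is how many inputs the GPU works on at the same time. Processing data in smaller chunks (batches) rather than all at once lowers peak memory use, because only one batch's intermediate results have to sit on the GPU at any moment. The trade-off is throughput: smaller batches usually mean more passes and a longer total run.

Key Takeaway: Lowering the batch size is a simple way to reduce peak memory consumption, especially for larger models like the Llama 3 70B. It's like splitting the LEGO set into smaller, more manageable chunks. Note that it shrinks working memory, not the model's weights, so the model itself still has to fit.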
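A minimal sketch of the idea: split a list of prompts into fixed-size batches and hand them to the model one batch at a time. `fake_model` is a hypothetical stand-in for a real inference call and `batch_size=4` is an arbitrary choice; in practice you would lower the batch size until the OOM errors stop.

```python
from typing import Iterator, List, Sequence


def iter_batches(items: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of `items`, each with at most `batch_size` entries."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield list(items[start:start + batch_size])


def fake_model(batch: List[str]) -> List[int]:
    """Hypothetical stand-in for an LLM call: returns a word count per prompt."""
    return [len(prompt.split()) for prompt in batch]


prompts = [f"prompt number {i}" for i in range(10)]

results = []
peak_batch = 0
for batch in iter_batches(prompts, batch_size=4):
    peak_batch = max(peak_batch, len(batch))  # most prompts "in flight" at once
    results.extend(fake_model(batch))

print(f"processed {len(results)} prompts, never more than {peak_batch} at a time")
```

All ten prompts get processed, but the model never sees more than four at once, which is what keeps peak memory bounded.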

Gradient Accumulation: Splitting the Building Process

Gradient accumulation is like building a LEGO car in stages. Instead of assembling everything at once, you build one section at a time and only step back to adjust your overall plan after several sections are done.

In the context of LLMs, this applies to training and fine-tuning rather than to simply running a model. You compute gradients on several smaller batches, add them up, and only then update the model weights. The result closely matches training with one large batch, while the GPU only ever holds one small batch's intermediate results in memory.

Key Takeaway: Gradient accumulation is a valuable optimization strategy when training or fine-tuning larger models, but it takes time, because each weight update now needs several passes. If you have the patience, this technique can be a game changer for preventing OOM errors during training. It won't help if the model's weights alone don't fit.
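Here is a minimal, framework-free sketch of gradient accumulation on a toy problem: fitting a single weight `w` so that `w * x` matches `y`. The data, learning rate, and micro-batch size are made-up illustration values. Real training code in a deep learning framework follows the same pattern, with the framework computing the gradients for you.

```python
def mse_gradient(w, xs, ys):
    """Gradient of the mean squared error of y ~ w * x with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_gradient(w, xs, ys, micro_batch_size):
    """Average the gradients of equal-sized micro-batches, one micro-batch at a time."""
    steps = len(xs) // micro_batch_size
    total = 0.0
    for step in range(steps):
        lo = step * micro_batch_size
        hi = lo + micro_batch_size
        # Only this small slice needs to be "in memory" at once.
        total += mse_gradient(w, xs[lo:hi], ys[lo:hi]) / steps
    return total


# Toy data (assumption): the true relationship is y = 2 * x.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]

w = 0.0
learning_rate = 0.01
for _ in range(50):
    grad = accumulated_gradient(w, xs, ys, micro_batch_size=2)
    w -= learning_rate * grad  # one weight update per 4 accumulated micro-batches

print(f"learned w = {w:.6f}")
```

With equal-sized micro-batches, the accumulated gradient matches the gradient of the full batch, so the training result is the same. The cost is time: each update now takes four small passes instead of one large one.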

FAQ: Unraveling the Mysteries

1. What is quantization, and how does it help prevent OOM errors?

Quantization is a technique that reduces the precision of numbers used in an LLM, resulting in a smaller memory footprint. It's like using smaller LEGO bricks to build a car. You might lose some detail, but you gain significantly more space in your toolbox (GPU memory).

2. Why do larger models require more memory?

Larger LLMs have more parameters (like LEGO pieces), needing a larger toolbox (GPU memory) to accommodate all the parts.

3. Can I use a different GPU if I'm facing OOM errors with the A100 PCIe 80GB?

Yes. If your model needs more memory than the A100 PCIe 80GB provides, a GPU with more memory, or splitting the model across several GPUs, could be a solution. However, you'll need to consider the cost and availability of such hardware.

Keywords

A100 PCIe 80GB, OOM error, LLM, out of memory, GPU, memory, Llama 3, Llama 3 8B, Llama 3 70B, quantization, Q4_K_M, F16, batching, gradient accumulation, tokens, processing speed, performance, model size, memory optimization.