How Can I Prevent OOM Errors on NVIDIA 4070 Ti 12GB When Running Large Models?

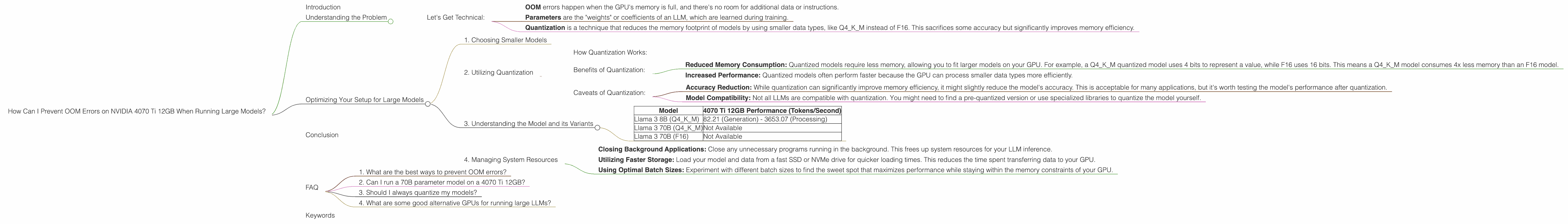

Introduction

Running large language models (LLMs) locally can be a rewarding experience. It allows you to control your data, experiment with different models, and even customize them to your needs. However, one of the biggest challenges you might encounter is the dreaded Out Of Memory (OOM) error. This occurs when the model and its working data need more memory than your graphics card (GPU) has available, so loading the model or running inference fails.

Imagine trying to squeeze a giant elephant into a tiny shoebox - that's what happens with an OOM error. Your GPU is the shoebox, and the LLM is the elephant. The larger the model, the bigger the elephant, and the more likely it will crash your system.

In this article, we'll focus on the NVIDIA 4070 Ti 12GB GPU and explore solutions to prevent OOM errors when working with large LLMs. We'll dive into different techniques like quantization, model size selection, and practical strategies for efficient memory management.

Understanding the Problem

The NVIDIA 4070 Ti 12GB offers a decent amount of memory, but it is often not enough for modern LLMs. These models need memory for their parameters and for the intermediate data produced during inference. For example, a 70 billion parameter model stored in F16 needs about 140GB for its weights alone (70 billion parameters × 2 bytes each), far more than 12GB.

The primary culprits for exceeding your GPU's memory capacity are:

- Model Size: Larger models naturally need more memory.

- Precision: Higher precision (like F16 or F32) requires more memory than lower precision (like Q4_K_M).

- Batch Size: Larger batches require more memory as the GPU processes multiple inputs at once.
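
The first two factors can be combined into a back-of-the-envelope estimate: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below is illustrative only. The function name and the bits-per-weight table are assumptions made for this example, and the Q4_K_M figure is an approximate average rather than a measured value.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# The bits-per-weight values are approximations (assumptions), not measurements.
BITS_PER_WEIGHT = {
    "F32": 32.0,
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average; the real figure varies by model
}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Return the approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    bits = BITS_PER_WEIGHT[precision]
    return n_params * bits / 8 / 1e9


for name, n_params in [("8B", 8e9), ("70B", 70e9)]:
    for precision in ("F16", "Q4_K_M"):
        gb = weight_memory_gb(n_params, precision)
        verdict = "fits in" if gb < 12 else "exceeds"
        print(f"{name} {precision}: ~{gb:.1f} GB, {verdict} 12 GB (weights only)")
```

The estimate covers weights only. Intermediate data and anything else already using the GPU come on top, so a model whose weights land close to 12GB will probably still run out of memory.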

Let's Get Technical:

- OOM errors happen when the GPU's memory is full, and there's no room for additional data or instructions.

- Parameters are the "weights" or coefficients of an LLM, which are learned during training.

- Quantization is a technique that reduces the memory footprint of models by using smaller data types, like Q4_K_M instead of F16. This sacrifices some accuracy but significantly improves memory efficiency.
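
Before loading a model, it can help to check how much GPU memory is already in use. The sketch below shells out to `nvidia-smi`, which ships with the NVIDIA driver, and parses its CSV output. The helper names are hypothetical, and the code assumes `nvidia-smi` is on your PATH; if it is not, the query simply returns an empty list.

```python
import subprocess


def parse_memory_line(line: str) -> tuple[int, int]:
    """Parse one 'used, total' CSV line (values in MiB) from nvidia-smi."""
    used, total = (int(field.strip()) for field in line.split(","))
    return used, total


def query_gpu_memory() -> list[tuple[int, int]]:
    """Return (used_mib, total_mib) per GPU, or [] if the query fails."""
    cmd = [
        "nvidia-smi",
        "--query-gpu=memory.used,memory.total",
        "--format=csv,noheader,nounits",
    ]
    try:
        out = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
        return [parse_memory_line(line) for line in out.splitlines() if line.strip()]
    except (OSError, subprocess.CalledProcessError, ValueError):
        # nvidia-smi missing, returned an error, or printed something unexpected
        return []


for index, (used, total) in enumerate(query_gpu_memory()):
    print(f"GPU {index}: {used} MiB used, {total - used} MiB free of {total} MiB")
```

If the free figure is already well below the total before you load anything, another application is holding GPU memory.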

Optimizing Your Setup for Large Models

Let's explore the ways to overcome the OOM hurdle and successfully run large LLMs on your NVIDIA 4070 Ti 12GB.

1. Choosing Smaller Models

The most obvious solution is to opt for a smaller model. This may require tweaking your expectations regarding the complexity and capabilities of the model.

For example, instead of a 70B parameter model, you could consider a 7B or 8B parameter version. While it might have slightly reduced performance compared to a larger model, it could be a practical solution for your GPU's memory limits.

2. Utilizing Quantization

Quantization is a technique that reduces the memory footprint of models by using smaller data types. It's like simplifying a complex recipe by substituting high-quality ingredients with more affordable ones. The result might not be exactly the same, but it's good enough and significantly reduces the cost (in this case, memory).

How Quantization Works:

Imagine a number represented in a full-fledged F16 format - it's like a high-resolution image. Quantization effectively reduces the number of bits used to represent that number, like downsampling an image to a lower resolution. While the information loses some detail, it retains enough for basic understanding.
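
To make the idea concrete, here is a toy example that rounds a small block of weights to 4-bit integers with one shared scale factor, then converts them back. It is a deliberately simplified sketch and not the actual Q4_K_M scheme, which is more elaborate. The function names and sample weights are made up for illustration.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the 4-bit codes."""
    return [scale * c for c in codes]


# Hypothetical sample weights, chosen only for illustration
weights = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.02, 0.29]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("largest rounding error:", max_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, and the rounding error is at most half of one quantization step. That lost detail is the accuracy cost discussed under the caveats.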

Benefits of Quantization:

- Reduced Memory Consumption: Quantized models require less memory, allowing you to fit larger models on your GPU. For example, a Q4_K_M quantized model stores each weight in roughly 4 to 5 bits on average, while F16 uses 16 bits. This means a Q4_K_M model needs roughly 3 to 4 times less memory for its weights than the F16 version.

- Increased Performance: Quantized models often generate tokens faster, because less data has to be read from GPU memory for each token.

Caveats of Quantization:

- Accuracy Reduction: While quantization can significantly improve memory efficiency, it might slightly reduce the model's accuracy. This is acceptable for many applications, but it's worth testing the model's performance after quantization.

- Model Compatibility: Not every LLM is available in a quantized format that your inference software supports. You might need to find a pre-quantized version or use specialized libraries to quantize the model yourself.

3. Understanding the Model and its Variants

Memory demands differ widely even within one model family. For example, Llama 3 comes in 8B and 70B parameter sizes, and each can be stored at different precisions.

Here's a comparison of Llama 3 variants and their measured throughput on the 4070 Ti 12GB:

| Model | Generation on 4070 Ti 12GB (Tokens/Second) | Processing on 4070 Ti 12GB (Tokens/Second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 82.21 | 3653.07 |
| Llama 3 70B (Q4_K_M) | Not Available | Not Available |
| Llama 3 70B (F16) | Not Available | Not Available |

As you can see, data is only available for Llama 3 8B in Q4_K_M quantization.

Llama 3 8B at Q4_K_M fits on a 12GB GPU, although at F16 its weights alone would need about 16GB. The 70B model needs far more memory than the 4070 Ti 12GB offers at either precision, because it has almost nine times as many parameters.
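
The two throughput figures for Llama 3 8B can be turned into a rough response-time estimate. The sketch below assumes both rates stay constant regardless of prompt length, which is a simplification. The function name and the example token counts are made up for illustration.

```python
# Throughput figures for Llama 3 8B from the table above (tokens/second)
PROCESSING_TOKENS_PER_SECOND = 3653.07
GENERATION_TOKENS_PER_SECOND = 82.21


def estimate_latency_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate total time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / PROCESSING_TOKENS_PER_SECOND
    generation_time = output_tokens / GENERATION_TOKENS_PER_SECOND
    return prompt_time + generation_time


# Hypothetical request: a 1000-token prompt and a 500-token reply
total = estimate_latency_seconds(prompt_tokens=1000, output_tokens=500)
print(f"Estimated time: {total:.1f} s")
```

Generation dominates the total, since prompt processing is more than 40 times faster per token than generation on this card.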

4. Managing System Resources

Beyond your GPU, your system's overall memory and CPU resources are crucial. To improve performance, you can try the following:

- Closing Background Applications: Close any unnecessary programs running in the background. This frees up system resources for your LLM inference.

- Utilizing Faster Storage: Load your model and data from a fast SSD or NVMe drive for quicker loading times. This shortens startup, although it does not change how much GPU memory the model needs.

- Using Optimal Batch Sizes: Experiment with different batch sizes to find the sweet spot that maximizes performance while staying within the memory constraints of your GPU.
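
Batch size matters because transformer inference keeps a key/value cache for every sequence it is processing, and that cache grows with both batch size and context length. The sketch below estimates the cache size. The layer count, key/value head count, and head dimension are assumed values for Llama 3 8B, "batch size" here means the number of sequences processed at once, and the cache is assumed to be stored in 16-bit values.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, batch_size: int,
                   bytes_per_value: int = 2) -> int:
    """Estimate key/value cache size in bytes (the factor 2 covers keys and values)."""
    per_token = 2 * n_layers * n_kv_heads * head_dim * bytes_per_value
    return per_token * context_tokens * batch_size


# Assumed architecture values for Llama 3 8B
LLAMA3_8B = {"n_layers": 32, "n_kv_heads": 8, "head_dim": 128}

for batch_size in (1, 4, 8):
    size = kv_cache_bytes(**LLAMA3_8B, context_tokens=8192, batch_size=batch_size)
    print(f"batch size {batch_size}: ~{size / 2**30:.1f} GiB of cache")
```

Under these assumptions the cache grows linearly with batch size, so halving the batch size or the context length halves this part of the memory budget.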

Conclusion

Running large LLMs on an NVIDIA 4070 Ti 12GB GPU requires some careful planning and optimization. It's a balancing act between model size, precision, and available memory. By understanding the limitations of your hardware and employing techniques like quantization and efficient resource management, you can avoid OOM errors and unlock the capabilities of these powerful models.

FAQ

1. What are the best ways to prevent OOM errors?

The best ways to prevent OOM errors are to utilize smaller models or quantize them to reduce their memory footprint. You can also try optimizing system resources by closing unnecessary applications and using faster storage.

2. Can I run a 70B parameter model on a 4070 Ti 12GB?

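Based on the available data, it's unlikely that you can run a 70B parameter Llama 3 model on a 4070 Ti 12GB without encountering OOM errors. Even at Q4_K_M, the weights of a 70B model come to roughly 40GB, several times the card's 12GB, so a smaller batch size alone will not make it fit. Software that can keep part of the model in system RAM may still run it, but much more slowly.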

3. Should I always quantize my models?

Quantization might not be suitable for every application. While it offers memory savings and performance improvements, it can slightly reduce model accuracy. It's best to experiment and see if the trade-off is acceptable for your application.

4. What are some good alternative GPUs for running large LLMs?

If you encounter OOM errors with your 4070 Ti 12GB, you might consider upgrading to a GPU with higher memory capacity. Some popular options include the RTX 4080 (16GB) or RTX 4090 (24GB).

Keywords

LLM, large language model, NVIDIA, 4070 Ti 12GB, OOM, Out Of Memory, memory management, quantization, memory efficiency, batch size, Llama 3, performance, GPU, model size, resource management.