How Can I Prevent OOM Errors on NVIDIA 3090 24GB x2 When Running Large Models?

Introduction

Have you ever wanted to run cutting-edge large language models (LLMs) on your own machine? The thought of generating text, translating languages, or writing different kinds of creative content with the power of LLMs is tempting, but the memory requirements can be a real headache. You might be thinking, "I have a powerful NVIDIA 3090 24GB x2 setup, shouldn't I be good to go?" Well, not necessarily. These LLMs are massive, and even with a powerful setup, you can get hit by the dreaded "Out of Memory" (OOM) error.

This article looks at the most common concern of people running LLMs on an NVIDIA 3090 24GB x2 setup: how to prevent OOM errors. We'll cover quantization, model size, and other strategies for getting the most out of your hardware. So buckle up and join us on this journey to conquer the memory demons and unleash the full power of LLMs.

Understanding the Problem: Why OOM Errors Happen

Imagine trying to fit an elephant into a shoebox. You might get the head in, but the rest of the elephant is going to have a hard time. LLMs are like those elephants: they're huge. They require a ton of memory, and if you're not careful, you can easily run out of it. This is where the OOM error comes in. It's essentially a "shoebox too small" message from your computer.

But why does this happen even with two GPUs that each have 24GB of dedicated memory, 48GB in total? The answer lies in the sheer size of these models. For instance, Llama 3 70B, a popular model, has 70 billion parameters. Stored at F16 (2 bytes per parameter), the weights alone need roughly 140GB, nearly three times what the two cards offer. On top of the weights, the model keeps a cache for every token of input text and generated output, so memory demand grows with the length of the prompt and the response and can easily exceed the available capacity.
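As a rough sanity check on those sizes, weight memory can be estimated as parameter count times bytes per parameter. The sketch below is a back-of-the-envelope helper, not a measurement: the function name `weight_memory_gib` is made up for illustration, the parameter counts are rounded to 8 and 70 billion, and the estimate ignores the per-token cache and any runtime overhead.

```python
GIB = 1024 ** 3  # bytes in one GiB


def weight_memory_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed for the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


# Assumption: two cards with 24 GiB each, treated as one 48 GiB pool.
total_vram_gib = 2 * 24

for name, n_params in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
    f16_gib = weight_memory_gib(n_params, 16)
    verdict = "fits" if f16_gib <= total_vram_gib else "does not fit"
    print(f"{name}: ~{f16_gib:.0f} GiB of weights at F16, {verdict} in {total_vram_gib} GiB")
```

Treating the two cards as a single pool is optimistic, since a model split across GPUs rarely divides perfectly evenly, so a result close to the limit should be read as "probably too tight".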

Strategies to Combat OOM Errors

Don't despair! You can still enjoy the power of LLMs even if you face memory constraints. Here are some strategies to prevent OOM errors and get your LLMs running smoothly on your NVIDIA 3090 24GB x2 setup:

Quantization: Shrinking the Elephant

Quantization is a powerful technique that helps shrink the memory footprint of LLMs. Imagine that instead of using a full-size elephant, you get a miniature version. It's still an elephant, but it takes up much less space.

Quantization replaces the 16-bit floating-point numbers (F16) that LLM weights are usually stored in with smaller representations, such as the 4-bit Q4KM format (usually written Q4_K_M) used in the table below. Going from 16 bits to about 4 bits per weight cuts the memory needed for the weights to roughly a quarter, allowing you to fit larger models on your GPUs.
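To make the idea concrete, here is a toy sketch of 4-bit quantization: each block of weights is stored as small integers plus one shared scale factor. This is a simplified absmax scheme chosen for illustration, not the actual Q4KM format, and the function names and sample weights are made up.

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block of floats to signed ints."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit codes
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    # Round each value to the nearest code and clamp to the valid range.
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the stored codes and scale."""
    return [scale * c for c in codes]


# Hypothetical block of eight weights.
weights = [0.12, -0.53, 0.07, 0.91, -0.33, 0.0, 0.45, -0.88]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, max reconstruction error: {max_error:.4f}")
```

The reconstruction error is where the accuracy cost of quantization comes from: every weight is snapped to the nearest of a small number of levels, so the restored values are close to, but not identical to, the originals.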

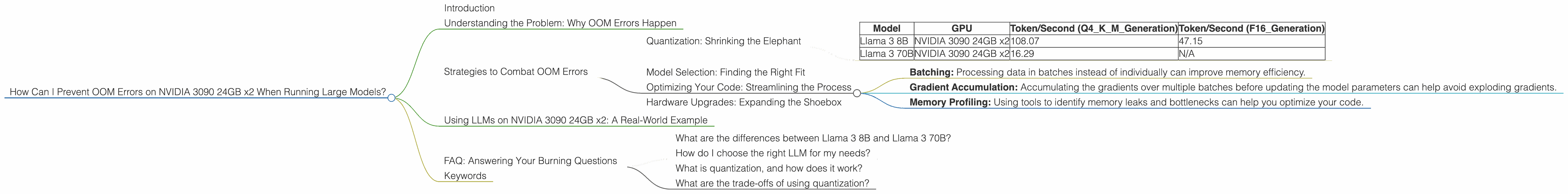

Quantization Performance:

| Model | GPU | Tokens/second (Q4KM generation) | Tokens/second (F16 generation) |
|---|---|---|---|
| Llama 3 8B | NVIDIA 3090 24GB x2 | 108.07 | 47.15 |
| Llama 3 70B | NVIDIA 3090 24GB x2 | 16.29 | N/A |

(Note: Data for Llama 3 70B with F16 is not available)

For Llama 3 8B, Q4KM generates about 2.3 times as many tokens per second as F16 (108.07 vs. 47.15). While this comes at the cost of some accuracy, the trade-off is acceptable for many use cases.

Model Selection: Finding the Right Fit

Not all LLMs are created equal. Some are smaller and more lightweight, while others are colossal. Choosing the right model for your hardware and needs is essential. For example, if you're dealing with memory constraints, you might opt for Llama 3 8B, which is smaller and more efficient than the larger Llama 3 70B.

Optimizing Your Code: Streamlining the Process

If you're a developer, you can use various techniques to optimize your code and reduce memory usage. This can involve things like:

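- Batching: Splitting a large workload into smaller batches caps the peak memory used at any one time. If a batch triggers an OOM error, reduce the batch size.

- Gradient Accumulation: When fine-tuning, accumulating the gradients over several small batches before updating the model parameters gives the effect of a larger batch without holding it in memory all at once.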

- Memory Profiling: Using tools to identify memory leaks and bottlenecks can help you optimize your code.
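For the memory profiling item, one lightweight option is to poll `nvidia-smi` and watch how much headroom each card has left while the model runs. The sketch below is one possible approach, not a full profiler: the helper names are made up, and the sample reading is invented so the parsing logic can be tried without a GPU.

```python
import subprocess

# nvidia-smi query that prints "used, total" in MiB, one line per GPU.
QUERY = [
    "nvidia-smi",
    "--query-gpu=memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_memory(csv_text):
    """Parse 'used, total' lines (MiB) into a list of (used, total) tuples."""
    readings = []
    for line in csv_text.strip().splitlines():
        used, total = (int(field.strip()) for field in line.split(","))
        readings.append((used, total))
    return readings


def read_gpu_memory():
    """Run nvidia-smi and return per-GPU (used_mib, total_mib) readings."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_memory(result.stdout)


def headroom_mib(readings):
    """Free memory per GPU in MiB."""
    return [total - used for used, total in readings]


# Invented sample output for a two-GPU machine (not a real measurement).
sample = "21504, 24576\n1024, 24576\n"
print(headroom_mib(parse_gpu_memory(sample)))
```

Calling `read_gpu_memory()` before and after loading a model, or between batches, shows which step pushes a card toward its limit. A lopsided reading like the sample, with one card nearly full and the other almost empty, suggests the model is not being split across both GPUs.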

Hardware Upgrades: Expanding the Shoebox

If you've tried all the strategies above and you're still facing OOM errors, it might be time to consider hardware upgrades. Adding another GPU or moving to cards with more memory gives you the extra space you need to run larger LLMs without running into memory issues.

Using LLMs on NVIDIA 3090 24GB x2: A Real-World Example

Let's look at a realistic scenario. Imagine you're a content creator who wants to use an LLM to generate high-quality articles. You have an NVIDIA 3090 24GB x2 setup, but you find that running Llama 3 70B results in OOM errors. What are your options?

Solution 1: Quantization

You can try quantizing the Llama 3 70B model to Q4KM. This will reduce its memory footprint and allow you to run it on your setup. However, keep in mind that quantization might slightly reduce output quality compared to the F16 model.
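Whether the quantized model actually fits depends on more than the weights, because the key-value cache grows with context length. The sketch below adds the two together as a rough fit check. Every number in it is an assumption to adjust for your own setup: about 4.8 bits per weight for a Q4KM-style format, the commonly cited Llama 3 70B shape (80 layers, 8 key-value heads, head dimension 128), an 8192-token context with a 16-bit cache, and 2 GiB of runtime overhead. The function names are made up for illustration.

```python
GIB = 1024 ** 3  # bytes in one GiB


def kv_cache_gib(n_layers, n_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Size of the key and value tensors for all layers and cached tokens, in GiB."""
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value
    return total_bytes / GIB


def fits(weights_gib, cache_gib, vram_gib, overhead_gib=2.0):
    """True if weights, cache, and an assumed overhead fit in the VRAM budget."""
    return weights_gib + cache_gib + overhead_gib <= vram_gib


# Assumed figures, see the paragraph above.
q4_weights_gib = 70e9 * 4.8 / 8 / GIB
f16_weights_gib = 70e9 * 16 / 8 / GIB
cache_gib = kv_cache_gib(n_layers=80, n_kv_heads=8, head_dim=128, context_tokens=8192)
vram_gib = 2 * 24

print(f"Q4 weights: ~{q4_weights_gib:.1f} GiB, KV cache: ~{cache_gib:.1f} GiB")
print("Q4 fits: ", fits(q4_weights_gib, cache_gib, vram_gib))
print("F16 fits:", fits(f16_weights_gib, cache_gib, vram_gib))
```

Under these assumptions the quantized model fits with a few GiB to spare, which also means a much longer context or a larger overhead could tip it back into OOM territory. Shortening the context is the cheapest lever if that happens.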

Solution 2: Model Selection

Alternatively, you can opt for a smaller model like Llama 3 8B. This model should comfortably fit on your GPU and offer a good balance between performance and memory efficiency.

Solution 3: Batching

If you're coding with a framework like PyTorch, batching your data can help reduce the memory pressure on your GPU. This involves splitting your data into smaller chunks or batches and processing each batch sequentially.
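The batching idea can be sketched without any framework: split the prompts into fixed-size chunks and hand each chunk to the model in turn. In the example below, `fake_generate` is a hypothetical stand-in for a real model call, and the batch size of 2 is arbitrary.

```python
def batched(items, batch_size):
    """Yield consecutive slices of items, each at most batch_size long."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def run_in_batches(prompts, batch_size, generate):
    """Process prompts batch by batch so only one batch is in flight at a time."""
    results = []
    for batch in batched(prompts, batch_size):
        results.extend(generate(batch))
    return results


def fake_generate(batch):
    """Hypothetical stand-in for a model call; just upper-cases each prompt."""
    return [prompt.upper() for prompt in batch]


prompts = ["write a headline", "summarize this", "translate that"]
print(run_in_batches(prompts, batch_size=2, generate=fake_generate))
```

A smaller `batch_size` lowers peak memory at the cost of throughput, so a practical approach is to start with a size that works and halve it whenever an OOM error appears.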

FAQ: Answering Your Burning Questions

What are the differences between Llama 3 8B and Llama 3 70B?

The primary difference is size. Llama 3 8B is a smaller model with 8 billion parameters, while Llama 3 70B is significantly larger, with 70 billion parameters. Larger models typically produce higher-quality output and can handle more complex tasks, but they require more memory and generate tokens more slowly.

How do I choose the right LLM for my needs?

Consider your memory constraints, the complexity of the tasks you want to perform, and the desired level of performance. If you have limited memory, choose a smaller model. If you need to perform complex tasks, opt for a larger model.

What is quantization, and how does it work?

Quantization is a technique for reducing the memory footprint of LLMs by representing the model's weights and activations using lower-precision data types. Imagine instead of using a full-size image, you use a smaller version with fewer pixels; you can represent it more efficiently. Quantization is like that for LLMs.

What are the trade-offs of using quantization?

Quantization offers a significant reduction in memory usage, and in the benchmark above it also increased generation speed, but it can slightly reduce accuracy. For many use cases, the trade-off is worth it.

Keywords

LLM, Large Language Models, NVIDIA 3090, 24GB x2, OOM, Out of Memory, Quantization, Llama 3, F16, Q4KM, Token Generation, Token Speed, Memory Optimization, GPU, Hardware, Model Selection, Batching, Gradient Accumulation, Performance, Accuracy, Memory, Code Optimization.