From Installation to Inference: Running Llama3 8B on NVIDIA RTX A6000 48GB

Introduction

Welcome to the world of local Large Language Models (LLMs)! Imagine having a capable AI language model running on your own machine, ready to answer your questions, write stories, or generate code, all without relying on the cloud. That is what running LLMs locally offers, and in this article we'll look closely at the performance of the Llama3 8B model running on an NVIDIA RTX A6000 48GB GPU, exploring its capabilities and limitations.

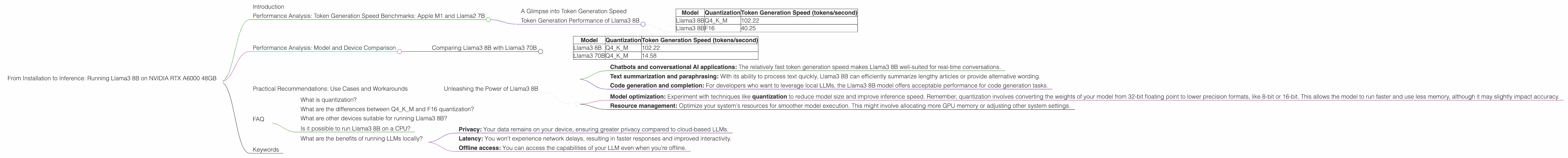

Performance Analysis: Token Generation Speed Benchmarks: Llama3 8B on NVIDIA RTX A6000 48GB

A Glimpse into Token Generation Speed

Token generation speed is a crucial metric for evaluating LLM performance. Tokens are the units of text a model reads and writes: whole words, word fragments, punctuation, and whitespace. The faster a model generates tokens, the smoother and more responsive your interaction with it. In this section, we'll focus on the token generation speed of the Llama3 8B model running on the RTX A6000 48GB.

To get a better grasp of the performance, let's imagine you're building a chatbot. If the chatbot generates tokens at a snail's pace, your users will be frustrated by long delays. On the other hand, a blazing-fast token generation speed translates to a seamless and enjoyable user experience.
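
To make the chatbot scenario concrete, here is a minimal sketch that converts a generation rate into waiting time for one reply. The two rates are the figures reported for Llama3 8B in this article; the 256-token reply length and the function name are assumptions chosen only for illustration, and the estimate ignores the time spent processing the prompt.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate (prompt processing excluded)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Rates reported in this article for Llama3 8B on the RTX A6000 48GB.
RATES = {"Q4KM": 102.22, "F16": 40.25}
REPLY_TOKENS = 256  # assumed reply length, for illustration only

for name, rate in RATES.items():
    seconds = generation_time_seconds(REPLY_TOKENS, rate)
    print(f"{name}: {seconds:.2f} s for a {REPLY_TOKENS}-token reply")
```

Under these assumptions the reply takes roughly 2.5 seconds with Q4KM and a little over 6 seconds with F16, which is the difference between a chatbot that feels responsive and one that feels sluggish.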

Token Generation Performance of Llama3 8B

The table below summarizes the token generation speeds of Llama3 8B running on the RTX A6000 48GB for two weight formats: Q4KM (written Q4_K_M in llama.cpp, a 4-bit "k-quant" format in its medium-size variant) and F16 (16-bit floating point, i.e. half precision without quantization).

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 102.22 |
| Llama3 8B | F16 | 40.25 |

Key Observations:

- Q4KM: The 4-bit format gives the fastest generation, reaching 102.22 tokens per second, about 2.5 times the F16 rate.

- F16: Strictly speaking, F16 is a half-precision floating point format rather than a quantization. It generates 40.25 tokens per second, less than half the Q4KM rate.
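
The gap between the two formats can be expressed as a speedup factor and as time per token. The sketch below uses only the two rates from the table above; the function name is an illustrative choice.

```python
def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first rate is than the second."""
    return fast_tps / slow_tps


Q4KM_TPS = 102.22  # tokens/second, from the table above
F16_TPS = 40.25    # tokens/second, from the table above

ratio = speedup(Q4KM_TPS, F16_TPS)
print(f"Q4KM generates tokens {ratio:.2f}x faster than F16")
print(f"Time per token: Q4KM {1000 / Q4KM_TPS:.1f} ms, F16 {1000 / F16_TPS:.1f} ms")
```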

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B with Llama3 70B

It's essential to compare the performance of Llama3 8B with its larger sibling, Llama3 70B, given the significant difference in model size. The table below highlights the token generation speed, but remember that we don't have data for the F16 quantization for Llama3 70B on this device.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 102.22 |
| Llama3 70B | Q4KM | 14.58 |

Key Observations:

- The Llama3 8B model generates tokens about seven times faster than Llama3 70B (102.22 vs. 14.58 tokens per second). This is expected: the 70B model has roughly nine times as many parameters, so every generated token requires far more computation and memory traffic.

- The smaller model requires less memory and computational power, which enables a faster processing speed.
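
One plausible reason there is no F16 figure for Llama3 70B on this card is memory. The sketch below is a rough estimate of weight storage only, under stated assumptions: the parameter counts are the nominal 8 and 70 billion implied by the model names, and the 4.85 bits per weight for Q4KM is an approximate, assumed average (4-bit block formats also store scale factors). It ignores the extra memory needed for the context cache and runtime buffers.

```python
GPU_MEMORY_GB = 48.0  # RTX A6000 48GB

# Nominal parameter counts (assumption: the exact counts differ slightly).
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Average bits stored per weight. 16 is exact for F16; 4.85 is an assumed,
# approximate figure for Q4KM.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}


def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


for model, num_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        gb = weight_memory_gb(num_params, bits)
        verdict = "fits" if gb < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {fmt}: ~{gb:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions, Llama3 70B in F16 needs about 140 GB for its weights, far beyond a single 48 GB card, while the Q4KM version comes in at a little over 42 GB and fits with limited headroom.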

Practical Recommendations: Use Cases and Workarounds

Unleashing the Power of Llama3 8B

Now that we've explored the performance of Llama3 8B, let's discuss potential use cases and explore ways to work around its limitations.

Ideal Use Cases:

- Chatbots and conversational AI applications: The relatively fast token generation speed makes Llama3 8B well-suited for real-time conversations.

- Text summarization and paraphrasing: With its ability to process text quickly, Llama3 8B can efficiently summarize lengthy articles or provide alternative wording.

- Code generation and completion: For developers who want to leverage local LLMs, the Llama3 8B model offers acceptable performance for code generation tasks.

Workarounds:

- Model optimization: Experiment with techniques like quantization to reduce model size and improve inference speed. Remember, quantization converts the weights of your model from 16-bit or 32-bit floating point to lower-precision formats, such as 8-bit or 4-bit. This allows the model to run faster and use less memory, although it may slightly reduce accuracy.

- Resource management: Optimize your system's resources for smoother model execution. This might involve keeping the whole model in GPU memory, closing other applications that use the GPU, or reducing the context length if memory runs short.
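
When trying out these workarounds, it helps to measure the effect rather than guess. The following is a minimal timing sketch, not tied to any particular inference library: `measure_tokens_per_second` accepts any callable that yields tokens, and `fake_generate` is a hypothetical stand-in that you would replace with your own model's streaming call.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run the generator once and return tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    """Hypothetical stand-in for a real model: yields 50 tokens, about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


print(f"{measure_tokens_per_second(fake_generate):.1f} tokens/second")
```

Running the same measurement before and after a change, such as switching quantization formats, gives a like-for-like comparison on your own hardware.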

FAQ

What is quantization?

Quantization is like simplifying a complex recipe. Instead of using many ingredients with precise measurements, we reduce the number of ingredients and use rougher measurements. Similarly, quantization converts the precise weights in a neural network to lower-precision formats, such as 8-bit or 4-bit. This makes the model smaller and faster, but it might reduce accuracy.
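
The idea can be sketched in a few lines. This is a deliberately simplified toy: it rounds each weight to one of 16 integer levels using a single shared scale. Real formats such as the one behind Q4KM are more elaborate and work on small blocks of weights, so treat the function names and the sample weights here as illustrative assumptions only.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # made-up sample weights
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 2) for r in restored])
print("worst rounding error:", round(worst_error, 3))
```

Each weight now needs only 4 bits plus a share of the scale factor, and the rounding error stays within half a quantization step. That error is the accuracy cost the recipe analogy describes.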

What are the differences between Q4KM and F16 quantization?

Q4KM (Q4_K_M in llama.cpp naming, where K refers to the k-quant family and M to the medium-size variant) stores most weights in a 4-bit block format. The result is a much smaller and faster model, at the cost of a small loss in accuracy. F16 stores weights as 16-bit floating point numbers and is not quantized in the same sense: it preserves more accuracy but needs roughly three times the memory and, on this GPU, generates tokens about 2.5 times more slowly.

What are other devices suitable for running Llama3 8B?

While the RTX A6000 48GB offers exceptional performance, several other powerful GPUs are capable of running Llama3 8B smoothly. These include the NVIDIA RTX 4090, NVIDIA RTX 3090, and AMD Radeon RX 7900 XTX, just to name a few.

Is it possible to run Llama3 8B on a CPU?

Yes, it is possible to run Llama3 8B on a CPU, but generation will be much slower than on a GPU. You'd likely need a high-end CPU with multiple cores and enough RAM to hold the model.

What are the benefits of running LLMs locally?

Running LLMs locally offers several advantages:

- Privacy: Your data remains on your device, ensuring greater privacy compared to cloud-based LLMs.

- Latency: You won't experience network delays, resulting in faster responses and improved interactivity.

- Offline access: You can access the capabilities of your LLM even when you're offline.

Keywords

LLMs, local LLMs, Llama3 8B, NVIDIA RTX A6000 48GB, GPU, token generation speed, performance analysis, quantization, Q4KM, F16, use cases, workarounds, chatbots, conversational AI, text summarization, paraphrasing, code generation, model optimization, resource management, privacy, latency, offline access.