From Installation to Inference: Running Llama3 8B on NVIDIA RTX 5000 Ada 32GB

Introduction

The world of large language models (LLMs) is bustling with innovation, and running these models locally is becoming increasingly accessible. But how do these models perform on different devices? How fast can they generate text?

This article is your guide to understanding the performance of Llama3 8B on the NVIDIA RTX 5000 Ada 32GB, a popular graphics card often used for AI tasks. We'll look closely at running Llama3 on this GPU, comparing token generation speed and processing speed across two quantization levels and against Llama2 7B.

Whether you're a seasoned developer or a curious enthusiast wanting to delve into the local LLM scene, this article will equip you with the knowledge and insights to make informed decisions about your next AI project.

Performance Analysis: Token Generation Speed Benchmarks

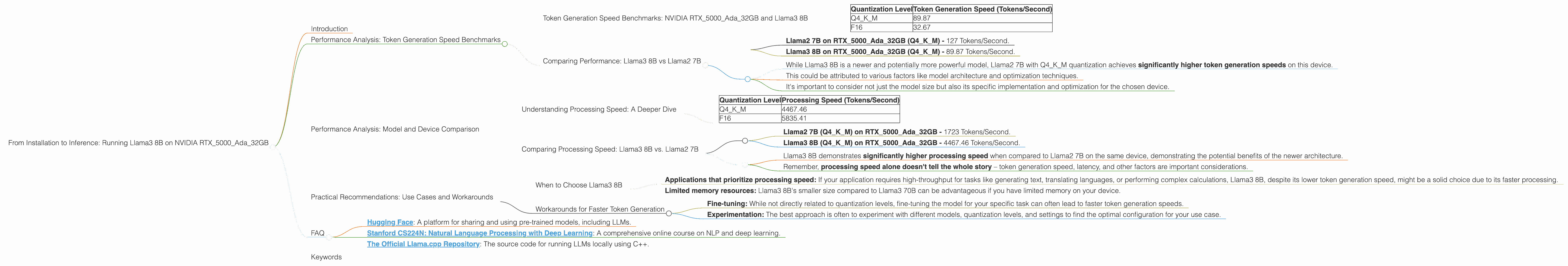

Token Generation Speed Benchmarks: NVIDIA RTX 5000 Ada 32GB and Llama3 8B

The heart of any LLM-powered application is its ability to generate text quickly and efficiently. Token generation speed is a crucial benchmark for evaluating performance, and we'll explore the differences between various quantization levels for the Llama3 8B model.

Quantization is a technique for compressing large models by reducing the precision of their weights – think of it like shrinking a picture while preserving enough detail for it to still look good.
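
To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified illustration only: the function names are made up for this example, and real schemes such as the K-quants used by llama.cpp are considerably more elaborate. Each block of weights is stored as small integers plus a single scale factor, and the original values are recovered approximately by multiplying back.

```python
import random

def quantize_block(weights, bits=4):
    """Map a block of floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for signed 4-bit codes
    absmax = max(abs(w) for w in weights)
    scale = absmax / qmax if absmax > 0 else 1.0    # avoid dividing by zero
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale

def dequantize_block(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]

random.seed(0)
block = [random.gauss(0.0, 0.02) for _ in range(32)]  # made-up weights
codes, scale = quantize_block(block)
restored = dequantize_block(codes, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(f"scale={scale:.6f}, max reconstruction error={max_error:.6f}")
```

The reconstruction error is bounded by half the scale, which is the "enough detail" part of the picture analogy: the weights are no longer exact, but they stay close to the originals.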

Here's a breakdown of token generation speed for the Llama3 8B model on the RTX 5000 Ada 32GB:

| Quantization Level | Token Generation Speed (Tokens/Second) |
|---|---|
| Q4_K_M | 89.87 |
| F16 | 32.67 |

What do these numbers tell us?

- Q4_K_M quantization significantly outperforms F16, generating tokens about 2.75 times as fast (89.87 vs. 32.67 tokens/second).

- The difference highlights the importance of choosing the right quantization level for your project.
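
The ratio can be checked directly from the two figures reported above. The short sketch below also converts the rates into waiting time for a reply; the 500-token reply length is an arbitrary value chosen for illustration.

```python
# Token generation rates reported above (tokens/second).
q4_tps = 89.87
f16_tps = 32.67

speedup = q4_tps / f16_tps
print(f"4-bit generates tokens {speedup:.2f}x as fast as F16")  # 2.75x

reply_tokens = 500  # arbitrary reply length, for illustration only
q4_seconds = reply_tokens / q4_tps
f16_seconds = reply_tokens / f16_tps
print(f"{reply_tokens} tokens: {q4_seconds:.1f} s (4-bit) vs {f16_seconds:.1f} s (F16)")
```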

Comparing Performance: Llama3 8B vs Llama2 7B

While the focus here is on Llama3 8B, it's interesting to compare its performance with its predecessor, Llama2 7B, which was optimized for speed. We use data from previous benchmarks to establish a comparison.

- Llama2 7B on RTX 5000 Ada 32GB (Q4_K_M) - 127 Tokens/Second.

- Llama3 8B on RTX 5000 Ada 32GB (Q4_K_M) - 89.87 Tokens/Second.

Analysis:

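- While Llama3 8B is a newer and potentially more powerful model, Llama2 7B with Q4_K_M quantization achieves significantly higher token generation speeds on this device.

- Part of the gap is likely down to size: Llama3 8B has more parameters and a much larger vocabulary than Llama2 7B, so more weight data has to be read for every generated token. Differences in architecture and software optimization may also contribute.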

- It's important to consider not just the model size but also its specific implementation and optimization for the chosen device.

Performance Analysis: Model and Device Comparison

Understanding Processing Speed: A Deeper Dive

While token generation speed measures the rate at which new text is produced, processing speed (usually reported as prompt processing speed in llama.cpp-style benchmarks) measures how quickly the model works through its input before it starts generating. Looking at both gives a more complete view of the device's capabilities.

Here's the processing speed data for the Llama3 8B model on the RTX 5000 Ada 32GB:

| Quantization Level | Processing Speed (Tokens/Second) |
|---|---|
| Q4_K_M | 4467.46 |
| F16 | 5835.41 |

Observations:

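- Interestingly, F16 outperforms Q4_K_M in processing speed here, the reverse of the token generation result. A plausible explanation is that processing a prompt is limited mainly by compute rather than memory traffic, and quantized weights have to be unpacked before they can be used, but the finding warrants further investigation.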

- It's crucial to remember that processing speed doesn't always directly translate to token generation speed. Factors like memory bandwidth and model architecture play a significant role.
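
The role of memory bandwidth can be sketched with a back-of-the-envelope bound. During generation, roughly all of the model's weights are read once per token, so the token rate cannot exceed bandwidth divided by weight size. The bandwidth and weight sizes below are approximate values assumed for illustration; they are not part of the benchmark data, and the bound ignores the KV cache and other overheads.

```python
# Assumed, approximate values for illustration (not from the benchmark data).
BANDWIDTH_GB_S = 576.0                      # assumed peak memory bandwidth of the card
WEIGHTS_GB = {"4-bit": 4.9, "F16": 16.1}    # assumed approximate weight sizes

# Token generation rates reported earlier in the article (tokens/second).
MEASURED_TPS = {"4-bit": 89.87, "F16": 32.67}

def bandwidth_bound_tps(bandwidth_gb_s, weights_gb):
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / weights_gb

for name, size_gb in WEIGHTS_GB.items():
    bound = bandwidth_bound_tps(BANDWIDTH_GB_S, size_gb)
    measured = MEASURED_TPS[name]
    print(f"{name}: bound {bound:.1f} tok/s, measured {measured} tok/s "
          f"({measured / bound:.0%} of bound)")
```

Under these assumptions, both measured rates sit below their bounds, and the smaller 4-bit weights explain why generation is faster there even though F16 wins on processing speed.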

Comparing Processing Speed: Llama3 8B vs. Llama2 7B

Let's again compare the processing speed of Llama3 8B with Llama2 7B, using data from previous benchmarks.

- Llama2 7B (Q4_K_M) on RTX 5000 Ada 32GB - 1723 Tokens/Second.

- Llama3 8B (Q4_K_M) on RTX 5000 Ada 32GB - 4467.46 Tokens/Second.

Analysis:

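- Llama3 8B shows significantly higher processing speed than Llama2 7B on the same device, about 2.6 times as fast. This hints at the benefits of the newer model, although the two figures come from different benchmark runs, so software improvements may account for part of the gap.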

- Remember, processing speed alone doesn't tell the whole story – token generation speed, latency, and other factors are important considerations.
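
One way to combine the two metrics is a rough end-to-end estimate: the time to work through the prompt plus the time to generate the reply. The sketch below uses the Llama3 8B rates reported in this article; the function name and the prompt and reply lengths are arbitrary choices for illustration, and fixed overheads such as model loading are ignored.

```python
def estimated_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Rough response time: prompt processing time plus generation time."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Illustrative workload: a 1000-token prompt and a 200-token reply.
q4_time = estimated_seconds(1000, 200, processing_tps=4467.46, generation_tps=89.87)
f16_time = estimated_seconds(1000, 200, processing_tps=5835.41, generation_tps=32.67)

print(f"4-bit: {q4_time:.2f} s, F16: {f16_time:.2f} s")
```

For this workload the generation term dominates, so the 4-bit model finishes sooner despite its lower processing speed. With very long prompts and very short replies the balance shifts toward processing speed.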

Practical Recommendations: Use Cases and Workarounds

When to Choose Llama3 8B

- Applications that prioritize processing speed: If your application needs high throughput on long inputs, for tasks such as translating or summarizing documents, Llama3 8B might be a solid choice despite its lower token generation speed, thanks to its faster processing.

- Limited memory resources: Llama3 8B's smaller size compared to Llama3 70B can be advantageous if you have limited memory on your device.

Workarounds for Faster Token Generation

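- Fine-tuning: While not directly related to quantization levels, fine-tuning does not change how fast each token is produced. A model tuned for your specific task can, however, often get by with shorter prompts and shorter outputs, which reduces the total response time.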

- Experimentation: The best approach is often to experiment with different models, quantization levels, and settings to find the optimal configuration for your use case.
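
When experimenting, it helps to measure every configuration the same way. The sketch below times any callable that returns a list of tokens. `fake_generate` is a hypothetical stand-in that only sleeps; in practice you would replace it with a call into whichever inference library you use.

```python
import time

def measure_tokens_per_second(generate, prompt, max_tokens):
    """Time one call to `generate` and return the achieved tokens/second."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed if elapsed > 0 else float("inf")

def fake_generate(prompt, max_tokens):
    """Hypothetical stand-in for a real model call; sleeps to simulate work."""
    output = []
    for i in range(max_tokens):
        time.sleep(0.001)          # pretend each token takes about 1 ms
        output.append(f"tok{i}")
    return output

tps = measure_tokens_per_second(fake_generate, "Hello", max_tokens=20)
print(f"measured {tps:.0f} tokens/second")
```

Running the same prompt and token budget against each model and quantization level gives numbers that can be compared directly.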

FAQ

Q: What is the difference between Q4_K_M and F16?

A: Quantization is a way to reduce the size of a model by reducing the precision of its numbers (weights). F16 stores each weight as a 16-bit floating-point value, so it is effectively the unquantized baseline, while Q4_K_M is a far more aggressive scheme that stores weights in roughly 4 to 5 bits each. The trade-off is between model size and speed on one side and output quality on the other.

Q: How much memory does the RTX 5000 Ada 32GB have?

A: The RTX 5000 Ada 32GB has 32GB of GDDR6 memory. This significant amount of memory is crucial for handling large language models and their computations.

Q: Can I run Llama3 70B on the RTX 5000 Ada 32GB?

A: Unfortunately, the provided benchmark data for the RTX 5000 Ada 32GB doesn't include performance metrics for Llama3 70B. Running a model of this size on a single 32GB GPU is challenging because the weights alone are likely to exceed the available memory unless part of the model is kept in system RAM.
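
A rough estimate shows why. The sketch below computes the memory needed for the weights alone from the parameter count and the bits stored per weight. The 4.5 bits per weight used for the 4-bit case is an assumed average for illustration, and the estimate ignores the KV cache and runtime overhead, so real requirements are higher.

```python
VRAM_GB = 32  # memory on the card discussed in this article

def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8

for params in (8, 70):
    for label, bits in (("F16", 16), ("4-bit (assumed 4.5 bits/weight)", 4.5)):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{params}B at {label}: about {size:.1f} GB, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the 8B model fits comfortably at either precision, while the 70B weights exceed 32GB even at 4 bits.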

Q: What are some good resources for learning more about LLMs and local inference?

A: Here are a few resources you can explore:

- Hugging Face: A platform for sharing and using pre-trained models, including LLMs.

- Stanford CS224N: Natural Language Processing with Deep Learning: A comprehensive online course on NLP and deep learning.

- The official llama.cpp repository: The source code for running LLMs locally using C/C++.

Keywords

Llama3 8B, NVIDIA RTX 5000 Ada 32GB, Q4_K_M, F16, Token Generation Speed, Processing Speed, LLM Performance, Local Inference, GPU, Quantization, Model Size, Memory Bandwidth, Model Optimization, Deep Learning, Natural Language Processing.