From Installation to Inference: Running Llama3 8B on NVIDIA RTX 4000 Ada 20GB

Introduction: The Rise of Local LLMs and the NVIDIA RTX 4000 Ada 20GB

Welcome, fellow AI enthusiasts! Today, we're diving deep into the world of local large language models (LLMs), specifically the Llama3 8B model running on the NVIDIA RTX 4000 Ada 20GB GPU.

The evolution of LLMs has been remarkable, shifting from cloud-based giants like ChatGPT to smaller, more accessible models that can be run locally. This newfound accessibility has sparked a wave of innovation, allowing developers to leverage the power of LLMs without needing extensive infrastructure or online connectivity.

Our focus is on the RTX 4000 Ada 20GB, a workstation graphics card whose 20 GB of VRAM is enough to hold Llama3 8B at both precision levels benchmarked below, making it a compelling option for running LLMs locally.

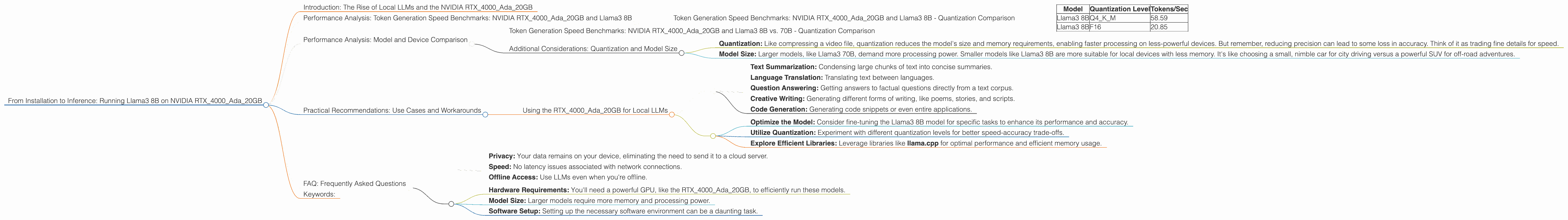

Performance Analysis: Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B

Token generation speed is a crucial measure of an LLM's efficiency: it determines how quickly the model can produce text. We'll delve into benchmarks for two precision levels: Q4_K_M (4-bit quantized) and F16 (16-bit half precision).

Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B - Quantization Comparison

| Model | Quantization Level | Tokens/sec |
|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 |
| Llama3 8B | F16 | 20.85 |

Key Takeaways:

- Q4_K_M outperforms F16 in token generation speed, at 58.59 versus 20.85 tokens/sec. This is expected: token generation is typically limited by how fast the weights can be read from GPU memory, and Q4_K_M weights are far smaller than F16 weights. Think of it like this: Q4_K_M is a lightning-fast runner who sometimes takes a shortcut, while F16 takes a more precise route at a slower pace.

- Llama3 8B on the RTX 4000 Ada 20GB shows promising performance at both precision levels, demonstrating its potential for practical local applications.
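To put the two benchmark figures in perspective, here is a small sketch that turns them into a speedup ratio and a rough wait time. The 500-token reply length is a made-up example, and the estimate ignores prompt processing, so treat the results as ballpark numbers only.

```python
# Tokens/sec figures copied from the benchmark table in this article.
TOKENS_PER_SEC = {"Q4_K_M": 58.59, "F16": 20.85}


def speedup(fast: str, slow: str) -> float:
    """How many times faster one quantization level generates tokens."""
    return TOKENS_PER_SEC[fast] / TOKENS_PER_SEC[slow]


def seconds_to_generate(n_tokens: int, quant: str) -> float:
    """Rough generation time; ignores the time spent processing the prompt."""
    return n_tokens / TOKENS_PER_SEC[quant]


if __name__ == "__main__":
    reply_length = 500  # hypothetical reply length, chosen for illustration
    print(f"Q4_K_M vs F16 speedup: {speedup('Q4_K_M', 'F16'):.2f}x")
    for quant in TOKENS_PER_SEC:
        secs = seconds_to_generate(reply_length, quant)
        print(f"{quant}: about {secs:.1f} s for {reply_length} tokens")
```

On these numbers, Q4_K_M comes out a little under three times faster than F16, which is the difference between waiting a few seconds and waiting the better part of half a minute for a long answer.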

Performance Analysis: Model and Device Comparison

While we're focusing on the RTX 4000 Ada 20GB, it's helpful to compare its performance to other devices and models, especially for those seeking a wider perspective.

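Token Generation Speed Benchmarks: NVIDIA RTX 4000 Ada 20GB and Llama3 8B vs. 70B - Quantization Comparison

Unfortunately, data for the Llama3 70B model on the RTX 4000 Ada 20GB is currently unavailable. That gap is not surprising: even at 4-bit quantization, the weights of a 70B model need roughly 40 GB, about twice this card's VRAM.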

Additional Considerations: Quantization and Model Size

- Quantization: Like compressing a video file, quantization reduces the model's size and memory requirements, enabling faster processing on less-powerful devices. But remember, reducing precision can lead to some loss in accuracy. Think of it as trading fine details for speed.

- Model Size: Larger models, like Llama3 70B, demand more memory and processing power. Smaller models like Llama3 8B are more suitable for local devices with less memory. It's like choosing a small, nimble car for city driving versus a powerful SUV for off-road adventures.
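The two points above boil down to simple arithmetic: weight memory is roughly parameter count times bits per weight. Here is a back-of-the-envelope sketch of that calculation. The parameter counts are the nominal ones from the model names, and the 4.8 bits per weight for Q4_K_M is an approximate average assumed for illustration. The estimate covers weights only, not the KV cache or runtime overhead.

```python
GIB = 1024 ** 3
VRAM_GIB = 20  # capacity of the RTX 4000 Ada 20GB

# Assumed average bits per weight; the Q4_K_M value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Nominal parameter counts taken from the model names.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params: float, bits_per_weight: float, vram_gib: float = VRAM_GIB) -> bool:
    """True if the weights alone fit in VRAM (KV cache and overhead not counted)."""
    return weight_gib(n_params, bits_per_weight) < vram_gib


if __name__ == "__main__":
    for model, n_params in PARAMS.items():
        for quant, bits in BITS_PER_WEIGHT.items():
            size = weight_gib(n_params, bits)
            verdict = "fits" if fits(n_params, bits) else "does not fit"
            print(f"{model} @ {quant}: ~{size:.1f} GiB, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions, Llama3 8B fits at both precision levels, while Llama3 70B overshoots the card's memory even at 4 bits.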

Practical Recommendations: Use Cases and Workarounds

Using the RTX 4000 Ada 20GB for Local LLMs

The RTX 4000 Ada 20GB is a strong candidate for running Llama3 8B locally for various tasks:

- Text Summarization: Condensing large chunks of text into concise summaries.

- Language Translation: Translating text between languages.

- Question Answering: Getting answers to factual questions directly from a text corpus.

- Creative Writing: Generating different forms of writing, like poems, stories, and scripts.

- Code Generation: Generating code snippets or even entire applications.

A Few Tips:

- Optimize the Model: Consider fine-tuning the Llama3 8B model for specific tasks to enhance its performance and accuracy.

- Utilize Quantization: Experiment with different quantization levels for better speed-accuracy trade-offs.

- Explore Efficient Libraries: Leverage libraries like llama.cpp for optimal performance and efficient memory usage.
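As a starting point for the llama.cpp tip, here is a minimal sketch using the `llama-cpp-python` bindings. It assumes the package is installed with GPU support and that you already have a GGUF file on disk. The model path, the system prompt, and the helper names are all hypothetical, and this is not the setup used to produce the benchmarks above.

```python
import time

# Hypothetical location of a quantized GGUF file; adjust to your own download.
MODEL_PATH = "models/llama3-8b-q4_k_m.gguf"


def build_messages(text: str) -> list[dict]:
    """Wrap the input in a simple chat-style summarization prompt."""
    return [
        {"role": "system", "content": "Summarize the user's text in two sentences."},
        {"role": "user", "content": text},
    ]


def tokens_per_second(n_tokens: int, elapsed_s: float) -> float:
    """Throughput helper that guards against a zero elapsed time."""
    return n_tokens / elapsed_s if elapsed_s > 0 else 0.0


def summarize(text: str, model_path: str = MODEL_PATH) -> tuple[str, float]:
    """Run one summarization request and report an end-to-end tokens/sec figure."""
    # Imported here so the helpers above work without the package installed.
    from llama_cpp import Llama

    # n_gpu_layers=-1 asks the library to offload every layer to the GPU.
    llm = Llama(model_path=model_path, n_gpu_layers=-1, n_ctx=4096, verbose=False)

    start = time.perf_counter()
    out = llm.create_chat_completion(messages=build_messages(text), max_tokens=128)
    elapsed = time.perf_counter() - start

    reply = out["choices"][0]["message"]["content"]
    generated = out["usage"]["completion_tokens"]
    return reply, tokens_per_second(generated, elapsed)
```

Calling `summarize(some_text)` returns the summary together with a throughput figure. Because the timer also covers prompt processing, expect this number to come in below a pure generation benchmark.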

FAQ: Frequently Asked Questions

Q: What is quantization? A: Quantization is a technique used to reduce the size and memory footprint of large language models. It involves converting the model's weights from a higher-precision format (such as 32-bit floats) to lower-precision formats like F16 (half-precision floats) or Q4_K_M (4-bit quantized).
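To make the idea concrete, here is a toy sketch of block-wise 4-bit quantization: a block of weights shares one scale, and each weight is stored as a small integer. This is a deliberately simplified scheme for illustration, not the actual Q4_K_M format, which is more elaborate. The weights in the example are made up.

```python
def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit codes in [-7, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and the integer codes."""
    return [scale * c for c in codes]


# Made-up weights standing in for one small block of a real model.
block = [0.12, -0.53, 0.07, 0.91, -0.33, 0.00, 0.48, -0.76]

scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

if __name__ == "__main__":
    print("codes:   ", codes)
    print("restored:", [round(r, 3) for r in restored])
    print("largest rounding error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus its share of the block's scale, and the price is a rounding error of at most half the scale per weight. That is the "trading fine details for speed" trade-off in miniature.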

Q: What are the benefits of running LLMs locally? A: Running LLMs locally offers advantages like:

- Privacy: Your data remains on your device, eliminating the need to send it to a cloud server.

- Speed: No latency issues associated with network connections.

- Offline Access: Use LLMs even when you're offline.

Q: What are the challenges of running LLMs locally? A: Local LLM deployment can present challenges like:

- Hardware Requirements: You'll need a powerful GPU, like the RTX 4000 Ada 20GB, to efficiently run these models.

- Model Size: Larger models require more memory and processing power.

- Software Setup: Setting up the necessary software environment can be a daunting task.

Q: What are some alternative GPUs for running LLMs? A: Besides the RTX 4000 Ada 20GB, other options include the RTX 4090, RTX 4080, and RTX 3090. However, these can come at a higher price point.
