From Installation to Inference: Running Llama3 8B on NVIDIA A100 PCIe 80GB

Introduction

The world of Large Language Models (LLMs) is exploding, and with it, the need for powerful hardware to run these models locally. While cloud-based solutions offer convenience, the desire for privacy, faster iteration, and potentially lower costs is driving many developers toward local inference. In this article, we take a close look at local LLM performance, focusing on the NVIDIA A100 PCIe 80GB GPU and the Llama3 8B model.

Whether you're a seasoned developer or just starting your LLM journey, this article will equip you with practical insights and benchmarks to make informed decisions about your local LLM setup. We'll explore the ins and outs of running Llama3 8B on the A100 GPU, uncovering its impressive performance and highlighting key considerations for real-world applications.

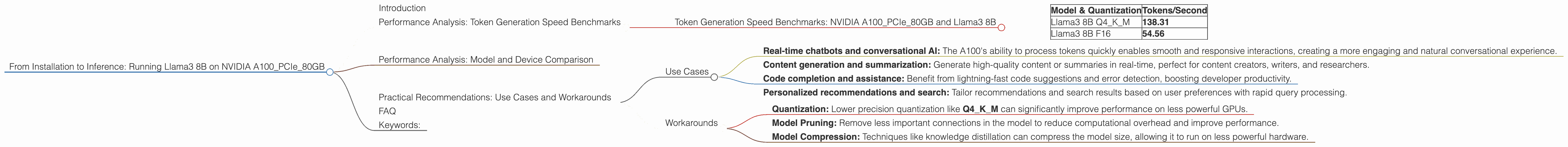

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA A100 PCIe 80GB and Llama3 8B

Let's start with the numbers. The NVIDIA A100 PCIe 80GB delivers fast token generation for the Llama3 8B model at both precisions we tested:

| Model & Precision | Token Generation (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M | 138.31 |
| Llama3 8B F16 | 54.56 |

These are strong results. At 138.31 tokens per second, Llama3 8B Q4_K_M produces a token roughly every 7 ms, so a short sentence appears in a fraction of a second. Q4_K_M is about 2.5 times faster than F16 (138.31 vs. 54.56 tokens per second). Generating tokens one at a time is largely limited by how fast the weights can be streamed from GPU memory, and 4-bit weights take roughly a third of the space of 16-bit ones, so lower precision translates directly into higher speed, at the cost of some accuracy.
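To put the throughput figures in more concrete terms, the short Python sketch below converts the measured tokens per second into per-token latency and into the time needed for replies of a given length. The 20-token "short sentence" and the 500-token "summary" lengths are illustrative assumptions, not part of the benchmark, and the calculation assumes steady generation speed while ignoring prompt processing time.

```python
# Measured token generation throughput from the benchmark table (tokens per second).
THROUGHPUT = {"Q4_K_M": 138.31, "F16": 54.56}


def ms_per_token(tokens_per_second: float) -> float:
    """Average time to generate one token, in milliseconds."""
    return 1000.0 / tokens_per_second


def seconds_for(num_tokens: int, tokens_per_second: float) -> float:
    """Time to generate num_tokens at a steady rate (prompt processing is ignored)."""
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    speedup = THROUGHPUT["Q4_K_M"] / THROUGHPUT["F16"]
    print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
    for name, tps in THROUGHPUT.items():
        # 20 tokens ~ a short sentence, 500 tokens ~ a summary (illustrative assumptions).
        print(
            f"{name}: {ms_per_token(tps):.1f} ms/token, "
            f"20 tokens in {seconds_for(20, tps):.2f} s, "
            f"500 tokens in {seconds_for(500, tps):.1f} s"
        )
```

Running it reports about 7.2 ms per token for Q4_K_M versus about 18.3 ms for F16, so a 500-token summary takes roughly 3.6 s instead of 9.2 s.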

Performance Analysis: Model and Device Comparison

Unfortunately, we don't yet have data for other models or devices to compare against. We'll update this section in the future with more comprehensive benchmarks.

Practical Recommendations: Use Cases and Workarounds

Use Cases

Given these speeds, the NVIDIA A100 PCIe 80GB is an excellent choice for workloads that need high-throughput LLM inference. Here are some potential use cases:

- Real-time chatbots and conversational AI: The A100's ability to process tokens quickly enables smooth and responsive interactions, creating a more engaging and natural conversational experience.

- Content generation and summarization: Generate high-quality content or summaries in real time, which suits content creators, writers, and researchers.

- Code completion and assistance: Benefit from lightning-fast code suggestions and error detection, boosting developer productivity.

- Personalized recommendations and search: Tailor recommendations and search results based on user preferences with rapid query processing.

Workarounds

While the A100 PCIe 80GB is a powerful card, not every developer has access to one. Here are some workarounds for running Llama3 8B on more modest hardware:

- Quantization: Lower-precision formats like Q4_K_M shrink the model's memory footprint enough to fit on GPUs with less VRAM and, as the benchmark above shows, also speed up token generation.

- Model Pruning: Remove less important weights (or whole structures such as attention heads) to reduce computational overhead and memory use.

- Model Compression: Techniques like knowledge distillation train a smaller "student" model to mimic the larger one, producing a model that can run on less powerful hardware.
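A rough way to see why quantization helps on smaller GPUs is to estimate the memory needed just to hold the weights: parameter count times bits per weight, divided by 8. The Python sketch below assumes about 8.03 billion parameters for Llama3 8B and roughly 4.9 effective bits per weight for Q4_K_M (quantized formats also store per-block scale factors, so they average a little more than 4 bits); both figures are approximations. The estimate ignores the KV cache, activations, and runtime buffers, which add to the real footprint, and the 80/24/12 GB VRAM sizes and the 80% headroom rule are arbitrary example choices.

```python
# Rough weight-only memory estimate for Llama3 8B at two precisions.
# Assumptions (approximate, not measured): ~8.03e9 parameters and an
# effective ~4.9 bits per weight for Q4_K_M including its block scales.
PARAMS = 8.03e9
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.9}


def weight_gb(params: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def fits(params: float, bits_per_weight: float, vram_gb: float, headroom: float = 0.8) -> bool:
    """True if the weights use at most `headroom` of the VRAM, leaving the rest
    for the KV cache and runtime buffers (0.8 is an arbitrary example value)."""
    return weight_gb(params, bits_per_weight) <= vram_gb * headroom


if __name__ == "__main__":
    for name, bpw in BITS_PER_WEIGHT.items():
        size = weight_gb(PARAMS, bpw)
        verdicts = ", ".join(
            f"{vram} GB: {'yes' if fits(PARAMS, bpw, vram) else 'no'}" for vram in (80, 24, 12)
        )
        print(f"{name}: ~{size:.1f} GB of weights; fits in {verdicts}")
```

Under these assumptions, F16 needs about 16.1 GB for the weights and misses the 12 GB budget, while Q4_K_M needs about 4.9 GB and fits all three.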

FAQ

Q: What is quantization and how does it affect performance?

A: Quantization reduces the numerical precision of the model's weights (for example, from 16-bit floating point to about 4 bits per weight), which makes the model smaller and inference faster. It's like using a ruler with fewer markings: you lose some precision but gain speed.
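The ruler analogy can be made concrete with a toy example. The Python sketch below applies simple symmetric 4-bit quantization to one small block of weights: it picks a single scale so the largest magnitude maps to 7, rounds each weight to an integer code in [-8, 7], and multiplies back by the scale to recover an approximation. This is a simplified illustration of the general idea, not the actual Q4_K_M format used by llama.cpp, which uses a more elaborate block structure; the weight values are made up.

```python
from typing import List, Tuple


def quantize_4bit(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric 4-bit quantization of one block: integer codes in [-8, 7] plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0  # largest magnitude maps to code +/-7
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Map integer codes back to approximate float weights."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.45, -0.02, 0.29]  # made-up weights
    codes, scale = quantize_4bit(block)
    approx = dequantize(codes, scale)
    max_err = max(abs(a - w) for a, w in zip(approx, block))
    print("codes:", codes)
    print(f"scale: {scale:.4f}, max abs error: {max_err:.4f}")
```

Each weight now takes 4 bits plus a share of one per-block scale instead of 16 bits. Because no value needs clamping with this scale choice, every reconstruction error is at most half the scale: that is the precision lost to the coarser "ruler".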

Q: What are the benefits of running LLMs locally?

A: Local LLM inference offers several advantages over cloud-based solutions, including improved privacy, faster iteration times, and potential cost savings.

Q: How can I install and run Llama3 8B on the A100 PCIe 80GB?

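A: A common route is llama.cpp: build it with CUDA support, download Llama3 8B in GGUF format (for example, the Q4_K_M quantization benchmarked above), and offload all model layers to the GPU. The llama.cpp README documents the build and run steps in detail, and many online tutorials walk through the same process.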
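As a starting point, the Python sketch below assembles llama.cpp command lines and prints them ready to paste into a shell, rather than running them. It assumes a CUDA-enabled llama.cpp build with the `llama-cli` and `llama-bench` binaries on your PATH (older builds named the main binary `main`) and a Llama3 8B GGUF file; the file name is a hypothetical placeholder. The flags used are `-m` (model path), `-ngl` (number of layers to offload to the GPU), `-p` (prompt) and `-n` (number of tokens to generate); options change between releases, so check `--help` for your build.

```python
import shlex
from typing import List

# Hypothetical file name: replace with the path to your own Llama3 8B GGUF file.
MODEL_PATH = "Meta-Llama-3-8B-Instruct.Q4_K_M.gguf"


def build_llama_cli_args(model_path: str, prompt: str,
                         n_predict: int = 128, gpu_layers: int = 99) -> List[str]:
    """Text generation; -ngl 99 exceeds Llama3 8B's 32 layers, so all of them go to the GPU."""
    return ["llama-cli", "-m", model_path, "-ngl", str(gpu_layers),
            "-p", prompt, "-n", str(n_predict)]


def build_llama_bench_args(model_path: str, gpu_layers: int = 99) -> List[str]:
    """Throughput benchmark reporting prompt processing and token generation in tokens/second."""
    return ["llama-bench", "-m", model_path, "-ngl", str(gpu_layers)]


if __name__ == "__main__":
    # Print shell-ready commands instead of executing them.
    print(shlex.join(build_llama_cli_args(MODEL_PATH, "Explain quantization in one sentence.")))
    print(shlex.join(build_llama_bench_args(MODEL_PATH)))
```

Running the benchmark command on your own GPU is the most direct way to compare your setup against the token generation figures in the table.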

Keywords:

Llama3 8B, NVIDIA A100 PCIe 80GB, Local LLM, Performance, Token Generation, Quantization, Inference, GPU, Generative AI, Large Language Models, NLP, Deep Learning, On-Device Inference, Chatbots, Content Generation, AI Use Cases, Practical Recommendations.