From Installation to Inference: Running Llama3 8B on NVIDIA 4090 24GB x2

Introduction

The world of Large Language Models (LLMs) is evolving rapidly, with new models and techniques emerging constantly. One of the hottest topics in this field is running LLMs locally, which lets developers and enthusiasts explore these models without relying on cloud services. This article looks at the performance of Llama3 8B on a dual NVIDIA 4090 24GB setup (two cards, 48GB of GPU memory in total), a strong combination for serious LLM experimentation.

We'll discuss the token generation speed, model size and device capabilities, and provide practical recommendations for real-world use cases. Think of this as a user manual for pushing your NVIDIA 4090 to its limits and unlocking the power of Llama3 8B.

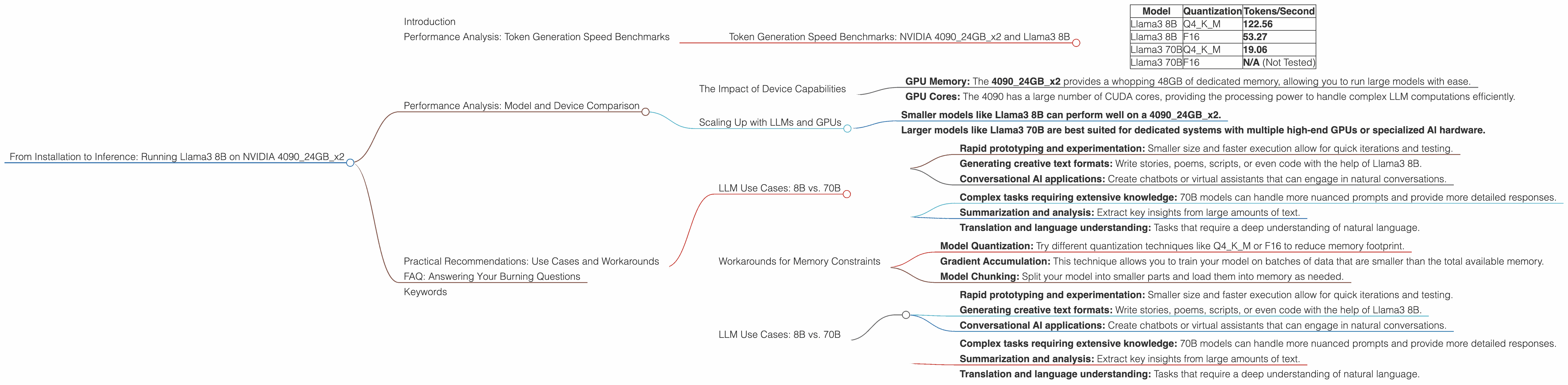

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 4090 24GB x2 and Llama3 8B

Our benchmarks show the performance of Llama3 8B running on NVIDIA 4090 24GB x2 in two weight formats: Q4_K_M (a 4-bit quantization) and F16 (unquantized 16-bit floating point). Llama3 70B is included for comparison.

Table 1: Token Generation Speed Benchmarks

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 8B | Q4_K_M | 122.56 |
| Llama3 8B | F16 | 53.27 |
| Llama3 70B | Q4_K_M | 19.06 |
| Llama3 70B | F16 | N/A (Not Tested) |
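
The article does not say how these figures were collected. As a rough illustration only, a tokens-per-second number can be measured by timing a generation run and dividing the token count by the elapsed time. In the sketch below, `tokens_per_second` and `fake_generate` are hypothetical names; `fake_generate` is a stand-in that would be replaced by a real model's streaming call.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one full generation run and return tokens per second."""
    start = time.perf_counter()
    n_tokens = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return n_tokens / elapsed


def fake_generate(n_tokens: int = 50, delay_s: float = 0.002):
    """Hypothetical stand-in for a model: yields tokens at a fixed pace."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_generate)
print(f"{rate:.1f} tokens/s")
```

A real benchmark would typically average several runs and report prompt processing separately from generation, since the two phases behave differently.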

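What are Q4_K_M and F16?

Think of quantization as a diet for your LLM. It makes the model smaller, and usually faster, by reducing the precision of the numbers used to store its weights. Q4_K_M is a quantization format that stores weights at roughly 4 bits each, plus a small overhead for scaling factors. F16 is not a quantization at all: it is the unquantized baseline, storing each weight as a 16-bit floating-point number. Fewer bits per weight means less memory and less data to move for every generated token, which is why the quantized model runs faster, at the cost of a small loss in accuracy.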
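
To make the idea concrete, here is a toy sketch of 4-bit quantization: a block of weights shares one scale factor, and each weight is stored as a small integer between -8 and 7. This is a simplified illustration, not the actual Q4_K_M scheme, which is more elaborate. The function names and sample weights are made up for the example.

```python
def quantize_4bit(block):
    """Map floats to integer codes in [-8, 7] using one shared scale per block."""
    max_abs = max(abs(x) for x in block)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(x / scale))) for x in block]
    return scale, codes


def dequantize_4bit(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights, for illustration only
weights = [0.12, -0.53, 0.97, -0.08, 0.31, -0.76, 0.44, 0.02]
scale, codes = quantize_4bit(weights)
restored = dequantize_4bit(scale, codes)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("codes:", codes)
print(f"max round-trip error: {max_error:.4f}")
```

Each code fits in 4 bits instead of 16, and the round-trip error stays within half a quantization step. That small error is the precision being traded away for the memory saving.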

Key Observations:

- Llama3 8B Q4_K_M reaches 122.56 tokens per second, about 2.3 times faster than the F16 version.

- Llama3 8B F16 is still a powerful engine, generating 53.27 tokens per second.

- Llama3 70B Q4_K_M generates 19.06 tokens per second. The 70B model is much larger than the 8B model, resulting in slower processing.

This data shows that quantization buys a large speedup on the 8B model. For Llama3 70B it is less an optimization than a requirement: at 16 bits per weight, the 70B weights alone take roughly 140GB, far more than the 48GB available, so a 4-bit format is what makes the model runnable on this setup at all.
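
The arithmetic behind these comparisons is simple enough to check directly. The sketch below uses only the numbers from Table 1; the 500-token response length is an arbitrary choice for illustration.

```python
# Tokens per second, taken from Table 1
benchmarks = {
    ("Llama3 8B", "Q4_K_M"): 122.56,
    ("Llama3 8B", "F16"): 53.27,
    ("Llama3 70B", "Q4_K_M"): 19.06,
}


def seconds_for(tokens, tokens_per_second):
    """Time needed to generate a given number of tokens at a steady rate."""
    return tokens / tokens_per_second


speedup = benchmarks[("Llama3 8B", "Q4_K_M")] / benchmarks[("Llama3 8B", "F16")]
print(f"8B Q4_K_M vs F16 speedup: {speedup:.2f}x")  # 2.30x

for (model, quant), tps in benchmarks.items():
    # 500 tokens is an arbitrary example response length
    print(f"{model} {quant}: {seconds_for(500, tps):.1f} s for 500 tokens")
```

At these rates a 500-token answer takes about 4 seconds on the quantized 8B model, about 9 seconds at F16, and about 26 seconds on the quantized 70B model.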

Performance Analysis: Model and Device Comparison

The Impact of Device Capabilities

The NVIDIA 4090 24GB x2 setup provides ample memory and processing power for running large language models. It is particularly well-suited to smaller models like Llama3 8B, which fits comfortably in GPU memory even at F16.

Key Factors:

- GPU Memory: The two 4090 cards provide 48GB of dedicated memory in total, 24GB per card. Any model larger than 24GB has to be split across both GPUs.

- GPU Cores: Each 4090 has 16,384 CUDA cores, providing the processing power to handle LLM computations efficiently.

However, even 48GB becomes the limiting factor with larger models.
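
Model weights are not the only thing that occupies GPU memory. During generation, the runtime also keeps a key/value cache that grows with the context length. The sketch below estimates its size using the standard formula for a transformer with grouped-query attention. The architecture values (32 layers, 8 key/value heads, head dimension 128) are assumed from the published Llama 3 8B configuration, and the 8192-token context and 16-bit cache are illustrative choices.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len, bytes_per_value=2):
    """Estimate key/value cache size for one sequence.

    The leading factor of 2 accounts for one key tensor and one value
    tensor per layer.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


# Assumed Llama 3 8B architecture values; 16-bit cache entries
size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, context_len=8192)
print(f"{size / 2**30:.2f} GiB")  # 1.00 GiB
```

Under these assumptions the cache is modest for a single 8B conversation, but it scales linearly with context length and with the number of sequences served at once.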

Scaling Up with LLMs and GPUs

Picture this: you're trying to squeeze a giant elephant into a tiny car. It won't work, right? Similarly, running a massive LLM on a small, less powerful GPU is challenging. Just like the elephant needs a larger vehicle, larger LLMs require more powerful, specialized hardware.

Here's the takeaway:

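- Smaller models like Llama3 8B perform well on the 4090 24GB x2 setup, in both Q4_K_M and F16.

- Larger models like Llama3 70B run on this setup only when quantized, and at a much lower speed. For F16 weights or higher throughput, they are best suited to dedicated systems with more high-end GPUs or specialized AI hardware.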
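
A quick back-of-the-envelope check shows which combinations can fit in 48GB at all. This is a rough sketch under stated assumptions: parameter counts are the nominal 8 and 70 billion, and the 4.8 bits per weight for Q4_K_M is an assumed average rather than an exact figure. It counts weights only and ignores the key/value cache and runtime overhead.

```python
GPU_MEMORY_GB = 2 * 24  # two 24GB cards

# Approximate bits per weight; the Q4_K_M value is an assumed average
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.8}

# Nominal parameter counts, in billions
PARAMS_BILLIONS = {"Llama3 8B": 8.0, "Llama3 70B": 70.0}


def weight_gb(params_billions, bits_per_weight):
    """Approximate weight size in GB (billions of parameters times bytes each)."""
    return params_billions * bits_per_weight / 8


for model, params in PARAMS_BILLIONS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{model} {quant}: ~{size:.0f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

With these estimates, only Llama3 70B at F16 (about 140GB) falls outside the 48GB budget, while the quantized 70B model fits with only a few gigabytes to spare.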

Practical Recommendations: Use Cases and Workarounds

LLM Use Cases: 8B vs. 70B

Llama3 8B is a fantastic choice for:

- Rapid prototyping and experimentation: Smaller size and faster execution allow for quick iterations and testing.

- Generating creative text formats: Write stories, poems, scripts, or even code with the help of Llama3 8B.

- Conversational AI applications: Create chatbots or virtual assistants that can engage in natural conversations.

Llama3 70B shines in:

- Complex tasks requiring extensive knowledge: 70B models can handle more nuanced prompts and provide more detailed responses.

- Summarization and analysis: Extract key insights from large amounts of text.

- Translation and language understanding: Tasks that require a deep understanding of natural language.

Workarounds for Memory Constraints

When you encounter "out of memory" errors:

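- Model Quantization: Use a lower-precision format such as Q4_K_M instead of F16 to shrink the memory footprint.

- Gradient Accumulation: This applies to training and fine-tuning rather than inference. It processes several small micro-batches and sums their gradients before each weight update, giving the effect of a large batch without holding it in memory all at once.

- Model Chunking: Split the model's layers across both GPUs, or keep some layers in CPU memory, so that no single device has to hold the whole model. Layers left on the CPU run much more slowly.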
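
The chunking idea can be sketched as a simple planning step: given the size of one layer and the free memory on each GPU, decide how many layers go on each device and how many are left for the CPU. `plan_layer_split` is a hypothetical helper, not part of any library, and the figures (80 layers at about 0.525GB each, 22GB usable per card) are assumptions chosen to resemble a 4-bit 70B model on this setup. Runtimes such as llama.cpp expose their own options for controlling this kind of split.

```python
def plan_layer_split(n_layers, layer_gb, free_gb_per_gpu):
    """Greedily assign layers to GPUs in order.

    Returns (layers per GPU, layers left over for the CPU).
    """
    remaining = n_layers
    per_gpu = []
    for free_gb in free_gb_per_gpu:
        fit = min(remaining, int(free_gb // layer_gb))  # whole layers only
        per_gpu.append(fit)
        remaining -= fit
    return per_gpu, remaining


# Assumed figures: 80 layers of ~0.525 GB each, 22 GB usable on each card
print(plan_layer_split(80, 0.525, [22, 22]))  # ([41, 39], 0)

# With only 12 GB free per card, some layers spill over to the CPU
print(plan_layer_split(80, 0.525, [12, 12]))  # ([22, 22], 36)
```

A real runtime also has to place embeddings, the output layer, and the key/value cache, so actual splits leave more headroom than this sketch suggests.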

FAQ: Answering Your Burning Questions

Q: What is Llama3 8B, and why should I care?

A: Llama3 8B is an openly available LLM from Meta with 8 billion parameters. It's known for its efficiency and ability to generate high-quality text, and it is small enough to run on consumer GPUs.

Q: Is running LLMs locally always better than using cloud services?

A: Not necessarily. Cloud services offer convenience, scalability, and access to massive computing resources. Local setups, on the other hand, provide control, privacy, and predictable costs once the hardware is paid for.

Q: What other hardware besides NVIDIA 4090 24GB x2 can I use for running LLMs?

A: You can explore other GPUs like the NVIDIA A100 or A40, or even CPUs like the AMD EPYC or Intel Xeon, though CPU inference is considerably slower.

Q: Where can I find more information about getting started with LLMs?

A: There are fantastic resources available online. Check out the official documentation of these LLMs, communities like Discord and Reddit, and blogs from AI enthusiasts.

Keywords

LLM, Llama3, Llama3 8B, Llama3 70B, NVIDIA 4090, NVIDIA 4090 24GB x2, GPU, CUDA Cores, Q4_K_M, F16, Token Generation Speed, Performance Benchmarks, Local Inference, Model Quantization, Gradient Accumulation, Model Chunking, Use Cases, AI Hardware, Open Source, AI Enthusiasts, AI Development, Conversational AI, AI Chatbots, Deep Learning, AI Research, AI Applications, Natural Language Processing (NLP)