From Installation to Inference: Running Llama3 8B on NVIDIA 4080 16GB

Welcome, fellow AI enthusiasts! This deep dive will guide you through setting up and running the Llama3 8B language model on an NVIDIA 4080_16GB GPU. We'll explore the performance characteristics of this combination, analyze token generation speeds across quantization levels, and discuss practical considerations for real-world use cases.

Introduction

The world of large language models (LLMs) is booming, and with models like Llama3 8B becoming more accessible, developers are exploring exciting new possibilities in natural language processing (NLP). This article is your ultimate guide to running Llama3 8B on a powerful GPU, empowering you to unleash the potential of this cutting-edge AI technology.

Performance Analysis: Token Generation Speed Benchmarks

Let's delve into the heart of Llama3 8B's performance when hosted on an NVIDIA 4080_16GB GPU, focusing on the speed at which the model generates tokens. This is a crucial metric for understanding how quickly the model can respond to user queries.
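To reproduce figures like the ones in the table below on your own hardware, you need a consistent way to count tokens per second. The following is a minimal sketch, assuming your inference runtime exposes a streaming iterator that yields one token at a time. `fake_stream` is a hypothetical stand-in for a real model. The clock starts at the first token, so prompt processing (the wait before the first token) is excluded and only generation speed is measured.

```python
import time
from typing import Iterable, Iterator, Optional


def generation_speed(stream: Iterable[str]) -> float:
    """Return generated tokens per second, timed from the first token to the last.

    Time spent before the first token (prompt processing) is excluded,
    so the result reflects token generation speed only.
    """
    first_ts: Optional[float] = None
    last_ts: Optional[float] = None
    count = 0
    for _token in stream:
        now = time.perf_counter()
        if first_ts is None:
            first_ts = now
        last_ts = now
        count += 1
    # At least two tokens are needed to measure an interval between them.
    if count < 2 or first_ts is None or last_ts is None or last_ts <= first_ts:
        return 0.0
    return (count - 1) / (last_ts - first_ts)


def fake_stream(n_tokens: int, delay_s: float) -> Iterator[str]:
    """Hypothetical stand-in for a real model: yields a token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


speed = generation_speed(fake_stream(n_tokens=20, delay_s=0.01))
print(f"{speed:.1f} tokens/second")
```

With a real runtime you would pass its streaming output in place of `fake_stream`, and it is worth averaging over several prompts, since the first call often includes warm-up costs.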

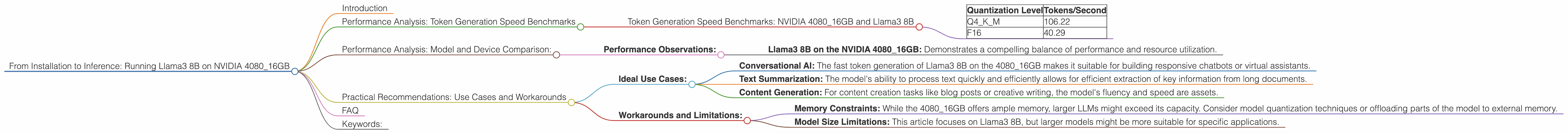

Token Generation Speed Benchmarks: NVIDIA 4080_16GB and Llama3 8B

The following table summarizes the token generation speed of Llama3 8B, measured in tokens per second, across different quantization levels on the NVIDIA 4080_16GB GPU.

| Quantization Level | Tokens/Second |
|---|---|
| Q4_K_M | 106.22 |
| F16 | 40.29 |

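Interpretation: The data shows that Llama3 8B generates tokens about 2.6 times faster with Q4_K_M quantization (106.22 tokens/second) than at F16 (40.29 tokens/second). Quantization is a technique that compresses the model's weights by storing them with fewer bits, reducing memory usage and improving inference speed. F16 is the unquantized baseline, storing each weight as a 16-bit floating-point number. Q4_K_M stores most weights as 4-bit integers in blocks that share scale factors and keeps a few tensors at higher precision, so it averages slightly under 5 bits per weight.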
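The idea behind 4-bit block quantization can be sketched in a few lines. This is a simplified, hypothetical scheme closer in spirit to llama.cpp's basic Q4_0 format than to Q4_K_M: each block of 32 weights shares one scale, and each weight is rounded to a signed 4-bit integer in [-8, 7]. Q4_K_M's real layout (larger super-blocks whose sub-block scales and minimums are themselves quantized) is more elaborate, so treat this as an illustration of the principle rather than the actual format. The 32-weight block and 16-bit scale are assumptions of the sketch.

```python
import math
from typing import List, Tuple

BLOCK_SIZE = 32       # weights that share one scale factor (assumed block size)
SCALE_BITS = 16       # assume the scale is stored as a 16-bit float
Q_MIN, Q_MAX = -8, 7  # range of a signed 4-bit integer


def quantize_block(weights: List[float]) -> Tuple[float, List[int]]:
    """Map one block of floats to a shared scale plus 4-bit integers."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / Q_MAX if max_abs > 0 else 1.0
    q = [max(Q_MIN, min(Q_MAX, round(w / scale))) for w in weights]
    return scale, q


def dequantize_block(scale: float, q: List[int]) -> List[float]:
    """Recover approximate weights; each is off by at most scale / 2."""
    return [scale * v for v in q]


def bits_per_weight(block_size: int = BLOCK_SIZE) -> float:
    """Storage cost: 4 bits per weight plus the shared scale, amortized over the block."""
    return (block_size * 4 + SCALE_BITS) / block_size


weights = [0.1 * math.sin(i) for i in range(BLOCK_SIZE)]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(f"bits/weight: {bits_per_weight()}, max error: {max_err:.4f} (scale {scale:.4f})")
```

Compared with 16 bits per weight for F16, this toy layout needs 4.5 bits per weight, at the cost of a small rounding error in every weight.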

Statistics and Analogies: Imagine you're trying to build a sandcastle. You can use large, bulky bricks (F16) or smaller, lighter pebbles (Q4_K_M). The pebbles are much easier to maneuver, allowing you to build faster. Similarly, during token generation the GPU must read nearly all of the model's weights from memory for every new token, so speed is limited largely by memory bandwidth. Q4_K_M weights take up roughly a third of the space of F16 weights, so far less data has to be moved for each token.
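The bandwidth argument can be turned into a back-of-the-envelope estimate: if every generated token requires reading all weights from VRAM once, then bandwidth divided by model size gives an upper bound on tokens per second. The inputs below are assumptions, not measurements from this article: about 8.03 billion parameters for Llama3 8B, the RTX 4080's published memory bandwidth of 716.8 GB/s, and roughly 4.9 bits per weight for Q4_K_M. The estimate ignores KV-cache reads and compute, so real results should land below it.

```python
PARAMS = 8.03e9         # assumed Llama3 8B parameter count
BANDWIDTH_GB_S = 716.8  # assumed RTX 4080 16GB spec memory bandwidth (GB/s)

# Measured tokens/second from the benchmark table, paired with assumed bits per weight.
MEASURED = {
    "Q4_K_M": (4.9, 106.22),  # ~4.9 bits/weight is an approximation
    "F16": (16.0, 40.29),
}


def weight_bytes(params: float, bits_per_weight: float) -> float:
    """Bytes needed to store all weights at the given precision."""
    return params * bits_per_weight / 8


def decode_ceiling(params: float, bits_per_weight: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/second if each token streams every weight once."""
    return bandwidth_gb_s * 1e9 / weight_bytes(params, bits_per_weight)


for name, (bpw, measured) in MEASURED.items():
    ceiling = decode_ceiling(PARAMS, bpw, BANDWIDTH_GB_S)
    print(f"{name}: ~{weight_bytes(PARAMS, bpw) / 1e9:.1f} GB of weights, "
          f"ceiling ~{ceiling:.0f} tok/s, measured {measured} ({measured / ceiling:.0%})")

print(f"measured speedup Q4_K_M vs F16: {106.22 / 40.29:.2f}x")
```

Under these assumptions, F16 reaches roughly 90% of its ceiling (about 45 tokens/second), while Q4_K_M reaches roughly 73% of its ceiling (about 146 tokens/second). One plausible reading is that F16 generation is almost purely bandwidth-bound, whereas at 4 bits other per-token costs such as dequantization start to matter, which would explain why the measured speedup (about 2.6x) is smaller than the roughly 3.3x reduction in weight size.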

Performance Analysis: Model and Device Comparison

While this article focuses on the NVIDIA 4080_16GB, it would be useful to place its performance alongside other model-device combinations. Unfortunately, we don't have benchmark data for other devices or model sizes, so the observations below cover only Llama3 8B on the 4080_16GB.

Performance Observations:

- Llama3 8B on the NVIDIA 4080_16GB: Delivers 106.22 tokens/second with Q4_K_M and 40.29 tokens/second at F16, a compelling balance of speed and resource utilization for a single consumer GPU.

Practical Recommendations: Use Cases and Workarounds

Now that we've analyzed the performance characteristics of Llama3 8B on the NVIDIA 4080_16GB, let's discuss practical recommendations for use cases and potential workarounds.

Ideal Use Cases:

- Conversational AI: The fast token generation of Llama3 8B on the 4080_16GB makes it suitable for building responsive chatbots or virtual assistants.

- Text Summarization: Fast token generation helps when extracting key information from long documents, though overall speed also depends on how quickly the model processes the input prompt, which these benchmarks do not measure.

- Content Generation: For content creation tasks like blog posts or creative writing, the model's fluency and speed are assets.
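To put "responsive" into numbers, the measured speeds translate directly into how long a user waits for a complete reply. This sketch assumes a hypothetical 300-token answer and treats the time to first token as a separate input, since prompt processing is not covered by the benchmarks above.

```python
def reply_seconds(n_tokens: int, tokens_per_second: float,
                  time_to_first_token_s: float = 0.0) -> float:
    """Wall-clock time to stream a full reply: startup latency plus generation time."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return time_to_first_token_s + n_tokens / tokens_per_second


REPLY_TOKENS = 300  # hypothetical length of a chatbot answer

for name, tps in {"Q4_K_M": 106.22, "F16": 40.29}.items():
    print(f"{name}: {reply_seconds(REPLY_TOKENS, tps):.1f} s for {REPLY_TOKENS} tokens")
```

Ignoring time to first token, that works out to about 2.8 seconds with Q4_K_M versus about 7.4 seconds at F16. Streaming the output token by token makes either feel faster, because the user can start reading immediately.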

Workarounds and Limitations:

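- Memory Constraints: The 4080_16GB's 16GB of VRAM holds Llama3 8B comfortably at Q4_K_M and only just at F16, and larger LLMs might exceed its capacity entirely. Consider quantizing the model or offloading some of its layers to system RAM (llama.cpp's `--n-gpu-layers` option controls how many layers stay on the GPU), keeping in mind that offloaded layers run considerably slower.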

- Model Size Limitations: This article focuses on Llama3 8B, but larger models might produce better results for demanding applications, at the cost of more memory and slower generation.
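Whether a model fits, and how many layers to offload when it doesn't, can be estimated from its size and context length. This is a rough sketch under stated assumptions: the architecture values (32 layers and 8 key-value heads of dimension 128 for Llama3 8B; 80 layers and about 70.6 billion parameters for Llama3 70B) are taken from the published model configurations and should be double-checked, ~4.9 bits per weight approximates Q4_K_M, and the 1 GiB reserve for runtime buffers is a guess. The helper `gpu_layers_that_fit` is hypothetical; its result is the kind of value you might pass to llama.cpp's `--n-gpu-layers` option.

```python
from dataclasses import dataclass

GIB = 2**30


@dataclass
class ModelShape:
    params: float    # total parameter count
    n_layers: int    # transformer blocks
    n_kv_heads: int  # key/value heads (grouped-query attention)
    head_dim: int    # dimension per attention head


# Assumed architecture values; check the model's config before relying on them.
LLAMA3_8B = ModelShape(params=8.03e9, n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B = ModelShape(params=70.6e9, n_layers=80, n_kv_heads=8, head_dim=128)


def kv_cache_bytes(m: ModelShape, n_ctx: int, bytes_per_elem: int = 2) -> int:
    """Keys and values for every layer and position, stored at 16 bits by default."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * n_ctx * bytes_per_elem


def gpu_layers_that_fit(m: ModelShape, bits_per_weight: float, vram_bytes: int,
                        n_ctx: int, reserve_bytes: int = 1 * GIB) -> int:
    """Rough number of layers that fit on the GPU; the rest would stay in system RAM.

    Simplification: weights are treated as evenly spread across layers, and the
    whole KV cache is assumed to live on the GPU.
    """
    per_layer = m.params * bits_per_weight / 8 / m.n_layers
    budget = vram_bytes - reserve_bytes - kv_cache_bytes(m, n_ctx)
    if budget <= 0:
        return 0
    return min(m.n_layers, int(budget // per_layer))


for name, shape in {"Llama3 8B": LLAMA3_8B, "Llama3 70B": LLAMA3_70B}.items():
    layers = gpu_layers_that_fit(shape, 4.9, 16 * GIB, n_ctx=8192)
    print(f"{name} @ ~4.9 bits/weight: {layers}/{shape.n_layers} layers on a 16 GiB GPU")
```

Under these assumptions, Llama3 8B at ~4.9 bits per weight fits entirely with an 8K context, while Llama3 70B would keep only about 24 of its 80 layers on the GPU, and the layers served from system RAM would make generation much slower.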

FAQ

Q: What is quantization and why is it important? A: Quantization is a technique that compresses the weights of a large language model by representing them with fewer bits. Think of the sandcastle analogy: lighter pebbles are quicker to move than bulky bricks. Quantization enables faster inference and lower memory usage, making it easier to deploy models on consumer hardware.

Q: How do I choose the right model and device for my project? A: Carefully consider the requirements of your project:

- Model Size: Larger models offer greater capabilities but require more resources.

- Performance Demands: The speed of token generation and prompt processing is crucial for real-time applications.

- Device Specifications: The GPU's memory capacity, memory bandwidth, and compute power are important factors to consider.
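These criteria can be combined into a simple filter: keep only the options whose weights fit in VRAM with some headroom and that meet your speed target, then prefer the highest precision as a rough proxy for output quality. This is a hypothetical sketch: the bits-per-weight values and the 8.03 billion parameter count are approximations, the tokens/second figures are the measurements from this article, and the 1 GiB headroom is an arbitrary choice.

```python
from typing import List, NamedTuple

GIB = 2**30
LLAMA3_8B_PARAMS = 8.03e9  # assumed parameter count


class Option(NamedTuple):
    name: str
    bits_per_weight: float  # approximate storage cost per weight
    tokens_per_s: float     # measured generation speed on the target GPU


OPTIONS = [
    Option("Q4_K_M", 4.9, 106.22),
    Option("F16", 16.0, 40.29),
]


def viable_options(options: List[Option], params: float, vram_bytes: int,
                   min_tokens_per_s: float, headroom_bytes: int = 1 * GIB) -> List[Option]:
    """Options that fit in VRAM and meet the speed target, highest precision first."""
    def fits(o: Option) -> bool:
        return params * o.bits_per_weight / 8 + headroom_bytes <= vram_bytes

    chosen = [o for o in options if fits(o) and o.tokens_per_s >= min_tokens_per_s]
    return sorted(chosen, key=lambda o: o.bits_per_weight, reverse=True)


for target in (30, 60):
    names = [o.name for o in viable_options(OPTIONS, LLAMA3_8B_PARAMS, 16 * GIB, target)]
    print(f">= {target} tok/s on 16 GiB: {names}")
```

For a real-time chatbot that needs at least 60 tokens/second, only Q4_K_M qualifies; relax the target to 30 tokens/second and F16 also fits on a 16 GiB card under these assumptions, although with very little room to spare.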

Q: What are some resources for further learning? A: Explore these resources for diving deeper into the world of LLMs:

- Hugging Face Model Hub: A vast repository of pre-trained models (https://huggingface.co/)

- OpenAI API: A powerful API for interacting with LLMs (https://platform.openai.com/)

- Llama.cpp: A popular and efficient library for running Llama models on various devices (https://github.com/ggerganov/llama.cpp)

Keywords:

Llama3 8B, NVIDIA 4080_16GB, GPU, LLMs, NLP, token generation speed, quantization, Q4_K_M, F16, performance benchmarks, deployment, conversational AI, text summarization, content generation, memory constraints, model size, practical recommendations.