From Installation to Inference: Running Llama3 8B on NVIDIA 3080 Ti 12GB

Introduction

Welcome, fellow AI enthusiasts! This deep dive explores the world of running Llama3 8B, a powerful language model, on the NVIDIA 3080 Ti 12GB graphics card. We'll uncover the intricate dance between hardware and software, analyzing performance, exploring practical use cases, and providing insights to help you unlock the full potential of your local LLM setup.

Imagine a world where you can interact with a sophisticated language model, generating creative text, translating languages, summarizing vast amounts of data, and much more – all without depending on cloud services. Running Llama 3 8B locally brings this future closer, allowing you to experiment, tailor, and fine-tune models to your specific needs.

This article is your guide to deploying Llama 3 8B on your NVIDIA 3080 Ti 12GB. It covers performance benchmarks, offers practical recommendations, and answers frequently asked questions. Let's dive in!

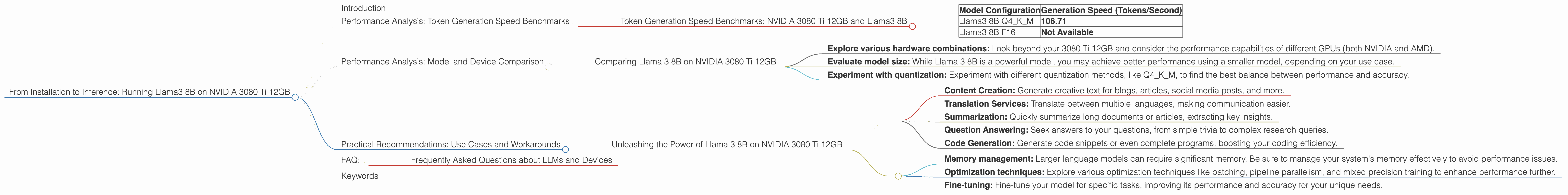

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

Token generation speed measures how many tokens the model produces per second once it starts writing its response. It's like the language processing equivalent of miles per hour – the higher the speed, the sooner you get the full answer.

| Model Configuration | Generation Speed (Tokens/Second) |
|---|---|
| Llama3 8B Q4KM | 106.71 |
| Llama3 8B F16 | Not Available |

Key Takeaways:

- Llama3 8B with Q4KM quantization generates 106.71 tokens per second on the NVIDIA 3080 Ti 12GB. At that rate a 500-token answer arrives in under five seconds, far faster than a person can read it, which is comfortable for interactive text generation and question answering.

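- Unfortunately, we lack data for the Llama3 8B F16 configuration, which stores the weights unquantized at 16 bits each. At 2 bytes per parameter, an 8B model needs roughly 16 GB for its weights alone, more than the card's 12 GB of VRAM, so it likely cannot run fully on this GPU. F16 preserves the most model quality but costs the most memory and speed, so the right balance depends on your specific needs.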

Analogy: Imagine a super-fast typist writing an email, generating words at a rapid pace. The token generation speed is like the typist's typing speed – the higher it is, the faster the email is written.
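To put the benchmark figure in perspective, the arithmetic below converts a tokens-per-second rate into wall-clock time. It is a minimal sketch: the function name `generation_time_seconds` and the response lengths are illustrative assumptions, and the estimate ignores prompt processing time, which the table does not report.

```python
# Measured generation speed from the benchmark table (Llama3 8B Q4KM).
TOKENS_PER_SECOND = 106.71


def generation_time_seconds(num_tokens: int,
                            tokens_per_second: float = TOKENS_PER_SECOND) -> float:
    """Estimate the seconds needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


if __name__ == "__main__":
    # The response lengths below are assumed examples, not benchmark data.
    for n in (100, 500, 2000):
        print(f"{n} tokens: {generation_time_seconds(n):.2f} s")
```

Real throughput varies with context length and sampling settings, so treat the result as a rough guide rather than a guarantee.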

Performance Analysis: Model and Device Comparison

Comparing Llama 3 8B on NVIDIA 3080 Ti 12GB

While the NVIDIA 3080 Ti 12GB shows promising performance with Llama3 8B, it's important to consider the context. How does it stack up against other potential configurations?

Unfortunately, we don't have data for other models or devices to provide a direct comparison in this article. It's vital to consult additional resources and benchmark testing results for other hardware combinations to get a more comprehensive view of performance.

Practical Recommendations:

- Explore various hardware combinations: Look beyond your 3080 Ti 12GB and consider the performance capabilities of different GPUs (both NVIDIA and AMD).

- Evaluate model size: While Llama 3 8B is a powerful model, a smaller model may run faster and still be good enough, depending on your use case.

- Experiment with quantization: Try different quantization methods, such as Q4KM, to find the best balance between performance and accuracy.
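One way to compare the options above on your own card is to time generation yourself. The sketch below is a hedged, backend-agnostic harness: `measure_tokens_per_second` accepts any function that streams tokens for a prompt, and `fake_generate` is a hypothetical stand-in for a real model call, used here only so the example runs without a GPU. Note that the timing includes any prompt processing your backend performs before the first token.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(generate: Callable[[str], Iterable[str]],
                              prompt: str) -> float:
    """Time one generation call and return generated tokens per second."""
    start = time.perf_counter()
    token_count = sum(1 for _ in generate(prompt))  # consume the whole stream
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise RuntimeError("elapsed time too small to measure")
    return token_count / elapsed


def fake_generate(prompt: str) -> Iterable[str]:
    """Hypothetical stand-in for a model: yields 50 tokens about 1 ms apart."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    speed = measure_tokens_per_second(fake_generate, "Hello")
    print(f"{speed:.1f} tokens/s")
```

To benchmark a real setup, replace `fake_generate` with a wrapper around your inference library's streaming call and run the same prompt against each quantization or model size you want to compare.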

Practical Recommendations: Use Cases and Workarounds

Unleashing the Power of Llama 3 8B on NVIDIA 3080 Ti 12GB

The combination of Llama 3 8B and your NVIDIA 3080 Ti 12GB opens a range of exciting possibilities for diverse use cases:

- Content Creation: Generate creative text for blogs, articles, social media posts, and more.

- Translation Services: Translate between multiple languages, making communication easier.

- Summarization: Quickly summarize long documents or articles, extracting key insights.

- Question Answering: Seek answers to your questions, from simple trivia to complex research queries.

- Code Generation: Generate code snippets or even complete programs, boosting your coding efficiency.

Workarounds and Considerations:

- Memory management: The card's 12 GB of VRAM must hold the model weights plus the key-value cache, which grows with context length. Keep the total within that budget, because spilling into system RAM slows generation sharply.

- Optimization techniques: Explore techniques such as request batching, keeping every model layer on the GPU, and reduced-precision inference to improve performance further. Pipeline parallelism becomes relevant only if you add a second GPU.

- Fine-tuning: Fine-tune your model for specific tasks, improving its performance and accuracy for your unique needs.
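The memory management point above can be made concrete with a rough VRAM budget. This is a back-of-the-envelope sketch, not a measurement: the parameter count, layer count, KV-head count, and head dimension are assumed Llama 3 8B architecture values that this article does not state, the 4.85 bits per weight for Q4KM is an approximate average, and the 1 GiB overhead is a guess for buffers and the desktop.

```python
GIB = 1024 ** 3

# Assumed Llama 3 8B architecture values (not stated in this article).
N_PARAMS = 8.03e9
N_LAYERS = 32
N_KV_HEADS = 8
HEAD_DIM = 128


def weight_bytes(bits_per_weight: float) -> float:
    """Approximate size of the model weights in bytes."""
    return N_PARAMS * bits_per_weight / 8


def kv_cache_bytes(context_tokens: int, bytes_per_value: int = 2) -> int:
    """KV cache size: keys and values (factor 2) for every layer and KV head."""
    return 2 * N_LAYERS * N_KV_HEADS * HEAD_DIM * bytes_per_value * context_tokens


def fits(bits_per_weight: float, context_tokens: int,
         vram_gib: float = 12.0, overhead_gib: float = 1.0) -> bool:
    """Return True if weights + KV cache + assumed overhead fit in VRAM."""
    total = weight_bytes(bits_per_weight) + kv_cache_bytes(context_tokens)
    return total / GIB + overhead_gib <= vram_gib


if __name__ == "__main__":
    # 16 bits = F16; 4.85 bits is an assumed average for Q4KM.
    for name, bits in (("F16", 16.0), ("Q4KM", 4.85)):
        size = weight_bytes(bits) / GIB
        print(f"{name}: weights ~{size:.1f} GiB, fits with 8192 context: {fits(bits, 8192)}")
```

Under these assumptions the F16 weights alone exceed 12 GB, while the 4-bit model leaves several gigabytes free for a longer context.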

FAQ:

Frequently Asked Questions about LLMs and Devices

Q: What does Q4KM quantization mean?

A: Quantization reduces a model's size by using fewer bits to represent each parameter. Q4KM is shorthand for Q4_K_M, a quantization format from llama.cpp. "Q4" means the weights are stored at roughly 4 bits each, "K" refers to the k-quant scheme, which quantizes weights in small blocks that share scaling factors, and "M" denotes the medium variant, which keeps some tensors at higher precision to preserve quality. The smaller model needs less memory and usually generates faster, at the cost of a small loss in accuracy.
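The core idea of block-wise 4-bit quantization can be sketched in a few lines. This is a deliberately simplified illustration, not the actual Q4_K_M algorithm, which uses nested blocks with their own quantized scales and offsets. The function names and sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] that share one scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale: float, quantized: List[int]) -> List[float]:
    """Recover approximate floats from the 4-bit integers and the scale."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [0.7, -0.3, 0.0, 0.12]  # made-up sample values
    scale, q = quantize_block(weights)
    restored = dequantize_block(scale, q)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

Each weight now costs 4 bits plus a small share of the block's scale, and the rounding error per weight is at most half the scale. That bounded error is the accuracy you trade for the smaller size.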

Q: What are the limitations of running LLMs locally?

A: Running LLMs locally can be challenging. It requires powerful hardware, extensive memory, and specialized skills to manage and optimize the model efficiently. Additionally, the hardware may become a bottleneck, limiting the model's ability to perform complex tasks.

Q: Is it worth running LLMs locally?

A: The decision to run LLMs locally depends on your specific needs and priorities. If you require responsiveness, privacy, and control over the model, local deployment is an excellent option. However, if you need models too large for your hardware or must serve many users at once, cloud-based LLMs may be more suitable.

Q: What are some future trends in local LLM deployment?

A: The future holds exciting prospects for local LLM deployment. We can expect continued advancements in hardware, software, and optimization techniques, leading to even faster processing speeds, smaller model sizes, and potentially lower costs.

Keywords

Llama3 8B, NVIDIA 3080 Ti 12GB, GPU inference, Token generation speed, Q4KM quantization, performance benchmarks, local LLM deployment, language model, content creation, translation, summarization, question answering, code generation, AI, machine learning, deep learning, NLP, natural language processing.