From Installation to Inference: Running Llama3 8B on NVIDIA 3080 10GB

Introduction

The world of Large Language Models (LLMs) is exploding, with new advancements happening all the time. These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions. But running these models locally on consumer hardware can be a challenge, even for a comparatively small model like Llama3 8B.

This article dives deep into the performance of Llama3 8B running on a popular NVIDIA GeForce RTX 3080 10GB graphics card. We'll examine its token generation speed, look at how model size and quantization interact with the card's 10 GB of memory, and provide practical recommendations to help you make the best choices for your specific use cases. So, grab your coffee, put on your geek hat, and let's delve into the fascinating world of local LLMs!

Performance Analysis: Token Generation Speed Benchmarks

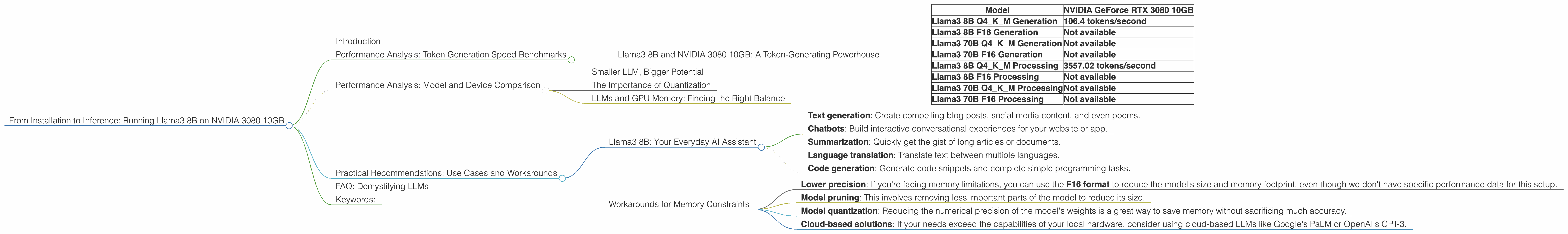

Llama3 8B and NVIDIA 3080 10GB: A Token-Generating Powerhouse

The NVIDIA GeForce RTX 3080 10GB is a popular choice for gamers and content creators, but it can also handle the demands of running smaller LLMs like the Llama3 8B model. Let's look at the raw performance numbers:

| Model and test | NVIDIA GeForce RTX 3080 10GB |
|---|---|
| Llama3 8B Q4KM Generation | 106.4 tokens/second |
| Llama3 8B F16 Generation | Not available |
| Llama3 70B Q4KM Generation | Not available |
| Llama3 70B F16 Generation | Not available |
| Llama3 8B Q4KM Processing | 3557.02 tokens/second |
| Llama3 8B F16 Processing | Not available |
| Llama3 70B Q4KM Processing | Not available |
| Llama3 70B F16 Processing | Not available |

Explanation:

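- "Generation" refers to the speed at which the model can generate new text tokens (words or parts of words) once it has read the prompt.

- "Processing" refers to the speed at which the model reads the tokens of your prompt before it starts generating. Prompt tokens can be handled in parallel, which is why this number is so much higher than the generation speed.

- "Q4KM" (usually written Q4_K_M, a quantization format from llama.cpp) signifies that the model is quantized to roughly 4 to 5 bits per weight, a technique that reduces the size of the model to save memory and improve speed. This is like compressing a picture to make it easier to share.

- "F16" represents the use of half-precision (16-bit) floating-point numbers for storage and calculations. It takes half the space of 32-bit floats, but more than three times the space of Q4KM, so it is the larger and more demanding of the two formats in this table.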
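To make the two throughput figures concrete, here is a minimal sketch that turns them into a rough response-time estimate. The workload (a 1,000-token prompt and a 300-token answer) and the function name are hypothetical, and the estimate ignores model load time and any slowdown at long context lengths.

```python
# Throughput figures from the benchmark table (tokens per second).
PROMPT_TOKENS_PER_S = 3557.02  # Llama3 8B Q4KM "Processing"
GEN_TOKENS_PER_S = 106.4       # Llama3 8B Q4KM "Generation"


def estimate_latency_s(prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    prompt_time = prompt_tokens / PROMPT_TOKENS_PER_S
    generation_time = output_tokens / GEN_TOKENS_PER_S
    return prompt_time + generation_time


# Hypothetical workload: a 1,000-token prompt and a 300-token answer.
total = estimate_latency_s(prompt_tokens=1000, output_tokens=300)
print(f"Estimated latency: {total:.2f} s")  # about 3.10 s
```

Almost all of that time is spent generating: the prompt is read in under a third of a second, while the answer takes close to three seconds.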

Observations:

- Llama3 8B Q4KM is the only configuration in the table with results, and it is fast: 106.4 tokens/second is far quicker than anyone can read. It's like having a super-powered text-generating engine in your PC!

- F16 performance data is not available. The most likely reason is memory: at 2 bytes per weight, an 8-billion-parameter model needs about 16 GB for its weights alone, which is more than the card's 10 GB. The 70B models are out of reach for the same reason.

Performance Analysis: Model and Device Comparison

Smaller LLM, Bigger Potential

It's tempting to think that larger models always perform better, but that's not always the case. The Llama3 8B stands out as a great example. Its smaller size makes it more manageable for GPUs with less memory, like the 3080 10GB. This means that even with a relatively modest setup, you can still enjoy the power of LLMs for your projects.

The Importance of Quantization

Quantization plays a critical role in achieving optimal performance. While the Llama3 8B is already smaller than its larger counterparts, quantization further reduces the size of the model by storing each weight in fewer bits. On a 10 GB card, that is the difference between a model that fits comfortably in GPU memory and one that does not fit at all.
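The size reduction is simple arithmetic: parameters times bits per weight, divided by 8 to get bytes. The sketch below assumes a round 8 billion parameters and an average of about 4.85 bits per weight for Q4KM; that second figure is an approximation, not a number from the benchmark, and real model files vary slightly.

```python
def weight_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS_8B = 8.0e9  # assumption: round parameter count for Llama3 8B

# Assumed average bits per weight; the Q4KM value is approximate.
FORMATS = {"F32": 32.0, "F16": 16.0, "Q4KM (approx.)": 4.85}

for name, bits in FORMATS.items():
    size = weight_size_gb(N_PARAMS_8B, bits)
    print(f"{name:>15}: {size:6.2f} GB")
```

Under these assumptions the F16 weights come to about 16 GB and the Q4KM weights to a little under 5 GB, before counting any memory needed at run time.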

LLMs and GPU Memory: Finding the Right Balance

The memory available on your GPU is a crucial factor when choosing an LLM for your device. A larger model like Llama3 70B needs far more memory than the 3080 10GB can provide, even when quantized, so it will either fail to load or have to spill into much slower system memory. It's important to find a balance between the model's capabilities and the available resources to achieve optimal performance.

Think of it like this: you wouldn't try to park a massive truck in a compact car garage. Selecting an LLM that's too large for your GPU is the same problem: it just won't fit!
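A rough way to check the fit before downloading anything is to add up the weights, the key/value cache that grows with context length, and some working overhead. The sketch below is a back-of-the-envelope estimate, not a measurement: the weight sizes, the 1 GB overhead allowance, and the 16-bit cache are assumptions, and the layer and head counts are the published Llama 3 architecture values as best understood here. `ModelSpec`, `kv_cache_gb`, and `fits` are hypothetical names.

```python
from dataclasses import dataclass

VRAM_GB = 10.0  # RTX 3080 10GB


@dataclass
class ModelSpec:
    name: str
    weights_gb: float  # assumed size of the quantized weights
    n_layers: int
    n_kv_heads: int
    head_dim: int


def kv_cache_gb(spec: ModelSpec, context_tokens: int, bytes_per_value: int = 2) -> float:
    """Memory for cached keys and values, assuming 16-bit cache entries."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    per_token = 2 * spec.n_layers * spec.n_kv_heads * spec.head_dim * bytes_per_value
    return per_token * context_tokens / 1e9


def fits(spec: ModelSpec, context_tokens: int,
         vram_gb: float = VRAM_GB, overhead_gb: float = 1.0) -> bool:
    """True if weights + KV cache + an assumed overhead fit in VRAM."""
    needed = spec.weights_gb + kv_cache_gb(spec, context_tokens) + overhead_gb
    return needed <= vram_gb


# Assumed figures for illustration; actual file sizes differ slightly.
LLAMA3_8B_Q4 = ModelSpec("Llama3 8B Q4KM", 4.9, n_layers=32, n_kv_heads=8, head_dim=128)
LLAMA3_70B_Q4 = ModelSpec("Llama3 70B Q4KM", 42.5, n_layers=80, n_kv_heads=8, head_dim=128)

for spec in (LLAMA3_8B_Q4, LLAMA3_70B_Q4):
    verdict = "fits" if fits(spec, context_tokens=8192) else "does not fit"
    print(f"{spec.name}: {verdict} in {VRAM_GB:.0f} GB at 8192 tokens of context")
```

With these numbers the 8B model needs roughly 7 GB at an 8,192-token context, leaving some headroom, while the 70B model is several times over budget on weights alone.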

Practical Recommendations: Use Cases and Workarounds

Llama3 8B: Your Everyday AI Assistant

The Llama3 8B is a fantastic choice for a wide range of tasks, including:

- Text generation: Create compelling blog posts, social media content, and even poems.

- Chatbots: Build interactive conversational experiences for your website or app.

- Summarization: Quickly get the gist of long articles or documents.

- Language translation: Translate text between multiple languages.

- Code generation: Generate code snippets and complete simple programming tasks.

Workarounds for Memory Constraints

If you're dealing with memory constraints, you can consider these options:

- Lower precision: Moving from 32-bit to F16 weights halves the memory footprint. For Llama3 8B on this card that is still not enough (roughly 16 GB of weights against 10 GB of GPU memory), which is likely why we don't have performance data for this setup.

- Model pruning: This involves removing less important parts of the model to reduce its size.

- Model quantization: Reducing the numerical precision of the model's weights is a great way to save memory without sacrificing much accuracy.

- Cloud-based solutions: If your needs exceed the capabilities of your local hardware, consider using cloud-based LLMs like Google's PaLM or OpenAI's GPT-3.
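To show what "reducing the numerical precision" means in practice, here is a deliberately simplified sketch of 4-bit quantization: every weight is mapped to one of a handful of integer levels that share a single scale factor. This is not the Q4KM scheme itself, which works on small blocks of weights with their own scales; the function names and the sample weights are made up for illustration.

```python
def quantize(weights, bits=4):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up sample weights, not taken from any real model.
weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.94, 0.45, 0.02]

codes, scale = quantize(weights, bits=4)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", codes)
print(f"largest round-trip error: {max_error:.3f}")
```

Each weight now needs 4 bits instead of 16 or 32, and the round-trip error stays within half a quantization step. Production formats keep that error lower by using many small blocks, each with its own scale.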

FAQ: Demystifying LLMs

Q: What is an LLM?

A: An LLM (Large Language Model) is a type of AI model trained on vast amounts of text data, enabling it to understand and generate human-like text. It's like giving a computer a massive library of books and allowing it to learn how to write and speak like humans.

Q: What is quantization?

A: Quantization is a technique used to optimize LLMs for smaller devices by storing the model's weights at lower numerical precision, for example about 4 bits per weight instead of 16. It's like taking a high-resolution image and compressing it to a smaller file size, while still retaining a good level of detail.

Q: Is running LLMs on a 3080 10GB a good idea?

A: It depends on your needs. For smaller models like a quantized Llama3 8B, a 3080 10GB is a great choice. However, if you're dealing with larger models, you might need a GPU with more memory or resort to cloud-based solutions.

Q: What are the advantages of running LLMs locally?

A: Running LLMs locally gives you greater control over your data, responses that don't depend on a network round trip or a provider's rate limits, and the ability to fine-tune models for specific tasks.

Keywords:

Llama3 8B, NVIDIA 3080 10GB, GPU, LLM, performance, token generation speed, quantization, F16, memory, practical recommendations, use cases, workarounds, cloud-based solutions