From Installation to Inference: Running Llama3 70B on NVIDIA RTX A6000 48GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. But the real magic happens when you take these models and run them on your own hardware. This is where the fun begins! Today, we're diving into local LLM deployment, specifically running the Llama3 70B model on an NVIDIA RTX A6000 48GB graphics card. We'll look at its measured token generation speed, compare it with the smaller Llama3 8B, and share practical guidance to help you get the most out of this pairing. So, buckle up, let's get started!

Performance Analysis

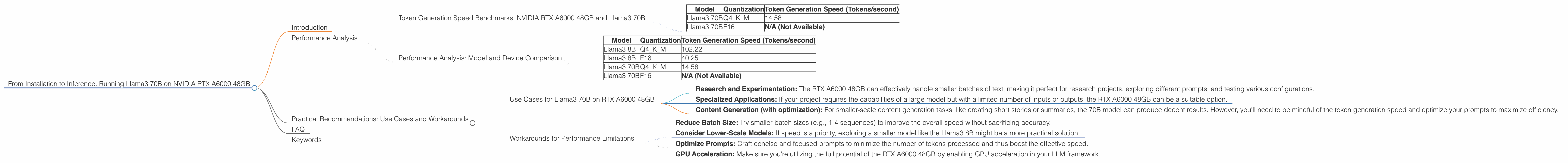

Token Generation Speed Benchmarks: NVIDIA RTX A6000 48GB and Llama3 70B

The RTX A6000 48GB is a beast of a graphics card, packing a ton of memory and raw power. It's designed for professional applications, but it also handles the intense computations required by LLMs well. We're going to explore its performance with the Llama3 70B model at two precision levels: Q4KM (a 4-bit quantization format, commonly written Q4_K_M in llama.cpp) and F16 (unquantized 16-bit floating point).

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 70B | Q4KM | 14.58 |
| Llama3 70B | F16 | N/A (Not Available) |

What does this tell us?

- Q4KM is the only configuration with a result: The Llama3 70B model with Q4KM quantization achieves a token generation speed of 14.58 tokens per second on the RTX A6000 48GB. This might not seem fast at first glance, but keep in mind we're dealing with a massive 70-billion parameter model running on a single card.

- F16 has no result: No data is available for F16. The benchmark source doesn't say why, but the most likely explanation is memory: at 2 bytes per parameter, 70 billion parameters need roughly 140 GB for the weights alone, far more than the card's 48 GB.
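
A quick back-of-the-envelope estimate helps put the missing F16 row in context. The sketch below only counts the memory needed for the weights and ignores the KV cache and runtime overhead. The parameter count is the nominal 70 billion, and the figure of about 4.85 bits per weight for Q4KM is an assumption (an approximate average, not a number from the benchmark).

```python
# Rough weight-memory estimate; ignores KV cache, activations, and runtime overhead.
GB = 1e9  # decimal gigabytes


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory needed to hold the model weights alone."""
    return n_params * bits_per_weight / 8 / GB


N_PARAMS_70B = 70e9   # nominal parameter count
VRAM_GB = 48          # RTX A6000 48GB
Q4KM_BITS = 4.85      # assumption: approximate average bits per weight for Q4KM

f16_gb = weight_memory_gb(N_PARAMS_70B, 16)
q4km_gb = weight_memory_gb(N_PARAMS_70B, Q4KM_BITS)

print(f"F16:  {f16_gb:.1f} GB of weights, fits in {VRAM_GB} GB: {f16_gb < VRAM_GB}")
print(f"Q4KM: {q4km_gb:.1f} GB of weights, fits in {VRAM_GB} GB: {q4km_gb < VRAM_GB}")
```

Under these assumptions the Q4KM weights fit on the card with a few gigabytes to spare, while the F16 weights are roughly three times too large.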

Performance Analysis: Model and Device Comparison

Let's compare the Llama3 70B performance on the RTX A6000 48GB with its smaller sibling, the Llama3 8B, to truly understand the impact of model size:

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama3 8B | Q4KM | 102.22 |
| Llama3 8B | F16 | 40.25 |
| Llama3 70B | Q4KM | 14.58 |
| Llama3 70B | F16 | N/A (Not Available) |

Observations:

- Size matters (a lot): At Q4KM, the Llama3 8B model generates 102.22 tokens per second versus 14.58 for the Llama3 70B, about 7 times faster. That is the trade-off between model size and speed: larger models need more memory and compute per token, so inference is slower.

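- Quantization makes a difference: For the Llama3 8B, the only model with both measurements, Q4KM is about 2.5 times faster than F16 (102.22 vs. 40.25 tokens per second). No such comparison is possible for the 70B because its F16 result is missing, but the 8B numbers show how much the choice of quantization level can matter.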
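
The ratios behind these observations can be checked directly from the table. This is a small sketch using only the three measured speeds; the dictionary layout is just for illustration.

```python
# Token generation speeds (tokens/second) taken from the comparison table.
speeds = {
    ("Llama3 8B", "Q4KM"): 102.22,
    ("Llama3 8B", "F16"): 40.25,
    ("Llama3 70B", "Q4KM"): 14.58,
}

# Effect of model size at the same quantization level.
size_ratio = speeds[("Llama3 8B", "Q4KM")] / speeds[("Llama3 70B", "Q4KM")]

# Effect of quantization on the same model.
quant_ratio = speeds[("Llama3 8B", "Q4KM")] / speeds[("Llama3 8B", "F16")]

print(f"8B vs 70B at Q4KM: {size_ratio:.1f}x faster")
print(f"Q4KM vs F16 on 8B: {quant_ratio:.1f}x faster")
```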

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on RTX A6000 48GB

- Research and Experimentation: The RTX A6000 48GB can effectively handle smaller batches of text, making it perfect for research projects, exploring different prompts, and testing various configurations.

- Specialized Applications: If your project requires the capabilities of a large model but with a limited number of inputs or outputs, the RTX A6000 48GB can be a suitable option.

- Content Generation (with optimization): For smaller-scale content generation tasks, like creating short stories or summaries, the 70B model can produce decent results. However, you'll need to be mindful of the token generation speed and optimize your prompts to maximize efficiency.
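
To get a feel for what 14.58 tokens per second means for these use cases, here is a rough estimate of how long a response takes to generate. The output lengths are hypothetical examples, and the estimate ignores the time spent processing the prompt, which the benchmark above does not cover.

```python
SPEED_70B_Q4KM = 14.58  # tokens/second, from the benchmark table


def generation_seconds(n_output_tokens: int,
                       tokens_per_second: float = SPEED_70B_Q4KM) -> float:
    """Estimated time to generate n_output_tokens, excluding prompt processing."""
    return n_output_tokens / tokens_per_second


# Hypothetical output lengths: a short answer, a summary, a long article.
for n_tokens in (100, 500, 2000):
    print(f"{n_tokens:>5} tokens: ~{generation_seconds(n_tokens):.0f} s")
```

A short answer comes back in a few seconds, while a 2,000-token piece takes over two minutes, which is why the speed matters more as outputs get longer.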

Workarounds for Performance Limitations

- Reduce Batch Size: Try smaller batch sizes (e.g., 1-4 sequences). Batch size doesn't change the quality of the output, but smaller batches use less memory and keep the response time of each request low.

- Consider Lower-Scale Models: If speed is a priority, exploring a smaller model like the Llama3 8B might be a more practical solution.

- Optimize Prompts: Craft concise and focused prompts to minimize the number of tokens processed and thus boost the effective speed.

- GPU Acceleration: Make sure your LLM framework is actually running the model on the RTX A6000 48GB. If some or all of the model's layers fall back to the CPU, generation will be much slower than the numbers above.
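
As one illustration of the GPU acceleration point, the sketch below builds a command line for llama.cpp's `llama-cli` tool. This assumes llama.cpp is the framework in use, which the benchmark data does not state. The flags `-m` (model file), `-p` (prompt), `-n` (tokens to generate), and `-ngl` (number of layers to offload to the GPU) are llama.cpp options; the model path and the helper function name are hypothetical. The command is only printed here, not executed.

```python
import shlex


def build_llama_cli_command(model_path: str, prompt: str,
                            n_predict: int = 256, n_gpu_layers: int = 99) -> list:
    """Build a llama-cli argument list (hypothetical helper, nothing is run)."""
    return [
        "llama-cli",
        "-m", model_path,            # path to the GGUF model file
        "-p", prompt,                # prompt text
        "-n", str(n_predict),        # number of tokens to generate
        "-ngl", str(n_gpu_layers),   # layers to offload; a large value offloads all
    ]


cmd = build_llama_cli_command(
    "models/llama3-70b-q4km.gguf",   # hypothetical path
    "Summarize the following text in two sentences.",
)
print(shlex.join(cmd))
```

Other frameworks expose the same idea under different names, so check the equivalent setting in whichever one you use.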

FAQ

Q: What is quantization and why is it important?

A: Imagine you have a super-detailed picture. It's beautiful, but it takes up a lot of space. Now, you want to send it over the internet without it taking forever. So, you simplify the picture by reducing the details, making it smaller and quicker to send. That's essentially what quantization does for LLMs. It reduces the precision of the model's parameters, making them smaller and faster to process, without losing much accuracy.
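
To make the analogy concrete, here is a toy sketch of 4-bit quantization: each weight is replaced by a small integer code plus one shared scale factor. This is plain symmetric round-to-nearest quantization chosen for illustration, not the actual Q4KM scheme, and the sample weights are made up.

```python
def quantize_4bit(weights):
    """Symmetric round-to-nearest quantization to integer codes in [-7, 7]."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # avoid a zero scale
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate weights from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"max reconstruction error: {max_error:.3f}")
```

Each code fits in 4 bits instead of 16, and the restored values are close to the originals but not identical. That small error is the detail lost in exchange for the smaller size.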

Q: Can I run Llama3 70B on my laptop's GPU?

A: Probably not. Even at 4-bit quantization, the 70B model's weights take up most of the A6000's 48 GB, and laptop GPUs typically have far less video memory than that. If the model doesn't fit, it either won't load at all or will have to run partly on the CPU, which is extremely slow. A smaller model like the Llama3 8B is a much better fit for a laptop.

Q: Why is the F16 performance not available?

A: The benchmark data doesn't say. The most likely reason is memory: in F16 every parameter takes 2 bytes, so the 70B model needs roughly 140 GB for its weights alone, which is far more than the 48 GB available on the RTX A6000.

Q: Are there any other devices I can use to run Llama3 70B?

A: Yes! You can explore options like the NVIDIA A100 and H100 for even faster performance. However, these devices are typically more expensive and require specialized setups.

Keywords

Llama3 70B, RTX A6000 48GB, NVIDIA, LLM, Large Language Model, Token Generation Speed, Quantization, Q4KM, F16, Performance Benchmark, Device Comparison, Use Cases, Workarounds, GPU Acceleration, Local Deployment, Inference, Content Generation, Research, Prompt Engineering, Model Optimization, GPU