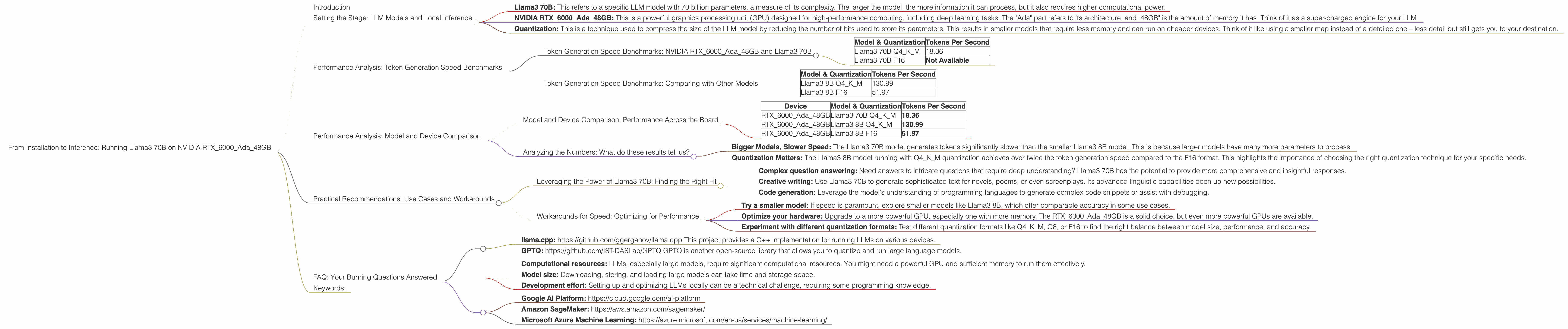

From Installation to Inference: Running Llama3 70B on NVIDIA RTX 6000 Ada 48GB

Introduction

The world of large language models (LLMs) is evolving at a breakneck pace. As these models grow in size, so does their appetite for computational resources. Running LLMs locally has become a hot topic, with enthusiasts and developers striving to squeeze the most out of their hardware. This article looks at the performance of the Llama3 70B model on the NVIDIA RTX 6000 Ada 48GB GPU and at how to get the model up and running. We'll analyze token generation speed benchmarks, compare the 70B model with the smaller Llama3 8B on the same card, and provide practical recommendations for making the most of your setup.

Setting the Stage: LLM Models and Local Inference

LLMs are neural networks trained on large amounts of text to understand and generate human-like language, and they have become increasingly popular for tasks like writing, translation, and code generation. Running LLMs locally gives you greater control over data privacy and removes the network round trip to a hosted service.

Let's break down the key terms:

- Llama3 70B: This refers to a specific LLM with 70 billion parameters, a rough measure of its capacity. Larger models can generally capture more knowledge and nuance, but they also need more memory and more computation per generated token.

- NVIDIA RTX 6000 Ada 48GB: This is a powerful graphics processing unit (GPU) designed for high-performance computing, including deep learning tasks. The "Ada" part refers to its architecture, and "48GB" is the amount of memory it has. Think of it as a super-charged engine for your LLM.

- Quantization: This is a technique used to compress the size of the LLM model by reducing the number of bits used to store its parameters. This results in smaller models that require less memory and can run on cheaper devices. Think of it like using a smaller map instead of a detailed one – less detail but still gets you to your destination.
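To make the idea of quantization concrete, here is a minimal sketch of symmetric 4-bit rounding applied to one small block of weights. It is a toy illustration only: real formats such as Q4_K_M use a more elaborate block layout, and the function names and sample values below are made up for this example.

```python
def quantize_block(weights, bits=4):
    """Symmetric round-to-nearest quantization of one block of floats."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for signed 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Each weight becomes a small integer in [-qmax, qmax].
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize_block(q, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [v * scale for v in q]


# Illustrative weights, not taken from any real model.
block = [0.12, -0.50, 0.33, 0.07, -0.21, 0.44, -0.05, 0.29]
q, scale = quantize_block(block)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(block, restored))
print(q, round(max_error, 4))
```

Each weight is stored as a small integer plus one shared scale per block, which is where the memory savings come from; the rounding error is the "less detail" in the map analogy.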

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX 6000 Ada 48GB and Llama3 70B

Token generation speed is a key metric for measuring how efficiently an LLM can generate text. It tells you how quickly the LLM can process words and produce output, ultimately impacting the speed of your AI-powered applications.
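As a rough sketch of how such a figure can be measured, the snippet below times how long it takes to consume a stream of tokens and divides the count by the elapsed time. The `fake_stream` generator is a hypothetical stand-in for a model's streaming output, not part of any real library, and this is not necessarily how the benchmark numbers in this article were collected.

```python
import time


def measure_tokens_per_second(token_stream):
    """Consume an iterable of tokens and return (count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in token_stream:
        count += 1
    elapsed = time.perf_counter() - start
    return count, (count / elapsed if elapsed > 0 else float("inf"))


def fake_stream(n_tokens, delay_s):
    """Hypothetical stand-in for a model that emits one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


count, tps = measure_tokens_per_second(fake_stream(20, 0.005))
print(f"{count} tokens at {tps:.1f} tokens/s")
```

In practice you would replace `fake_stream` with the token iterator of whatever inference library you use.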

| Model & Quantization | Tokens Per Second |
|---|---|
| Llama3 70B Q4_K_M | 18.36 |
| Llama3 70B F16 | Not Available |

Q4_K_M is a 4-bit quantization format that significantly reduces the memory footprint compared to the 16-bit F16 format. The table shows that the Llama3 70B model running on the RTX 6000 Ada 48GB achieves a token generation speed of 18.36 tokens per second with Q4_K_M quantization. This performance is noteworthy given the size and complexity of the Llama3 70B model.

Why is F16 missing? The data we have doesn't show performance for the F16 format. One likely reason is memory: 70 billion parameters at 16 bits each come to roughly 140 GB of weights, far more than this card's 48 GB, so the model cannot be held on a single GPU in that format. The absence of data doesn't mean the F16 format is unusable in general; it simply wasn't measured on this device.
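A back-of-the-envelope estimate helps explain why F16 may be impractical here while Q4_K_M is not. The sketch below counts the memory needed for the weights alone. Two assumptions are baked in: the parameter count is taken as a nominal 70 billion, and Q4_K_M is assumed to average about 4.85 bits per weight, which is an approximation rather than a figure from the benchmark data.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


VRAM_GB = 48        # memory on the RTX 6000 Ada
N_PARAMS = 70e9     # nominal "70B"; the exact count differs slightly

f16_gb = weight_memory_gb(N_PARAMS, 16)
q4_gb = weight_memory_gb(N_PARAMS, 4.85)   # assumed average for Q4_K_M

for name, gb in [("F16", f16_gb), ("Q4_K_M", q4_gb)]:
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

The estimate ignores the context cache and other runtime buffers, so even a format that "fits" on paper leaves limited headroom on a 48 GB card.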

Token Generation Speed Benchmarks: Comparing with Other Models

While Llama3 70B is a behemoth, it's not the only LLM in town. Let's compare its performance to other models on the same device:

| Model & Quantization | Tokens Per Second |
|---|---|
| Llama3 8B Q4_K_M | 130.99 |
| Llama3 8B F16 | 51.97 |

As you can see, the smaller Llama3 8B model is faster than the 70B model in both the Q4_K_M and F16 formats. Even unquantized at F16, it generates 51.97 tokens per second, against 18.36 for the quantized 70B model. This is expected, as smaller models have a reduced computational burden, resulting in faster token generation.

Think of it this way: a small car can zip around city streets faster than a massive truck. While the truck can carry more weight, it sacrifices agility. Similarly, smaller LLMs often outperform larger ones in speed.

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Performance Across the Board

To get a better understanding of the Llama3 70B model's performance, let's put all the results for this device side by side:

| Device | Model & Quantization | Tokens Per Second |
|---|---|---|
| RTX 6000 Ada 48GB | Llama3 70B Q4_K_M | 18.36 |
| RTX 6000 Ada 48GB | Llama3 8B Q4_K_M | 130.99 |
| RTX 6000 Ada 48GB | Llama3 8B F16 | 51.97 |

Note: We are focusing on the RTX 6000 Ada 48GB for this analysis, and we don't have data for Llama3 70B in the F16 format.

Analyzing the Numbers: What do these results tell us?

- Bigger Models, Slower Speed: The Llama3 70B model generates tokens significantly slower than the smaller Llama3 8B model. This is because larger models have many more parameters to process.

- Quantization Matters: The Llama3 8B model with Q4_K_M quantization generates tokens about 2.5 times faster than the same model in the F16 format. This highlights the importance of choosing the right format for your specific needs.
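The ratios behind these two observations can be checked directly from the table. The short sketch below uses only the three measured figures; the dictionary layout and the `speedup` helper are just one convenient way to organize them.

```python
# Tokens per second from the table above (RTX 6000 Ada 48GB).
results = {
    ("Llama3 70B", "Q4_K_M"): 18.36,
    ("Llama3 8B", "Q4_K_M"): 130.99,
    ("Llama3 8B", "F16"): 51.97,
}


def speedup(fast, slow):
    """How many times faster the first configuration is than the second."""
    return results[fast] / results[slow]


size_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 70B", "Q4_K_M"))
quant_ratio = speedup(("Llama3 8B", "Q4_K_M"), ("Llama3 8B", "F16"))

print(f"8B vs 70B, both Q4_K_M: {size_ratio:.2f}x")   # 7.13x
print(f"8B Q4_K_M vs 8B F16:    {quant_ratio:.2f}x")  # 2.52x
```

So at the same quantization level the 8B model is roughly seven times faster than the 70B model, and quantizing the 8B model to Q4_K_M gives about a 2.5x gain over F16.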

Practical Recommendations: Use Cases and Workarounds

Leveraging the Power of Llama3 70B: Finding the Right Fit

Despite its slower token generation speed, the Llama3 70B model shines in applications requiring advanced knowledge and nuanced responses. Think of it as a scholar who can provide in-depth analysis but takes a little longer to formulate their thoughts.

Here are a few use cases where this model can be extremely valuable:

- Complex question answering: Need answers to intricate questions that require deep understanding? Llama3 70B has the potential to provide more comprehensive and insightful responses.

- Creative writing: Use Llama3 70B to generate sophisticated text for novels, poems, or even screenplays. Its advanced linguistic capabilities open up new possibilities.

- Code generation: Leverage the model's understanding of programming languages to generate complex code snippets or assist with debugging.

Workarounds for Speed: Optimizing for Performance

If you find the token generation speed of Llama3 70B to be a bottleneck, consider these workarounds:

- Try a smaller model: If speed is paramount, explore smaller models like Llama3 8B, which offer comparable accuracy in some use cases.

- Optimize your hardware: Upgrade to a more powerful GPU, especially one with more memory. The RTX 6000 Ada 48GB is a solid choice, but even more powerful GPUs are available.

- Experiment with different quantization formats: Test formats like Q4_K_M, Q8_0, or F16 to find the right balance between model size, performance, and accuracy.

FAQ: Your Burning Questions Answered

Q: How do I install and run Llama3 70B on my NVIDIA RTX 6000 Ada 48GB?

A: There are several open-source projects that allow you to run Llama3 70B locally, including:

- llama.cpp: https://github.com/ggerganov/llama.cpp This project provides a C++ implementation for running LLMs on various devices.

- GPTQ: https://github.com/IST-DASLab/GPTQ GPTQ is another open-source library that allows you to quantize and run large language models.

Instructions for installation and running vary depending on the project you choose. Refer to the project documentation for detailed guides.
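As a starting point, here is a hedged sketch that assembles a llama.cpp command line from Python without executing it. It assumes a recent llama.cpp build whose command-line binary is named `llama-cli` and accepts `-m` (model file), `-ngl` (layers to offload to the GPU), `-n` (tokens to generate), and `-p` (prompt). The model path is a hypothetical placeholder, so check `llama-cli --help` for your build before relying on any of this.

```python
import shlex


def build_llama_cli_command(model_path, prompt, n_predict=128, n_gpu_layers=99):
    """Assemble (but do not run) a llama.cpp command line as a list of args."""
    return [
        "llama-cli",                 # assumed binary name in recent builds
        "-m", model_path,            # path to a GGUF model file
        "-ngl", str(n_gpu_layers),   # a large value offloads every layer
        "-n", str(n_predict),        # number of tokens to generate
        "-p", prompt,
    ]


# Hypothetical file name; use the path of the model you downloaded.
cmd = build_llama_cli_command(
    "models/llama3-70b-q4_k_m.gguf",
    "Explain quantization in one sentence.",
)
print(shlex.join(cmd))
```

Once the printed command looks right for your setup, you can paste it into a terminal or pass the `cmd` list to `subprocess.run`.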

Q: What are the limitations of running LLMs locally?

A: While running LLMs locally offers advantages, it's essential to acknowledge limitations:

- Computational resources: LLMs, especially large models, require significant computational resources. You might need a powerful GPU and sufficient memory to run them effectively.

- Model size: Downloading, storing, and loading large models can take time and storage space.

- Development effort: Setting up and optimizing LLMs locally can be a technical challenge, requiring some programming knowledge.

Q: Are there alternatives to local inference?

A: Yes, you can also use cloud-based services for LLM inference:

- Google AI Platform: https://cloud.google.com/ai-platform

- Amazon SageMaker: https://aws.amazon.com/sagemaker/

- Microsoft Azure Machine Learning: https://azure.microsoft.com/en-us/services/machine-learning/

These services offer pre-trained LLMs and tools to train and deploy your own models.
