From Installation to Inference: Running Llama3 70B on NVIDIA RTX 4000 Ada 20GB

Introduction: Unleashing the Power of Local LLMs

The world of Large Language Models (LLMs) is rapidly evolving, offering unprecedented capabilities in natural language processing. From generating creative text to translating languages and answering complex questions, LLMs are transforming various industries. But the sheer size of these models often necessitates powerful hardware and specialized infrastructure, limiting their widespread adoption.

This article looks at the practicalities of running the Llama3 70B model on a single NVIDIA RTX4000Ada_20GB graphics card. We'll review the token generation speed benchmarks that are available for this GPU, point out where data for Llama3 70B is missing, and offer practical recommendations for working within the card's limits.

Let's dive into the fascinating world of local LLM deployment, where the power of AI meets the practicality of your personal hardware.

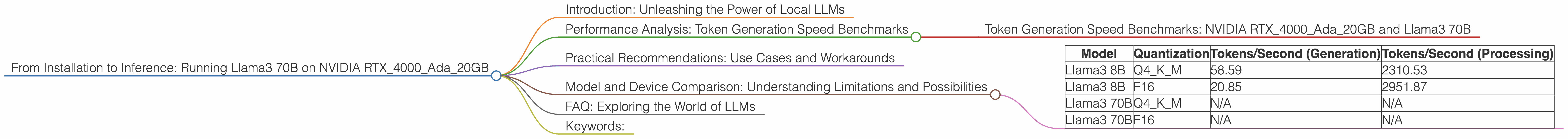

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: NVIDIA RTX4000Ada_20GB and Llama3 70B

Token generation speed is a crucial metric for evaluating the performance of an LLM. It measures how many new tokens the model can produce per second based on the input text. The benchmark data we draw on covers two quantization levels, Q4_K_M and F16. However, it contains no results for Llama3 70B on the NVIDIA RTX4000Ada_20GB GPU at either level, so we can't provide token generation speed benchmarks for this specific configuration.

Important note: This absence of data is a common challenge in the rapidly developing world of LLMs, especially when dealing with larger models. It highlights the need for more comprehensive benchmarking resources and community collaboration.
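One likely reason for the gap is memory. The sketch below is a back-of-the-envelope estimate, not a measurement: it multiplies parameter count by bits per weight to approximate the size of the weights alone. The 4.85 bits-per-weight figure for Q4_K_M and the 1.5 GB overhead allowance are assumptions chosen for illustration, and real usage also depends on context length and runtime.

```python
# Rough estimate of weight memory at a given quantization level.
# Bits-per-weight values are approximations (assumptions), not measurements.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average; K-quants mix several bit widths
}


def weight_memory_gb(n_params_billion: float, quant: str) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * BITS_PER_WEIGHT[quant] / 8


def fits_in_vram(n_params_billion: float, quant: str,
                 vram_gb: float, overhead_gb: float = 1.5) -> bool:
    """True if weights plus an assumed fixed overhead fit in VRAM."""
    return weight_memory_gb(n_params_billion, quant) + overhead_gb <= vram_gb


VRAM_GB = 20.0  # RTX4000Ada_20GB
for name, size_b in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for quant in ("Q4_K_M", "F16"):
        need = weight_memory_gb(size_b, quant)
        ok = fits_in_vram(size_b, quant, VRAM_GB)
        print(f"{name} {quant}: ~{need:.1f} GB of weights, fits in 20 GB: {ok}")
```

Under these assumptions, both Llama3 8B variants fit on the card while neither Llama3 70B variant does, which would be consistent with the missing benchmark entries.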

Practical Recommendations: Use Cases and Workarounds

1. Model Agnosticism: Consider alternative models that have already been benchmarked on the NVIDIA RTX4000Ada_20GB GPU, such as Llama3 8B (see Table 1). The LLM research and developer community is a good source of recommendations and open-source projects.

2. Quantization for Size: Quantization is a technique that reduces the size of an LLM by using fewer bits to represent each of the model's weights. Let's break this down for non-technical folks: imagine your LLM is a giant recipe book in which every ingredient has a precisely measured amount (like 1.37 tablespoons of sugar). Quantization simplifies the recipe by rounding each amount to a coarser set of measures (like half-tablespoons or full tablespoons), which makes the book smaller to store and quicker to cook from, at the cost of a little precision. For an LLM, that means a smaller memory footprint and often faster token generation on less powerful hardware, usually with a small loss in output quality.

3. Cloud for Scale: For demanding LLM tasks like generating long, complex, or highly creative text, consider deploying your LLM to the cloud. Cloud platforms offer powerful hardware and pre-configured environments optimized for running LLMs, ensuring a smooth and efficient experience. This is like ordering a delicious dish from a gourmet restaurant instead of struggling to replicate it in your own kitchen (your local hardware).
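To make the quantization idea from recommendation 2 concrete, here is a minimal sketch of symmetric uniform quantization in plain Python. It is a simplified illustration only: formats such as Q4_K_M use block-wise scales and a more elaborate layout, and the function names and sample weights here are hypothetical.

```python
def quantize(weights, bits=4):
    """Symmetric uniform quantization: map floats to small signed integers."""
    qmax = 2 ** (bits - 1) - 1              # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest step and clamp to the allowed range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
q, scale = quantize(weights, bits=4)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print("scale:", scale)
print("largest rounding error:", max_err)
```

Each weight is stored as a 4-bit integer plus one shared scale, instead of a 16-bit float. The rounding error is the precision given up in exchange for the smaller size.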

Model and Device Comparison: Understanding Limitations and Possibilities

The absence of data for Llama3 70B on the NVIDIA RTX4000Ada_20GB GPU underscores the limitations of current benchmarking resources. What we can compare is the smaller Llama3 8B model, which has been benchmarked on this GPU at both quantization levels.

Table 1: Performance Data for NVIDIA RTX4000Ada_20GB

| Model | Quantization | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 58.59 | 2310.53 |
| Llama3 8B | F16 | 20.85 | 2951.87 |
| Llama3 70B | Q4_K_M | N/A | N/A |
| Llama3 70B | F16 | N/A | N/A |
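To put the Llama3 8B figures from Table 1 in practical terms, the sketch below estimates how long a single response would take at each quantization level. It assumes that the "Processing" column refers to prompt processing speed, and the workload (a 1000-token prompt with a 500-token reply) is hypothetical.

```python
# Figures copied from Table 1 (Llama3 8B on the RTX4000Ada_20GB), in tokens/second.
TABLE_1 = {
    "Q4_K_M": {"generation": 58.59, "processing": 2310.53},
    "F16": {"generation": 20.85, "processing": 2951.87},
}


def response_time_s(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated seconds to read the prompt and then generate the output."""
    speeds = TABLE_1[quant]
    prompt_time = prompt_tokens / speeds["processing"]
    generation_time = output_tokens / speeds["generation"]
    return prompt_time + generation_time


# Hypothetical workload: 1000-token prompt, 500-token reply.
for quant in TABLE_1:
    seconds = response_time_s(quant, prompt_tokens=1000, output_tokens=500)
    print(f"Llama3 8B {quant}: ~{seconds:.1f} s per response")
```

For a workload like this, generation time dominates the total, so the faster generation speed at the lower-precision setting matters more than the faster processing speed at F16.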

Observations:

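- As a rule of thumb, smaller models like Llama3 8B are more likely to perform well on GPUs like the RTX4000Ada_20GB. In Table 1, Llama3 8B generates 58.59 tokens/second at Q4_K_M versus 20.85 at F16, roughly 2.8 times faster, while F16 is ahead on processing speed (2951.87 versus 2310.53 tokens/second).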

- The absence of data for Llama3 70B leaves questions unanswered, and it points to the need for more comprehensive performance evaluation.

- The journey from installation to inference is often a complex one, requiring a blend of technical expertise, community support, and a touch of experimentation.

FAQ: Exploring the World of LLMs

Q: What is quantization, and why is it relevant for LLMs?

A: Quantization is a technique that reduces the size of an LLM by using fewer bits to represent each of the model's weights. Think of it as simplifying a recipe by measuring every ingredient with a coarser spoon: nothing is left out, but each amount is rounded. This makes the model more compact and potentially faster to run on less powerful hardware, at the cost of some precision.

Q: Can I run LLMs on my laptop or home computer?

A: Depending on the size of the model and your hardware, it might be possible. However, larger models often demand powerful GPUs and a significant amount of RAM. It's wise to consult online resources and forums for detailed information about specific models and hardware configurations.

Q: Are there any open-source tools for running LLMs locally?

A: Yes, there are several open-source projects available. A popular one is llama.cpp, which allows you to run LLMs on various hardware configurations. The active community of LLM enthusiasts is a rich source of information and support.
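As a rough illustration of what running a model with llama.cpp can look like, the sketch below assembles a command line in Python. It assumes a recent llama.cpp build whose command-line binary is named `llama-cli`, and the model path is hypothetical. Check the flags against the help output of your own build before relying on them.

```python
import shlex


def build_llama_cli_command(model_path, prompt, n_predict=128, n_gpu_layers=0):
    """Assemble an argument list for llama.cpp's command-line tool."""
    return [
        "llama-cli",                 # assumed binary name (recent builds)
        "-m", model_path,            # path to a GGUF model file
        "-p", prompt,                # prompt text
        "-n", str(n_predict),        # number of tokens to generate
        "-ngl", str(n_gpu_layers),   # number of layers to offload to the GPU
    ]


cmd = build_llama_cli_command(
    model_path="models/llama3-8b-q4_k_m.gguf",  # hypothetical path
    prompt="Explain quantization in one sentence.",
    n_predict=64,
    n_gpu_layers=99,  # a value larger than the layer count offloads everything
)

# Print a shell-safe version of the command for inspection.
print(shlex.join(cmd))

# To actually run it (requires llama.cpp and the model file):
# import subprocess
# subprocess.run(cmd, check=True)
```

Building the arguments as a list keeps quoting issues out of the picture. The GPU layer count is the main knob to adjust when a model does not fit entirely in VRAM.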

Keywords:

Llama3 70B, NVIDIA RTX4000Ada_20GB, GPU, LLM, Large Language Model, Token Generation Speed, Quantization, Performance, Benchmarks, AI, Natural Language Processing, Deployment, Local, Cloud, Recommendations, Workarounds, Model Agnosticism, Open-Source