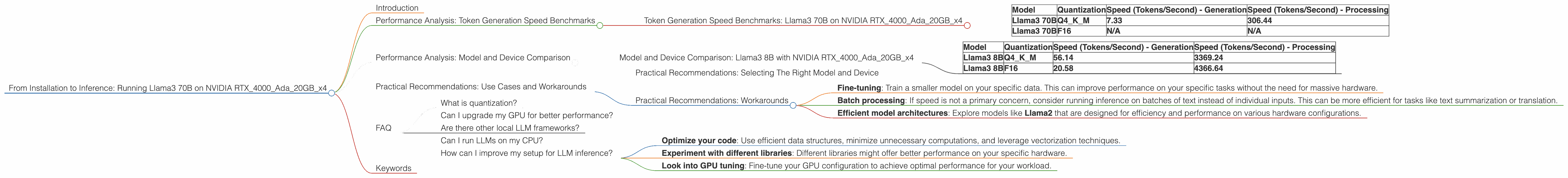

From Installation to Inference: Running Llama3 70B on NVIDIA RTX 4000 Ada 20GB x4

Introduction

The world of large language models (LLMs) is evolving quickly, with new models and techniques appearing constantly. But what about running these LLMs locally? Can a workstation handle a 70-billion-parameter model like Llama3 70B? This article examines the performance of Llama3 70B on the NVIDIA RTX4000Ada20GBx4 configuration: four NVIDIA RTX 4000 Ada cards with 20 GB of memory each, a GPU aimed at professional workstations rather than gaming PCs. We'll look at token generation speed, explain how quantization affects the results, and offer practical recommendations for common use cases.

Performance Analysis: Token Generation Speed Benchmarks

The speed at which a model generates tokens is crucial, especially for applications like chatbots, code completion, and text generation. We're looking at two key factors:

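- Quantization: This compresses the model's weights by storing them with fewer bits. We compare Q4KM (the format llama.cpp calls Q4_K_M: a 4-bit "k-quant" in its medium variant, which stores weights in small blocks with shared scale factors) and F16 (unquantized 16-bit floating point). Q4KM trades a small amount of accuracy for a much smaller memory footprint and faster generation.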

- Generation vs. Processing: "Processing" is the speed at which the model reads the input prompt, and "Generation" is the speed at which it produces new output tokens. The prompt can be processed many tokens at a time, while output is produced one token after another, so the two speeds can differ widely.
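
To make the memory side of quantization concrete, here is a rough back-of-the-envelope sketch. It estimates the size of the weights alone and ignores the KV cache and runtime overhead. The figure of about 4.8 bits per weight for Q4KM is an assumption (the real average varies by model), and `weight_memory_gb` is a hypothetical helper, not part of any library.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

# Assumptions: 70e9 parameters for "70B", 16 bits per weight for F16, and
# roughly 4.8 bits per weight for Q4KM (an approximation, not a measured value).
PARAMS_70B = 70e9
f16_gb = weight_memory_gb(PARAMS_70B, 16)
q4_gb = weight_memory_gb(PARAMS_70B, 4.8)
total_vram_gb = 4 * 20  # four cards with 20 GB each

print(f"F16 weights:  ~{f16_gb:.0f} GB, fits in {total_vram_gb} GB: {f16_gb < total_vram_gb}")
print(f"Q4KM weights: ~{q4_gb:.0f} GB, fits in {total_vram_gb} GB: {q4_gb < total_vram_gb}")
```

Under these assumptions the F16 weights come to about 140 GB and the Q4KM weights to about 42 GB, against 80 GB of total GPU memory.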

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA RTX4000Ada20GBx4

| Model | Quantization | Generation speed (tokens/second) | Processing speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | Q4KM | 7.33 | 306.44 |
| Llama3 70B | F16 | N/A | N/A |

Observations:

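- F16 was not measured: The F16 row is N/A, so this table cannot show a direct Q4KM versus F16 comparison for the 70B model. A likely reason is memory: 70 billion 16-bit weights take about 140 GB, well beyond the 80 GB available across four 20 GB cards. Q4KM is what makes running the model on this setup possible, at a small cost in accuracy.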

- Processing is much quicker: Prompt processing (306.44 tokens/second) is roughly 40 times faster than generation (7.33 tokens/second) for Llama3 70B with Q4KM. The prompt can be handled in parallel, while each output token has to wait for the previous one, so generating text is the real bottleneck with large models.
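
To see what these two speeds mean for a single request, the sketch below turns them into an estimated response time. The request size (a 1,000-token prompt and a 250-token answer) is a made-up example, and `response_time_s` is a hypothetical helper. It also assumes the speeds stay constant regardless of context length, which is a simplification.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read the prompt and then generate the reply."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Measured Llama3 70B Q4KM speeds from the table above (tokens/second).
PROCESSING_TPS = 306.44
GENERATION_TPS = 7.33

# Hypothetical request: 1,000-token prompt, 250-token answer.
prompt_s = 1000 / PROCESSING_TPS
total_s = response_time_s(1000, 250, PROCESSING_TPS, GENERATION_TPS)
print(f"prompt: {prompt_s:.1f} s, total: {total_s:.1f} s")
```

For this example, reading the prompt takes a little over 3 seconds while producing the answer takes about 34 seconds, so nearly all of the wait is generation.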

Performance Analysis: Model and Device Comparison

How does Llama3 70B on the NVIDIA RTX4000Ada20GBx4 compare to a smaller model on the same hardware? The figures below for Llama3 8B come from our JSON file of benchmark results.

Model and Device Comparison: Llama3 8B with NVIDIA RTX4000Ada20GBx4

| Model | Quantization | Generation speed (tokens/second) | Processing speed (tokens/second) |
|---|---|---|---|
| Llama3 8B | Q4KM | 56.14 | 3369.24 |
| Llama3 8B | F16 | 20.58 | 4366.64 |

Observations:

- Smaller model, faster generation: Llama3 8B, even at unquantized F16 precision, generates tokens almost three times faster than Llama3 70B with Q4KM (20.58 versus 7.33 tokens/second). With Q4KM it is more than seven times faster (56.14 tokens/second). This makes sense: a smaller model has fewer weights to read for every token it generates. Think of it like a compact car versus a large SUV; the smaller car can navigate tight spaces more quickly.
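
The ratios can be checked directly from the two tables. This is a small sketch using only the measured generation speeds; the dictionary layout is just one convenient way to hold them.

```python
# Generation speeds (tokens/second) copied from the two tables above.
generation_tps = {
    ("Llama3 70B", "Q4KM"): 7.33,
    ("Llama3 8B", "Q4KM"): 56.14,
    ("Llama3 8B", "F16"): 20.58,
}

# Express every configuration relative to the 70B Q4KM result.
baseline = generation_tps[("Llama3 70B", "Q4KM")]
speedups = {key: tps / baseline for key, tps in generation_tps.items()}

for (model, fmt), ratio in speedups.items():
    print(f"{model} {fmt}: {ratio:.1f}x the 70B Q4KM generation speed")
```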

Practical Recommendations: Use Cases and Workarounds

Practical Recommendations: Selecting The Right Model and Device

Let's break down some practical scenarios and how to choose the right setup based on your needs:

Case 1: Speed is paramount: If you need a real-time chatbot or code completion tool, consider the Llama3 8B model with Q4KM quantization on the NVIDIA RTX4000Ada20GBx4.

Case 2: Accuracy is crucial: For text generation tasks where output quality matters most, Llama3 70B is the better choice. At F16 precision it needs considerably more GPU memory than this setup provides (no F16 result was recorded for it here), so on the RTX4000Ada20GBx4 the practical option is Llama3 70B with Q4KM, at about 7 tokens/second.

Case 3: Limited hardware resources: If your machine can't handle a 70B model, consider a smaller model (like Llama3 8B or Llama2 7B) or a more compact quantization (like Q4KM). Cloud-based solutions that offload the heavy lifting to powerful servers are another option.
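
The three cases can be roughly sketched as a lookup over the measured results. Everything here is illustrative: `Benchmark` and `pick_setup` are hypothetical names, and the rule "prefer the largest model that is fast enough, then the faster format" is an assumption that uses parameter count as a stand-in for output quality.

```python
from typing import NamedTuple, Optional

class Benchmark(NamedTuple):
    model: str
    fmt: str
    params_b: float        # parameter count in billions
    generation_tps: float  # measured generation speed, tokens/second

# Measured rows from this article (the unmeasured 70B F16 row is omitted).
BENCHMARKS = [
    Benchmark("Llama3 70B", "Q4KM", 70, 7.33),
    Benchmark("Llama3 8B", "Q4KM", 8, 56.14),
    Benchmark("Llama3 8B", "F16", 8, 20.58),
]

def pick_setup(min_tps: float) -> Optional[Benchmark]:
    """Return the largest model that reaches min_tps, breaking ties by speed."""
    candidates = [b for b in BENCHMARKS if b.generation_tps >= min_tps]
    if not candidates:
        return None  # nothing measured here is fast enough
    return max(candidates, key=lambda b: (b.params_b, b.generation_tps))

print(pick_setup(5))    # a slow but acceptable target keeps the 70B model
print(pick_setup(30))   # a real-time target falls back to the 8B model
```

A `None` result corresponds to the situation in Case 3, where other hardware or a cloud service is worth considering.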

Practical Recommendations: Workarounds

- Fine-tuning: Train a smaller model on your specific data. This can improve performance on your specific tasks without the need for massive hardware.

- Batch processing: If per-request latency is not a primary concern, run inference on batches of inputs rather than one input at a time. This usually raises overall throughput for tasks like text summarization or translation.

- Efficient model architectures: Explore models like Llama2 that are designed for efficiency and performance on various hardware configurations.
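
As a minimal sketch of the batch-processing idea, the helper below groups inputs into fixed-size batches and hands each batch to an inference function. `infer_batch` is a hypothetical placeholder for whatever batched call your inference library provides; the lambda in the usage lines only stands in for a model.

```python
from typing import Callable, Iterable, Iterator, List

def batched(items: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive lists of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # final, possibly smaller batch
        yield batch

def run_in_batches(texts: Iterable[str], batch_size: int,
                   infer_batch: Callable[[List[str]], List[str]]) -> List[str]:
    """Run infer_batch over each batch and collect results in input order."""
    results: List[str] = []
    for batch in batched(texts, batch_size):
        results.extend(infer_batch(batch))
    return results

# Usage with a stand-in "model" that just upper-cases its inputs.
docs = ["first", "second", "third", "fourth", "fifth"]
outputs = run_in_batches(docs, 2, lambda batch: [t.upper() for t in batch])
```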

FAQ

What is quantization?

Quantization is a technique used to reduce the memory footprint and computational requirements of LLMs. Think of it like compressing a large file. It stores each weight with fewer bits, which makes the model smaller and usually faster to run, at the cost of some accuracy.
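
The toy example below shows the basic idea on five numbers. It uses a simple "absmax" scheme with one shared scale and integer codes from -7 to 7. This is only an illustration of the principle and is not the algorithm behind Q4KM, which is more elaborate. The function names and sample weights are made up.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] using one shared scale (absmax)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [round(w / scale) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]

weights = [0.70, -0.33, 0.12, 0.0, -0.61]   # made-up sample weights
codes, scale = quantize_4bit(weights)        # codes: [7, -3, 1, 0, -6]
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

Each weight now needs only a small integer plus a share of the common scale, and the rounding error is the accuracy that quantization gives up.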

Can I upgrade my GPU for better performance?

Absolutely! A more powerful GPU can significantly improve LLM performance. Check out the latest NVIDIA or AMD cards, and make sure the card you choose has enough memory to hold your desired model.

Are there other local LLM frameworks?

Yes! Popular options include Hugging Face's Transformers library, GPT-NeoX, and other libraries based on PyTorch or TensorFlow. Explore the options and choose the one that best suits your needs.

Can I run LLMs on my CPU?

While possible, LLMs are designed to excel on GPUs. CPU-based inference is typically very slow for large models.

How can I improve my setup for LLM inference?

- Optimize your code: Use efficient data structures, minimize unnecessary computations, and leverage vectorization techniques.

- Experiment with different libraries: Different libraries might offer better performance on your specific hardware.

- Look into GPU tuning: Fine-tune your GPU configuration to achieve optimal performance for your workload.
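
Whichever of these you try, it helps to measure tokens per second before and after a change. The sketch below times a single call; `measure_tps` is a hypothetical helper, and `fake_generate` is a stand-in that only sleeps, which you would replace with a call into your own inference library that returns the number of tokens produced.

```python
import time
from typing import Callable

def measure_tps(generate: Callable[[int], int], n_tokens: int) -> float:
    """Time one call to generate(n_tokens) and return tokens per second."""
    start = time.perf_counter()
    produced = generate(n_tokens)   # must return the number of tokens produced
    elapsed = time.perf_counter() - start
    return produced / elapsed

def fake_generate(n_tokens: int) -> int:
    """Stand-in for a real model call: sleeps about 1 ms per token."""
    time.sleep(0.001 * n_tokens)
    return n_tokens

tps = measure_tps(fake_generate, 50)
print(f"{tps:.0f} tokens/second")
```

For real measurements, a warm-up call and an average over several runs would give steadier numbers than a single timing.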

Keywords

LLM, Llama3, NVIDIA, RTX 4000 Ada, GPU, GPU memory, token generation, token speed, inference, performance, quantization, Q4KM, F16, model size, use cases, practical recommendations, hardware requirements.