From Installation to Inference: Running Llama3 70B on NVIDIA L40S 48GB

Introduction

Large language models (LLMs) are evolving rapidly, with new models and applications appearing all the time. One of the most notable releases in recent months is Llama 3, a family of LLMs developed by Meta. This article looks at the practicalities of running Llama3 70B, the 70-billion-parameter variant, on the NVIDIA L40S_48GB, a 48 GB GPU designed for AI workloads. We'll explore the nuts and bolts of installation, performance benchmarks, and practical considerations for developers who want to use this combination in their projects.

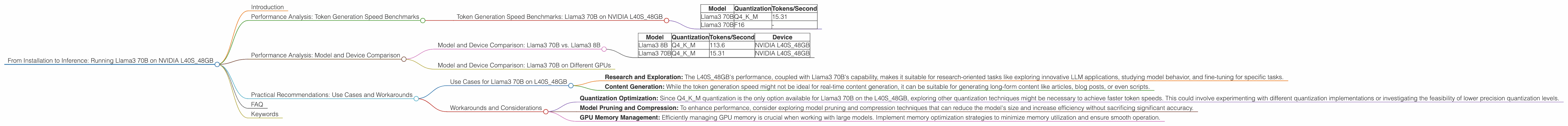

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed is a crucial benchmark for LLMs. It measures how many tokens per second the model produces while generating new text. Let's examine the performance of Llama3 70B running on the NVIDIA L40S_48GB at the precisions for which benchmark data is available.
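For readers who want to reproduce this kind of measurement, here is a minimal sketch of how tokens per second can be timed. It is not the harness that produced the figures in this article. `fake_generate` is a hypothetical stand-in for whatever streaming API your inference library exposes, and the sketch assumes that API yields one item per generated token.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return the number of tokens produced per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # drain the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a real model: yields one token per delay_s."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

With a real model, the measured rate also depends on prompt length, batch size, and sampling settings, so those should be kept fixed when comparing configurations.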

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA L40S_48GB

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama3 70B | Q4_K_M | 15.31 |
| Llama3 70B | F16 | - |

As the table shows, Llama3 70B running on the L40S_48GB with Q4_K_M quantization generates 15.31 tokens per second. This is a good starting point for many applications, especially considering the sheer size of the model.

Key Observations:

- Quantization's impact: Q4_K_M, which stores weights at roughly 4 to 5 bits each to shrink the model's memory footprint, is the only configuration benchmarked for Llama3 70B on the L40S_48GB. This shows how much quantization helps in memory-constrained environments.

- The F16 gap: There is no result for F16, which is unquantized 16-bit precision rather than a quantization level. At 2 bytes per parameter, 70 billion weights need about 140 GB, well beyond the card's 48 GB, which most likely explains the missing data.
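The memory argument behind these observations can be checked with a few lines of arithmetic. The sketch below is a rough estimate only: the 70e9 parameter count is the nominal figure from the model name, and the 4.8 bits per weight used for Q4_K_M is an assumed average, not a value taken from the benchmark data.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes), ignoring KV cache and runtime overhead."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 70e9  # nominal parameter count from the model name
VRAM_GB = 48     # memory capacity of the L40S_48GB

# Assumed average bits per weight; the Q4_K_M figure is an approximation.
for name, bits in [("F16", 16.0), ("Q4_K_M", 4.8)]:
    size = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{name}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions the quantized weights leave only a few gigabytes of headroom for the KV cache and runtime buffers, so context length has to be chosen with care.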

Performance Analysis: Model and Device Comparison

To put the Llama3 70B result on the L40S_48GB in context, let's compare it with a smaller Llama3 model on the same GPU and with Llama3 70B on other GPUs.

Model and Device Comparison: Llama3 70B vs. Llama3 8B

| Model | Quantization | Tokens/Second | Device |
|---|---|---|---|
| Llama3 8B | Q4_K_M | 113.6 | NVIDIA L40S_48GB |
| Llama3 70B | Q4_K_M | 15.31 | NVIDIA L40S_48GB |

Comparing Llama3 8B and Llama3 70B, both running on the L40S_48GB, the larger model generates tokens about 7.4 times more slowly (15.31 versus 113.6 tokens per second). This is expected: every generated token requires a pass over all of the model's weights, so a model with more parameters needs more computation and more memory traffic per token.
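A quick calculation makes the comparison concrete. The throughput numbers come from the table above; the parameter counts are the nominal 8 and 70 billion from the model names, which is an approximation.

```python
# Throughput figures from the comparison table (tokens/second, Q4_K_M, L40S_48GB).
TPS_8B = 113.6
TPS_70B = 15.31

slowdown = TPS_8B / TPS_70B  # how many times slower the 70B model generates
param_ratio = 70 / 8         # nominal parameter counts from the model names

print(f"slowdown: {slowdown:.2f}x, parameter ratio: {param_ratio:.2f}x")
```

The slowdown is somewhat smaller than the parameter ratio, which suggests that generation speed scales roughly, though not exactly, with model size on this GPU.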

Model and Device Comparison: Llama3 70B on Different GPUs

Unfortunately, we lack sufficient benchmark data for Llama3 70B on other GPUs. This emphasizes the ongoing need for comprehensive performance comparisons across various hardware to guide developers in selecting the most suitable setup for their projects.

Practical Recommendations: Use Cases and Workarounds

Running a massive LLM like Llama3 70B on the L40S_48GB presents unique challenges and opportunities. Here are practical recommendations based on the available data and real-world considerations:

Use Cases for Llama3 70B on L40S_48GB

- Research and Exploration: The L40S_48GB's performance, coupled with Llama3 70B's capability, makes it suitable for research-oriented tasks like exploring innovative LLM applications, studying model behavior, and fine-tuning for specific tasks.

- Content Generation: While the token generation speed might not be ideal for real-time content generation, it can be suitable for generating long-form content like articles, blog posts, or even scripts.
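To judge whether the measured speed suits a given content task, it helps to translate tokens per second into wall-clock time. The sketch below uses the 15.31 tokens/second figure from the benchmark; the token counts per content type are hypothetical round numbers chosen for illustration.

```python
def generation_time_s(n_tokens: int, tokens_per_second: float = 15.31) -> float:
    """Seconds needed to generate n_tokens at a steady rate."""
    return n_tokens / tokens_per_second


# Hypothetical output lengths, in tokens.
for label, n_tokens in [("short reply", 150), ("blog post", 1500), ("long article", 4000)]:
    seconds = generation_time_s(n_tokens)
    print(f"{label}: {n_tokens} tokens in ~{seconds:.0f} s")
```

At this rate a 1,500-token draft takes about 98 seconds, which is acceptable for batch or offline generation but noticeable in an interactive setting.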

Workarounds and Considerations

- Quantization Optimization: Since Q4_K_M is the only configuration with benchmark data for Llama3 70B on the L40S_48GB, exploring other quantization techniques might be necessary to achieve faster token speeds. This could involve experimenting with different quantization implementations or investigating the feasibility of lower-precision quantization levels, at some cost in output quality.

- Model Pruning and Compression: To enhance performance, consider exploring model pruning and compression techniques that can reduce the model's size and increase efficiency without sacrificing significant accuracy.

- GPU Memory Management: Efficiently managing GPU memory is crucial when working with large models. Implement memory optimization strategies to minimize memory utilization and ensure smooth operation.
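One concrete part of memory management is the key-value (KV) cache, which grows with context length and competes with the weights for the 48 GB. The sketch below estimates its size. The architecture values (80 layers, 8 KV heads, head dimension 128) are assumptions about Llama3 70B's published configuration and are not taken from the benchmark data; a 16-bit cache is also assumed.

```python
def kv_cache_bytes(
    n_tokens: int,
    n_layers: int = 80,         # assumed Llama3 70B depth
    n_kv_heads: int = 8,        # assumed number of grouped-query KV heads
    head_dim: int = 128,        # assumed per-head dimension
    bytes_per_value: int = 2,   # assumed 16-bit cache entries
) -> int:
    """Bytes needed to cache keys and values for n_tokens of context."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * n_tokens


for context in (2048, 8192):
    gib = kv_cache_bytes(context) / 2**30
    print(f"context {context}: ~{gib:.2f} GiB of KV cache")
```

Under these assumptions each token of context costs 320 KiB, so an 8,192-token context needs about 2.5 GiB on top of the weights. Shortening the context is one straightforward way to stay within the card's memory.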

FAQ

Q: What is quantization and how does it work?

A: Quantization is a technique used to reduce the size and memory footprint of a model. It involves converting the model's parameters from high-precision floating-point numbers to lower-precision integer values. This process can significantly reduce the memory requirements and increase the runtime speed of the model, making it more suitable for deployment on resource-constrained devices.
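As an illustration of the idea, here is a minimal sketch of symmetric 4-bit quantization with a single shared scale. This is a simplified textbook scheme, not the Q4_K_M algorithm, which works on blocks of weights with more elaborate scaling. The function names and sample weights are made up for this example.

```python
def quantize_absmax(weights: list[float], bits: int = 4) -> tuple[list[int], float]:
    """Map floats to signed integers using one scale derived from the largest magnitude."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integers and the scale."""
    return [q * scale for q in quantized]


weights = [0.70, -0.31, 0.12, 0.0]  # made-up sample values
q, scale = quantize_absmax(weights)
restored = dequantize(q, scale)
print("integers:", q)
print("max error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight is stored as a small integer plus a shared scale, and the reconstruction error per weight is at most half a quantization step. That rounding error is the accuracy cost paid for the smaller memory footprint.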

Q: What is the role of the NVIDIA L40S_48GB in running Llama3 70B?

A: The NVIDIA L40S_48GB is a powerful GPU designed for AI workloads. Its 48GB of memory and its compute power are enough to run a large LLM such as Llama3 70B when the model is quantized, for example with Q4_K_M.

Q: How does the size of the model affect performance?

A: The size of an LLM, as measured by the number of parameters, directly affects its performance. Larger models typically require more computation and memory to process each token, resulting in slower generation speeds.

Q: What are some practical considerations for running Llama3 70B on the L40S_48GB?

A: Practical considerations include memory management, quantization optimization, and potential workarounds to overcome performance bottlenecks.

Keywords

LLM, Llama3, Llama3 70B, NVIDIA L40S_48GB, Token Generation Speed, Quantization, Q4_K_M, F16, Performance Benchmark, Model Pruning, Compression, GPU Memory Management, AI Workloads, Content Generation, Research and Exploration, Natural Language Processing.