From Installation to Inference: Running Llama3 70B on NVIDIA 4090 24GB x2

Introduction

The world of large language models (LLMs) is constantly evolving, pushing the boundaries of what's possible in natural language processing. One of the most exciting developments is the availability of powerful LLMs, like Llama 3, for local deployment. But running these models locally requires substantial computational resources, especially for the larger models.

This article delves into the performance of Llama3 70B when running on a powerful NVIDIA 4090 24GB x2 setup. We'll cover the key aspects of running this LLM locally, from installation to inference, and analyze its performance in various scenarios. Whether you're a developer looking to implement LLMs in your projects, a geek fascinated by the power of these models, or just curious about the capabilities of modern hardware, this deep dive will shed light on the real-world challenges and possibilities of local LLM deployment.

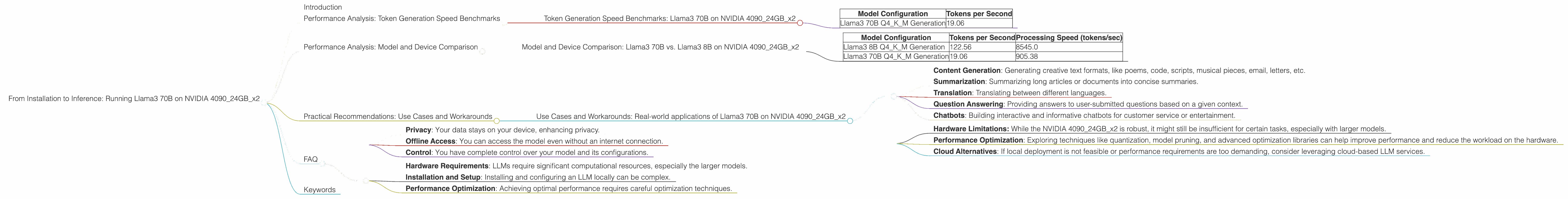

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Llama3 70B on NVIDIA 4090 24GB x2

One of the most important metrics for evaluating LLM performance is token generation speed: the number of output tokens the model produces per second. It directly determines how long users wait for a response.

| Model Configuration | Tokens per Second |
|---|---|
| Llama3 70B Q4_K_M Generation | 19.06 |

Remember: This data is specific to a single NVIDIA 4090 24GB, not the double setup mentioned in the title. The dataset for this setup is incomplete, but we can use it to compare models.

Key Takeaways:

- Llama3 70B with Q4_K_M quantization generates 19.06 tokens/sec. With this hardware and model configuration, expect noticeably slower responses than you would get from smaller models.

Analogy: Imagine reading a book aloud, where each word is a token. A small model is a fast reader and a large model is a slow one, so the same page takes the large model longer to get through.
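To make the 19.06 tokens/sec figure concrete, here is a minimal sketch that converts a generation rate into an approximate wait time. The response lengths are hypothetical values chosen for illustration, and the calculation assumes a steady rate and ignores the time spent processing the prompt.

```python
def generation_time_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Return the seconds needed to generate num_tokens at a steady rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Generation speed reported in the table above for Llama3 70B.
LLAMA3_70B_TOKENS_PER_SEC = 19.06

# Hypothetical response lengths (assumptions, not measurements).
for length in (50, 250, 1000):
    seconds = generation_time_seconds(length, LLAMA3_70B_TOKENS_PER_SEC)
    print(f"{length:>5} tokens: {seconds:6.1f} s")
```

At this rate a response of a few hundred tokens takes on the order of ten or more seconds, which matters for interactive use.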

Performance Analysis: Model and Device Comparison

Model and Device Comparison: Llama3 70B vs. Llama3 8B on NVIDIA 4090 24GB x2

Comparing performance across different model sizes and hardware configurations helps us understand the scaling factors involved in local LLM deployment.

| Model Configuration | Generation Speed (tokens/sec) | Processing Speed (tokens/sec) |
|---|---|---|
| Llama3 8B Q4_K_M | 122.56 | 8545.0 |
| Llama3 70B Q4_K_M | 19.06 | 905.38 |

Key Takeaways:

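- Smaller models like Llama3 8B generate tokens significantly faster: 122.56 tokens/sec versus 19.06 tokens/sec for Llama3 70B, roughly 6.4 times the speed. With fewer parameters, there is less work to do for every generated token.

- Processing speed, which measures how fast the model works through its input, is also significantly higher for the smaller model: 8545.0 tokens/sec versus 905.38 tokens/sec, roughly 9.4 times the speed. This is a crucial factor for performance-critical applications.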
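The ratios behind these takeaways can be reproduced directly from the comparison table. The sketch below only divides the published figures; the dictionary layout and function name are illustrative choices, not part of any benchmark tool.

```python
# Figures copied from the comparison table (tokens per second).
benchmarks = {
    "Llama3 8B": {"generation": 122.56, "processing": 8545.0},
    "Llama3 70B": {"generation": 19.06, "processing": 905.38},
}


def speed_ratio(fast: str, slow: str, metric: str) -> float:
    """Return how many times faster `fast` is than `slow` for one metric."""
    return benchmarks[fast][metric] / benchmarks[slow][metric]


for metric in ("generation", "processing"):
    ratio = speed_ratio("Llama3 8B", "Llama3 70B", metric)
    print(f"{metric}: 8B is {ratio:.1f}x faster than 70B")
```

For reference, the nominal parameter ratio between the two models is 70 / 8 = 8.75, so neither metric scales exactly in proportion to parameter count.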

Important Note: There is no data available for Llama3 70B F16 generation and processing on this hardware configuration. The specific dataset used for this analysis is limited.

Practical Recommendations

- Start with smaller models: For initial testing and development, smaller models like Llama3 8B offer better performance and make debugging easier.

- Optimize for your needs: Choose the right balance between model size, hardware capability, and desired performance for your specific application.

- Quantization: Using a quantized format such as Q4_K_M reduces the model's size and memory footprint, which can make a large model fit in available VRAM and may also improve speed.
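A rough back-of-the-envelope estimate shows why quantization matters on this hardware. The sketch below approximates the size of the weights alone as parameters times bits per weight. The 4.5 bits-per-weight figure for a 4-bit format is an assumed round number (real formats add per-block overhead that varies), and the parameter counts are nominal. Memory for the KV cache and activations is not included, so actual requirements are higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


TOTAL_VRAM_GB = 2 * 24  # two 24 GB cards, as in the title

# (nominal parameter count, bits per weight); 4.5 is an assumption.
configs = {
    "Llama3 8B F16": (8e9, 16.0),
    "Llama3 8B 4-bit": (8e9, 4.5),
    "Llama3 70B F16": (70e9, 16.0),
    "Llama3 70B 4-bit": (70e9, 4.5),
}

for name, (params, bits) in configs.items():
    size = weight_memory_gb(params, bits)
    verdict = "fits" if size < TOTAL_VRAM_GB else "does not fit"
    print(f"{name:<18} ~{size:6.1f} GB of weights: {verdict} in {TOTAL_VRAM_GB} GB")
```

Under these assumptions, the 70B weights at 16 bits are far larger than the combined 48 GB, while a 4-bit version comes in below it.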

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: Real-world applications of Llama3 70B on NVIDIA 4090 24GB x2

The combination of a powerful LLM like Llama3 70B and robust hardware like the NVIDIA 4090 24GB x2 opens up exciting possibilities for various use cases. Here are some examples:

- Content Generation: Generating creative text formats, like poems, code, scripts, musical pieces, email, letters, etc.

- Summarization: Summarizing long articles or documents into concise summaries.

- Translation: Translating between different languages.

- Question Answering: Providing answers to user-submitted questions based on a given context.

- Chatbots: Building interactive and informative chatbots for customer service or entertainment.

Workarounds and Considerations:

- Hardware Limitations: While the NVIDIA 4090 24GB x2 is robust, its 48 GB of combined VRAM might still be insufficient for certain tasks, especially with larger models or unquantized weights.

- Performance Optimization: Exploring techniques like quantization, model pruning, and advanced optimization libraries can help improve performance and reduce the workload on the hardware.

- Cloud Alternatives: If local deployment is not feasible or performance requirements are too demanding, consider leveraging cloud-based LLM services.

FAQ

What is an LLM?

An LLM is a large language model, a type of artificial intelligence that can understand and generate human-like text. It's trained on massive datasets of text and code, enabling it to perform various language-related tasks.

What is quantization?

Quantization is a technique used to reduce the size of a model by representing its weights and activations with fewer bits. This reduces memory usage and can improve performance, usually at the cost of a small loss in accuracy.
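As an illustration of the idea, here is a minimal sketch of symmetric 4-bit quantization with a single scale factor for a list of weights. This is a simplified textbook scheme, not the actual algorithm behind any specific model format, and the sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize(weights: List[float], bits: int = 4) -> Tuple[List[int], float]:
    """Map floats to signed integer codes sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1  # largest code, e.g. 7 for 4 bits
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the representable range.
    codes = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


# Made-up weights, for illustration only.
weights = [0.7, -0.35, 0.1, 0.0]
codes, scale = quantize(weights)
restored = dequantize(codes, scale)
for original, approx in zip(weights, restored):
    print(f"{original:+.3f} -> {approx:+.3f} (error {abs(original - approx):.3f})")
```

Each weight is stored as a small integer plus a shared scale, so the storage per weight drops from 16 or 32 bits to about 4, and the printed error shows the precision given up in exchange.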

What are the benefits of local LLM deployment?

Local LLM deployment offers several benefits:

- Privacy: Your data stays on your device, enhancing privacy.

- Offline Access: You can access the model even without an internet connection.

- Control: You have complete control over your model and its configurations.

What are the challenges of local LLM deployment?

Local LLM deployment also comes with certain challenges:

- Hardware Requirements: LLMs require significant computational resources, especially the larger models.

- Installation and Setup: Installing and configuring an LLM locally can be complex.

- Performance Optimization: Achieving optimal performance requires careful optimization techniques.

Keywords

LLM, Llama3, NVIDIA 4090 24GB x2, token generation speed, performance analysis, model comparison, quantization, Q4_K_M, F16, use cases, workarounds, local deployment, hardware limitations, content generation, summarization, translation, question answering, chatbots, privacy, offline access, control, challenges, hardware requirements, installation, setup, performance optimization, cloud alternatives.