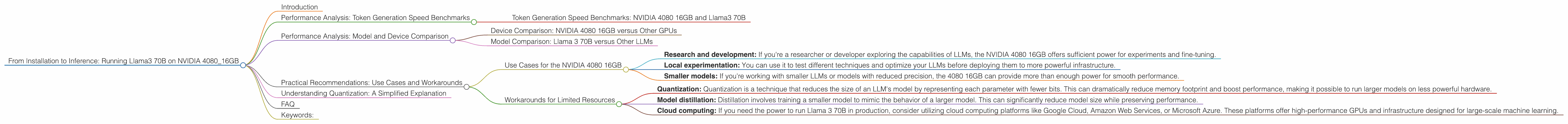

From Installation to Inference: Running Llama 3 70B on NVIDIA 4080 16GB

Introduction

The world of large language models (LLMs) is exploding, with new models like Llama 3 pushing the boundaries of what's possible. But running these behemoths locally can be a challenge, especially for the truly massive models like Llama 3 70B. It requires powerful hardware and a deep understanding of how to optimize for performance.

This article looks at what it takes to run Llama 3 70B on an NVIDIA 4080 16GB graphics card. We'll cover which performance benchmarks are and aren't available, explain the quantization formats that make large models manageable, and give actionable recommendations for optimizing your setup.

Whether you're a seasoned data scientist or a curious developer just starting your LLM journey, this guide will equip you with the knowledge you need to unleash the power of Llama 3 70B on your own machine.

Performance Analysis: Token Generation Speed Benchmarks

Token generation speed, usually reported in tokens per second, is the most direct measure of how responsive an LLM feels in use. This section covers what we can currently say about token generation speed for Llama 3 70B on the NVIDIA 4080 16GB.

Token Generation Speed Benchmarks: NVIDIA 4080 16GB and Llama 3 70B

Unfortunately, due to limitations in available data, we currently don't have token generation speed benchmarks for Llama 3 70B on the NVIDIA 4080 16GB. However, we can still gain valuable insights by comparing the performance of Llama 3 8B on the same hardware.

Think of it like this: Imagine you're trying to build a house. You can't directly measure the time it takes to build a mansion, but you can gauge the time required to build a smaller cottage. This gives you a good idea of the scale involved and the potential challenges with larger structures.

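The same logic applies to LLMs: results for Llama 3 8B on this card serve as a proxy for the larger model. Generating each token requires reading essentially all of the model's weights, so speed tends to fall roughly in proportion to model size. A 70B model should therefore be expected to run several times slower than 8B, even before memory limits come into play.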
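A rough way to apply this proxy reasoning is to assume that single-stream generation is limited mainly by how fast the weights can be read from memory. The sketch below scales a measured 8B figure to a 70B estimate under that assumption. The function name and the input of 100 tokens/s are placeholders for illustration, not benchmark results, and the estimate assumes both models sit entirely in VRAM, which does not hold for 70B on a 16 GB card.

```python
def scale_tokens_per_second(measured_tps, small_params_b, large_params_b,
                            small_bits=4.0, large_bits=4.0):
    """Estimate a larger model's speed from a smaller model's measured speed.

    Assumes generation is memory-bandwidth bound, so tokens/s is inversely
    proportional to the number of weight bytes read per token.
    """
    small_bytes = small_params_b * small_bits / 8
    large_bytes = large_params_b * large_bits / 8
    return measured_tps * small_bytes / large_bytes


# Placeholder input: 100 tokens/s is NOT a measurement, just an example value.
estimate = scale_tokens_per_second(100.0, small_params_b=8, large_params_b=70)
print(f"Estimated 70B speed: {estimate:.1f} tokens/s")
```

Treat the result as an optimistic ceiling: once part of the model has to live in system RAM, real speeds will be lower than this ratio suggests.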

Performance Analysis: Model and Device Comparison

To get a more comprehensive understanding of Llama 3 70B performance, we'll compare it to other LLMs and devices. This comparison will highlight the trade-offs involved in choosing the right setup for your specific needs.

Device Comparison: NVIDIA 4080 16GB versus Other GPUs

The NVIDIA 4080 16GB is a powerful GPU, but how does it stack up against other options? While we don't have benchmarks for Llama 3 70B on other GPUs, we can make informed inferences based on the performance of Llama 3 8B.

For instance, comparing Llama 3 8B on the NVIDIA 4080 16GB with the same model on a lower-tier GPU shows how much the 4080 16GB gains from its higher memory bandwidth and compute. Remember that larger models need both more memory to hold their weights and more memory bandwidth to generate each token.
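To make that kind of device comparison concrete without benchmark data, one option is to compute a theoretical ceiling: memory bandwidth divided by the size of the weights. The sketch below does this for Llama 3 8B on three cards. The bandwidth figures are assumed from published specifications and should be checked against the vendor's data sheets, and the 4.85 bits per weight is an approximation for a 4-bit quantized model. The outputs are upper bounds, not measured speeds.

```python
# Memory bandwidth in GB/s. Assumed from published specs; verify before relying on them.
GPU_BANDWIDTH_GBPS = {
    "RTX 3060 12GB": 360.0,
    "RTX 4080 16GB": 716.8,
    "RTX 4090 24GB": 1008.0,
}


def bandwidth_ceiling_tps(bandwidth_gbps, params_billions, bits_per_weight):
    """Upper bound on tokens/s if every weight is read once per generated token."""
    model_gb = params_billions * bits_per_weight / 8
    return bandwidth_gbps / model_gb


for name, bandwidth in GPU_BANDWIDTH_GBPS.items():
    ceiling = bandwidth_ceiling_tps(bandwidth, params_billions=8, bits_per_weight=4.85)
    print(f"{name}: at most ~{ceiling:.0f} tokens/s for an 8B model at ~4.85 bits/weight")
```

Real throughput lands below these ceilings because of compute, KV cache reads, and runtime overhead, but the ratios between cards give a reasonable first guess.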

Model Comparison: Llama 3 70B versus Other LLMs

How does Llama 3 70B compare to other LLMs in terms of performance? While data for Llama 3 70B is limited, examining benchmarks for similar models on the same hardware can give us an idea of its potential capabilities.

By understanding the factors that influence token generation speed, such as model size and quantization, we can make educated predictions about Llama 3 70B's performance, even without precise data points.

Practical Recommendations: Use Cases and Workarounds

While we don't have specific performance benchmarks for Llama 3 70B on the NVIDIA 4080 16GB, we can still provide practical recommendations based on what we know about LLMs and the capabilities of this hardware.

Use Cases for the NVIDIA 4080 16GB

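The NVIDIA 4080 16GB is a capable GPU for running LLMs, but its 16 GB of VRAM is the limiting factor for the largest models. Even at 4-bit precision, the weights of Llama 3 70B take roughly 35 to 45 GB, so the model cannot fit entirely on the card and part of it has to run from system RAM. That makes the 4080 16GB a poor fit for deploying Llama 3 70B in production environments demanding ultra-fast inference, but it's an excellent option for: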

- Research and development: If you're a researcher or developer exploring the capabilities of LLMs, the NVIDIA 4080 16GB offers sufficient power for experiments and for fine-tuning smaller models.

- Local experimentation: You can use it to test different techniques and optimize your LLMs before deploying them to more powerful infrastructure.

- Smaller models: If you're working with smaller LLMs or models with reduced precision, the 4080 16GB can provide more than enough power for smooth performance.

Workarounds for Limited Resources

If you find yourself constrained by resources and are unable to run Llama 3 70B efficiently on your NVIDIA 4080 16GB, consider these workarounds:

- Quantization: Quantization is a technique that reduces the size of an LLM's model by representing each parameter with fewer bits. This can dramatically reduce memory footprint and boost performance, making it possible to run larger models on less powerful hardware.

- Model distillation: Distillation involves training a smaller model to mimic the behavior of a larger model. This can significantly reduce model size while preserving much of the larger model's quality.

- Cloud computing: If you need the power to run Llama 3 70B in production, consider utilizing cloud computing platforms like Google Cloud, Amazon Web Services, or Microsoft Azure. These platforms offer high-performance GPUs and infrastructure designed for large-scale machine learning.
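For readers curious how distillation "mimics" a larger model, a common formulation compares the two models' output distributions after softening them with a temperature. The sketch below is a minimal pure-Python illustration of that loss, not the training recipe of any particular model. The function names, the temperature of 2.0, and the example logits are all assumptions chosen for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert logits to probabilities, softened by the given temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from teacher to student on temperature-softened outputs."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2  # conventional scaling by T squared


teacher = [2.0, 1.0, 0.1]   # made-up logits from the large model
student = [1.5, 1.2, 0.3]   # made-up logits from the small model
print(f"distillation loss: {distillation_loss(teacher, student):.4f}")
```

Training the smaller model to minimize this loss pushes its predictions toward the larger model's; the loss is zero only when the two distributions match.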

Understanding Quantization: A Simplified Explanation

Think of quantization like compressing a large video file. By reducing the amount of information stored, you make the file smaller without sacrificing too much quality. Similarly, quantization stores each model parameter with fewer bits, which shrinks the model without giving up too much accuracy.

For example, a standard "float32" number uses 32 bits to represent a value. Quantization can compress this to just 8 bits, making the model significantly smaller. However, this can lead to a slight reduction in accuracy.
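The idea can be illustrated with a minimal sketch of symmetric 8-bit quantization: store one floating-point scale plus small integers, then multiply back to recover approximate values. This is a simplified illustration rather than the scheme any particular framework uses, and the example weights are made up.

```python
def quantize_int8(values):
    """Symmetric 8-bit quantization: one float scale plus integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Recover approximate floating-point values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.9, -0.07]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max round-trip error: {max_error:.4f}")
```

The round-trip error is bounded by half the scale, which is the "slight reduction in accuracy" in concrete terms: each weight can be off by at most half of one quantization step.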

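Formats like Q4_K_M, a 4-bit quantization format used by llama.cpp, are optimized for speed and memory usage at some cost in accuracy. F16, by contrast, is not quantization in the strict sense: it stores weights as 16-bit floating-point numbers, preserving accuracy at several times the memory footprint.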
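To see what these formats mean for a 16 GB card, the sketch below estimates the weight size of a 70B-parameter model in each one. The bits-per-weight values are approximations (block-based formats carry extra scale data, so Q4_K_M averages somewhat more than 4 bits), and the estimate covers weights only, leaving out the KV cache and runtime buffers.

```python
# Approximate average bits per weight; treat these as assumptions, not exact specs.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_K_M": 4.85}
VRAM_GB = 16


def weight_size_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


for fmt, bits in BITS_PER_WEIGHT.items():
    size = weight_size_gb(70, bits)
    verdict = "fits" if size <= VRAM_GB else "does not fit"
    print(f"Llama 3 70B {fmt}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB VRAM")
```

Under these assumptions none of the three formats fits in 16 GB, which is why running 70B on this card depends on splitting the model between GPU and system memory.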

FAQ

Q: How do I install and run Llama 3 70B on my NVIDIA 4080 16GB?

A: The process involves installing an inference framework with GPU support, downloading the model weights in a format that framework understands, and configuring it to use your GPU. You can find detailed instructions in the documentation of the framework you're using, such as llama.cpp or Hugging Face Transformers.
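As one possible route, the sketch below uses the llama-cpp-python bindings for llama.cpp. It assumes the package is installed with CUDA support and that you have already downloaded a quantized GGUF file; the model path and the choice of 20 GPU layers are hypothetical placeholders, and the helper names are ours. Check the package's documentation for the installation options that match your version.

```python
def build_llama_kwargs(model_path, n_gpu_layers, n_ctx=2048):
    """Collect the constructor arguments passed to llama_cpp.Llama."""
    return {
        "model_path": model_path,      # path to a local GGUF file
        "n_gpu_layers": n_gpu_layers,  # layers offloaded to the GPU; the rest run on CPU
        "n_ctx": n_ctx,                # context window in tokens
    }


def run_prompt(prompt, llama_kwargs, max_tokens=64):
    """Load the model and return the generated text for one prompt."""
    from llama_cpp import Llama  # imported lazily; requires llama-cpp-python

    llm = Llama(**llama_kwargs)
    result = llm(prompt, max_tokens=max_tokens)
    return result["choices"][0]["text"]


# Example call (needs the package and a local model file; the path is hypothetical):
# kwargs = build_llama_kwargs("models/llama-3-70b-instruct.Q4_K_M.gguf", n_gpu_layers=20)
# print(run_prompt("Explain quantization in one sentence.", kwargs))
```

The key setting for a 16 GB card is n_gpu_layers: it controls how much of the model lives in VRAM, with the remainder running from system RAM.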

Q: Will Llama 3 70B run smoothly on my NVIDIA 4080 16GB?

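A: It's possible, but not smooth. A 4-bit quantized Llama 3 70B needs roughly 40 GB for its weights, well beyond the card's 16 GB of VRAM, so only some layers can run on the GPU while the rest run from system RAM. Expect much slower generation than with a model that fits entirely in VRAM, which makes this setup unsuitable for production environments demanding high-speed inference.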
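A back-of-the-envelope way to plan the split is to divide the model size by its layer count and see how many layers fit in the VRAM you can spare. The sketch below assumes about 42.4 GB of weights for a 4-bit 70B model, 80 transformer layers of equal size, and 2 GB reserved for the KV cache and other buffers. All three are simplifying assumptions, and the result is a starting point to tune from.

```python
def layers_that_fit(model_gb, n_layers, vram_gb, reserved_gb=2.0):
    """Estimate how many equally sized layers fit in VRAM.

    reserved_gb is an assumed allowance for the KV cache, scratch buffers,
    and the desktop; real overhead depends on context length and runtime.
    """
    per_layer_gb = model_gb / n_layers
    usable_gb = max(vram_gb - reserved_gb, 0.0)
    return min(n_layers, int(usable_gb // per_layer_gb))


gpu_layers = layers_that_fit(model_gb=42.4, n_layers=80, vram_gb=16)
print(f"About {gpu_layers} of 80 layers on the GPU, {80 - gpu_layers} on the CPU")
```

With roughly a third of the layers on the GPU, most of each token's work still happens in system RAM, which is where the slowdown comes from.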

Q: What's the best way to optimize Llama 3 70B performance on my 4080 16GB?

A: Experiment with different quantization formats, adjust batch sizes, and tune runtime settings such as context length and how many layers are offloaded to the GPU, measuring speed after each change to find the sweet spot between performance and accuracy. Tools like llama.cpp expose these settings directly.
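Experimenting only pays off if each change is measured the same way. The sketch below is a small, framework-independent timing helper. It assumes you can wrap your model in a callable that takes a prompt and a token limit and returns the generated tokens. The fake generator is a stand-in so the example runs anywhere, and its numbers say nothing about real hardware.

```python
import time


def measure_tokens_per_second(generate, prompt, max_tokens):
    """Time one call to generate() and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    tokens = generate(prompt, max_tokens)
    elapsed = time.perf_counter() - start
    count = len(tokens)
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generate(prompt, max_tokens):
    """Stand-in for a real model call; sleeps briefly and returns dummy tokens."""
    time.sleep(0.01)
    return ["tok"] * max_tokens


count, tps = measure_tokens_per_second(fake_generate, "Hello", max_tokens=32)
print(f"{count} tokens at {tps:.0f} tokens/s (fake generator, not a benchmark)")
```

Run the same prompt and token limit for every configuration you try, and repeat each measurement a few times, since the first run often includes model loading and warm-up.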

Keywords:

Llama 3, NVIDIA 4080 16GB, LLM, large language model, token generation speed, benchmarks, performance analysis, quantization, Q4_K_M, F16, model distillation, cloud computing, GPU, hardware, software, optimization, use cases, workarounds