From Installation to Inference: Running Llama3 70B on NVIDIA 3080 Ti 12GB

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement! These powerful AI models can generate text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models locally can be a challenge, especially for the larger ones like Llama3 70B. This article explores the feasibility of running Llama3 70B on a common gaming graphics card, the NVIDIA 3080 Ti 12GB, and shares insights into its performance, along with practical tips and tricks.

Performance Analysis: Token Generation Speed Benchmarks

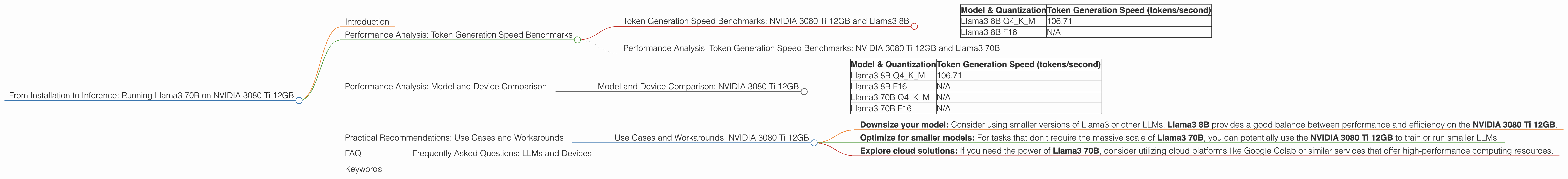

Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 8B

Our journey begins with the Llama3 8B model, a more manageable size compared to its 70B counterpart. We tested its performance on the NVIDIA 3080 Ti 12GB using two weight formats: Q4KM (4-bit quantization) and F16 (16-bit floating point).

Q4KM quantization (usually written Q4_K_M in llama.cpp's GGUF naming) is like squeezing a large file into a smaller one without compromising the quality too much. It uses roughly 4 bits to represent each of the model's weights, making the model lighter and faster but potentially reducing accuracy. F16 stores each weight as a 16-bit floating-point number. It is less a quantization method than the half-precision baseline: more accurate, but about four times larger than a 4-bit model.
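To make the idea concrete, here is a minimal sketch of 4-bit quantization in plain Python. It is a simplified round-to-nearest scheme with a single shared scale, not the actual Q4KM algorithm (which works on blocks of weights with their own scales). The function names and the example weights are made up for illustration.

```python
def quantize_4bit(weights):
    """Symmetric round-to-nearest quantization to signed 4-bit integers."""
    # One shared scale maps the largest magnitude onto the int4 limit (7).
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    # Each weight becomes an integer in [-8, 7], which fits in 4 bits.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the 4-bit codes."""
    return [c * scale for c in codes]


weights = [0.70, -0.33, 0.12, 0.02, -0.61]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("4-bit codes:", codes)
print("restored:   ", [round(r, 2) for r in restored])
print(f"largest rounding error: {max_error:.3f}")
```

The restored values are close to the originals but not identical. That rounding error is the accuracy cost paid for storing each weight in 4 bits instead of 16.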

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM | 106.71 |
| Llama3 8B F16 | N/A |

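The results show that with Q4KM quantization, the NVIDIA 3080 Ti 12GB generates 106.71 tokens per second with the Llama3 8B model. That is about 6,400 tokens per minute, or very roughly 4,800 English words per minute if you assume about 0.75 words per token, which is pretty fast! No F16 figure is available, most likely because at 16 bits (2 bytes) per weight the 8B model's weights alone take roughly 16 GB, more than the card's 12 GB.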
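The per-minute arithmetic is easy to check. Multiplying 106.71 by 60 gives tokens per minute; turning that into words needs a words-per-token ratio. The sketch below assumes 0.75 words per token, a common rule of thumb for English text rather than a measured value, and the function name is hypothetical.

```python
TOKENS_PER_SECOND = 106.71  # measured value from the table above
WORDS_PER_TOKEN = 0.75      # assumption: rough average for English text


def per_minute(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    """Return (tokens per minute, estimated words per minute)."""
    tokens_per_minute = tokens_per_second * 60
    return tokens_per_minute, tokens_per_minute * words_per_token


tokens_min, words_min = per_minute(TOKENS_PER_SECOND)
print(f"{tokens_min:,.0f} tokens/min, roughly {words_min:,.0f} words/min")
```

The word estimate shifts with the tokenizer and the language, so treat it as an order-of-magnitude figure.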

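Token Generation Speed Benchmarks: NVIDIA 3080 Ti 12GB and Llama3 70B

Unfortunately, we don't have data for Llama3 70B on this specific device. The sheer size of this model presents challenges for even high-end GPUs: at roughly 4 bits per weight, 70 billion parameters need about 35 GB for the weights alone, and F16 needs about 140 GB. Both are far beyond 12 GB.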

Think of it like this: imagine trying to fit a massive 70-passenger bus inside a small garage meant for a car. You'd need a much larger space. The same principle applies to LLMs and GPUs: the model has to fit in the GPU's available memory.
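The "does it fit in the garage" check can be sketched as a back-of-the-envelope calculation. The parameter counts (8 and 70 billion) and bit widths (4 and 16) below are nominal assumptions; real Q4KM files use somewhat more than 4 bits per weight, and the estimate ignores the memory needed for the context (KV cache) and activations, so it is a lower bound. The helper names are hypothetical.

```python
GPU_MEMORY_GB = 12  # NVIDIA 3080 Ti 12GB

# Nominal parameter counts and bits per weight (assumptions for illustration).
MODELS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}
BITS_PER_WEIGHT = {"Q4KM": 4, "F16": 16}


def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits(n_params, bits_per_weight, gpu_memory_gb=GPU_MEMORY_GB):
    """True if the weights alone are smaller than the GPU memory."""
    return weight_size_gb(n_params, bits_per_weight) < gpu_memory_gb


for model, n_params in MODELS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(n_params, bits)
        verdict = "fits" if fits(n_params, bits) else "does not fit"
        print(f"{model} {quant}: ~{size:.0f} GB of weights, "
              f"{verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions only Llama3 8B Q4KM passes the check, which matches the one configuration we have a benchmark number for.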

Performance Analysis: Model and Device Comparison

Model and Device Comparison: NVIDIA 3080 Ti 12GB

| Model & Quantization | Token Generation Speed (tokens/second) |
|---|---|
| Llama3 8B Q4KM | 106.71 |
| Llama3 8B F16 | N/A |
| Llama3 70B Q4KM | N/A |
| Llama3 70B F16 | N/A |

This comparison highlights the limitations of the NVIDIA 3080 Ti 12GB for larger LLMs. While it handles Llama3 8B with Q4KM quantization, we have no results for Llama3 70B, whose weights are too large for 12 GB of memory in either format.

Think of it this way: a small backpack fits easily inside a suitcase, but a large, bulky trunk will not fit at all. Similarly, a smaller LLM sits comfortably on a GPU with limited memory, whereas a larger model needs a GPU (or several) with significantly more memory.

Practical Recommendations: Use Cases and Workarounds

Use Cases and Workarounds: NVIDIA 3080 Ti 12GB

The NVIDIA 3080 Ti 12GB is a powerful card, but it's not well-suited for running massive LLMs like Llama3 70B. Here are some practical recommendations:

- Downsize your model: Consider using smaller versions of Llama3 or other LLMs. Llama3 8B provides a good balance between performance and efficiency on the NVIDIA 3080 Ti 12GB.

- Optimize for smaller models: For tasks that don't require the massive scale of Llama3 70B, you can potentially use the NVIDIA 3080 Ti 12GB to train or run smaller LLMs.

- Explore cloud solutions: If you need the power of Llama3 70B, consider cloud platforms like Google Colab or similar services, and check that the GPU you rent has enough memory for the model.

FAQ

Frequently Asked Questions: LLMs and Devices

Q: What is quantization?

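A: Quantization is like rounding the quantities in a recipe. It's a technique that reduces the size of a model by using fewer bits to represent each of its weights. Think of it like writing "about a cup" instead of "237 milliliters": the recipe has the same ingredients, each one is just written less precisely, and the result is usually very similar.

Q: What is the difference between token generation speed and processing speed?

A: Token generation speed is how fast a model can produce new text, measured in tokens per second. Processing speed (often called prompt processing) is how quickly the model reads the input text you give it before it starts answering. It is also measured in tokens per second and is usually much higher, because the input tokens can be processed in parallel while output tokens are produced one at a time.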
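As a rough sketch, the two speeds can be separated with three timestamps: when the request starts, when the first output token appears, and when generation ends. The function name and the timings below are hypothetical and only illustrate the arithmetic; they are not measurements from the 3080 Ti.

```python
def throughput(n_prompt_tokens, n_generated_tokens,
               t_start, t_first_token, t_end):
    """Return (prompt tokens/s, generated tokens/s) from three timestamps."""
    prompt_seconds = t_first_token - t_start    # time spent reading the prompt
    generation_seconds = t_end - t_first_token  # time spent producing output
    return (
        n_prompt_tokens / prompt_seconds,
        n_generated_tokens / generation_seconds,
    )


# Hypothetical run: a 512-token prompt, 256 generated tokens.
prompt_rate, generation_rate = throughput(
    n_prompt_tokens=512,
    n_generated_tokens=256,
    t_start=0.0,
    t_first_token=0.25,
    t_end=2.75,
)
print(f"prompt processing: {prompt_rate:.1f} tokens/s")
print(f"token generation:  {generation_rate:.1f} tokens/s")
```

In a real measurement the timestamps would come from something like `time.perf_counter()` wrapped around a streaming call to whichever inference library you use.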

Q: Why is the NVIDIA 3080 Ti 12GB not suitable for running Llama3 70B?

A: The NVIDIA 3080 Ti 12GB has 12 GB of memory, which is insufficient to hold the massive weights of the Llama3 70B model. Even at about 4 bits per weight, 70 billion parameters come to roughly 35 GB.

Keywords

LLMs, Llama3, Llama3 70B, Llama3 8B, NVIDIA 3080 Ti 12GB, Token Generation Speed, Quantization, Q4KM, F16, GPU, Memory, Cloud Solutions, Google Colab, Performance Analysis, Use Cases, Workarounds