From Installation to Inference: Running Llama3 70B on NVIDIA 3070 8GB

Introduction: The Rise of Local LLMs

The world of large language models (LLMs) is exploding! AI is becoming more accessible and powerful by the day, allowing developers to unlock new possibilities in natural language processing. But running these sophisticated models often requires hefty cloud infrastructure and significant computational resources. Fortunately, the landscape is shifting, with local LLMs becoming increasingly feasible thanks to advancements in hardware and software.

This article dives deep into the exciting world of running Llama3 70B, a formidable LLM, on a modest NVIDIA 3070 8GB graphics card. We'll analyze its performance, explore practical recommendations, and delve into the technical details to help you bring the power of LLMs directly to your desktop. Think of it as a thrilling adventure in the land of AI, fueled by code and caffeine!

Performance Analysis: Token Generation Speed Benchmarks

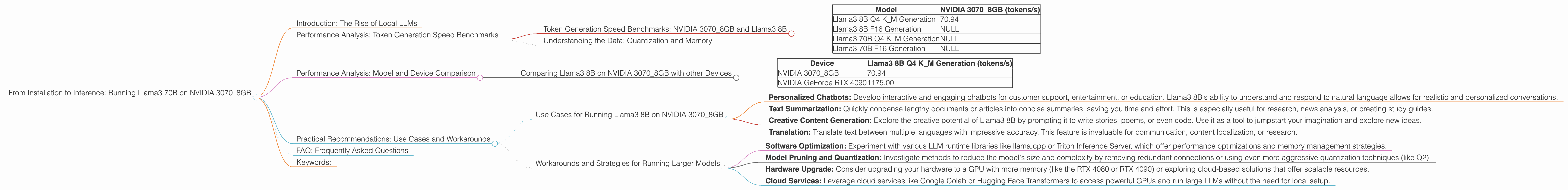

Token Generation Speed Benchmarks: NVIDIA 3070_8GB and Llama3 8B

Let's start with the basics. Token generation speed is a critical metric for evaluating LLM performance. It measures how many tokens (word fragments, not whole words) a model emits per second during generation. Higher tokens per second (tokens/s) mean faster inference and a smoother user experience.
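To make the metric concrete, here is a minimal sketch of how tokens/s can be measured: time one generation call and divide the token count by the elapsed seconds. `tokens_per_second` and `fake_generate` are hypothetical names for illustration; `fake_generate` is a stand-in that sleeps instead of running a model, so the number it prints says nothing about real hardware.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Time one generation call and return tokens emitted per second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate() -> Iterable[str]:
    """Stand-in for a real model (assumption): 20 tokens, about 5 ms apart."""
    for i in range(20):
        time.sleep(0.005)
        yield f"tok{i}"


print(f"{tokens_per_second(fake_generate):.1f} tokens/s")
```

With a real runtime you would pass a function that streams tokens from the model in place of `fake_generate`.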

| Model | NVIDIA 3070_8GB (tokens/s) |
|---|---|
| Llama3 8B Q4_K_M Generation | 70.94 |
| Llama3 8B F16 Generation | No data |
| Llama3 70B Q4_K_M Generation | No data |
| Llama3 70B F16 Generation | No data |

Unfortunately, we have no benchmark data for Llama3 70B on the NVIDIA 3070_8GB at either Q4_K_M or F16 precision. The most likely reason is memory: even at 4 bits per weight, 70 billion parameters need roughly 35 GB for the weights alone, far more than the card's 8 GB. The model cannot run entirely on this GPU; at best, part of it has to be offloaded to system RAM.

Understanding the Data: Quantization and Memory

Quantization acts like a clever compression technique for LLMs. It reduces the memory footprint of the model by representing its weights (which govern its behavior) using fewer bits. This makes it possible to run larger models on smaller devices like our NVIDIA 3070 8GB.

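Q4_K_M is one of llama.cpp's 4-bit "k-quant" formats: the K marks the k-quant family and the M the medium-sized variant. It stores most weights in about 4 bits plus block-wise scaling factors, averaging a little under 5 bits per weight. That cuts a model to under a third of its F16 size, which is why it is a common choice for large models like Llama 3 70B.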

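F16 refers to half-precision floating point representation, where each weight takes 16 bits (2 bytes). It avoids quantization loss but needs more than three times the memory of Q4_K_M. At 2 bytes per weight, even Llama3 8B needs about 16 GB for its weights, which would explain why no F16 result is available for this 8 GB card.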
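The relationship between quantization and memory can be sketched as simple arithmetic: weight size is roughly parameter count times bits per weight, divided by 8. The snippet below is an estimate under stated assumptions, not a measurement: it uses nominal parameter counts (8 and 70 billion), treats 4.85 bits per weight as an approximate average for the 4-bit format, and ignores the context cache and runtime buffers, which need additional memory. `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (10^9 bytes)."""
    return n_params_billion * bits_per_weight / 8


VRAM_GB = 8.0  # NVIDIA 3070 8GB

# Bits per weight: F16 is exactly 16; 4.85 for Q4_K_M is an approximation
# (assumption) used here for illustration.
FORMATS = {"F16": 16.0, "Q4_K_M": 4.85}

for model, params in [("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]:
    for fmt, bits in FORMATS.items():
        size = weight_memory_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only Llama3 8B at 4 bits fits within 8 GB, which matches the one configuration that has a benchmark result in the table above.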

Performance Analysis: Model and Device Comparison

Comparing Llama3 8B on NVIDIA 3070_8GB with other Devices

To get a better understanding of Llama3 8B's performance on the NVIDIA 3070_8GB, let's compare it to other devices. Keep in mind that these figures are based on publicly available benchmarks and may vary based on specific hardware configurations and software setups.

| Device | Llama3 8B Q4_K_M Generation (tokens/s) |
|---|---|
| NVIDIA 3070_8GB | 70.94 |
| NVIDIA GeForce RTX 4090 | 1175.00 |

Comparing the NVIDIA 3070_8GB to a high-end GPU like the RTX 4090, we see a significant difference in performance: by these figures the RTX 4090 generates roughly 16.6 times as many tokens per second (1175.00 versus 70.94). This highlights the importance of considering your hardware when selecting an LLM for your application.
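To see what the gap means in practice, the figures in the table can be turned into waiting time per reply. This is a rough sketch: the 500-token reply length is a hypothetical value chosen for illustration, `seconds_for_reply` is a hypothetical helper, and the calculation assumes a steady generation speed and ignores prompt processing time.

```python
def seconds_for_reply(n_tokens: int, tokens_per_s: float) -> float:
    """Time to generate n_tokens at a steady generation speed."""
    return n_tokens / tokens_per_s


# Generation speeds taken from the comparison table (tokens/s).
SPEEDS = {"NVIDIA 3070_8GB": 70.94, "NVIDIA GeForce RTX 4090": 1175.00}
REPLY_TOKENS = 500  # hypothetical reply length (assumption)

speedup = SPEEDS["NVIDIA GeForce RTX 4090"] / SPEEDS["NVIDIA 3070_8GB"]
print(f"Speedup: {speedup:.1f}x")
for device, speed in SPEEDS.items():
    secs = seconds_for_reply(REPLY_TOKENS, speed)
    print(f"{device}: {secs:.1f} s for {REPLY_TOKENS} tokens")
```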

Practical Recommendations: Use Cases and Workarounds

Use Cases for Running Llama3 8B on NVIDIA 3070_8GB

Although Llama3 70B may not be feasible on a 3070 8GB without optimization, Llama3 8B offers exciting possibilities:

- Personalized Chatbots: Develop interactive and engaging chatbots for customer support, entertainment, or education. Llama3 8B's ability to understand and respond to natural language allows for realistic and personalized conversations.

- Text Summarization: Quickly condense lengthy documents or articles into concise summaries, saving you time and effort. This is especially useful for research, news analysis, or creating study guides.

- Creative Content Generation: Explore the creative potential of Llama3 8B by prompting it to write stories, poems, or even code. Use it as a tool to jumpstart your imagination and explore new ideas.

- Translation: Translate text between multiple languages with impressive accuracy. This feature is invaluable for communication, content localization, or research.

Workarounds and Strategies for Running Larger Models

Running Llama3 70B on the 3070_8GB might be challenging but not impossible:

- Software Optimization: Experiment with various LLM runtime libraries like llama.cpp or Triton Inference Server, which offer performance optimizations and memory management strategies.

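- Model Pruning and Quantization: Investigate methods to reduce the model's size and complexity by removing redundant connections or using even more aggressive quantization (like 2-bit formats such as Q2). Note that even at 2 bits per weight, 70 billion parameters still need at least 17.5 GB, so quantization alone will not fit the model into 8 GB and has to be combined with offloading to system RAM.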

- Hardware Upgrade: Consider upgrading your hardware to a GPU with more memory (like the RTX 4080 or RTX 4090) or exploring cloud-based solutions that offer scalable resources.

- Cloud Services: Leverage cloud services like Google Colab or Hugging Face's hosted inference offerings to access powerful GPUs and run large LLMs without the need for local setup.
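One workaround that runtimes such as llama.cpp support is partial offload: keep as many transformer layers as fit in VRAM on the GPU and run the rest on the CPU from system RAM. The sketch below estimates that layer count. The model sizes, the layer counts (80 for Llama3 70B, 32 for Llama3 8B), the 1.5 GB reserve for context cache and buffers, and the idea that all layers are equal in size are illustrative assumptions, and `gpu_layers_that_fit` is a hypothetical helper, not part of any library.

```python
import math


def gpu_layers_that_fit(model_gb: float, n_layers: int,
                        vram_gb: float, reserve_gb: float = 1.5) -> int:
    """Estimate how many equally sized layers fit in VRAM after a reserve."""
    per_layer_gb = model_gb / n_layers          # assumes uniform layer size
    usable_gb = max(vram_gb - reserve_gb, 0.0)  # leave room for cache/buffers
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Assumed sizes for 4-bit quantized weights (estimates, not measurements).
print("Llama3 70B:", gpu_layers_that_fit(42.4, 80, 8.0), "of 80 layers on GPU")
print("Llama3 8B: ", gpu_layers_that_fit(4.85, 32, 8.0), "of 32 layers on GPU")
```

The result is the kind of value you might pass to llama.cpp's `--n-gpu-layers` option. With most of a 70B model left on the CPU, expect generation to run far below the GPU-only speeds reported for the 8B model, and you will need enough system RAM to hold the remaining layers.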

FAQ: Frequently Asked Questions

Q: What are the best practices for running LLMs locally?

A: Choose a suitable LLM based on your hardware capabilities, consider using optimized runtime libraries, experiment with quantization and pruning techniques, and monitor your GPU usage and memory consumption.
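For the monitoring part, one simple option is to poll `nvidia-smi` and parse its CSV output. The sketch below assumes `nvidia-smi` is installed and on the PATH when `query_gpu_memory` is called; both function names are hypothetical, and the sample line is made-up data used only to show the parsing.

```python
import subprocess


def parse_memory_csv(line: str) -> tuple[int, int]:
    """Parse one 'used, total' line (values in MiB) from nvidia-smi CSV output."""
    used, total = (int(field.strip()) for field in line.split(","))
    return used, total


def query_gpu_memory() -> tuple[int, int]:
    """Ask nvidia-smi for memory used/total on the first GPU, in MiB."""
    out = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.used,memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return parse_memory_csv(out.splitlines()[0])


# Hypothetical sample line, so the parsing can be tried without a GPU.
used, total = parse_memory_csv("5632, 8192")
print(f"VRAM: {used} / {total} MiB ({100 * used / total:.0f}% used)")
```

On a machine with an NVIDIA GPU, calling `query_gpu_memory()` in a loop while the model generates shows how close you are to the card's limit.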

Q: How important is GPU memory for LLM performance?

A: GPU memory is crucial. If the model's weights and context cache do not fit in VRAM, the runtime must either offload part of the model to slower system RAM, which reduces tokens/s, or fail with an out-of-memory error or crash.

Q: What are the future trends in local LLM deployment?

A: We can expect further advancements in hardware, software optimization, and model compression techniques that will enable running even larger LLMs on consumer-grade devices.

Q: Where can I find more information about LLMs and their applications?

A: Explore online resources like the Hugging Face Model Hub, the NVIDIA LLM documentation, and reputable AI research publications.
