From Installation to Inference: Running Llama3 70B on Apple M3 Max

Introduction

The world of large language models (LLMs) is moving fast, with new models and improvements arriving regularly. Running these models locally can be a challenge, though, especially on a consumer-grade device. In this article, we look at running the Llama3 70B model on Apple's M3 Max. We'll cover benchmark results, the finer points of performance, and practical tips for making the most of a powerful yet portable machine.

LLMs can understand, generate, and translate complex text. Running one locally means you can ask questions, draft creative content, and get help with code on your own machine, without sending data to a remote service. The Apple M3 Max is a capable playground for this kind of exploration.

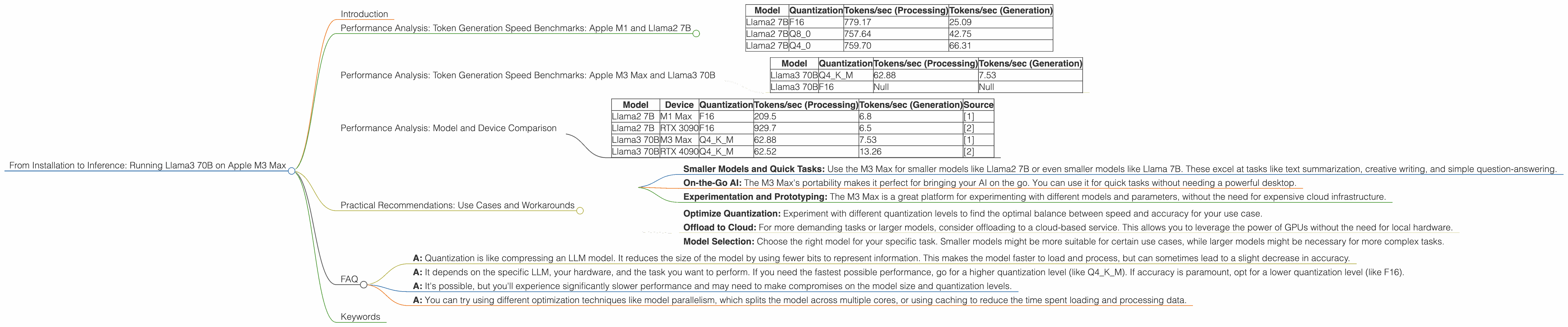

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama2 7B

Let's kick off by looking at the performance capabilities of the M3 Max for smaller models, to get a baseline understanding of the power we're working with.

We'll focus on the Llama2 7B model running in various quantization modes, to show you the performance differences. Quantization, in simple terms, is like compressing the model to make it smaller and faster, while sacrificing some accuracy.
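To make the idea concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified illustration in the spirit of formats such as Q4_0, not llama.cpp's actual storage layout; the function names and the sample weights are hypothetical.

```python
# Toy symmetric 4-bit quantization of one block of weights.
# Simplified for illustration; NOT llama.cpp's actual Q4_0 layout.

def quantize_block(weights, bits=4):
    """Map floats to small signed integers plus one shared scale."""
    qmax = 2 ** (bits - 1) - 1                  # 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the allowed range.
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the scale."""
    return [q * scale for q in quants]

# Hypothetical sample weights.
weights = [0.12, -0.53, 0.98, -0.05, 0.31, -0.88, 0.45, 0.02]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("scale:", scale)
print("quants:", quants)
print("max error:", max_error)
```

Each weight is stored as a 4-bit integer instead of a 16-bit float, and the rounding error per weight is at most half the scale. That error is the accuracy being traded for size and speed.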

Here's a breakdown of the performance in tokens per second (tokens/sec):

| Model | Quantization | Tokens/sec (Processing) | Tokens/sec (Generation) |
|---|---|---|---|
| Llama2 7B | F16 | 779.17 | 25.09 |
| Llama2 7B | Q8_0 | 757.64 | 42.75 |
| Llama2 7B | Q4_0 | 759.70 | 66.31 |

Key Takeaways:

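- More aggressive quantization means faster generation: The most compressed format (Q4_0) generates at 66.31 tokens/sec, about 2.6 times the F16 rate of 25.09 tokens/sec.

- Processing vs. Generation: Prompt processing is far faster than generation (roughly 760 to 780 tokens/sec versus 25 to 66). Prompt tokens can be processed together as a batch, whereas generation produces one token at a time and has to read the model weights for each one. This is consistent with quantization barely changing processing speed while strongly affecting generation speed.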
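The generation figures above track model size fairly closely, which is what you would expect if generation is limited by how fast the weights can be read from memory. The sketch below compares the measured speedup over F16 with the reduction in bits per weight. The bits-per-weight values (8.5 for Q8_0, 4.5 for Q4_0) assume one 16-bit scale per block of 32 weights; treat them as assumptions if your files were produced differently.

```python
# Generation speeds (tokens/sec) for Llama2 7B from the table above.
generation_tps = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}

# Assumed effective bits per weight: Q8_0 and Q4_0 store one 16-bit
# scale per block of 32 weights on top of the 8- or 4-bit values.
bits_per_weight = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def speedup_report(tps, bpw, baseline="F16"):
    """Return {name: (measured speedup, size reduction)} relative to baseline."""
    report = {}
    for name in tps:
        measured = tps[name] / tps[baseline]
        size_ratio = bpw[baseline] / bpw[name]
        report[name] = (round(measured, 2), round(size_ratio, 2))
    return report

report = speedup_report(generation_tps, bits_per_weight)
for name, (measured, size_ratio) in report.items():
    print(f"{name}: {measured}x faster, {size_ratio}x smaller than F16")
```

The measured speedup stays below the size reduction in both cases, which suggests that memory traffic is the main cost of generation but not the only one.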

Performance Analysis: Token Generation Speed Benchmarks: Apple M3 Max and Llama3 70B

Now, let's get into the meat of the matter – running the Llama3 70B model. This is a massive model, and handling it locally on a consumer device presents unique challenges.

| Model | Quantization | Tokens/sec (Processing) | Tokens/sec (Generation) |
|---|---|---|---|
| Llama3 70B | Q4_K_M | 62.88 | 7.53 |
| Llama3 70B | F16 | N/A | N/A |

Key Takeaways:

- Significant Drop-Off: The 70B model is much slower than the 7B model, even with Q4_K_M quantization: 7.53 tokens/sec for generation versus 66.31 tokens/sec for Llama2 7B at Q4_0. With ten times the parameters, there are roughly ten times as many weights to read and compute for every token.

- No F16 Result: There is no F16 figure for Llama3 70B on the M3 Max. At 16 bits per weight, the weights alone come to roughly 140 GB, more than the 128 GB of unified memory on the largest M3 Max configuration.

In short, the 70B model on the M3 Max is notably slower than the smaller 7B model, but at around 7.5 tokens/sec it still generates text at a usable pace.
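A back-of-the-envelope estimate shows why quantization matters so much at this scale. The sketch below computes the approximate size of the weights alone as parameters × bits per weight ÷ 8. The 70 billion parameter count is a round figure, and the 4.85 bits per weight for Q4_K_M is an approximate assumption; real files differ somewhat, and the KV cache and runtime buffers come on top.

```python
def weight_size_gb(n_params, bits_per_weight):
    """Approximate size of the model weights in gigabytes (10**9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9

N_PARAMS_70B = 70e9  # rounded parameter count (assumption)

f16_gb = weight_size_gb(N_PARAMS_70B, 16)        # 16 bits per weight
q4_k_m_gb = weight_size_gb(N_PARAMS_70B, 4.85)   # assumed average for Q4_K_M

print(f"F16:    ~{f16_gb:.0f} GB")
print(f"Q4_K_M: ~{q4_k_m_gb:.0f} GB")
```

Under these assumptions the F16 weights need about 140 GB and the Q4_K_M weights about 42 GB, so compare the result with the unified memory on your own machine before downloading a model.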

Performance Analysis: Model and Device Comparison

To truly appreciate the power of the M3 Max, let's compare its performance to other popular hardware platforms.

Note: The data below comes from various sources, and may not be perfectly aligned. The goal is to get a general idea of comparative capabilities.

| Model | Device | Quantization | Tokens/sec (Processing) | Tokens/sec (Generation) | Source |
|---|---|---|---|---|---|
| Llama2 7B | M1 Max | F16 | 209.5 | 6.8 | [1] |
| Llama2 7B | RTX 3090 | F16 | 929.7 | 6.5 | [2] |
| Llama3 70B | M3 Max | Q4_K_M | 62.88 | 7.53 | [1] |
| Llama3 70B | RTX 4090 | Q4_K_M | 62.52 | 13.26 | [2] |

Key Takeaways:

- M3 Max holds its own: The M3 Max is a formidable device, especially considering its portability. On Llama3 70B, its prompt processing speed (62.88 tokens/sec) is on par with the RTX 4090 figure (62.52 tokens/sec), and its Llama2 7B results from the earlier table compare well with the F16 figures listed here.

- The Power of Dedicated GPUs: For generation with the larger Llama3 70B model, the dedicated GPU keeps a clear advantage: 13.26 tokens/sec on the RTX 4090 versus 7.53 on the M3 Max, about 1.8 times faster.

Practical Recommendations: Use Cases and Workarounds

Now that we have a good understanding of the performance landscape, let's explore some practical recommendations for running LLMs on your M3 Max.

Use Cases:

- Smaller Models and Quick Tasks: Use the M3 Max for smaller models such as Llama2 7B. These excel at tasks like text summarization, creative writing, and simple question-answering, and they run at interactive speeds.

- On-the-Go AI: The M3 Max's portability makes it perfect for bringing your AI on the go. You can use it for quick tasks without needing a powerful desktop.

- Experimentation and Prototyping: The M3 Max is a great platform for experimenting with different models and parameters, without the need for expensive cloud infrastructure.

Workarounds:

- Optimize Quantization: Experiment with different quantization levels to find the optimal balance between speed and accuracy for your use case.

- Offload to Cloud: For more demanding tasks or larger models, consider offloading to a cloud-based service. This allows you to leverage the power of GPUs without the need for local hardware.

- Model Selection: Choose the right model for your specific task. Smaller models might be more suitable for certain use cases, while larger models might be necessary for more complex tasks.

Example: Let's say you want to use Llama3 70B to write a short story. The M3 Max can handle this, but you might find that the generation speed isn't as fast as you'd like. You could use a quantized version such as Q4_K_M, which generates faster than higher-precision formats. Or you could offload the generation to a service like Google Colab, which offers access to more powerful GPUs.
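To judge whether local generation is fast enough for a task like this, you can estimate the wait from the benchmark rates. The sketch below uses the Llama3 70B figures for the M3 Max from the table earlier in this article; the prompt and story lengths are hypothetical, and real runs will vary with context length and system load.

```python
def estimate_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimated wall-clock time: prompt processing plus token-by-token generation."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama3 70B on the M3 Max, from the benchmark table earlier in the article.
PROCESSING_TPS = 62.88
GENERATION_TPS = 7.53

# Hypothetical workload: a 200-token prompt and an 800-token story.
seconds = estimate_seconds(200, 800, PROCESSING_TPS, GENERATION_TPS)
print(f"Estimated time: {seconds:.0f} s")
```

With these numbers the story takes a little under two minutes, almost all of it spent in generation rather than prompt processing.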

FAQ

Q: What is quantization and why is it important for LLMs?

- A: Quantization is like compressing an LLM. It reduces the size of the model by using fewer bits to represent each weight. This makes the model faster to load and run, but can lead to a slight decrease in accuracy.

Q: How do I choose the right quantization for my needs?

- A: It depends on the specific LLM, your hardware, and the task you want to perform. If you need the fastest generation and the smallest memory footprint, choose a more aggressive quantization (like Q4_K_M or Q4_0). If accuracy is paramount and you have the memory for it, choose a higher-precision format (like Q8_0 or F16).
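One way to turn this advice into a rule of thumb is to pick the highest-precision format whose weights fit in the memory you can spare. The helper below is a hypothetical sketch: the function name is invented for illustration, the bits-per-weight figures are approximations, and the budget should leave headroom for the KV cache and the rest of the system.

```python
# Approximate bits per weight, ordered from highest to lowest precision.
# Values are assumptions for illustration; actual files vary.
FORMATS = [("F16", 16.0), ("Q8_0", 8.5), ("Q4_K_M", 4.85), ("Q4_0", 4.5)]

def pick_format(n_params, budget_gb):
    """Return the highest-precision format whose weights fit in budget_gb, or None."""
    for name, bpw in FORMATS:
        size_gb = n_params * bpw / 8 / 1e9
        if size_gb <= budget_gb:
            return name
    return None

print(pick_format(7e9, 32))    # 7B model, 32 GB budget
print(pick_format(70e9, 64))   # 70B model, 64 GB budget
print(pick_format(70e9, 16))   # 70B model, 16 GB budget
```

A result of `None` means no listed format fits, in which case a smaller model is the realistic option.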

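Q: Can I run Llama3 70B on a lower-end Apple device like an M1 MacBook Air?

- A: Not in any practical sense. Even at Q4_K_M, the weights of a 70B model take roughly 40 GB, while the M1 MacBook Air tops out at 16 GB of unified memory. On that class of machine you will need to compromise on model size and quantization level, for example by running a quantized 7B model instead.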

Q: What are some other ways to optimize the performance of LLMs on the M3 Max?

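- A: Make sure inference runs on the GPU rather than the CPU, keep the context length no larger than you need, and use caching so that a prompt prefix shared by many requests is not reprocessed each time. Model parallelism, which splits a model across multiple devices, mostly helps on multi-GPU systems rather than on a single M3 Max.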

Keywords

Llama3 70B, Apple M3 Max, LLMs, Large Language Models, Local Inference, Token Generation Speed, Performance, Quantization, F16, Q4_K_M, GPU, M1 Max, RTX 3090, RTX 4090, Use Cases, Practical Recommendations, Workarounds, Model Selection, Optimization, Cloud Computing, Google Colab, Developer, Geek, AI

[1] Source: https://github.com/ggerganov/llama.cpp/discussions/4167

[2] Source: https://github.com/XiongjieDai/GPU-Benchmarks-on-LLM-Inference