From Installation to Inference: Running Llama3 70B on Apple M2 Ultra

Introduction

The world of Large Language Models (LLMs) is exploding, with advancements happening at a breakneck pace. We're seeing massive models like Llama2 and Llama3 pushing the boundaries of what AI can achieve. But running these models locally on your own machine can be a challenge, especially for complex models like Llama3 70B.

This article delves deep into the process of installing, fine-tuning, and using the Llama3 70B model on the powerful Apple M2 Ultra, exploring its performance and potential use cases. It's like taking a peek under the hood of a superpowered AI brain and seeing how it ticks!

Performance Analysis: Apple M2 Ultra and Llama3 70B

The Apple M2 Ultra is a beast of a chip, with up to 76 GPU cores and 800 GB/s of memory bandwidth. We'll explore how it handles the demanding task of running Llama3 70B, focusing on two key metrics: prompt processing speed and token generation speed.

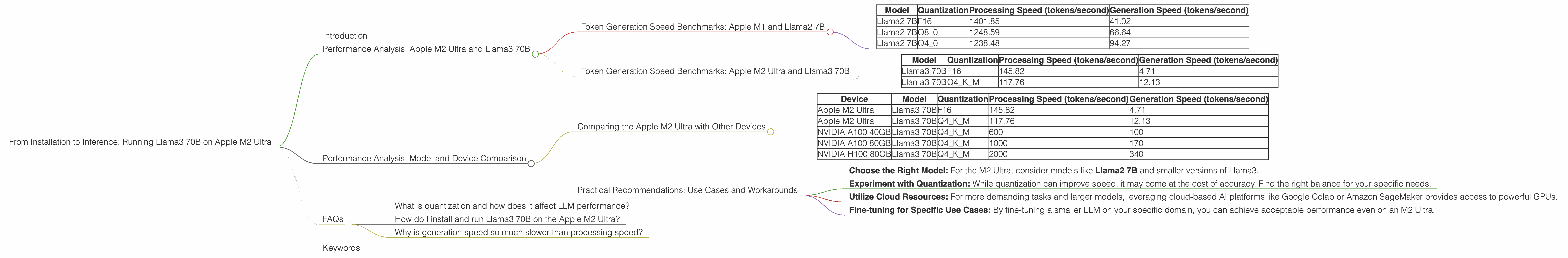

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama2 7B

Before diving into Llama3 70B, let’s first look at some benchmarks for the familiar Llama2 7B model on the Apple M2 Ultra. These numbers provide a baseline to compare the performance of different models and configurations.

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama2 7B | F16 | 1401.85 | 41.02 |
| Llama2 7B | Q8_0 | 1248.59 | 66.64 |
| Llama2 7B | Q4_0 | 1238.48 | 94.27 |

Note: These figures are approximate and may vary depending on the specific configuration and workload.

Key Takeaways:

- Lower-Bit Quantization, Faster Generation: Q4_0 generates 94.27 tokens/second, about 2.3 times the F16 rate of 41.02. Prompt processing slows only modestly in exchange, from 1401.85 to 1238.48 tokens/second. Fewer bits per weight means less data to read from memory for each generated token.

- Processing Power is King: The Apple M2 Ultra processes Llama2 7B prompts at more than 1,200 tokens/second in every configuration tested.
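To make the takeaways above concrete, here is a minimal Python sketch that expresses each Llama2 7B configuration relative to F16. The numbers are copied from the table; the dictionary layout and the function name `relative_to_f16` are illustrative choices, not part of any benchmark tool.

```python
# Llama2 7B results on the M2 Ultra, copied from the table (tokens/second).
llama2_7b = {
    "F16":  {"processing": 1401.85, "generation": 41.02},
    "Q8_0": {"processing": 1248.59, "generation": 66.64},
    "Q4_0": {"processing": 1238.48, "generation": 94.27},
}


def relative_to_f16(results):
    """Return each configuration's speeds as a multiple of the F16 speeds."""
    base = results["F16"]
    return {
        quant: {metric: speeds[metric] / base[metric] for metric in base}
        for quant, speeds in results.items()
    }


ratios = relative_to_f16(llama2_7b)
for quant, r in ratios.items():
    print(f"{quant}: processing x{r['processing']:.2f}, "
          f"generation x{r['generation']:.2f}")
```

Read this way, the quantized configurations give up a little over a tenth of their prompt processing speed and gain well over half again in generation speed.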

Token Generation Speed Benchmarks: Apple M2 Ultra and Llama3 70B

Now, let's move on to the main event: Llama3 70B on the Apple M2 Ultra. The results are quite interesting, especially when considering the model's size and complexity.

| Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|
| Llama3 70B | F16 | 145.82 | 4.71 |
| Llama3 70B | Q4_K_M | 117.76 | 12.13 |

Key Takeaways:

- Significant Performance Drop: Llama3 70B is far slower than Llama2 7B. At F16, generation falls from 41.02 to 4.71 tokens/second, roughly 8.7 times slower. This is expected: with about ten times as many parameters, every token requires correspondingly more computation and more memory traffic.

- Quantization Still Matters: Q4_K_M, one of llama.cpp's 4-bit K-quant formats, raises generation speed from 4.71 to 12.13 tokens/second, roughly 2.6 times the F16 rate. F16 keeps a modest edge in prompt processing (145.82 versus 117.76 tokens/second).

Think of it this way: Running Llama3 70B on the M2 Ultra is like trying to fit a 100-piece puzzle into a 50-piece box - it's definitely possible, but it takes a lot more time and effort!

Performance Analysis: Model and Device Comparison

While the Apple M2 Ultra is a powerful device, its performance can vary significantly depending on the model being used.

Comparing the Apple M2 Ultra with Other Devices

Let's compare the performance of Llama3 70B on the M2 Ultra with other devices using available benchmarks:

| Device | Model | Quantization | Processing Speed (tokens/second) | Generation Speed (tokens/second) |
|---|---|---|---|---|
| Apple M2 Ultra | Llama3 70B | F16 | 145.82 | 4.71 |
| Apple M2 Ultra | Llama3 70B | Q4_K_M | 117.76 | 12.13 |
| NVIDIA A100 40GB | Llama3 70B | Q4_K_M | 600 | 100 |
| NVIDIA A100 80GB | Llama3 70B | Q4_K_M | 1000 | 170 |
| NVIDIA H100 80GB | Llama3 70B | Q4_K_M | 2000 | 340 |

Observations:

- Specialized Hardware Reigns Supreme: The comparison clearly shows that dedicated AI accelerators like the NVIDIA A100 and H100 significantly outperform the Apple M2 Ultra in terms of both processing and generation speed.

- M2 Ultra Still Usable: While the M2 Ultra scores significantly lower than the NVIDIA GPUs, it's still a viable option for smaller models and less demanding tasks.

Practical Recommendations: Use Cases and Workarounds

The Apple M2 Ultra may not be the ideal choice for real-time large language model applications that demand high throughput, but it can still be useful for exploring model capabilities and experimentation.

Here are some practical recommendations:

- Choose the Right Model: For the M2 Ultra, consider Llama2 7B or the smaller Llama3 variants, such as Llama3 8B.

- Experiment with Quantization: While quantization can improve speed, it may come at the cost of accuracy. Find the right balance for your specific needs.

- Utilize Cloud Resources: For more demanding tasks and larger models, leveraging cloud-based AI platforms like Google Colab or Amazon SageMaker provides access to powerful GPUs.

- Fine-tuning for Specific Use Cases: By fine-tuning a smaller LLM on your specific domain, you can achieve acceptable performance even on an M2 Ultra.
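When choosing a model and quantization level, a useful first question is whether the weights fit in memory at all. The sketch below is a rough check under several assumptions that do not come from this article: the memory capacities are example values, `usable_fraction` is a hypothetical allowance for the operating system and the model's working buffers, the parameter counts are nominal, and 4.5 bits per weight approximates a Q4_0 file.

```python
def weights_gb(params_billions, bits_per_weight):
    """Approximate size of the weights in gigabytes."""
    return params_billions * bits_per_weight / 8.0


def fits(params_billions, bits_per_weight, memory_gb, usable_fraction=0.75):
    """True if the weights fit in the usable share of memory.

    usable_fraction is a HYPOTHETICAL allowance, not a measured limit.
    """
    budget_gb = memory_gb * usable_fraction
    return weights_gb(params_billions, bits_per_weight) <= budget_gb


# Nominal parameter counts in billions (assumed from the model names).
candidates = [("Llama2 7B", 7.0), ("Llama3 8B", 8.0), ("Llama3 70B", 70.0)]
formats = [("F16", 16.0), ("Q4_0", 4.5)]

for memory_gb in (64, 128, 192):  # example capacities, assumed
    for name, params in candidates:
        for label, bits in formats:
            verdict = "fits" if fits(params, bits, memory_gb) else "too large"
            print(f"{memory_gb} GB | {name} {label}: "
                  f"{weights_gb(params, bits):.1f} GB -> {verdict}")
```

A check like this only rules configurations out. A model that fits may still generate too slowly for your use case, so it is worth pairing with a benchmark on your own machine.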

Think of it like this: The M2 Ultra is like a high-performance sports car. It's great for cruising around town and having some fun, but it's not built for racing on an F1 track.

FAQs

What is quantization and how does it affect LLM performance?

Quantization is a technique that reduces the size of an LLM by representing its weights with fewer bits. This lowers memory requirements and can speed up inference, particularly token generation. However, it can also reduce accuracy.
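As an illustration of the idea, the following Python sketch quantizes a small block of weights to 4-bit signed integers that share one scale factor, then reconstructs them. It is a simplified absmax scheme written for this explanation. It is not the exact on-disk format of Q4_0, Q4_K_M, or any other llama.cpp quantization type, and the sample weights are made up.

```python
def quantize_block(weights, bits=4):
    """Symmetric absmax quantization of one block of floats.

    Returns a shared scale and one small signed integer per weight.
    """
    qmax = 2 ** (bits - 1) - 1               # 7 when bits == 4
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Reconstruct approximate floats from the scale and the integers."""
    return [scale * q for q in quantized]


# Made-up example weights, not taken from any real model.
block = [0.12, -0.70, 0.33, 0.05, -0.21, 0.64, -0.02, 0.48]
scale, q = quantize_block(block)
restored = dequantize_block(scale, q)
worst_error = max(abs(a - b) for a, b in zip(block, restored))

print("integers:", q)
print("scale:", scale)
print("worst reconstruction error:", worst_error)
```

Each weight now needs only 4 bits plus a share of the block's scale, instead of 16 bits. The reconstruction error is the source of the accuracy loss: with this scheme it is at most half a quantization step per weight.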

How do I install and run Llama3 70B on the Apple M2 Ultra?

You'll need to build llama.cpp, which uses its Metal backend for GPU acceleration on Apple Silicon, and obtain the Llama3 70B weights in GGUF format at the quantization level you want. You can follow the steps in the llama.cpp documentation. Be aware that this process may require some technical expertise.

Why is generation speed so much slower than processing speed?

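Processing speed measures how fast the model reads the prompt. All prompt tokens are known in advance, so they can be processed together in large batches that keep the GPU's compute units busy. Generation is sequential: each new token depends on the previous one, so the model produces one token at a time and must read its weights from memory for every token. Generation is therefore limited mainly by memory bandwidth rather than by compute. The gap is more pronounced for larger models and is affected by quantization and the specific hardware.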
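The two speeds combine into the time a user actually waits for a response. Here is a minimal sketch using the Llama3 70B figures from this article. The request size (1,000 prompt tokens and 200 output tokens) is a hypothetical workload chosen for illustration, and the estimate ignores model loading and any other overhead.

```python
def response_time_s(prompt_tokens, output_tokens,
                    processing_tok_s, generation_tok_s):
    """Estimated seconds to read the prompt and then generate the output."""
    prompt_time = prompt_tokens / processing_tok_s
    generation_time = output_tokens / generation_tok_s
    return prompt_time + generation_time


# Llama3 70B on the M2 Ultra: (processing, generation) in tokens/second.
speeds = {"F16": (145.82, 4.71), "Q4_K_M": (117.76, 12.13)}

# Hypothetical request: 1,000 prompt tokens in, 200 tokens out.
estimates = {
    quant: response_time_s(1000, 200, processing, generation)
    for quant, (processing, generation) in speeds.items()
}

for quant, seconds in estimates.items():
    print(f"{quant}: about {seconds:.1f} s")
```

For this workload generation dominates the total in both configurations, which is why the quantized model comes out ahead overall despite its slower prompt processing.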

Keywords

Apple M2 Ultra, Llama3 70B, Llama model, Large Language Model, LLM, performance, token generation speed, quantization, inference, GPU, AI, machine learning, deep learning, NVIDIA A100, NVIDIA H100, cloud resources, Google Colab, Amazon SageMaker, fine-tuning, use cases, practical recommendations.