From Installation to Inference: Running Llama3 70B on Apple M1

Introduction

The world of large language models (LLMs) is exploding, and the ability to run these models locally on your own hardware is becoming increasingly desirable. The Apple M1, with its powerful GPU and efficient architecture, is a popular choice for this endeavor. But can it handle the behemoth that is Llama3 70B? Let's dive into the fascinating world of local LLM performance, exploring the challenges and triumphs of running Llama3 70B on an Apple M1.

Imagine having a powerful AI assistant at your fingertips, capable of generating creative text, translating languages, and answering your questions in a comprehensive and informative way. With local LLMs, this is becoming a reality. This article looks at what it takes to run Llama3 70B on an Apple M1: the benchmark data that is available, practical use cases, and tips for efficient execution.

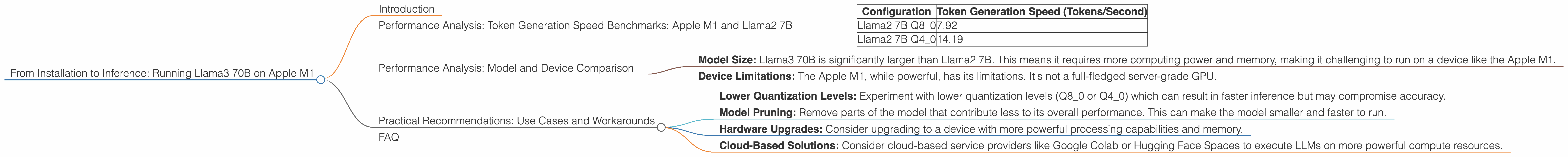

Performance Analysis: Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

The speed at which a model can generate tokens is a crucial metric for user experience. Our goal is to see how the Apple M1 fares with Llama3 70B, but unfortunately, we lack concrete data for this specific model and device combination. However, we can draw insights from the performance numbers we have for Llama2 7B, a smaller but still sizable model.

Table 1. Token Generation Speed Benchmarks for Llama2 7B on Apple M1

| Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama2 7B Q8_0 | 7.92 |
| Llama2 7B Q4_0 | 14.19 |

Observations:

- Llama2 7B can run on an Apple M1 with varying levels of performance, depending on the quantization level.

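- The more aggressive the quantization (Q4_0 versus Q8_0), the faster the model generates tokens: Q4_0 reaches 14.19 tokens/second against 7.92 for Q8_0, roughly 1.8 times faster. Quantization stores each weight in fewer bits, so the model is smaller and less data has to be read from memory for every generated token.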
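To make the relationship between model size and speed concrete, the short sketch below compares the throughput ratio from Table 1 with the storage ratio of the two formats. The bits-per-weight figures are an assumption not stated in this article: they presume the llama.cpp-style block layout, in which every block of 32 weights also carries one 16-bit scale. The helper names are illustrative only.

```python
# Throughput copied from Table 1 (tokens/second).
TABLE_1_TPS = {"Q8_0": 7.92, "Q4_0": 14.19}

# Assumption: effective bits per weight for llama.cpp-style block formats,
# where every block of 32 weights also stores one 16-bit scale.
BITS_PER_WEIGHT = {"Q8_0": 8.5, "Q4_0": 4.5}


def speedup(fast: str, slow: str) -> float:
    """How many times faster `fast` generates tokens than `slow`."""
    return TABLE_1_TPS[fast] / TABLE_1_TPS[slow]


def size_ratio(big: str, small: str) -> float:
    """How many times more bits per weight `big` stores than `small`."""
    return BITS_PER_WEIGHT[big] / BITS_PER_WEIGHT[small]


print(f"Q4_0 speedup over Q8_0: {speedup('Q4_0', 'Q8_0'):.2f}x")
print(f"Q8_0 size relative to Q4_0: {size_ratio('Q8_0', 'Q4_0'):.2f}x")
```

Under these assumptions the two ratios come out close to each other, which is consistent with generation speed tracking the amount of data read per token. Two data points cannot prove that, so treat it as a rule of thumb.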

Analogies:

Think of how you handle information yourself. A summary of a book is quicker to recall and use than the full text, at the cost of some detail. Quantization does something similar to the "knowledge" stored in a large language model: it keeps a compact approximation of each weight, which makes the model smaller and faster but slightly less precise.
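Setting the analogy aside, the idea can be shown on a handful of numbers. The sketch below is a simplified symmetric block quantizer, not the exact Q8_0 or Q4_0 layout: it stores one shared scale per block plus small integers, then restores the values and measures how much precision was lost. The function names and sample weights are made up for illustration.

```python
def quantize_block(values, bits):
    """Symmetric quantization of one block: a shared scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits, 7 for 4 bits
    amax = max(abs(v) for v in values)
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, quantized


def dequantize_block(scale, quantized):
    """Restore approximate floating-point values from the integers."""
    return [scale * q for q in quantized]


def max_error(values, bits):
    """Largest absolute difference between original and restored values."""
    scale, quantized = quantize_block(values, bits)
    restored = dequantize_block(scale, quantized)
    return max(abs(a - b) for a, b in zip(values, restored))


# Hypothetical weights, chosen only to illustrate the effect.
weights = [0.12, -0.5, 0.33, 0.9, -0.75, 0.01, 0.48, -0.2]
print("max error at 8 bits:", max_error(weights, 8))
print("max error at 4 bits:", max_error(weights, 4))
```

The 4-bit version needs about half the storage of the 8-bit one but restores the weights less accurately, which is the trade-off behind the speed difference in Table 1.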

Performance Analysis: Model and Device Comparison

Even though we don't have data for Llama3 70B on the Apple M1, we can glean some insights by comparing it to other models and devices.

- Model Size: Llama3 70B has roughly ten times as many parameters as Llama2 7B. It needs correspondingly more memory and compute, which makes it challenging to run on a device like the Apple M1.

- Device Limitations: The Apple M1, while powerful for a laptop-class chip, has its limits. Its GPU shares a limited pool of unified memory with the CPU, and it is not a server-grade GPU.

Extrapolating:

Based on the data we do have, it's reasonable to assume that running Llama3 70B on an Apple M1 would be challenging, potentially resulting in slow token generation speeds or even instability.
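A back-of-envelope calculation shows why. The sketch below rests on assumptions that do not come from measurements in this article: nominal parameter counts of 7 billion and 70 billion, 4.5 bits per weight for Q4_0 (llama.cpp-style blocks), a 16 GB memory configuration, and token generation that slows down in proportion to model size. It is an estimate, not a benchmark.

```python
def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def scaled_tps(measured_tps: float, measured_params: float, target_params: float) -> float:
    """Scale a measured speed to another model size.

    Assumption: generation is limited by reading the weights once per token,
    so speed is inversely proportional to parameter count at equal precision.
    """
    return measured_tps * measured_params / target_params


LLAMA2_7B_PARAMS = 7e9     # nominal, assumed
LLAMA3_70B_PARAMS = 70e9   # nominal, assumed
Q4_0_BITS = 4.5            # assumed llama.cpp-style block format
ASSUMED_MEMORY_GB = 16.0   # assumed M1 configuration

size = weights_gb(LLAMA3_70B_PARAMS, Q4_0_BITS)
estimate = scaled_tps(14.19, LLAMA2_7B_PARAMS, LLAMA3_70B_PARAMS)

print(f"Llama3 70B Q4_0 weights: about {size:.1f} GB")
print(f"Fits in {ASSUMED_MEMORY_GB:.0f} GB: {size <= ASSUMED_MEMORY_GB}")
print(f"Optimistic speed if it did fit: about {estimate:.1f} tokens/second")
```

Under these assumptions the weights alone are far larger than the assumed memory, so the scaled speed is best read as an upper bound. Once the model spills out of memory, real throughput would likely be much lower.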

Practical Recommendations: Use Cases and Workarounds

Here are some considerations for running LLMs like Llama3 70B on devices with limited resources:

- More Aggressive Quantization: Experiment with lower-precision formats (Q4_0 rather than Q8_0), which shrink the model and speed up inference but may compromise accuracy.

- Model Pruning: Remove parts of the model that contribute less to its overall performance. This can make the model smaller and faster to run.

- Hardware Upgrades: Consider upgrading to a device with more powerful processing capabilities and memory.

- Cloud-Based Solutions: Consider cloud-based service providers like Google Colab or Hugging Face Spaces to execute LLMs on more powerful compute resources.
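One way to turn these considerations into a quick check is to ask which precision, if any, fits a given memory budget. The helper below is a hypothetical sketch: the bits-per-weight table assumes llama.cpp-style formats, and the 2 GB headroom for the operating system, context cache, and other overhead is an arbitrary placeholder you should adjust.

```python
# Assumed effective bits per weight (llama.cpp-style formats).
QUANT_BITS = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weights_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def best_quantization(n_params: float, memory_gb: float, headroom_gb: float = 2.0):
    """Return the highest-precision format whose weights fit, or None.

    `headroom_gb` is a placeholder for everything that is not model weights.
    """
    by_precision = sorted(QUANT_BITS.items(), key=lambda item: item[1], reverse=True)
    for name, bits in by_precision:
        if weights_gb(n_params, bits) + headroom_gb <= memory_gb:
            return name
    return None


for label, params, memory in [
    ("7B model, 8 GB", 7e9, 8.0),
    ("70B model, 16 GB", 70e9, 16.0),
    ("70B model, 64 GB", 70e9, 64.0),
]:
    print(f"{label}: {best_quantization(params, memory)}")
```

A result of `None` means no listed format fits, which is the point at which a hardware upgrade or a cloud-based option becomes the practical choice.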

FAQ

Q: Can I run Llama3 70B on an Apple M1?

A: It's possible, but it might be very slow and require tweaking and optimization.

Q: What are the best ways to optimize LLM inference speed?

A: Quantization, model pruning, and hardware upgrades are all effective strategies.

Q: What are the advantages of running LLMs locally?

A: Local execution avoids network round trips, keeps your data on your own device, and works without an internet connection.

Q: How can I learn more about LLMs and local inference?

A: Explore resources like Hugging Face, Papers With Code, and the research papers published by the LLM developers.
