From Installation to Inference: Running Llama3 70B on Apple M1 Max

Introduction

Have you ever dreamt of running a cutting-edge large language model (LLM) on your personal computer? Imagine the possibilities: generating creative content, analyzing your code, or even having thoughtful conversations. While powerful LLMs like GPT-3 and PaLM have been mainly accessible through cloud-based APIs, the recent rise of local LLMs has brought the power of these systems closer to home.

In this article, we take a deep dive into running the Llama3 70B model on a popular device, the Apple M1 Max, exploring its limitations, performance, and potential use cases. We'll unravel the complexities of local LLM execution, covering everything from installation and configuration to benchmarking and practical considerations. This exploration is for developers and geeks eager to push the boundaries of local AI capabilities.

Performance Analysis: Token Generation Speed Benchmarks

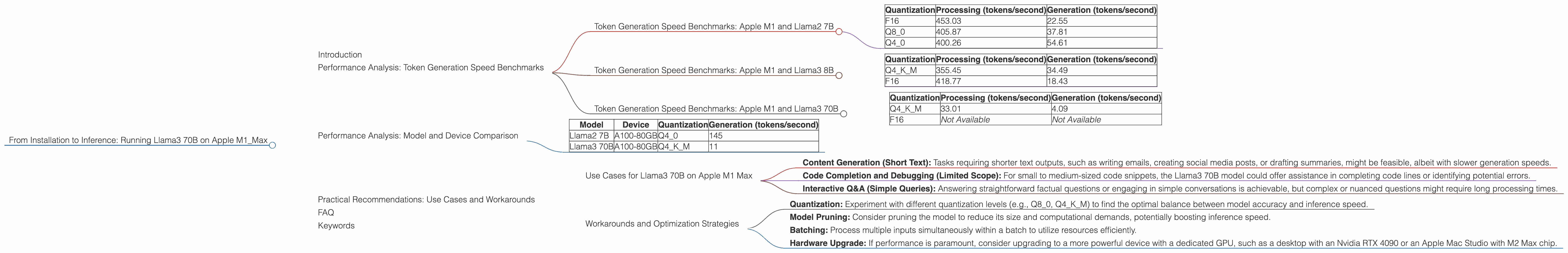

Token Generation Speed Benchmarks: Apple M1 Max and Llama2 7B

Token generation speed is a crucial metric that reflects how quickly an LLM produces text. It is measured in tokens per second: the higher the number, the faster the output appears. The tables below report two figures: Processing, the rate at which the model reads the input prompt, and Generation, the rate at which it produces new tokens.
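As a rough illustration of how a tokens-per-second figure can be obtained, the sketch below times a single generation call and divides the number of tokens by the elapsed time. The `fake_generate` function is a hypothetical stand-in for a real model call, not part of any library; the numbers in this article come from a separate benchmark, not from this snippet.

```python
import time
from typing import Callable, List


def tokens_per_second(generate: Callable[[int], List[str]], n_tokens: int) -> float:
    """Time one call to `generate` and return tokens produced per second."""
    start = time.perf_counter()
    tokens = generate(n_tokens)
    elapsed = time.perf_counter() - start
    return len(tokens) / elapsed


def fake_generate(n_tokens: int) -> List[str]:
    """Hypothetical stand-in for a model: emits one token roughly every 1 ms."""
    out = []
    for i in range(n_tokens):
        time.sleep(0.001)  # simulate the per-token decoding cost
        out.append(f"tok{i}")
    return out


rate = tokens_per_second(fake_generate, 50)
print(f"{rate:.1f} tokens/second")
```

In practice you would replace `fake_generate` with a call into your inference runtime and average over several runs, since a single measurement can be noisy.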

Let's start with the Llama2 7B model on the Apple M1 Max, showcasing the effects of different quantization levels:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| F16 | 453.03 | 22.55 |
| Q8_0 | 405.87 | 37.81 |
| Q4_0 | 400.26 | 54.61 |

Observations:

- F16 (half-precision floating point) has the highest prompt processing speed (453.03 tokens/second) but the lowest generation speed (22.55 tokens/second). A likely reason is that generating each token requires reading all of the model's weights, and F16 weights are the largest of the three formats.

- Q8_0 (8-bit quantization) sits in the middle: processing is about 10% slower than F16, while generation is about 1.7 times faster.

- Q4_0 (4-bit quantization) generates fastest, at about 2.4 times the F16 rate, while processing is about 12% slower than F16.

Key Takeaway: F16 leads in prompt processing but trails in generation. Q8_0 is a middle ground, and Q4_0 is the fastest option for generation if its lower precision is acceptable.
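To make the trade-off concrete, the following sketch expresses each quantization level's throughput as a multiple of the F16 baseline. The figures are copied from the Llama2 7B table above; the helper name `relative_to` is just an illustrative choice.

```python
# Throughput in tokens/second, copied from the Llama2 7B table.
processing_tps = {"F16": 453.03, "Q8_0": 405.87, "Q4_0": 400.26}
generation_tps = {"F16": 22.55, "Q8_0": 37.81, "Q4_0": 54.61}


def relative_to(baseline: str, table: dict) -> dict:
    """Return each entry's throughput as a multiple of the baseline entry."""
    base = table[baseline]
    return {name: round(value / base, 2) for name, value in table.items()}


proc_ratio = relative_to("F16", processing_tps)
gen_ratio = relative_to("F16", generation_tps)

for name in generation_tps:
    print(f"{name}: processing x{proc_ratio[name]}, generation x{gen_ratio[name]}")
```

Read this way, dropping from F16 to Q4_0 costs a little over a tenth of the processing speed while more than doubling generation speed.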

Token Generation Speed Benchmarks: Apple M1 Max and Llama3 8B

Now let's shift our focus to the Llama3 8B model. Although much smaller than Llama3 70B, it provides valuable insight into the impact of different quantization levels.

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q4_K_M | 355.45 | 34.49 |
| F16 | 418.77 | 18.43 |

Observations:

- Q4_K_M (a 4-bit "k-quant" format from llama.cpp, medium variant) generates at 34.49 tokens/second, about 1.9 times faster than F16 (18.43 tokens/second).

- F16 shows higher processing speed (418.77 versus 355.45 tokens/second), but again it lags behind Q4_K_M in generation.

Key Takeaway: Q4_K_M nearly doubles generation speed relative to F16 on Llama3 8B, at the cost of about 15% lower prompt processing speed.

Token Generation Speed Benchmarks: Apple M1 Max and Llama3 70B

Finally, we arrive at the main focus of this article – the Llama3 70B model.

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q4_K_M | 33.01 | 4.09 |
| F16 | Not Available | Not Available |

Observations:

- On the smaller models, 4-bit quantization (Q4_0 for Llama2 7B, Q4_K_M for Llama3 8B) gives the fastest generation while keeping prompt processing within about 15% of F16.

- Running Llama3 70B on the Apple M1 Max pushes the device's limits. At the same Q4_K_M quantization, it is roughly 8 times slower than Llama3 8B in generation (4.09 versus 34.49 tokens/second) and roughly 11 times slower in processing (33.01 versus 355.45 tokens/second).

- F16 data is unavailable for Llama3 70B. At 2 bytes per parameter, 70 billion parameters need roughly 140 GB for the weights alone, which exceeds the 64 GB maximum unified memory of the M1 Max, so an F16 run is most likely not possible on this device.

Key Takeaway: The Apple M1 Max, while powerful, might be insufficient to handle the computational demands of the Llama3 70B model efficiently, particularly in the generation phase.
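A back-of-the-envelope estimate helps explain why some configurations are out of reach. The sketch below multiplies a nominal parameter count by the bits stored per weight. The parameter counts are rounded, the Q4_K_M figure is an approximate average that I am assuming for illustration, and `ASSUMED_MEMORY_GB` reflects the largest M1 Max configuration (64 GB). The estimate covers weights only and ignores the KV cache and the operating system's own memory use.

```python
# Nominal (rounded) parameter counts.
PARAMS = {"Llama3 8B": 8e9, "Llama3 70B": 70e9}

# Bits stored per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,     # 32 8-bit weights plus one 16-bit scale per block
    "Q4_0": 4.5,     # 32 4-bit weights plus one 16-bit scale per block
    "Q4_K_M": 4.85,  # assumption: approximate average for this mixed format
}

ASSUMED_MEMORY_GB = 64  # assumption: largest M1 Max unified memory option


def weights_gb(model: str, quant: str) -> float:
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return PARAMS[model] * BITS_PER_WEIGHT[quant] / 8 / 1e9


def fits_in_memory(model: str, quant: str, memory_gb: float = ASSUMED_MEMORY_GB) -> bool:
    """True if the weights alone are smaller than the available memory."""
    return weights_gb(model, quant) < memory_gb


for quant in BITS_PER_WEIGHT:
    size = weights_gb("Llama3 70B", quant)
    print(f"Llama3 70B {quant}: ~{size:.1f} GB, fits: {fits_in_memory('Llama3 70B', quant)}")
```

Under these assumptions only the 4-bit variants of Llama3 70B fit, which is consistent with the table listing a result for Q4_K_M but none for F16.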

Performance Analysis: Model and Device Comparison

To understand the challenges of running Llama3 70B on the Apple M1 Max, let's compare its performance with other devices:

| Model | Device | Quantization | Generation (tokens/second) |
|---|---|---|---|
| Llama2 7B | A100-80GB | Q4_0 | 145 |
| Llama3 70B | A100-80GB | Q4_K_M | 11 |

Observations:

- The A100-80GB is a data-center GPU with far more compute and memory bandwidth than the Apple M1 Max, which makes it a useful reference point.

- With Llama3 70B at Q4_K_M, the A100-80GB generates 11 tokens/second versus 4.09 on the Apple M1 Max, approximately 2.7 times faster.

- With Llama2 7B at Q4_0, the gap is similar: 145 versus 54.61 tokens/second, also about 2.7 times faster.

Key Takeaway: This comparison highlights the difference in processing power between a high-performance GPU, like the A100-80GB, and the Apple M1 Max. The Apple M1 Max, while impressive for its size and price range, is not well suited to running the Llama3 70B model at interactive speeds.
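The size of the gap can be checked directly from the figures in this article. The sketch below divides the A100-80GB generation speeds by the corresponding Apple M1 Max results from the earlier tables; the dictionary layout and variable names are only for illustration.

```python
# Generation speed in tokens/second, keyed by (model, quantization).
a100_tps = {("Llama2 7B", "Q4_0"): 145.0, ("Llama3 70B", "Q4_K_M"): 11.0}
m1_max_tps = {("Llama2 7B", "Q4_0"): 54.61, ("Llama3 70B", "Q4_K_M"): 4.09}

# How many times faster the A100-80GB is for each shared configuration.
speedup = {
    key: round(a100_tps[key] / m1_max_tps[key], 2)
    for key in a100_tps
}

for (model, quant), factor in speedup.items():
    print(f"{model} {quant}: A100-80GB is {factor}x faster than M1 Max")
```

The ratio comes out close to 2.7 for both models, so on these numbers the relative gap between the two devices does not depend much on model size.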

Practical Recommendations: Use Cases and Workarounds

Use Cases for Llama3 70B on Apple M1 Max

Despite its performance limitations, the Llama3 70B model still holds potential value for specific use cases on the Apple M1 Max:

- Content Generation (Short Text): Tasks requiring shorter text outputs, such as writing emails, creating social media posts, or drafting summaries, might be feasible, albeit with slower generation speeds.

- Code Completion and Debugging (Limited Scope): For small to medium-sized code snippets, the Llama3 70B model could offer assistance in completing code lines or identifying potential errors.

- Interactive Q&A (Simple Queries): Answering straightforward factual questions or engaging in simple conversations is achievable, but complex or nuanced questions might require long processing times.
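To judge whether a given task is tolerable at these speeds, it can help to estimate the wait time from the measured throughput. The sketch below uses the Llama3 70B Q4_K_M figures from the benchmark table; the token counts for each scenario are hypothetical values picked for illustration, not measurements.

```python
# Measured throughput for Llama3 70B Q4_K_M on the Apple M1 Max (tokens/second).
PROCESSING_TPS = 33.01
GENERATION_TPS = 4.09


def estimated_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Estimate latency: time to read the prompt plus time to generate the reply."""
    return prompt_tokens / PROCESSING_TPS + output_tokens / GENERATION_TPS


# Hypothetical (prompt tokens, output tokens) pairs, for illustration only.
scenarios = {
    "short email": (200, 150),
    "code snippet review": (800, 300),
    "simple Q&A": (50, 60),
}

for name, (prompt, output) in scenarios.items():
    print(f"{name}: ~{estimated_seconds(prompt, output):.0f} s")
```

Because generation is about eight times slower than prompt processing here, the length of the expected output dominates the wait, which is why short outputs are the most practical use cases.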

Workarounds and Optimization Strategies

- Quantization: Experiment with different quantization levels (e.g., Q8_0, Q4_K_M) to find the optimal balance between model accuracy and inference speed.

- Model Pruning: Consider pruning the model to reduce its size and computational demands, potentially boosting inference speed.

- Batching: Process multiple inputs simultaneously within a batch to utilize resources efficiently.

- Hardware Upgrade: If performance is paramount, consider upgrading to more powerful hardware, such as a desktop with a dedicated Nvidia RTX 4090 GPU or an Apple Mac Studio with an M2 Max chip.

FAQ

Q: What is an LLM?

A: An LLM, or Large Language Model, is a type of artificial intelligence model that excels in understanding and generating human-like text. These models are trained on massive amounts of data, enabling them to perform tasks like translation, writing, and summarization. Think of it as a super-powered language expert.

Q: What is quantization?

A: Quantization is a technique that reduces the numerical precision of the weights stored in an LLM. Imagine measuring ingredients with a coarser measuring cup: you lose some accuracy, but you save space and resources. This allows smaller model files and faster token generation, at the cost of a slight decrease in output quality.
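The idea can be shown with a minimal sketch of symmetric "absmax" quantization: a block of floating-point weights is stored as small integers plus one shared scale. This is a simplified illustration of the general principle and does not reproduce the exact formats behind the quantization levels benchmarked above; the sample weights are made up.

```python
def quantize_block(values, bits=8):
    """Map a block of floats to signed integers sharing one scale factor."""
    qmax = 2 ** (bits - 1) - 1            # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(v) for v in values)    # largest magnitude in the block
    scale = amax / qmax if amax > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


def max_abs_error(values, bits):
    """Largest round-trip error introduced by quantizing at the given width."""
    quantized, scale = quantize_block(values, bits)
    restored = dequantize_block(quantized, scale)
    return max(abs(a - b) for a, b in zip(values, restored))


weights = [0.12, -0.5, 0.33, 0.9, -0.07, 0.0]  # made-up example weights
print("8-bit max error:", max_abs_error(weights, 8))
print("4-bit max error:", max_abs_error(weights, 4))
```

Fewer bits mean a coarser grid of representable values, so the 4-bit round trip loses more precision than the 8-bit one while taking up about half the space.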

Q: Why is Llama3 70B so slow on the Apple M1 Max?

A: It comes down to the size of the model relative to the hardware. Llama3 70B has roughly nine times as many parameters as Llama3 8B, so every generated token requires far more computation and far more data to be read from memory. While the Apple M1 Max is a powerful chip, it's not designed to handle such a demanding model at high speeds.
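One common rule of thumb treats token generation as limited by memory bandwidth: if all weights must be read once per token, the generation rate cannot exceed bandwidth divided by model size. The sketch below applies that rule under stated assumptions: a memory bandwidth of 400 GB/s (Apple's published figure for the M1 Max), a nominal 70 billion parameters, and an approximate 4.85 bits per weight for Q4_K_M. It is a rough upper bound, not a prediction.

```python
ASSUMED_BANDWIDTH_GB_S = 400.0   # assumption: published M1 Max memory bandwidth
ASSUMED_BITS_PER_WEIGHT = 4.85   # assumption: approximate average for Q4_K_M
NOMINAL_PARAMS = 70e9            # rounded parameter count for Llama3 70B
MEASURED_TPS = 4.09              # generation speed from the benchmark table


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (10^9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


def bandwidth_bound_tps(bandwidth_gb_s: float, size_gb: float) -> float:
    """Upper bound on tokens/second if every token reads all weights once."""
    return bandwidth_gb_s / size_gb


size = model_size_gb(NOMINAL_PARAMS, ASSUMED_BITS_PER_WEIGHT)
bound = bandwidth_bound_tps(ASSUMED_BANDWIDTH_GB_S, size)
print(f"weights: ~{size:.1f} GB, bound: ~{bound:.1f} tokens/s, "
      f"measured: {MEASURED_TPS} tokens/s ({MEASURED_TPS / bound:.0%} of bound)")
```

Under these assumptions even a perfectly efficient implementation would stay in the single digits of tokens per second, so the measured result reflects the hardware's limits more than a software inefficiency.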

Q: Will running LLMs locally become more common in the future?

A: Absolutely! As technology advances, we'll see more powerful devices with better GPUs and CPUs, making it easier to run LLMs locally. The development of more efficient models and quantization techniques will also contribute to better performance on consumer devices.

Q: What are the limitations of running LLMs locally?

A: While running LLMs locally offers benefits like privacy and control, it also comes with limitations. You'll need powerful hardware, which can be expensive. Additionally, updating and maintaining models locally can be a challenge, as opposed to cloud-based solutions where updates are managed centrally.

Keywords

Apple M1 Max, Llama3 70B, Llama2 7B, Local LLM, Token Generation Speed, Quantization, F16, Q4_K_M, GPU, Inference, Performance, Use Cases, Optimization, Workarounds, Developers, Geeks, AI, Machine Learning.