From Installation to Inference: Running Llama2 7B on Apple M3

The world of large language models (LLMs) is booming, and with it comes the desire to run these powerful models locally on your own device. This brings the potential for faster response times, increased privacy, and a whole new level of personalized artificial intelligence. But the reality is that these LLMs require significant processing power, making it challenging to run them smoothly on anything but high-end hardware.

This article focuses on the Apple M3 processor, a recent generation of Apple's custom silicon, and its performance with the Llama2 7B model. We'll delve into the crucial aspects of running LLMs locally, from installation to inference, exploring the potential for Apple M3 to become a powerful tool for local LLM deployment.

Token Generation Speed Benchmarks: Apple M3 and Llama2 7B

One of the key metrics for evaluating LLM performance is token throughput, measured in tokens per second. Two figures matter: how fast the model processes the input prompt, and how fast it generates new tokens. Together they determine how responsive the model feels in use. With the Llama2 7B model, we can explore how the M3 chip measures up on both.

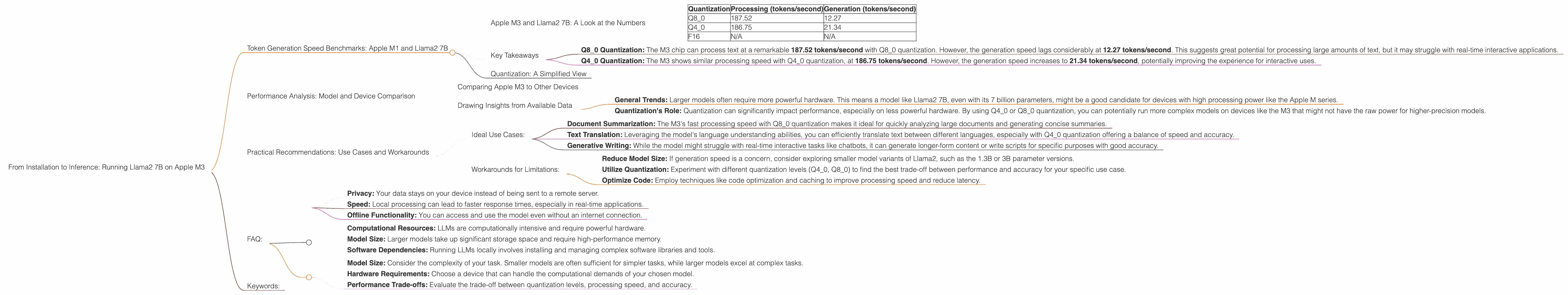

Apple M3 and Llama2 7B: A Look at the Numbers

Here's a breakdown of the token generation speed benchmarks for Llama2 7B on the M3, based on various quantization levels:

| Quantization | Processing (tokens/second) | Generation (tokens/second) |
|---|---|---|
| Q8_0 | 187.52 | 12.27 |
| Q4_0 | 186.75 | 21.34 |
| F16 | N/A | N/A |

Note: F16 is the unquantized 16-bit floating-point format. Data for Llama2 7B in F16 on the M3 is not available.

Key Takeaways

- Q8_0 Quantization: The M3 chip processes prompt text at 187.52 tokens/second with Q8_0 quantization. However, generation is far slower at 12.27 tokens/second. This suggests the M3 handles large inputs well, but it may struggle with real-time interactive applications at this quantization level.

- Q4_0 Quantization: The M3 shows nearly identical processing speed with Q4_0 quantization, at 186.75 tokens/second. Generation speed, however, rises to 21.34 tokens/second, roughly 74% faster than Q8_0, which should improve the experience for interactive uses.
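To make these numbers more concrete, here is a minimal sketch that turns the table's throughput figures into a rough wall-clock estimate. The function name `estimate_seconds` and the 1,000-token prompt with a 200-token reply are illustrative assumptions, not part of the benchmark, and the estimate ignores model load time and other overhead.

```python
# Throughput figures copied from the benchmark table (tokens/second).
BENCHMARKS = {
    "Q8_0": {"processing": 187.52, "generation": 12.27},
    "Q4_0": {"processing": 186.75, "generation": 21.34},
}


def estimate_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: prompt processing plus token-by-token generation."""
    rates = BENCHMARKS[quant]
    return prompt_tokens / rates["processing"] + output_tokens / rates["generation"]


# Hypothetical workload: a 1,000-token prompt and a 200-token reply.
for quant in BENCHMARKS:
    print(f"{quant}: {estimate_seconds(quant, 1000, 200):.1f} s")

speedup = BENCHMARKS["Q4_0"]["generation"] / BENCHMARKS["Q8_0"]["generation"]
print(f"Q4_0 generates about {speedup:.2f}x faster than Q8_0")
```

Under these assumptions the workload takes about 21.6 seconds with Q8_0 and about 14.7 seconds with Q4_0. In both cases most of the time is spent generating the reply, not reading the prompt.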

Quantization: A Simplified View

Imagine a book with a lot of detailed illustrations. Quantization is like simplifying those illustrations to use fewer colors or smaller sizes. This makes the book smaller, easier to share, and can even speed up reading! In the same way, quantization stores each of an LLM's weights with fewer bits, which shrinks the model without sacrificing too much accuracy and makes it easier to run on devices with limited memory.
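The idea can be sketched in a few lines of Python. This is a simplified illustration of symmetric 8-bit quantization with one shared scale per block of values. It is not the actual storage format or code used by any particular runtime, and the function names and sample weights are made up for the example.

```python
def quantize_block(values: list[float]) -> tuple[float, list[int]]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return scale, [round(v / scale) for v in values]


def dequantize_block(scale: float, quants: list[int]) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quants]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.0]  # made-up values
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)

# The round trip is lossy: each value can be off by up to half a scale step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quants)
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs one small integer instead of a full floating-point number, at the cost of a small rounding error. Using fewer bits per integer shrinks the model further but makes that error larger.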

Performance Analysis: Model and Device Comparison

While token generation speed is crucial, comparing performance across different models and devices provides a broader picture of the landscape.

Comparing Apple M3 to Other Devices

Unfortunately, there's no direct comparison data available for other devices running Llama2 7B alongside the Apple M3. However, benchmark data for other models and devices can be found in the llama.cpp GitHub discussions and the GPU Benchmarks on LLM Inference.

Drawing Insights from Available Data

While we can't directly compare M3 to others for Llama2 7B, we can infer some insights:

- General Trends: Larger models need more memory and compute. At 7 billion parameters, Llama2 7B sits at the small end of the family, which makes it a reasonable candidate for devices like the Apple M series.

- Quantization's Role: Quantization can significantly impact performance, especially on less powerful hardware. By using Q4_0 or Q8_0 quantization, you can potentially run models on devices like the M3 that might not have the memory or raw power for higher-precision versions.
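A back-of-the-envelope calculation shows why quantization matters for fitting a model on a given machine. The bits-per-weight values below are assumptions (8.5 and 4.5 bits allow for a per-block scale on top of the 8-bit and 4-bit values), and the parameter count takes "7B" at face value, so treat the results as rough estimates of the weights alone, not exact file sizes.

```python
# Assumed effective bits per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}
NOMINAL_PARAMS = 7_000_000_000  # "7B" taken at face value


def weight_size_gb(quant: str, params: int = NOMINAL_PARAMS) -> float:
    """Approximate size of the weights alone, in gigabytes (10**9 bytes)."""
    return params * BITS_PER_WEIGHT[quant] / 8 / 1e9


for quant in BITS_PER_WEIGHT:
    print(f"{quant}: ~{weight_size_gb(quant):.1f} GB")
```

With these assumptions the weights come to roughly 14.0 GB in F16, 7.4 GB in Q8_0, and 3.9 GB in Q4_0. One plausible reading of the benchmark table is that generation is limited by how quickly the weights can be read from memory, which would explain why the smaller Q4_0 model generates faster while prompt processing stays about the same.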

Practical Recommendations: Use Cases and Workarounds

Now that we've examined the performance of Llama2 7B on the Apple M3, let's explore practical use cases and potential workarounds to maximize its capabilities.

Ideal Use Cases:

- Document Summarization: The M3's fast processing speed with Q8_0 quantization makes it ideal for quickly analyzing large documents and generating concise summaries.

- Text Translation: Leveraging the model's language understanding abilities, you can efficiently translate text between different languages, especially with Q4_0 quantization offering a balance of speed and accuracy.

- Generative Writing: While the model might struggle with real-time interactive tasks like chatbots, it can generate longer-form content or write scripts for specific purposes with good accuracy.

Workarounds for Limitations:

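- Reduce Model Size: If generation speed is a concern, consider a smaller model. Note that 7B is the smallest size in the official Llama2 release (7B, 13B, and 70B), so in practice this means looking at smaller models from other families instead of a smaller Llama2 variant.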

- Utilize Quantization: Experiment with different quantization levels (Q4_0, Q8_0) to find the best trade-off between performance and accuracy for your specific use case.

- Optimize Code: Reduce latency in the code around the model, for example by caching results for repeated prompts so the model is not run twice on the same input.
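As one example of the caching idea, the sketch below wraps an inference call with Python's `functools.lru_cache`. The `run_model` function is a hypothetical placeholder standing in for whatever backend you use, and this approach only makes sense when identical prompts should return identical answers (for instance, with deterministic decoding settings).

```python
from functools import lru_cache


def run_model(prompt: str) -> str:
    """Placeholder for a real inference call; replace with your own backend."""
    run_model.calls += 1
    return f"summary of: {prompt[:20]}"


run_model.calls = 0  # counts how often the expensive path actually runs


@lru_cache(maxsize=256)
def cached_generate(prompt: str) -> str:
    """Return a stored answer when the exact same prompt was seen before."""
    return run_model(prompt)


cached_generate("Summarize chapter 1")
cached_generate("Summarize chapter 1")  # served from the cache
print(run_model.calls)  # prints 1
```

At 12 to 21 generated tokens per second, skipping even one repeated request saves several seconds, so a cache like this can pay off quickly in batch workloads such as summarizing the same documents more than once.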

FAQ:

Q: What are the primary advantages of running LLMs locally?

A: Running LLMs locally offers several benefits:

- Privacy: Your data stays on your device instead of being sent to a remote server.

- Speed: Local processing removes network round trips, which can lead to faster response times, especially in real-time applications.

- Offline Functionality: You can access and use the model even without an internet connection.

Q: What are the challenges of running LLMs locally?

A: Local LLM deployment also presents challenges:

- Computational Resources: LLMs are computationally intensive and require powerful hardware.

- Model Size: Larger models take up significant storage space and require high-performance memory.

- Software Dependencies: Running LLMs locally involves installing and managing complex software libraries and tools.

Q: How do I choose the right LLM and hardware for my project?

A: Selecting the right combination of LLM and hardware depends on your specific needs:

- Model Size: Consider the complexity of your task. Smaller models are often sufficient for simpler tasks, while larger models excel at complex tasks.

- Hardware Requirements: Choose a device that can handle the computational demands of your chosen model.

- Performance Trade-offs: Evaluate the trade-off between quantization levels, processing speed, and accuracy.

Keywords:

LLM, Llama2, 7B, Apple M3, token generation speed, quantization, Q4_0, Q8_0, F16, local inference, performance benchmarks, use cases, workarounds, practical recommendations, developer audience, AI, natural language processing, NLP, deep learning, machine learning, hardware requirements, computational resources, privacy, efficiency, speed.