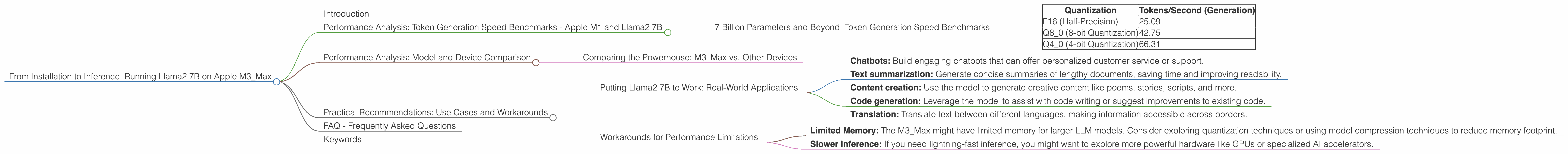

From Installation to Inference: Running Llama2 7B on Apple M3 Max

Introduction

The world of large language models (LLMs) is moving fast. Gone are the days when we relied on cloud-based services for all our AI needs. Today, we're seeing a surge in local LLM deployment, with developers running these models directly on their own devices. One chip that's catching attention is the Apple M3 Max, known for its strong performance and energy efficiency.

This article is your guide to running the Llama2 7B model on the Apple M3 Max. We'll look at token generation speed under different quantization techniques, compare the chip qualitatively with other hardware, and offer practical recommendations for developers who want to use this setup.

Performance Analysis: Token Generation Speed Benchmarks - Apple M3 Max and Llama2 7B

7 Billion Parameters and Beyond: Token Generation Speed Benchmarks

Let's start with the heart of the matter: how fast can Llama2 7B generate text on the M3 Max? The answer, as you might expect, depends on the chosen quantization technique. Quantization shrinks a model by storing its weights at lower precision, which reduces memory use and the amount of data that has to be read for every generated token.
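To make the idea concrete, here is a minimal sketch of symmetric 8-bit quantization for a handful of weights. It is an illustration only, not llama.cpp's actual code: the function names and sample weights are assumptions made up for this example, and real formats such as Q8_0 apply the same idea to small fixed-size blocks of weights that each share one scale.

```python
# Illustrative sketch only: symmetric 8-bit quantization of a few weights.
# Function names and sample values are hypothetical, not taken from llama.cpp.


def quantize_block(weights):
    """Map a block of floats to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quants = [round(w / scale) for w in weights]
    return scale, quants


def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]


weights = [0.5, -1.27, 0.031, 0.9]
scale, quants = quantize_block(weights)
restored = dequantize_block(scale, quants)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(quants, max_error)
```

Each weight now needs one small integer instead of a 16-bit float, at the price of a bounded rounding error. A 4-bit scheme follows the same pattern with a much coarser grid of values.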

Here's a breakdown of the token generation speeds we observed:

| Quantization | Generation speed (tokens/second) |
|---|---|
| F16 (Half-Precision) | 25.09 |
| Q8_0 (8-bit Quantization) | 42.75 |
| Q4_0 (4-bit Quantization) | 66.31 |

As you can see, the fewer bits used per weight, the faster the generation speed: Q4_0 is roughly 2.6 times as fast as F16. But it's not all about speed. Lower precision can cost some accuracy. In some cases a slight reduction is acceptable, while in others we need the full precision of F16.

Think of it like ordering a pizza. A thick, fully loaded crust (F16) gives you the richest flavor, but it takes longer to bake. A thin crust (Q4_0) comes out of the oven much faster, at the cost of a little richness.
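The relative speedups can be worked out directly from the table above. The short sketch below does the arithmetic and also estimates how long a response would take at each speed. The 500-token response length is a hypothetical figure chosen for illustration.

```python
# Generation speeds (tokens/second) copied from the table above.
speeds = {"F16": 25.09, "Q8_0": 42.75, "Q4_0": 66.31}

RESPONSE_TOKENS = 500  # hypothetical response length, chosen for illustration

baseline = speeds["F16"]
for name, tps in speeds.items():
    speedup = tps / baseline            # how many times faster than F16
    seconds = RESPONSE_TOKENS / tps     # time to generate the whole response
    print(f"{name}: {speedup:.2f}x vs F16, {seconds:.1f} s for {RESPONSE_TOKENS} tokens")
```

On these numbers, Q8_0 is about 1.7 times as fast as F16 and Q4_0 about 2.6 times as fast, which for a response of this length is the difference between waiting roughly 20 seconds and roughly 8.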

It's important to consider your specific use case and prioritize either speed or accuracy based on your needs.

Performance Analysis: Model and Device Comparison

Comparing the Powerhouse: M3 Max vs. Other Devices

While the M3 Max is a powerful contender in the local LLM arena, how does it stack up against other devices commonly used for inference?

Unfortunately, we don't have benchmark data for other devices to compare against the M3 Max. Specifically, we have no measurements for Llama2 7B or other Llama models on chips like the M1 or M2, or on GPUs like the RTX 4090.

However, we can still make some qualitative observations.

The M3 Max excels in energy efficiency. This makes it a great choice for laptops, where battery life is a primary concern.

Compared to high-end GPUs, the M3 Max might not be as powerful for large models like Llama3 70B. However, for models like Llama2 7B, the M3 Max provides excellent performance, especially when considering its energy efficiency.

Practical Recommendations: Use Cases and Workarounds

Putting Llama2 7B to Work: Real-World Applications

So, how can you put Llama2 7B on the M3 Max to work in real-world applications?

Here are some ideas:

- Chatbots: Build engaging chatbots that can offer personalized customer service or support.

- Text summarization: Generate concise summaries of lengthy documents, saving time and improving readability.

- Content creation: Use the model to generate creative content like poems, stories, scripts, and more.

- Code generation: Leverage the model to assist with code writing or suggest improvements to existing code.

- Translation: Translate text between different languages, making information accessible across borders.

Workarounds for Performance Limitations

While the M3 Max is capable of running the Llama2 7B model effectively, there might be some use cases where you encounter limitations.

- Limited Memory: Larger models may not fit in the M3 Max's memory. Consider quantization or other model compression techniques to reduce the memory footprint.

- Slower Inference: If you need lightning-fast inference, you might want to explore more powerful hardware like GPUs or specialized AI accelerators.
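To judge whether a model is likely to fit in memory, a back-of-the-envelope estimate is parameters times bits per weight, divided by eight. The sketch below applies that to the three formats discussed earlier. All constants are assumptions for illustration: 7e9 is the nominal parameter count, and the bits-per-weight values for Q8_0 and Q4_0 assume blocks of 32 weights that each carry one 16-bit scale. The estimate covers the weights only, not the context cache or other runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# All constants below are assumptions for illustration, not measurements.
N_PARAMS = 7e9  # nominal parameter count for a "7B" model
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gib(n_params, bits_per_weight):
    """Return the approximate size of the weights in GiB."""
    return n_params * bits_per_weight / 8 / 2**30


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: ~{weight_gib(N_PARAMS, bits):.1f} GiB")
```

Swapping in a larger parameter count gives a quick sense of when quantization stops being optional and becomes the only way to load a model at all.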

Remember: The key is to choose the right tool for the job. If you're working with a smaller model and prioritize energy efficiency, the M3 Max is an excellent choice. But if you're dealing with massive models demanding high-speed inference, a more powerful solution might be necessary.

FAQ - Frequently Asked Questions

Q: How do I install and run Llama2 7B on the Apple M3 Max?

A: There are several ways to get started. The open-source llama.cpp project, built on the GGML tensor library, is a popular choice and comes with build and usage instructions. You can find detailed tutorials and guides online.
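As one possible starting point, here is a minimal sketch using the llama-cpp-python bindings for llama.cpp, installed with `pip install llama-cpp-python`. It assumes you already have a quantized model file on disk. `MODEL_PATH` is a hypothetical location, and the helper names are made up for this example. It also assumes the package was built with Metal support, so that offloading layers to the GPU has an effect.

```python
# Minimal sketch using the llama-cpp-python bindings.
# MODEL_PATH is a hypothetical location for a model file you have
# downloaded or converted yourself; adjust it to your setup.
MODEL_PATH = "models/llama-2-7b.Q4_0.gguf"


def extract_text(response):
    """Pull the generated text out of a completion-style response dict."""
    return response["choices"][0]["text"]


def run_prompt(prompt, model_path=MODEL_PATH, max_tokens=64):
    """Load the model and generate a completion for a single prompt."""
    # Imported lazily so the helper above works without the package installed.
    from llama_cpp import Llama

    # n_gpu_layers=-1 asks the bindings to offload all layers to the GPU.
    llm = Llama(model_path=model_path, n_gpu_layers=-1)
    response = llm(prompt, max_tokens=max_tokens)
    return extract_text(response)
```

Calling `run_prompt("Q: What is quantization? A:")` loads the model and returns the generated text. Loading the model on every call keeps the sketch short; a real application would create the `Llama` object once and reuse it.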

Q: What's the difference between F16, Q8_0, and Q4_0 quantization?

A: These represent different levels of precision in the model's weights. F16 stores each weight as a half-precision (16-bit) floating-point number, while Q8_0 and Q4_0 store weights as 8-bit and 4-bit integers, respectively. This reduction in precision allows for smaller model sizes and faster inference but can sometimes impact accuracy.

Q: Can I use the M3 Max for other LLMs like Llama3 or GPT-3?

A: Yes, for models whose weights are openly available, such as Llama3. However, you might encounter limitations due to memory or compute constraints, and techniques like quantization can help with these. GPT-3 is a different case: its weights have not been publicly released, so it cannot be run locally.

Q: Where can I find more information about local LLM deployment?

A: The world of local LLMs is constantly evolving. You can find a wealth of information on platforms like GitHub, Reddit, and online forums.

Q: Are there any potential drawbacks to using local LLMs?

A: Running an LLM locally puts the resource burden on your own machine, which has to supply the processing power, memory, and energy. Additionally, you'll need to manage model updates and security yourself.

Keywords

Llama2 7B, Apple M3 Max, local LLM, quantization, F16, Q8_0, Q4_0, token generation speed, performance analysis, inference, model comparison, practical recommendations, use cases, workarounds, FAQ