From Installation to Inference: Running Llama2 7B on Apple M2

The world of large language models (LLMs) is buzzing with excitement, and for good reason. These sophisticated AI models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models often requires powerful hardware and hefty computing resources. What if you could run an LLM on your personal computer? That's where local LLMs come in, offering a compelling alternative to relying solely on cloud-based solutions.

In this deep dive, we'll explore the performance of the popular Llama2 7B language model on the Apple M2, a powerful chip found in various Mac computers. We'll examine its token generation speeds, compare it to other model and device combinations, and provide practical recommendations for how you can leverage this setup for your own projects. Buckle up, geeks!

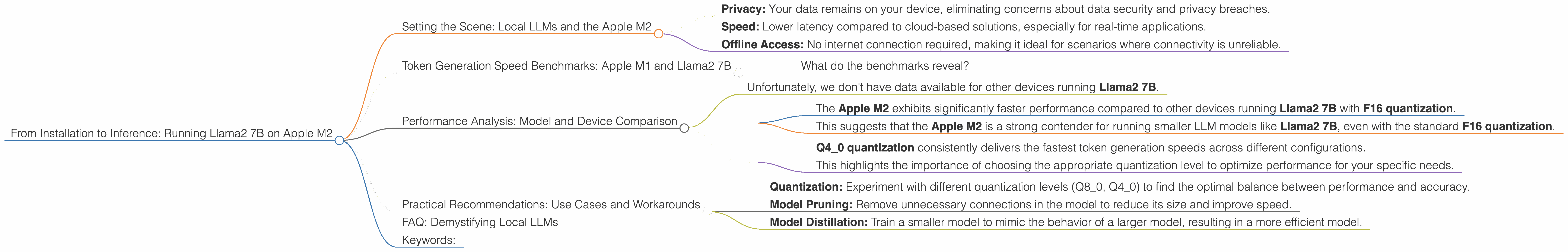

Setting the Scene: Local LLMs and the Apple M2

Before we dive into the technical details, let's briefly touch on the significance of local LLMs. Running a model locally offers several advantages:

- Privacy: Your prompts and data stay on your device, so nothing has to be sent to a third-party server.

- Speed: No network round trip, which can mean lower latency than cloud-based solutions, especially for real-time applications.

- Offline Access: No internet connection required, making it ideal for scenarios where connectivity is unreliable.

The Apple M2 chip, known for its impressive performance and energy efficiency, presents a compelling choice for local LLM deployment. Let's explore its capabilities in detail.

Token Generation Speed Benchmarks: Apple M2 and Llama2 7B

Token generation speed is a crucial metric for evaluating the performance of an LLM. It measures how many tokens the model can generate per second. Think of tokens as the building blocks of text: whole words, pieces of words, punctuation marks, or even special characters. Higher token generation speeds mean faster inference, quicker responses, and a more responsive user experience.
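To make the metric concrete, here is a minimal sketch of how tokens per second can be measured: count the tokens a generator produces and divide by the elapsed wall-clock time. The `fake_model` function is a hypothetical stand-in that simply sleeps between tokens; it is not a real model, and a real benchmark would wrap an actual inference call instead.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Run `generate` once and return tokens produced per elapsed second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed


def fake_model(n_tokens: int = 50, delay_s: float = 0.002):
    """Hypothetical stand-in for a model: yields one token every `delay_s` seconds."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


rate = tokens_per_second(fake_model)
print(f"{rate:.1f} tokens/second")
```

Published benchmarks usually average over many tokens and several runs, so treat a single short measurement like this one as a rough indication only.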

Let's take a look at the token generation speeds of the Llama2 7B model on the Apple M2 at three precision levels: unquantized F16 and the Q8_0 and Q4_0 quantizations.

| Model Configuration | Token Generation Speed (Tokens/Second) |
|---|---|
| Llama2 7B F16 | 6.72 |
| Llama2 7B Q8_0 | 12.21 |
| Llama2 7B Q4_0 | 21.91 |

Quantization is a technique used to reduce the size of the model, making it more efficient to run on less powerful hardware. Imagine compressing a large photo file: you get a smaller file size with some loss of detail. Similarly, quantization reduces the precision of the model's weights, sacrificing a bit of accuracy for a significant reduction in memory footprint and faster performance.
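The idea can be sketched in a few lines of Python. This is a simplified symmetric 4-bit scheme in which a block of weights shares one scale factor. It illustrates the principle only and is not the exact storage layout llama.cpp uses for Q4_0; the function names and sample weights are made up for the example.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit codes
    largest = max(abs(w) for w in weights)
    scale = largest / qmax if largest else 1.0
    codes = [round(w / scale) for w in weights]  # each code lies in [-qmax, qmax]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [scale * c for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]  # illustrative values
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(f"scale={scale:.4f}, max round-trip error={max_error:.4f}")
```

Each weight now needs only a 4-bit code plus its share of the block's scale, and the round-trip error is at most half the scale. That is the "loss of detail" from the photo analogy.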

What do the benchmarks reveal?

As you can see, Llama2 7B runs at usable speeds on the Apple M2 in all three configurations. Q4_0 is the fastest, at roughly 3.3 times the F16 speed (21.91 vs. 6.72 tokens/second), while Q8_0 reaches about 1.8 times the F16 speed. That shows how much quantization can do for LLM performance.
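The ratios between the benchmark figures in the table can be checked with a few lines of arithmetic. The sketch below also converts each speed into the time needed for one response; the 256-token response length is an assumption chosen for illustration, not a figure from the benchmark.

```python
# Token generation speeds from the table above (tokens/second).
speeds = {"F16": 6.72, "Q8_0": 12.21, "Q4_0": 21.91}
baseline = speeds["F16"]
response_tokens = 256  # assumed response length, for illustration only

speedups = {name: tps / baseline for name, tps in speeds.items()}
seconds = {name: response_tokens / tps for name, tps in speeds.items()}

for name in speeds:
    print(f"{name}: {speedups[name]:.2f}x vs F16, "
          f"{seconds[name]:.1f} s for {response_tokens} tokens")
```

In practical terms, a 256-token answer takes roughly 38 seconds at F16, about 21 seconds at Q8_0, and under 12 seconds at Q4_0.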

Performance Analysis: Model and Device Comparison

While the Apple M2 is a capable platform, we can gain a clearer understanding of its capabilities by comparing it to other devices and models. Let's delve into some notable comparisons:

Comparing Llama2 7B Models:

- Unfortunately, we don't have data available for other devices running Llama2 7B, so the comparisons here are limited to the three configurations measured on the Apple M2.

Comparing Devices for Llama2 7B (F16):

- Without measurements from other devices, we can't rank the Apple M2 against them. What we can say is that it sustains 6.72 tokens/second with unquantized F16 weights.

- This suggests that the Apple M2 is a workable choice for running smaller LLMs like Llama2 7B, even at full F16 precision.

Comparing Quantization Strategies:

- Q4_0 delivers the fastest token generation of the three configurations tested: 21.91 tokens/second, versus 12.21 for Q8_0 and 6.72 for F16.

- This highlights the importance of choosing the appropriate quantization level to optimize performance for your specific needs.

Practical Recommendations: Use Cases and Workarounds

The Apple M2 and the Llama2 7B model offer a potent combination for various use cases. Let's explore some practical applications:

1. Personal AI Assistant: Create your own local AI assistant that can answer questions, summarize text, or draft emails.

2. Content Creation: Generate creative text formats like poems, code, scripts, musical pieces, email, letters, etc.

3. Language Translation: Translate text between different languages with the help of a local LLM.

4. Text Summarization: Quickly summarize lengthy documents or articles to extract key information.

5. Code Generation: If you're a developer, you can use the model to generate code snippets or assist with debugging.

Workarounds for Resource Constraints:

- Quantization: Experiment with different quantization levels (Q8_0, Q4_0) to find the optimal balance between performance and accuracy.

- Model Pruning: Remove unnecessary connections in the model to reduce its size and improve speed.

- Model Distillation: Train a smaller model to mimic the behavior of a larger model, resulting in a more efficient model.
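To see why quantization is usually the first workaround to try, it helps to estimate how much memory the weights alone need at each precision. The sketch below assumes a nominal 7 billion parameters and assumes that Q8_0 and Q4_0 store 32-weight blocks with one 16-bit scale each, giving 8.5 and 4.5 bits per weight. It ignores the context cache and runtime overhead, so treat the results as lower bounds.

```python
PARAMS = 7e9  # nominal parameter count; the exact figure differs slightly

# Assumed effective bits per weight, including per-block scale overhead.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}


def weight_gib(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GiB (bits -> bytes -> GiB)."""
    return params * bits_per_weight / 8 / 2**30


footprints = {name: weight_gib(PARAMS, bits) for name, bits in BITS_PER_WEIGHT.items()}

for name, gib in footprints.items():
    print(f"{name}: ~{gib:.1f} GiB of weights")
```

Comparing these estimates with the unified memory in your Mac gives a quick first check on which configuration is realistic before you download anything.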

FAQ: Demystifying Local LLMs

Q: What are the best ways to install Llama2 7B on my Apple M2?

A: The easiest way is to use llama.cpp, an open-source C/C++ inference engine with command-line tools for loading, running, and interacting with LLMs on various devices. It runs on Apple silicon and can use the GPU through Metal.

Q: Is Llama2 7B the only model I can use?

A: No! There are various other open-source models available, such as GPT-NeoX and Bloom. However, their performance on specific devices might vary.

Q: What if my Apple M2 isn't powerful enough for a larger model?

A: Consider using a cloud-based LLM service like OpenAI's API. You can access more powerful models and leverage their capabilities without the need for local processing.

Q: Can I train my own LLM on my Apple M2?

A: While training a large LLM from scratch on the Apple M2 is challenging, you can potentially fine-tune existing models for specific tasks using tools like Hugging Face Transformers.

Keywords:

Llama2 7B, Apple M2, local LLM, token generation speed, quantization, F16, Q8_0, Q4_0, performance benchmarks, model comparison, use cases, practical recommendations, workarounds, installation, inference, AI, machine learning, deep learning, natural language processing, NLP, developers, geeks, open source, llama.cpp, GPT-NeoX, Bloom, OpenAI, Hugging Face, Transformer.