From Installation to Inference: Running Llama2 7B on Apple M2 Max

Introduction: Unleashing the Power of Local LLMs

The world of large language models (LLMs) is exploding, offering powerful capabilities for everything from text generation and translation to code writing and question answering. But accessing these capabilities often involves cloud-based services, which can be costly and introduce latency. Imagine a world where you could harness the power of LLMs directly on your own machine, opening up possibilities for faster, more efficient, and potentially more private AI experiences.

This article delves into the exciting world of local LLM deployments, exploring the performance of Llama2 7B running on the Apple M2 Max chip. We'll examine the nuances of model quantization, analyze token generation speeds, and provide practical recommendations for use cases that benefit from this setup.

Performance Analysis: Token Generation Speed Benchmarks: Apple M2 Max and Llama2 7B

Understanding Token Generation Speed

Think of token generation speed as the "words per minute" of your AI. It measures how many tokens (whole words or pieces of words, the basic units an LLM reads and writes) the model handles per second. A higher token generation speed means faster responses and a more responsive AI experience.
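As a minimal sketch, assuming your inference tool streams tokens through a Python iterator, tokens per second is simply the token count divided by wall-clock time. `measure_tokens_per_second` and `fake_generator` are hypothetical names; the fake generator stands in for a real model's streaming output.

```python
import time
from typing import Iterable, Iterator


def measure_tokens_per_second(tokens: Iterable[str]) -> tuple[int, float]:
    """Consume a token stream and return (token_count, tokens_per_second)."""
    start = time.perf_counter()
    count = 0
    for _ in tokens:
        count += 1
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_generator(n: int, delay_s: float = 0.001) -> Iterator[str]:
    """Hypothetical stand-in for a model's streaming output."""
    for i in range(n):
        time.sleep(delay_s)  # pretend each token takes delay_s seconds to produce
        yield f"tok{i}"


if __name__ == "__main__":
    n, rate = measure_tokens_per_second(fake_generator(50))
    print(f"{n} tokens at {rate:.1f} tokens/s")
```

Timing the whole stream, rather than a single token, averages out per-token jitter, which is also how benchmark tools usually report a single tokens/s figure.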

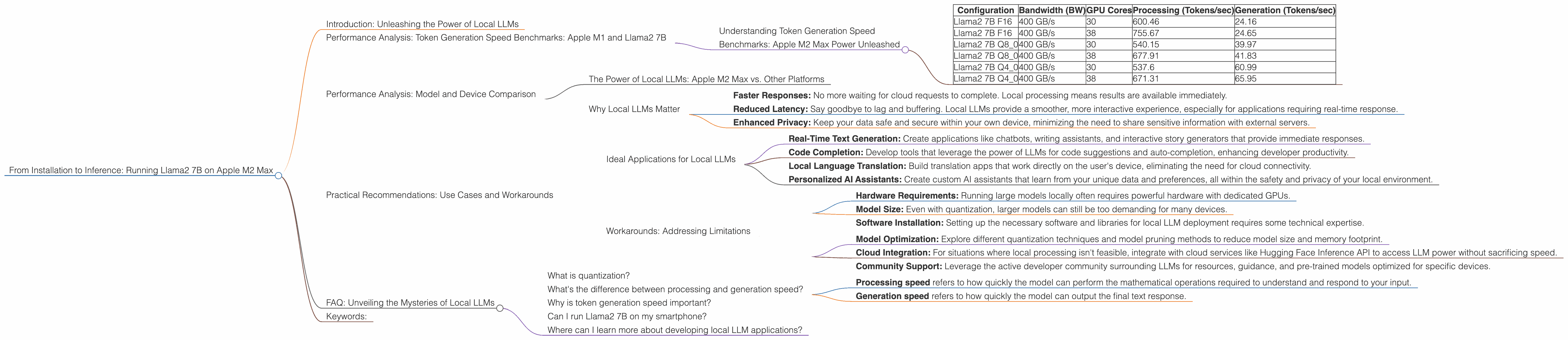

Benchmarks: Apple M2 Max Power Unleashed

| Configuration | Memory Bandwidth | GPU Cores | Prompt Processing (tokens/s) | Text Generation (tokens/s) |
|---|---|---|---|---|
| Llama2 7B F16 | 400 GB/s | 30 | 600.46 | 24.16 |
| Llama2 7B F16 | 400 GB/s | 38 | 755.67 | 24.65 |
| Llama2 7B Q8_0 | 400 GB/s | 30 | 540.15 | 39.97 |
| Llama2 7B Q8_0 | 400 GB/s | 38 | 677.91 | 41.83 |
| Llama2 7B Q4_0 | 400 GB/s | 30 | 537.6 | 60.99 |
| Llama2 7B Q4_0 | 400 GB/s | 38 | 671.31 | 65.95 |
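One quick way to read the table is to compare each format's 38-core result with its 30-core result. The sketch below simply copies the numbers from the table; `BENCH` and `core_scaling` are illustrative names.

```python
# Figures copied from the benchmark table above (tokens/sec).
BENCH = {
    # quant: {gpu_cores: (prompt_processing, text_generation)}
    "F16": {30: (600.46, 24.16), 38: (755.67, 24.65)},
    "Q8_0": {30: (540.15, 39.97), 38: (677.91, 41.83)},
    "Q4_0": {30: (537.60, 60.99), 38: (671.31, 65.95)},
}


def core_scaling(quant: str) -> tuple[float, float]:
    """Return (prompt_ratio, generation_ratio) going from 30 to 38 GPU cores."""
    pp30, tg30 = BENCH[quant][30]
    pp38, tg38 = BENCH[quant][38]
    return pp38 / pp30, tg38 / tg30


if __name__ == "__main__":
    print(f"core-count ratio: {38 / 30:.3f}")
    for quant in BENCH:
        pp, tg = core_scaling(quant)
        print(f"{quant:5s} prompt x{pp:.3f}  generation x{tg:.3f}")
```

Prompt processing improves by roughly 1.25x in every format, close to the 38/30 ≈ 1.27 increase in cores, while generation improves by only about 2 to 8 percent.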

Key Observations:

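- Quantization Matters: Generation speed rises sharply as we move from F16 (16-bit half precision) to the quantized Q8_0 and Q4_0 formats, from about 24 to 40 to 61 tokens/s on the 30-core GPU. Generating each token requires reading all of the model's weights from memory, so smaller weights mean fewer bytes to move per token. Prompt processing, by contrast, gets slightly slower with quantization (from about 600 to 540 tokens/s), likely because it is limited more by compute than by memory bandwidth, and quantized weights must be unpacked before use.

- More Cores, More Power (Mostly for Prompts): Going from 30 to 38 GPU cores speeds up prompt processing by about 25% in every configuration, close to the 27% increase in core count. Generation gains only 2 to 8%, because both configurations share the same 400 GB/s of memory bandwidth.

- Processing vs. Generation: Prompt processing runs roughly 9 to 31 times faster than generation. During processing, the model evaluates all prompt tokens in parallel, keeping the GPU busy with large matrix multiplications. During generation, it produces one token at a time, and each new token needs a full pass over the weights, so the bottleneck shifts to memory bandwidth. This asymmetry is typical of LLM inference.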
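The bandwidth argument can be turned into a rough ceiling on generation speed: tokens/s cannot exceed bandwidth divided by the bytes of weights read per token. This is a back-of-the-envelope sketch under stated assumptions: about 6.74 billion parameters for Llama2 7B, llama.cpp-style block sizes for Q8_0 and Q4_0, every weight stored in that format, and the KV cache and activations ignored.

```python
PARAMS = 6.74e9  # assumed parameter count for Llama2 7B
BANDWIDTH_GB_S = 400.0  # M2 Max memory bandwidth, from the table

# Effective bits per weight, assuming llama.cpp-style blocks of 32 weights:
#   Q8_0: 32 int8 values + one fp16 scale = 34 bytes per block
#   Q4_0: 32 4-bit values + one fp16 scale = 18 bytes per block
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 34 * 8 / 32, "Q4_0": 18 * 8 / 32}

# Measured generation speeds on the 30-core GPU, from the table.
OBSERVED_TG = {"F16": 24.16, "Q8_0": 39.97, "Q4_0": 60.99}


def weight_gb(quant: str) -> float:
    """Approximate size of the weights in gigabytes."""
    return PARAMS * BITS_PER_WEIGHT[quant] / 8 / 1e9


def max_tokens_per_sec(quant: str) -> float:
    """Upper bound if every generated token streams all weights from memory once."""
    return BANDWIDTH_GB_S / weight_gb(quant)


if __name__ == "__main__":
    for quant in BITS_PER_WEIGHT:
        ceiling = max_tokens_per_sec(quant)
        share = OBSERVED_TG[quant] / ceiling
        print(f"{quant:5s} {weight_gb(quant):5.2f} GB  ceiling {ceiling:6.1f} tok/s  "
              f"observed {OBSERVED_TG[quant]:5.2f} ({share:.0%} of ceiling)")
```

Under these assumptions the ceilings come out near 30, 56 and 105 tokens/s. The measured speeds stay below them, and the gap widens for the smaller formats, a hint that factors other than raw bandwidth start to matter once the weights shrink.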

Performance Analysis: Model and Device Comparison

The Power of Local LLMs: Apple M2 Max vs. Other Platforms

This article covers only the Apple M2 Max, so we can't offer a direct comparison with other devices here. For other hardware, you can find benchmarks online by searching for "llama.cpp benchmarks" or "GPU benchmarks for LLM inference."

Why Local LLMs Matter

Running LLMs locally offers several advantages:

- Faster Responses: No network round trip. Response time depends only on your own hardware, not on a remote server and its queue.

- Reduced Latency: Say goodbye to lag and buffering. Local LLMs provide a smoother, more interactive experience, especially for applications requiring real-time response.

- Enhanced Privacy: Keep your data on your own device, minimizing the need to share sensitive information with external servers.

Practical Recommendations: Use Cases and Workarounds

Ideal Applications for Local LLMs

Local LLM deployments are particularly well-suited for applications that demand fast response times and a high degree of interactivity. Here are some examples:

- Real-Time Text Generation: Create applications like chatbots, writing assistants, and interactive story generators that provide immediate responses.

- Code Completion: Develop tools that leverage the power of LLMs for code suggestions and auto-completion, enhancing developer productivity.

- Local Language Translation: Build translation apps that work directly on the user's device, eliminating the need for cloud connectivity.

- Personalized AI Assistants: Create custom AI assistants that learn from your unique data and preferences, all within the safety and privacy of your local environment.

Workarounds: Addressing Limitations

Despite their advantages, local LLMs also come with limitations:

- Hardware Requirements: Running large models locally requires a capable GPU and enough memory to hold the model weights (about 13.5 GB for Llama2 7B at F16).

- Model Size: Even with quantization, larger models can still be too demanding for many devices.

- Software Installation: Setting up the necessary software and libraries for local LLM deployment requires some technical expertise.

To overcome these limitations, consider the following strategies:

- Model Optimization: Explore different quantization techniques and model pruning methods to reduce model size and memory footprint.

- Cloud Integration: For situations where local processing isn't feasible, integrate with cloud services like Hugging Face Inference API, accepting the network latency and privacy trade-offs that local inference avoids.

- Community Support: Leverage the active developer community surrounding LLMs for resources, guidance, and pre-trained models optimized for specific devices.

FAQ: Unveiling the Mysteries of Local LLMs

What is quantization?

Quantization is a technique that reduces the size of an LLM by storing its parameters (the numbers that define the model) at lower precision. Think of it like reducing the number of colors in an image: with fewer bits per pixel the file gets smaller, but most of the detail survives. For example, F16 uses 16 bits per weight, while Q4_0 uses about 4.5 bits (4-bit values plus a shared scale for each block of 32 weights). Smaller weights let you run larger models on devices with limited memory and speed up generation, at the cost of a slight drop in accuracy.
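To make the idea concrete, here is a simplified 4-bit block quantizer in the spirit of Q4_0, not llama.cpp's exact algorithm: each block of 32 weights gets one scale, and every weight becomes a 4-bit code from 0 to 15. The function names are illustrative, and the nibble packing pairs neighbouring codes for simplicity; llama.cpp's real layout pairs elements differently.

```python
import random

BLOCK = 32  # weights per block, matching the Q4_0 block size


def quantize_block(xs: list[float]) -> tuple[float, list[int]]:
    """Symmetric 4-bit quantization: one scale plus 32 codes in 0..15."""
    amax = max(abs(x) for x in xs)
    scale = amax / 7 if amax > 0 else 1.0  # maps [-amax, amax] onto [-7, 7]
    codes = [min(15, max(0, round(x / scale) + 8)) for x in xs]  # shift by 8 so codes are unsigned
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the codes and the block scale."""
    return [(c - 8) * scale for c in codes]


def pack_nibbles(codes: list[int]) -> bytes:
    """Store two 4-bit codes per byte (32 codes -> 16 bytes)."""
    return bytes(codes[i] | (codes[i + 1] << 4) for i in range(0, len(codes), 2))


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0, 0.02) for _ in range(BLOCK)]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{len(pack_nibbles(codes))} bytes of codes (+2 for an fp16 scale) vs {2 * BLOCK} bytes at F16")
    print(f"max abs error {err:.5f}, bound scale/2 = {scale / 2:.5f}")
```

Rounding to the nearest code keeps each weight within half a scale step of its original value, which is why accuracy drops only slightly while the block shrinks from 64 bytes to about 18.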

What's the difference between processing and generation speed?

- Processing speed (prompt processing) measures how quickly the model reads your input. All prompt tokens can be evaluated in parallel, so this step is fast and limited mainly by GPU compute.

- Generation speed measures how quickly the model writes its response. Tokens are produced one at a time, each depending on the ones before it, so this step is slower and limited mainly by memory bandwidth.

Imagine a chef taking an order. Reading the whole ticket takes a single glance (processing). Cooking the courses takes much longer, because each one must be finished before the next can begin (generation).
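Because the two phases run at such different rates, the wait for a full answer is roughly prompt length divided by processing speed plus answer length divided by generation speed. The sketch below applies that to the table's 38-core numbers; the 500-token prompt and 200-token answer are hypothetical, and it assumes the table's rates hold at those lengths (real rates vary with context length).

```python
def estimate_latency(prompt_tokens: int, output_tokens: int,
                     pp_rate: float, tg_rate: float) -> tuple[float, float, float]:
    """Return (prefill_s, decode_s, total_s) from throughput figures in tokens/s."""
    prefill = prompt_tokens / pp_rate  # whole prompt evaluated in parallel
    decode = output_tokens / tg_rate  # answer produced one token at a time
    return prefill, decode, prefill + decode


if __name__ == "__main__":
    # 38-core rates from the benchmark table: (prompt processing, text generation)
    rates = {"F16": (755.67, 24.65), "Q4_0": (671.31, 65.95)}
    for quant, (pp, tg) in rates.items():
        pre, dec, tot = estimate_latency(500, 200, pp, tg)
        print(f"{quant:5s} prefill {pre:.2f} s + decode {dec:.2f} s = {tot:.2f} s")
```

In this hypothetical request, generation dominates: about 3.8 s in total for Q4_0 versus 8.8 s for F16, even though F16 processes the prompt slightly faster.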

Why is token generation speed important?

Token generation speed determines how quickly a response appears on screen. A higher speed means shorter waits, a smoother streaming experience, and makes long outputs practical.

Can I run Llama2 7B on my smartphone?

It's possible in principle, but impractical on most phones. Even the Q4_0 version of Llama2 7B needs nearly 4 GB for its weights alone, and phones typically have far less memory bandwidth than the M2 Max's 400 GB/s, so generation would be much slower.

Where can I learn more about developing local LLM applications?

The internet is your friend! Explore online communities like the Hugging Face forums or search for tutorials on platforms like YouTube. There are many resources available to help you get started with local LLM development.
