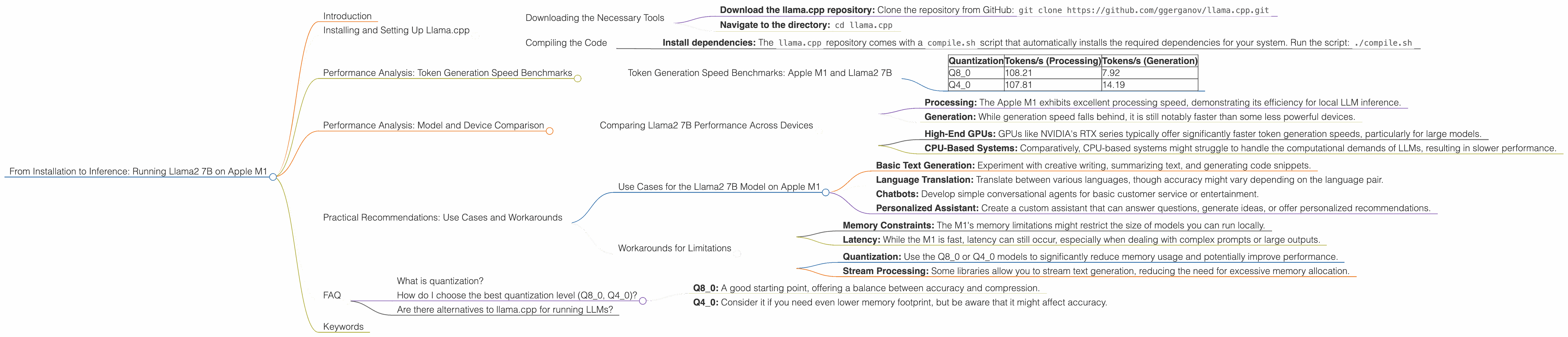

From Installation to Inference: Running Llama2 7B on Apple M1

Introduction

The world of large language models (LLMs) is exploding, with new models and applications emerging at breakneck speed. But where do you start if you want to experiment with LLMs locally? This article guides you through running the Llama2 7B model on the Apple M1 chip, a powerful and accessible platform for local AI development.

Imagine having a powerful language model at your fingertips, capable of generating realistic text, translating languages, and even writing code - all running smoothly on your own computer. This is the promise of running LLMs locally, and we'll explore how you can make this a reality with the Llama2 7B model on your Apple M1 device.

Installing and Setting Up Llama.cpp

Downloading the Necessary Tools

First, install llama.cpp, a fast and efficient library for running large language models. Follow these steps:

- Download the llama.cpp repository: Clone the repository from GitHub:

git clone https://github.com/ggerganov/llama.cpp.git

- Navigate to the directory:

cd llama.cpp

Compiling the Code

Now, let's compile the code for your Apple M1:

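- Install dependencies: The llama.cpp repository comes with a compile.sh script that automatically installs the required dependencies for your system. Run the script:

./compile.sh

If your checkout does not include a compile.sh script, build with make or CMake as described in the repository's README.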

Performance Analysis: Token Generation Speed Benchmarks

Token Generation Speed Benchmarks: Apple M1 and Llama2 7B

The speed of token generation is a crucial metric for LLM performance. For our analysis, we'll look at tokens per second (tokens/s) in two phases: processing, where the model reads the prompt, and generation, where it produces new tokens.
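
As a minimal sketch of how a tokens/s figure is derived, the helper below divides a token count by elapsed wall-clock time and times an arbitrary token-producing callable. The names `tokens_per_second`, `measure_throughput`, and `fake_generate` are hypothetical and used only for illustration; they are not part of llama.cpp.

```python
import time
from typing import Callable, Iterable


def tokens_per_second(token_count: int, elapsed_seconds: float) -> float:
    """Throughput = tokens handled / wall-clock seconds."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return token_count / elapsed_seconds


def measure_throughput(generate: Callable[[], Iterable[str]]) -> float:
    """Time a token-producing callable and return its tokens/s."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    return tokens_per_second(count, elapsed)


def fake_generate():
    # Hypothetical stand-in for a real model: yields 20 tokens with a small delay.
    for i in range(20):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure_throughput(fake_generate):.1f} tokens/s")
```

With a real model, the callable would wrap the library's generation call, and processing and generation would be timed separately.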

| Quantization | Tokens/s (Processing) | Tokens/s (Generation) |
|---|---|---|
| Q8_0 | 108.21 | 7.92 |
| Q4_0 | 107.81 | 14.19 |

- Q8_0 quantization: An 8-bit compressed representation of the model that significantly reduces its memory footprint while maintaining good performance.

- Q4_0 quantization: A 4-bit representation that compresses further than Q8_0, lowering memory usage even more, possibly at the cost of some accuracy.
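
To make the memory difference concrete, here is a rough back-of-the-envelope estimate. It assumes each block of 32 weights stores one 16-bit scale next to the quantized values and uses a nominal 7 billion parameters. Both are assumptions for illustration, and the result covers weights only, not the KV cache or runtime overhead.

```python
# Assumed block layout: 32 quantized weights sharing one 16-bit scale.
BLOCK_SIZE = 32
SCALE_BITS = 16


def bits_per_weight(quant_bits: int) -> float:
    """Effective bits per weight, including the per-block scale."""
    return (BLOCK_SIZE * quant_bits + SCALE_BITS) / BLOCK_SIZE


def estimated_size_gb(n_params: float, bpw: float) -> float:
    """Approximate weight size in GB (10^9 bytes)."""
    return n_params * bpw / 8 / 1e9


N_PARAMS = 7e9  # nominal "7B"; the exact parameter count differs slightly

FORMATS = {
    "F16": 16.0,                 # unquantized half precision, no block scale
    "Q8_0": bits_per_weight(8),  # 8.5 bits per weight under the assumption above
    "Q4_0": bits_per_weight(4),  # 4.5 bits per weight under the assumption above
}

for name, bpw in FORMATS.items():
    print(f"{name}: ~{estimated_size_gb(N_PARAMS, bpw):.1f} GB")
```

Actual file sizes will differ somewhat, but the ratio between formats is the useful part when deciding what fits in memory.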

Observations:

- Both Q8_0 and Q4_0 process prompts at roughly 108 tokens/s on the Apple M1, so the quantization level has little effect on processing speed.

- Generation is much slower than processing. This is typical for LLMs: prompt tokens can be processed in parallel, while new tokens are generated one at a time.

- Q4_0 generates about 1.8x faster than Q8_0 (14.19 vs. 7.92 tokens/s), a clear advantage when response speed matters.
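
The benchmark figures in the table can be turned into a rough response-time estimate. The sketch below assumes total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed. The function name and the 200-token prompt and 100-token reply are hypothetical choices for illustration.

```python
# Benchmark figures from the table above (tokens/s on Apple M1).
BENCHMARKS = {
    "Q8_0": {"processing": 108.21, "generation": 7.92},
    "Q4_0": {"processing": 107.81, "generation": 14.19},
}


def estimated_seconds(quant: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimate response time as prompt-processing time plus generation time."""
    speeds = BENCHMARKS[quant]
    return prompt_tokens / speeds["processing"] + output_tokens / speeds["generation"]


speedup = BENCHMARKS["Q4_0"]["generation"] / BENCHMARKS["Q8_0"]["generation"]
print(f"Q4_0 generation speedup over Q8_0: {speedup:.2f}x")

# Hypothetical workload: a 200-token prompt and a 100-token reply.
for quant in BENCHMARKS:
    print(f"{quant}: ~{estimated_seconds(quant, 200, 100):.1f} s")
```

Because generation dominates the total, the generation column matters most for interactive use.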

Performance Analysis: Model and Device Comparison

Comparing Llama2 7B Performance Across Devices

It's fascinating to see how the Llama2 7B model performs on various devices. While the exact figures may vary based on specific hardware and software configurations, we'll highlight some key trends.

Llama2 7B on Apple M1:

- Processing: At roughly 108 tokens/s, the Apple M1 processes prompts quickly, demonstrating its efficiency for local LLM inference.

- Generation: At about 8 to 14 tokens/s depending on quantization, generation is far slower than processing, but still notably faster than on some less powerful devices.

Llama2 7B on Other Devices:

- High-End GPUs: GPUs like NVIDIA's RTX series typically offer significantly faster token generation speeds, particularly for large models.

- CPU-Based Systems: Comparatively, CPU-based systems might struggle to handle the computational demands of LLMs, resulting in slower performance.

Analogies:

Think about processing speed like a fast-paced conveyor belt moving units through a factory. The faster the belt moves, the more units can be processed in a given timeframe. High-end GPUs act like a supercharged conveyor belt, significantly accelerating the process.

Practical Recommendations: Use Cases and Workarounds

Use Cases for the Llama2 7B Model on Apple M1

Here are some compelling use cases where the Llama2 7B model on your Apple M1 shines:

- Basic Text Generation: Experiment with creative writing, summarizing text, and generating code snippets.

- Language Translation: Translate between various languages, though accuracy might vary depending on the language pair.

- Chatbots: Develop simple conversational agents for basic customer service or entertainment.

- Personalized Assistant: Create a custom assistant that can answer questions, generate ideas, or offer personalized recommendations.

Workarounds for Limitations

Though the M1 is powerful, it's essential to be aware of its limitations:

- Memory Constraints: The M1's memory limitations might restrict the size of models you can run locally.

- Latency: While the M1 is fast, latency can still occur, especially when dealing with complex prompts or large outputs.

Workarounds:

- Quantization: Use the Q8_0 or Q4_0 models to significantly reduce memory usage and potentially improve performance.

- Stream Processing: Some libraries can stream text as it is generated, so output appears token by token instead of after the full response is ready, which reduces perceived latency.
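
Streaming can be pictured as consuming tokens from an iterator and emitting each one as soon as it arrives. The sketch below is library-agnostic: `fake_token_stream` is a hypothetical stand-in for a model, and `stream_to_output` is an illustrative name, not an API from llama.cpp or any other library.

```python
from typing import Callable, Iterable, Iterator


def fake_token_stream(text: str) -> Iterator[str]:
    """Hypothetical stand-in for a model: yields one whitespace-delimited token at a time."""
    for word in text.split():
        yield word + " "


def print_now(token: str) -> None:
    """Print a token immediately, without waiting for a newline."""
    print(token, end="", flush=True)


def stream_to_output(tokens: Iterable[str], emit: Callable[[str], None] = print_now) -> int:
    """Emit each token as soon as it arrives; return how many were emitted."""
    count = 0
    for token in tokens:
        emit(token)
        count += 1
    return count


if __name__ == "__main__":
    stream_to_output(fake_token_stream("Streaming shows text as it is produced"))
    print()
```

The caller never holds the full response in a buffer before displaying it, so the first words appear while the rest are still being generated.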

FAQ

What is quantization?

Think of quantization like a digital image compression technique. It essentially reduces the number of bits used to represent the model's parameters, making it smaller and less demanding on memory.
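
The idea can be sketched in a few lines. This is a simplified symmetric scheme with one shared scale per block of weights, written for illustration. It is not the exact on-disk format llama.cpp uses, and the sample weights are made up.

```python
from typing import List, Tuple


def quantize_block(weights: List[float], bits: int) -> Tuple[float, List[int]]:
    """Symmetric quantization: one shared scale plus one small integer per weight."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants


def dequantize_block(scale: float, quants: List[int]) -> List[float]:
    """Recover approximate weights from the scale and the stored integers."""
    return [q * scale for q in quants]


def max_error(weights: List[float], bits: int) -> float:
    """Largest absolute difference after a quantize/dequantize round trip."""
    scale, quants = quantize_block(weights, bits)
    restored = dequantize_block(scale, quants)
    return max(abs(a - b) for a, b in zip(weights, restored))


# Made-up sample weights for illustration.
weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.48, -0.02, 0.26]
for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_error(weights, bits):.4f}")
```

Fewer bits mean a coarser grid of representable values, which is why the 4-bit round trip loses more precision than the 8-bit one.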

How do I choose the best quantization level (Q8_0, Q4_0)?

- Q8_0: A good starting point, offering a balance between accuracy and compression.

- Q4_0: Consider it if you need an even lower memory footprint or faster generation, but be aware that it might affect accuracy.

Are there alternatives to llama.cpp for running LLMs?

Yes, other libraries such as Hugging Face's transformers for Python support a wide range of LLMs.
