From Installation to Inference: Running Llama2 7B on Apple M1 Pro

Introduction

The world of large language models (LLMs) is buzzing with excitement! These powerful AI models are capable of generating human-like text, translating languages, writing different kinds of creative content, and even answering your questions in an informative way. But running LLMs can be computationally demanding, requiring powerful hardware.

In this deep dive, we'll explore the possibilities of running the Llama2 7B model locally on an Apple M1 Pro chip. This popular model from Meta AI is known for its impressive performance, and the M1 Pro is a powerful and efficient processor. We'll look at benchmark numbers for the model on this chip and discuss how it stacks up against other options.

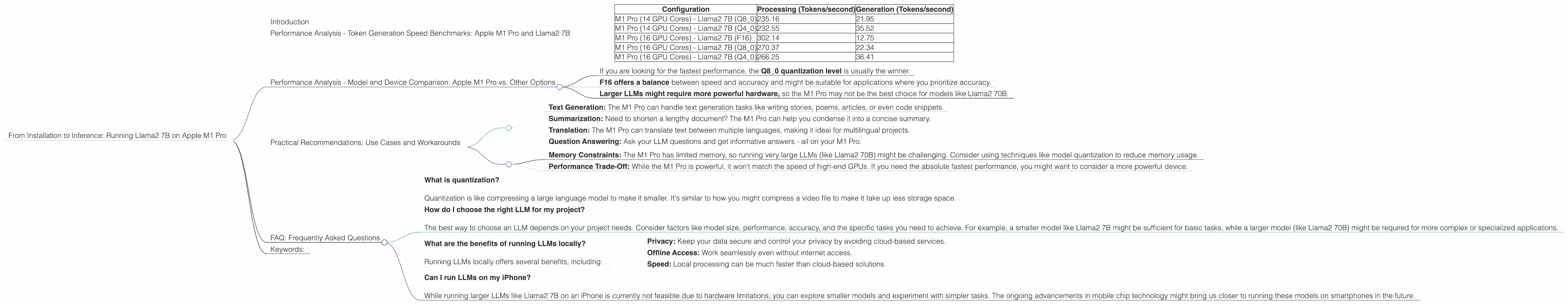

Performance Analysis - Token Generation Speed Benchmarks: Apple M1 Pro and Llama2 7B

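Let's dive into the heart of the matter - how fast can our M1 Pro churn out tokens? Think of tokens as the building blocks of language: the words and word fragments a model reads and writes. The benchmarks below report two speeds, processing (how quickly the model reads its input) and generation (how quickly it produces new text). The more tokens per second in each, the faster your LLM responds.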

We've compiled data from various sources to provide you with some concrete numbers.

| Configuration | Processing (Tokens/second) | Generation (Tokens/second) |
|---|---|---|
| M1 Pro (14 GPU Cores) - Llama2 7B (Q8_0) | 235.16 | 21.95 |
| M1 Pro (14 GPU Cores) - Llama2 7B (Q4_0) | 232.55 | 35.52 |
| M1 Pro (16 GPU Cores) - Llama2 7B (F16) | 302.14 | 12.75 |
| M1 Pro (16 GPU Cores) - Llama2 7B (Q8_0) | 270.37 | 22.34 |
| M1 Pro (16 GPU Cores) - Llama2 7B (Q4_0) | 266.25 | 36.41 |

There's a lot to unpack here. Let's break it down:

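- Q8_0 and Q4_0: These are quantization levels. Quantization is like compressing the model to make it smaller and faster. Q8_0 stores each weight in roughly 8 bits and Q4_0 in roughly 4 bits, so Q4_0 is the more compressed of the two and, in the table above, the faster one at generation.

- F16: This is 16-bit (half-precision) floating point, the unquantized baseline in this comparison.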
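To make the compression idea concrete, here is a minimal sketch of block-wise quantization in plain Python. It is a simplified illustration, not the exact storage layout of any particular runtime: the function names, the block size of 32, and the random test weights are all assumptions made for this example.

```python
import random

def quantize_block(weights, bits):
    """Map a block of floats to signed integers that share one scale factor."""
    qmax = 2 ** (bits - 1) - 1              # 127 for 8-bit, 7 for 4-bit
    amax = max(abs(w) for w in weights)
    scale = amax / qmax if amax > 0 else 1.0
    quants = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return scale, quants

def dequantize_block(scale, quants):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quants]

def mean_abs_error(weights, bits, block_size=32):
    """Average round-trip error when weights are quantized block by block."""
    total = 0.0
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        scale, quants = quantize_block(block, bits)
        restored = dequantize_block(scale, quants)
        total += sum(abs(a - b) for a, b in zip(block, restored))
    return total / len(weights)

# Hypothetical weights: small random values standing in for a real layer.
random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(1024)]

err8 = mean_abs_error(weights, bits=8)
err4 = mean_abs_error(weights, bits=4)
print(f"8-bit mean abs error: {err8:.6f}")
print(f"4-bit mean abs error: {err4:.6f}")
```

The 4-bit round trip loses more detail than the 8-bit one, which is the trade-off behind the quantization levels: fewer bits mean a smaller, faster model at some cost in fidelity.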

Key Takeaways:

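- The M1 Pro delivers impressive processing speed, ranging from about 232 to 302 tokens per second across these configurations.

- Quantization speeds up generation noticeably: on the 16-core chip, Q8_0 generates 22.34 tokens per second and Q4_0 generates 36.41, compared with 12.75 for F16. F16 remains the fastest at processing (302.14 tokens per second).

- While generation speeds are much lower than processing speeds, the M1 Pro still handles a respectable 13 to 36 tokens per second, depending on the format.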
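The relative differences are easier to see as ratios. The short script below is a sketch that only re-uses the figures from the table above; the `speedup` helper and the dictionary layout are our own naming, not part of any benchmark tool.

```python
# Tokens-per-second figures copied from the benchmark table above:
# (GPU cores, format) -> (processing, generation)
BENCHMARKS = {
    (14, "Q8_0"): (235.16, 21.95),
    (14, "Q4_0"): (232.55, 35.52),
    (16, "F16"): (302.14, 12.75),
    (16, "Q8_0"): (270.37, 22.34),
    (16, "Q4_0"): (266.25, 36.41),
}

def speedup(gpu_cores, fmt, baseline, phase):
    """Return how many times faster `fmt` is than `baseline` for one phase."""
    index = {"processing": 0, "generation": 1}[phase]
    fast = BENCHMARKS[(gpu_cores, fmt)][index]
    slow = BENCHMARKS[(gpu_cores, baseline)][index]
    return fast / slow

for fmt in ("Q8_0", "Q4_0"):
    gen = speedup(16, fmt, "F16", "generation")
    proc = speedup(16, fmt, "F16", "processing")
    print(f"{fmt} vs F16 on 16 cores: generation x{gen:.2f}, processing x{proc:.2f}")
```

On these numbers, Q4_0 generates close to three times as fast as F16 while giving up a little over a tenth of the processing speed.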

Performance Analysis - Model and Device Comparison: Apple M1 Pro vs. Other Options

How does the M1 Pro's performance compare to other options? Let's look at some benchmarking studies for context.

Note: We're focusing on the Llama2 7B model and the M1 Pro in this article; the data provided does not cover all possible configurations.

A quick analogy: Imagine your LLM as a car. The hardware (like the M1 Pro) is the engine, and the model size (Llama2 7B) is the car's weight. A bigger engine can handle a heavier car, and a smaller engine might struggle. Similarly, a more powerful device (like M1 Pro) can handle larger LLMs more efficiently.

Practical Implications:

- If you are looking for the fastest generation, the Q4_0 quantization level is the winner in these benchmarks.

- Q8_0 offers a balance between speed and accuracy, while F16 keeps the unquantized half-precision weights and might be suitable for applications where you prioritize accuracy over speed.

- Larger LLMs might require more powerful hardware, so the M1 Pro may not be the best choice for models like Llama2 70B.
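A rough back-of-the-envelope calculation shows why model size matters here. The sketch below estimates the memory needed for the weights alone. Several things in it are assumptions rather than measurements: the nominal parameter counts (7 and 70 billion), the bits-per-weight figures (16 for F16, and about 8.5 and 4.5 for Q8_0 and Q4_0, which allow for a per-block scale factor), and the 16 GB and 32 GB memory sizes used for the check. Real usage is higher because the context cache and the operating system also need memory.

```python
# Assumed average storage cost per weight, in bits.
BITS_PER_WEIGHT = {"F16": 16.0, "Q8_0": 8.5, "Q4_0": 4.5}

def weight_memory_gb(n_params, fmt):
    """Approximate size of the model weights in gigabytes (10^9 bytes)."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9

def fits(n_params, fmt, ram_gb):
    """True if the weights alone are smaller than the given memory size."""
    return weight_memory_gb(n_params, fmt) < ram_gb

# Nominal parameter counts; the real models differ slightly.
MODELS = {"Llama2 7B": 7e9, "Llama2 70B": 70e9}

for name, n_params in MODELS.items():
    for fmt in BITS_PER_WEIGHT:
        size = weight_memory_gb(n_params, fmt)
        verdict = "fits" if fits(n_params, fmt, 32) else "does not fit"
        print(f"{name} {fmt}: about {size:.1f} GB, {verdict} in 32 GB")
```

Under these assumptions every 7B variant fits comfortably, while even the 4-bit 70B model needs more than 32 GB for its weights alone.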

Practical Recommendations: Use Cases and Workarounds

Now that we have a better understanding of the M1 Pro's capabilities, let's explore some practical scenarios where running Llama2 7B on this chip makes sense:

Ideal Use Cases:

- Text Generation: The M1 Pro can handle text generation tasks like writing stories, poems, articles, or even code snippets.

- Summarization: Need to shorten a lengthy document? The M1 Pro can help you condense it into a concise summary.

- Translation: Llama2 7B on the M1 Pro can translate text between languages, which is handy for multilingual projects, though quality varies by language.

- Question Answering: Ask your LLM questions and get informative answers - all on your M1 Pro.
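To get a feel for what these speeds mean in practice, the sketch below estimates response time as prompt tokens divided by processing speed plus output tokens divided by generation speed. It uses the 16-core Q4_0 figures from the benchmark table; the workload sizes are hypothetical, and the calculation assumes the speeds stay constant regardless of prompt length, which is a simplification.

```python
def response_time_seconds(prompt_tokens, output_tokens, processing_tps, generation_tps):
    """Estimate total time: read the prompt, then generate the output."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# 16-core M1 Pro, Llama2 7B Q4_0, from the benchmark table.
PROCESSING_TPS = 266.25
GENERATION_TPS = 36.41

# Hypothetical workloads: (description, prompt tokens, output tokens).
WORKLOADS = [
    ("short question", 50, 150),
    ("summarize a long document", 1000, 200),
]

for description, prompt_tokens, output_tokens in WORKLOADS:
    seconds = response_time_seconds(
        prompt_tokens, output_tokens, PROCESSING_TPS, GENERATION_TPS
    )
    print(f"{description}: about {seconds:.1f} s")
```

Because generation is so much slower than processing, the length of the output dominates the wait for most of these tasks.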

Workarounds & Considerations:

- Memory Constraints: The M1 Pro tops out at 32 GB of unified memory, so running very large LLMs (like Llama2 70B) might be challenging. Consider using techniques like model quantization to reduce memory usage.

- Performance Trade-Off: While the M1 Pro is powerful, it won't match the speed of high-end GPUs. If you need the absolute fastest performance, you might want to consider a more powerful device.

FAQ: Frequently Asked Questions

What is quantization?

Quantization is like compressing a large language model to make it smaller. It's similar to how you might compress a video file to make it take up less storage space.

How do I choose the right LLM for my project?

The best way to choose an LLM depends on your project needs. Consider factors like model size, performance, accuracy, and the specific tasks you need to achieve. For example, a smaller model like Llama2 7B might be sufficient for basic tasks, while a larger model (like Llama2 70B) might be required for more complex or specialized applications.

What are the benefits of running LLMs locally?

Running LLMs locally offers several benefits, including:

- Privacy: Keep your data secure and control your privacy by avoiding cloud-based services.

- Offline Access: Work seamlessly even without internet access.

- Speed: Local processing avoids network round trips, so responses can start sooner than with cloud-based solutions, although raw throughput depends on your hardware.

Can I run LLMs on my iPhone?

While running larger LLMs like Llama2 7B on an iPhone is currently not feasible due to hardware limitations, you can explore smaller models and experiment with simpler tasks. The ongoing advancements in mobile chip technology might bring us closer to running these models on smartphones in the future.
