Cloud vs. Local: When to Choose NVIDIA RTX A6000 48GB for Your AI Infrastructure

Introduction

The world of artificial intelligence (AI) is exploding, and large language models (LLMs) are at the forefront of this revolution. These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these models requires serious computing power – think of it like needing a super-fast car to drive on a racetrack.

One way to access this power is through cloud computing. You rent the resources you need and pay only for what you use. But another option is to buy your own powerful hardware and run your LLMs locally.

This article will delve into the world of local AI infrastructure, focusing on the NVIDIA RTX A6000 48GB graphics card and its capabilities when it comes to running LLMs. We'll compare the performance of this card with cloud options and explore what makes it a good choice for specific AI scenarios. So, grab your coffee (or your favorite AI-brewed beverage!), and let's dive in!

NVIDIA RTX A6000 48GB: A Powerful Workhorse for LLMs

The NVIDIA RTX A6000 48GB is a powerhouse of a graphics card specifically designed for demanding workloads like AI and deep learning. It's packed with features that make it a great choice for running LLMs locally:

- Ample Memory: Its 48GB of GDDR6 memory lets many large language models load entirely into the card's memory, including quantized models as large as Llama 3 70B. Keeping the whole model on the card avoids slow transfers from system memory or disk and speeds up processing. Imagine it like having a spacious garage to store all your tools for a big project, rather than having to run back and forth to the storage unit!

- High-Performance CUDA Cores: With 10,752 CUDA cores, the RTX A6000 offers the massive parallel processing power that AI calculations depend on. It's like having a team of expert elves working tirelessly on your project, churning out results at warp speed!

- Versatile Architecture: The NVIDIA Ampere architecture behind the RTX A6000 offers advanced features like Tensor Cores, which are specialized processors optimized for matrix operations – the backbone of many AI algorithms. Think of Tensor Cores as super-powered calculators that make AI tasks run super fast.

The Local Advantage: Control and Cost-Efficiency

Now, let's talk about the benefits of running your LLM infrastructure locally:

- Full Control: You have complete control over your setup, from the operating system to the software stack. This allows you to fine-tune your environment for optimal performance and security. Imagine being the chef in your own kitchen, choosing exactly the ingredients and techniques you need to create a culinary masterpiece!

- Potential Cost Savings: While the initial investment for hardware like the RTX A6000 might seem steep, over time, it can be more cost-effective than renting cloud resources for heavy-duty AI tasks. Think of it like owning a reliable car versus renting one for every trip – you might save more in the long run.
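
To make the rent-versus-buy comparison concrete, here is a minimal break-even sketch. Every number in it is a placeholder assumption, not a real price quote: plug in the hardware price you are offered, the hourly rate of the cloud instance you would otherwise rent, and your own estimate of local running costs (mostly electricity). The function name `break_even_hours` is made up for this illustration.

```python
def break_even_hours(hardware_cost: float,
                     cloud_rate_per_hour: float,
                     local_cost_per_hour: float = 0.0) -> float:
    """Return the number of GPU-hours after which buying beats renting."""
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        raise ValueError("cloud must cost more per hour than running locally")
    return hardware_cost / saving_per_hour


# Placeholder figures (assumptions for illustration only, not real quotes).
hours = break_even_hours(hardware_cost=4500.0,
                         cloud_rate_per_hour=1.0,
                         local_cost_per_hour=0.1)
print(f"Break-even after about {hours:.0f} GPU-hours")
```

If your expected usage over the life of the card is well above the break-even figure, owning the hardware is likely the cheaper route. If it is well below, renting probably wins.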

Comparing NVIDIA RTX A6000 48GB with Cloud Options: A Head-to-Head Showdown!

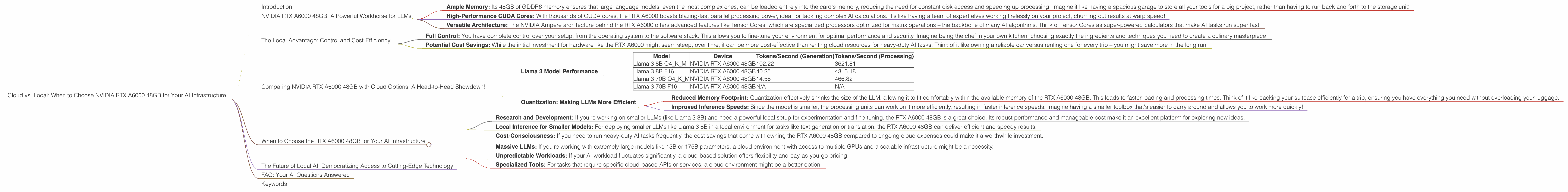

To truly understand the potential of the RTX A6000 48GB, we need numbers that can be set against popular cloud computing options. Let's look at some published benchmarks for Llama 3 and see how the local setup fares:

Llama 3 Model Performance

| Model | Device | Tokens/Second (Generation) | Tokens/Second (Processing) |
|---|---|---|---|
| Llama 3 8B Q4_K_M | NVIDIA RTX A6000 48GB | 102.22 | 3621.81 |
| Llama 3 8B F16 | NVIDIA RTX A6000 48GB | 40.25 | 4315.18 |
| Llama 3 70B Q4_K_M | NVIDIA RTX A6000 48GB | 14.58 | 466.82 |
| Llama 3 70B F16 | NVIDIA RTX A6000 48GB | N/A | N/A |

Note: Data is derived from publicly available benchmarks.

Generation: Llama 3 8B Q4_K_M running on the RTX A6000 48GB generates 102.22 tokens per second, the fastest generation rate in the table and quick enough for interactive use on a local machine.

Processing: The RTX A6000 48GB is far faster at processing input tokens than at generating new ones. The Llama 3 8B F16 model reaches 4,315.18 tokens per second, ahead of the 3,621.81 tokens per second of the quantized 8B model. This means you can handle long prompts and large volumes of text efficiently and quickly.
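
The two throughput columns can be combined into a rough response-time estimate: time to process the prompt plus time to generate the answer. The sketch below is only a back-of-the-envelope calculation. The rates are copied from the table, while the short model keys, the function name `estimate_seconds`, and the prompt and output lengths are made up for this illustration. Real latency also depends on batch size, context length, and software overhead.

```python
# Tokens per second, copied from the benchmark table above.
BENCHMARKS = {
    "8B-Q4": {"processing": 3621.81, "generation": 102.22},
    "8B-F16": {"processing": 4315.18, "generation": 40.25},
    "70B-Q4": {"processing": 466.82, "generation": 14.58},
}


def estimate_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough latency: prompt processing time plus generation time."""
    rates = BENCHMARKS[model]
    return (prompt_tokens / rates["processing"]
            + output_tokens / rates["generation"])


# Hypothetical request: a 1,000-token prompt and a 200-token answer.
for model in BENCHMARKS:
    seconds = estimate_seconds(model, prompt_tokens=1000, output_tokens=200)
    print(f"{model}: about {seconds:.1f} s")
```

For typical chat-style requests the generation term dominates, which is why the generation column matters most for interactive use.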

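Scaling It Up: The performance of the RTX A6000 48GB is impressive for Llama 3 8B models. When you move up to Llama 3 70B, throughput drops to 14.58 tokens per second for generation and 466.82 for processing. This is expected, as a model with roughly nine times as many parameters needs far more computation and memory traffic per token. Notably, the RTX A6000 48GB can still handle the 70B model locally in quantized form. The table lists no result for 70B F16, most likely because 16-bit weights for 70 billion parameters take about 140GB, far more than the card's 48GB. If you're working with models of that size or larger, a cloud solution might be more suitable.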
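
A quick way to judge whether a model will fit on the card is to multiply its parameter count by the bits stored per weight. The sketch below does that arithmetic. The figure of 4.5 bits per weight for 4-bit quantization is a rough assumption (quantized formats store some extra scaling data), the parameter counts are the nominal 8 and 70 billion, and the function name `weight_size_gb` is made up for this illustration. The estimate covers the weights only; the context cache and working buffers need additional memory on top.

```python
GPU_MEMORY_GB = 48.0  # memory on the RTX A6000


def weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


# (label, parameters in billions, bits per weight); 4.5 is an assumed average.
models = [
    ("8B, 4-bit", 8, 4.5),
    ("8B, F16", 8, 16),
    ("70B, 4-bit", 70, 4.5),
    ("70B, F16", 70, 16),
]

for label, params, bits in models:
    size = weight_size_gb(params, bits)
    verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
    print(f"{label}: about {size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB:.0f} GB")
```

Under these assumptions, only the 70B model at F16 exceeds the card's memory, which is consistent with the N/A row in the table.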

Quantization: Making LLMs More Efficient

One technique that helps improve the performance of LLMs on devices like the RTX A6000 is quantization, which stores each of the model's weights with fewer bits. Think of it as compressing the model to make it smaller and faster, without losing too much accuracy. The Q4_K_M scheme used in the benchmarks is a 4-bit quantization format, so each weight takes roughly a quarter of the space it needs in F16.

Here's how it contributes to performance:

- Reduced Memory Footprint: Quantization effectively shrinks the size of the LLM, allowing it to fit comfortably within the available memory of the RTX A6000 48GB. This leads to faster loading and processing times. Think of it like packing your suitcase efficiently for a trip, ensuring you have everything you need without overloading your luggage.

- Improved Generation Speeds: Since the model is smaller, fewer bytes have to be read from GPU memory for each generated token, resulting in faster generation. In the benchmarks above, Llama 3 8B generates 102.22 tokens per second when quantized versus 40.25 in F16. Imagine having a smaller toolbox that's easier to carry around and allows you to work more quickly!
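
To show the basic idea behind 4-bit quantization, here is a toy sketch in plain Python. It is not the actual scheme used in the benchmarks, which is more elaborate; it is a simplified block-wise version in which a group of weights shares one scale factor and each weight is stored as a small integer between -8 and 7. The function names and the sample weights are made up for this illustration.

```python
def quantize_block(values):
    """Map floats to 4-bit signed integers (-8..7) sharing one scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights for one block.
weights = [0.12, -0.53, 0.88, -0.07, 0.31, -0.95, 0.44, 0.02]
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each weight now needs 4 bits instead of 16, at the price of a small rounding error that is bounded by half the scale factor. That trade is what the "without losing too much accuracy" caveat refers to.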

When to Choose the RTX A6000 48GB for Your AI Infrastructure

So, now that we've explored the strengths and weaknesses of the RTX A6000 48GB, let's break down when it's a perfect fit for your AI needs:

Ideal Scenarios:

- Research and Development: If you're working on smaller LLMs (like Llama 3 8B) and need a powerful local setup for experimentation and fine-tuning, the RTX A6000 48GB is a great choice. Its robust performance and manageable cost make it an excellent platform for exploring new ideas.

- Local Inference for Smaller Models: For deploying smaller LLMs like Llama 3 8B in a local environment for tasks like text generation or translation, the RTX A6000 48GB can deliver efficient and speedy results.

- Cost-Consciousness: If you need to run heavy-duty AI tasks frequently, the cost savings that come with owning the RTX A6000 48GB compared to ongoing cloud expenses could make it a worthwhile investment.

Situations Where Cloud Might Be Better:

- Massive LLMs: If you're working with extremely large models, such as those with 175B parameters, or with 70B models at full F16 precision, a cloud environment with access to multiple GPUs and a scalable infrastructure might be a necessity.

- Unpredictable Workloads: If your AI workload fluctuates significantly, a cloud-based solution offers flexibility and pay-as-you-go pricing.

- Specialized Tools: For tasks that require specific cloud-based APIs or services, a cloud environment might be a better option.

The Future of Local AI: Democratizing Access to Cutting-Edge Technology

The NVIDIA RTX A6000 48GB is a testament to the exciting progress in local AI infrastructure. As hardware continues to improve and LLMs become more efficient, the line between cloud and local AI will blur even further. For developers and researchers, this means greater control, flexibility, and potentially even lower costs for harnessing the power of AI.

FAQ: Your AI Questions Answered

Q: What is the difference between the RTX A6000 48GB and the RTX 3090 when it comes to AI workloads?

The RTX A6000 48GB is designed for professional workloads, including AI and deep learning. Both cards are built on the Ampere architecture and include Tensor Cores, and the A6000 has only slightly more CUDA cores (10,752 versus 10,496). The main difference for AI work is memory: 48GB on the A6000 versus 24GB on the RTX 3090. That extra memory lets the A6000 hold models that do not fit on the 3090, such as a quantized Llama 3 70B. The RTX 3090 remains a capable card for smaller models, but its 24GB limits the size of the models it can run.

Q: What software do I need to run LLMs on the RTX A6000 48GB locally?

You'll need a suitable operating system (like Linux) and software libraries like CUDA, cuDNN, and the specific framework you're using for the LLM (for example, PyTorch or TensorFlow).
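
Once the driver and libraries are in place, a short check like the one below can confirm that the framework actually sees the card. This is a minimal sketch that assumes you chose PyTorch and installed a CUDA-enabled build. The helper name `describe_gpu` is made up for this illustration, and the function simply reports what it finds rather than failing when PyTorch or a GPU is missing.

```python
def describe_gpu() -> str:
    """Report whether PyTorch can see a CUDA GPU and how much memory it has."""
    try:
        import torch  # assumption: a CUDA-enabled PyTorch build is installed
    except ImportError:
        return "PyTorch is not installed"
    if not torch.cuda.is_available():
        return "PyTorch is installed, but no CUDA device is visible"
    props = torch.cuda.get_device_properties(0)  # first GPU in the system
    total_gib = props.total_memory / 1024**3
    return f"{props.name}: {total_gib:.1f} GiB of GPU memory"


print(describe_gpu())
```

If the message says no CUDA device is visible, the usual suspects are a missing or outdated NVIDIA driver or a CPU-only build of the framework.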

Q: Is the RTX A6000 48GB suitable for running other AI applications besides LLMs?

Absolutely! It's a powerful card capable of handling a wide range of AI applications, including image and video processing, 3D modeling, and other deep learning tasks.

Q: How much does the RTX A6000 48GB cost?

The cost of an RTX A6000 48GB can vary depending on the vendor and current market conditions. However, it's important to remember that this cost should be considered against the potential savings you might achieve by running your AI workloads locally compared to paying for cloud resources.

Keywords

NVIDIA RTX A6000 48GB, Llama 3, AI, LLM, local AI, cloud computing, GPU, performance, quantization, research, development, cost-efficiency, token speed, inference, hardware, software, CUDA, cuDNN, PyTorch, TensorFlow.