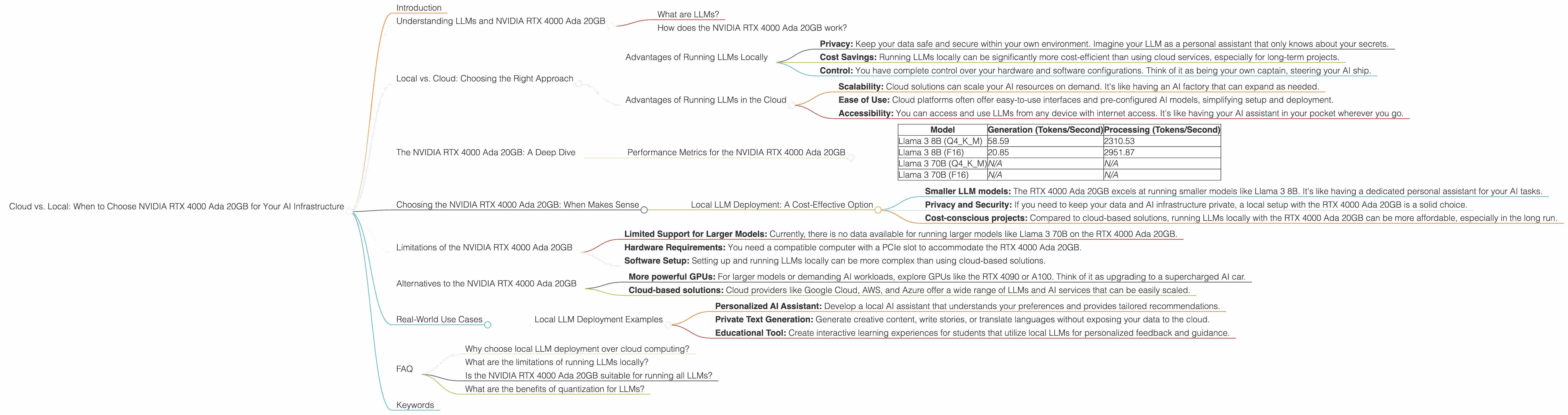

Cloud vs. Local: When to Choose NVIDIA RTX 4000 Ada 20GB for Your AI Infrastructure

Introduction

Large language models (LLMs) such as Llama 2 and the models behind ChatGPT and Bard can generate text, translate languages, write creative content, and answer questions. When it comes to running them, one question comes up quickly: cloud or local? The answer depends on your needs and budget. This article looks at the NVIDIA RTX 4000 Ada 20GB, examines how well it runs LLMs locally, and helps you decide whether it fits your AI infrastructure.

Understanding LLMs and NVIDIA RTX 4000 Ada 20GB

What are LLMs?

An LLM is a program trained on massive datasets of text and code to predict and generate human language. Think about it like this: if a human brain is a library with millions of books, an LLM is a far larger library whose contents were fixed when training ended. It does not keep learning on its own; it gains new knowledge only when it is retrained or given extra context in the prompt.

How does the NVIDIA RTX 4000 Ada 20GB work?

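The NVIDIA RTX 4000 Ada 20GB is a professional workstation graphics card built on NVIDIA's Ada Lovelace architecture, with 20 GB of video memory (VRAM). Running an LLM consists mostly of large matrix multiplications, which a GPU executes in parallel far faster than a CPU, so the card works like a turbocharger for your computer. The 20 GB of VRAM matters as much as raw speed: a model's weights have to fit in that memory to run entirely on the GPU.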

Local vs. Cloud: Choosing the Right Approach

Advantages of Running LLMs Locally

- Privacy: Prompts and data never leave your own environment. Imagine your LLM as a personal assistant that keeps your secrets to itself.

- Cost Savings: After the one-time hardware purchase, running LLMs locally can be more cost-efficient than paying per hour or per token for cloud services, especially for sustained, long-term workloads.

- Control: You have complete control over your hardware and software configurations. Think of it as being your own captain, steering your AI ship.

Advantages of Running LLMs in the Cloud

- Scalability: Cloud solutions can scale your AI resources on demand. It's like having an AI factory that can expand as needed.

- Ease of Use: Cloud platforms often offer easy-to-use interfaces and pre-configured AI models, simplifying setup and deployment.

- Accessibility: You can access and use LLMs from any device with internet access. It's like having your AI assistant in your pocket wherever you go.

The NVIDIA RTX 4000 Ada 20GB: A Deep Dive

Performance Metrics for the NVIDIA RTX 4000 Ada 20GB

We measure the card's LLM performance with two metrics, both in tokens per second: generation speed (how fast new output tokens are produced) and processing speed (how fast the input prompt is read).

Note: The data below is based on benchmarks for Llama 3 models. Data for other models may vary and might not be available.

| Model | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 58.59 | 2310.53 |
| Llama 3 8B (F16) | 20.85 | 2951.87 |
| Llama 3 70B (Q4_K_M) | N/A | N/A |
| Llama 3 70B (F16) | N/A | N/A |

Explanation:

- Generation: The speed at which the model generates text output. Think of it as the chatbot's typing speed.

- Processing: The speed at which the model processes input data. Think of it as the chatbot's reading speed.

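- Q4_K_M (Quantization): A llama.cpp quantization format that stores most weights in roughly 4 bits instead of 16. The model takes far less memory and generates text faster (58.59 vs. 20.85 tokens/second in the table), at the cost of a small loss in accuracy. Imagine compressing a massive textbook into a smaller, more manageable edition.

- F16 (16-bit floating point): The weights are stored as 16-bit floating-point numbers, with no quantization. This keeps full precision but takes about 2 bytes per parameter. Think of it as the level of detail used in the model's thinking process.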
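To make the two metrics concrete, here is a minimal sketch that turns the table's throughput figures into a rough response time. The function name `estimate_latency_seconds` and the 1000-token prompt and 200-token reply are illustrative assumptions, and the estimate ignores model load time and other overhead.

```python
# Throughput figures copied from the benchmark table (tokens per second).
BENCHMARKS = {
    "Llama 3 8B Q4_K_M": {"generation": 58.59, "processing": 2310.53},
    "Llama 3 8B F16": {"generation": 20.85, "processing": 2951.87},
}


def estimate_latency_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Rough end-to-end time: read the prompt, then generate the reply."""
    speeds = BENCHMARKS[model]
    prompt_time = prompt_tokens / speeds["processing"]      # "reading" phase
    generation_time = output_tokens / speeds["generation"]  # "typing" phase
    return prompt_time + generation_time


if __name__ == "__main__":
    # Hypothetical workload: 1000-token prompt, 200-token reply.
    for name in BENCHMARKS:
        seconds = estimate_latency_seconds(name, prompt_tokens=1000, output_tokens=200)
        print(f"{name}: ~{seconds:.1f} s")
```

For this workload the estimate comes out at roughly 3.8 seconds for Q4_K_M and 9.9 seconds for F16. Generation dominates in both cases, which is why the quantized model feels faster even though its prompt processing is slower.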

Choosing the NVIDIA RTX 4000 Ada 20GB: When It Makes Sense

Local LLM Deployment: A Cost-Effective Option

The NVIDIA RTX 4000 Ada 20GB offers a powerful and cost-effective solution for running LLMs locally. Here's when it's a good choice:

- Smaller LLM models: The RTX 4000 Ada 20GB handles smaller models like Llama 3 8B well, generating about 58 tokens per second with 4-bit quantization in the benchmarks above.

- Privacy and Security: If you need to keep your data and AI infrastructure private, a local setup with the RTX 4000 Ada 20GB is a solid choice.

- Cost-conscious projects: Compared to cloud-based solutions, running LLMs locally with the RTX 4000 Ada 20GB can be more affordable, especially in the long run.
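Whether local hardware is cheaper "in the long run" comes down to a break-even point. The sketch below shows the arithmetic; the function name `break_even_hours` and all three example inputs are hypothetical placeholders, not real prices for this card or for any cloud provider, so substitute your own quotes.

```python
def break_even_hours(hardware_cost: float,
                     cloud_rate_per_hour: float,
                     local_cost_per_hour: float) -> float:
    """Hours of use after which buying hardware beats renting.

    hardware_cost: one-time purchase price of the local setup.
    cloud_rate_per_hour: hourly price of a comparable cloud GPU.
    local_cost_per_hour: running cost of the local machine (mostly electricity).
    """
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        # Renting is not more expensive per hour, so buying never pays off.
        return float("inf")
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    # Placeholder numbers for illustration only.
    hours = break_even_hours(hardware_cost=1000.0,
                             cloud_rate_per_hour=1.0,
                             local_cost_per_hour=0.2)
    print(f"Break-even after about {hours:.0f} hours of use")
```

The shape of the result matters more than the numbers: the more hours per month the GPU is actually busy, the sooner a local card pays for itself, while a rarely used card may never reach break-even.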

Limitations of the NVIDIA RTX 4000 Ada 20GB

While the NVIDIA RTX 4000 Ada 20GB is a great choice for local LLMs, it has some limitations:

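- Limited Support for Larger Models: There is no benchmark data for running larger models like Llama 3 70B on the RTX 4000 Ada 20GB, most likely because they do not fit in its memory. At 16 bits per weight, a 70B model needs about 140 GB for the weights alone, and even at 4 bits per weight it needs about 35 GB, well beyond 20 GB.

- Hardware Requirements: You need a compatible computer with a free PCIe slot and a power supply that can handle the card.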

- Software Setup: Setting up and running LLMs locally can be more complex than using cloud-based solutions.
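The memory limit can be estimated with simple arithmetic. The following is a rough sketch that counts model weights only; the helper names and the 4.8 bits-per-weight figure for a 4-bit quantized file are assumptions for illustration, not measured values, and real usage is higher because the context cache and runtime buffers also need memory.

```python
VRAM_GB = 20.0  # memory on the RTX 4000 Ada 20GB


def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(params_billions: float, bits_per_weight: float,
                 vram_gb: float = VRAM_GB) -> bool:
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(params_billions, bits_per_weight) < vram_gb


if __name__ == "__main__":
    # Assumption: 16 bits per weight for F16, about 4.8 for 4-bit quantization.
    configs = [
        ("Llama 3 8B, F16", 8, 16.0),
        ("Llama 3 8B, 4-bit", 8, 4.8),
        ("Llama 3 70B, F16", 70, 16.0),
        ("Llama 3 70B, 4-bit", 70, 4.8),
    ]
    for label, params, bits in configs:
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{label}: ~{size:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both 8B variants fit and neither 70B variant does, which is consistent with the N/A rows in the benchmark table.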

Alternatives to the NVIDIA RTX 4000 Ada 20GB

If the NVIDIA RTX 4000 Ada 20GB doesn't meet your needs, consider these alternatives:

- More powerful GPUs: For larger models or demanding AI workloads, explore GPUs with more memory, such as the RTX 4090 (24 GB) or the A100 (40 GB or 80 GB). Think of it as upgrading to a supercharged AI car.

- Cloud-based solutions: Cloud providers like Google Cloud, AWS, and Azure offer a wide range of LLMs and AI services that can be easily scaled.

Real-World Use Cases

Local LLM Deployment Examples

- Personalized AI Assistant: Develop a local AI assistant that understands your preferences and provides tailored recommendations.

- Private Text Generation: Generate creative content, write stories, or translate languages without exposing your data to the cloud.

- Educational Tool: Create interactive learning experiences for students that utilize local LLMs for personalized feedback and guidance.

FAQ

Why choose local LLM deployment over cloud computing?

Local deployment offers greater privacy, cost savings, and control over your AI infrastructure. It's like having your own AI server in your basement.

What are the limitations of running LLMs locally?

Local LLM deployment can be more complex to set up, and it cannot handle models that exceed your GPU's memory. It's like having a small, personal AI lab that might not be suitable for large-scale projects.

Is the NVIDIA RTX 4000 Ada 20GB suitable for running all LLMs?

The NVIDIA RTX 4000 Ada 20GB is best suited for smaller LLMs like Llama 3 8B, offering a balance of performance and cost-effectiveness. Larger models might require more powerful hardware.

What are the benefits of quantization for LLMs?

Quantization stores a model's weights with fewer bits, which shrinks its memory footprint and usually speeds up text generation, at the cost of a small loss in accuracy. Think of it as streamlining your AI program so it runs on smaller hardware.
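The idea can be illustrated with a toy example. The sketch below applies simple symmetric 4-bit quantization to a small block of weights using one shared scale factor. It is a simplified illustration only, not the actual Q4_K_M scheme, and the function names and sample weights are made up for the example.

```python
def quantize_block(values: list[float], bits: int = 4) -> tuple[float, list[int]]:
    """Map floats to small signed integers that share one scale factor."""
    max_level = 2 ** (bits - 1) - 1           # 7 for 4-bit codes
    largest = max(abs(v) for v in values)
    scale = largest / max_level if largest > 0 else 1.0
    codes = [round(v / scale) for v in values]  # each code lies in [-7, 7]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate floats from the stored codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.62, -0.31, 0.08, -0.55, 0.0, 0.27]  # made-up sample values
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print(f"largest rounding error: {worst:.4f}")
```

Each weight is now a 4-bit code plus a shared scale instead of a 16-bit float, which is where the memory saving comes from. The rounding error, at most half the scale per weight, is the accuracy cost.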
