Cloud vs. Local: When to Choose NVIDIA L40S 48GB for Your AI Infrastructure

Introduction

Are you tired of being at the mercy of cloud providers for your Large Language Model (LLM) needs? Do you yearn for the power of having your own, custom-built AI infrastructure? Then you've come to the right place!

This article delves into the world of local LLMs and compares the NVIDIA L40S_48GB with the cloud. We'll explore how Llama 3 models (8B and 70B) perform on this powerhouse GPU, looking at token generation and token processing speeds. By the end, you'll have a clear picture of whether the L40S_48GB is the right fit for your specific AI needs.

Understanding the LLM Landscape: Cloud vs. Local

Think of LLMs as the brains of AI applications. They're the models that power chatbots, code assistants, and even creative writing tools. These models, trained on vast amounts of text data, can understand, generate, and manipulate language in amazing ways.

But where do you run these LLMs? Traditionally, the cloud has dominated the scene. Cloud providers like Google, Amazon, and Microsoft offer powerful, scalable solutions, making it easy to access and utilize LLMs without the hassle of hardware management.

However, things are changing. The rise of local LLMs has opened up a world of possibilities for developers and enthusiasts seeking more control and efficiency. Running your LLM locally means you own the hardware, allowing you to tweak resources, optimize performance, and even create custom solutions.

NVIDIA L40S_48GB: A Beast for LLMs

The NVIDIA L40S_48GB is a heavyweight champion when it comes to AI hardware. This GPU pairs 48GB of GDDR6 memory with NVIDIA's Ada Lovelace architecture, which is optimized for AI workloads. That makes it a strong contender for tackling the demands of large, complex LLMs.

The NVIDIA L40S_48GB Performance Breakdown: Numbers Speak Louder Than Words

Let's get down to brass tacks and see how well the L40S_48GB performs with our favorite Llama models.

Note: The data in this article is based on the analysis of various benchmarks focusing on the performance of Llama models on the L40S_48GB. Some data may not be available for specific model combinations.

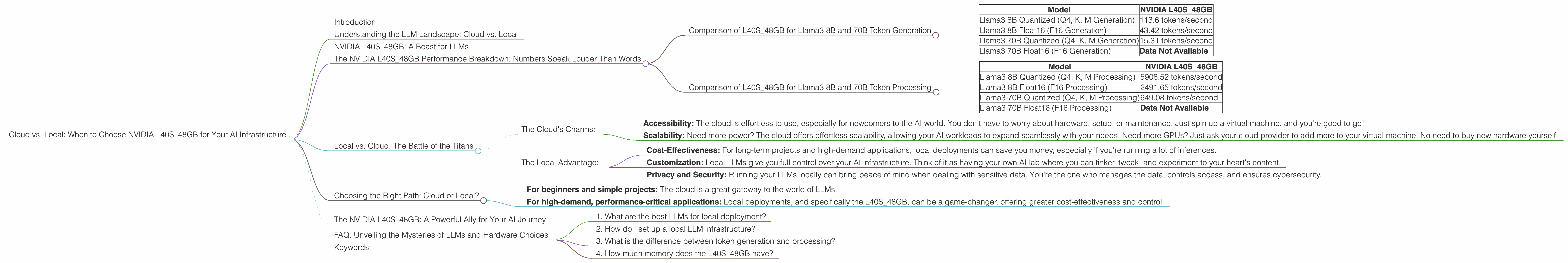

Comparison of L40S_48GB for Llama3 8B and 70B Token Generation

| Model | Token generation on NVIDIA L40S_48GB (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M (quantized) | 113.6 |
| Llama3 8B F16 (float16) | 43.42 |
| Llama3 70B Q4_K_M (quantized) | 15.31 |
| Llama3 70B F16 (float16) | Data not available |

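Results: The L40S_48GB generates 113.6 tokens/second with the quantized Llama 3 8B model, about 2.6 times the F16 rate of 43.42 tokens/second. The quantized 70B model runs at 15.31 tokens/second. No F16 figure is available for the 70B model, which is not surprising: at 2 bytes per parameter, its weights alone would need roughly 140GB, far more than the card's 48GB.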

What's Quantization? Think of quantization as a clever way to compress the information stored in LLMs. Just as you use a smaller suitcase to pack for a short trip, quantization shrinks the model by storing each weight in fewer bits (roughly 4 to 5 bits for Q4_K_M instead of 16 for F16) without sacrificing too much accuracy. Quantization often leads to faster inference speeds, which is a big win for local LLM deployments.
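To make the suitcase analogy concrete, here is a minimal sketch that estimates how much memory the weights need in each format. The bits-per-weight figure for Q4_K_M is an assumed average (real files vary by model), and the helper names `weight_memory_gb` and `fits_on_gpu` are made up for this illustration. The estimate covers weights only, so the KV cache and runtime overhead come on top.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Bits-per-weight values are assumptions, not measured file sizes.
GPU_MEMORY_GB = 48  # memory on the L40S_48GB

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,  # assumed average; actual Q4_K_M files vary by model
}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def fits_on_gpu(params_billion: float, fmt: str, gpu_gb: float = GPU_MEMORY_GB) -> bool:
    """True if the weights alone fit; ignores KV cache and runtime overhead."""
    return weight_memory_gb(params_billion, BITS_PER_WEIGHT[fmt]) < gpu_gb


if __name__ == "__main__":
    for params in (8, 70):
        for fmt in BITS_PER_WEIGHT:
            size = weight_memory_gb(params, BITS_PER_WEIGHT[fmt])
            print(f"Llama3 {params}B {fmt}: ~{size:.1f} GB, fits={fits_on_gpu(params, fmt)}")
```

Under these assumptions, the 70B model only fits on a single 48GB card in its quantized form, and even then with little headroom left for context.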

Comparison of L40S_48GB for Llama3 8B and 70B Token Processing

| Model | Token processing on NVIDIA L40S_48GB (tokens/second) |
|---|---|
| Llama3 8B Q4_K_M (quantized) | 5908.52 |
| Llama3 8B F16 (float16) | 2491.65 |
| Llama3 70B Q4_K_M (quantized) | 649.08 |
| Llama3 70B F16 (float16) | Data not available |

Results: Prompt processing is far faster than generation on the L40S_48GB. The quantized 8B model processes 5908.52 tokens/second, about 2.4 times the F16 rate of 2491.65 tokens/second, and even the quantized 70B model reaches 649.08 tokens/second. As with generation, no F16 figure is available for the 70B model.
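What do these numbers mean for a single request? A rough sketch, assuming total time is simply prompt tokens divided by processing speed plus output tokens divided by generation speed. The throughput values come from the two tables in this article; the function name `estimate_seconds` and the 1,000-token prompt with a 200-token reply are hypothetical. Real latency also depends on batching, context length, and the serving software.

```python
# Throughput figures (tokens/second) copied from the two tables in this article.
PROCESSING_TPS = {"8B Q4_K_M": 5908.52, "8B F16": 2491.65, "70B Q4_K_M": 649.08}
GENERATION_TPS = {"8B Q4_K_M": 113.6, "8B F16": 43.42, "70B Q4_K_M": 15.31}


def estimate_seconds(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Time to read the prompt plus time to generate the reply, for one request."""
    read_time = prompt_tokens / PROCESSING_TPS[model]
    write_time = output_tokens / GENERATION_TPS[model]
    return read_time + write_time


if __name__ == "__main__":
    # Hypothetical request: 1,000-token prompt, 200-token answer.
    for model in GENERATION_TPS:
        print(f"Llama3 {model}: ~{estimate_seconds(model, 1000, 200):.1f} s")
```

For typical chat-style requests the generation term dominates, which is why the generation table matters most for interactive use.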

Local vs. Cloud: The Battle of the Titans

Now, you might be wondering: "Why should I bother with local deployments when the cloud seems so easy?" This is where the real fun begins!

The Cloud's Charms:

- Accessibility: The cloud is effortless to use, especially for newcomers to the AI world. You don't have to worry about hardware, setup, or maintenance. Just spin up a virtual machine, and you're good to go!

- Scalability: Need more power? The cloud offers effortless scalability, allowing your AI workloads to expand seamlessly with your needs. Need more GPUs? Just ask your cloud provider to add more to your virtual machine. No need to buy new hardware yourself.

The Local Advantage:

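- Cost-Effectiveness: For long-term projects and high-demand applications, local deployments can save you money. The hardware is a one-time purchase, while cloud GPUs are billed for as long as they run, so the more inferences you run, the sooner the purchase pays for itself.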

- Customization: Local LLMs give you full control over your AI infrastructure. Think of it as having your own AI lab where you can tinker, tweak, and experiment to your heart's content.

- Privacy and Security: Running your LLMs locally can bring peace of mind when dealing with sensitive data. You're the one who manages the data, controls access, and ensures cybersecurity.
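The cost-effectiveness point can be checked with a simple break-even estimate. This is only a sketch: the function name `break_even_hours` is made up here, and every price below is a placeholder, not a real quote. Plug in your own hardware cost, cloud hourly rate, and local running cost (power, cooling, hosting).

```python
def break_even_hours(hardware_cost: float, cloud_rate_per_hour: float,
                     local_cost_per_hour: float = 0.0) -> float:
    """Hours of GPU use after which buying beats renting."""
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        # Renting is never more expensive per hour, so buying never pays off.
        return float("inf")
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    # Placeholder numbers only; substitute real quotes before deciding.
    hours = break_even_hours(hardware_cost=10_000.0,
                             cloud_rate_per_hour=1.50,
                             local_cost_per_hour=0.25)
    print(f"Break-even after ~{hours:.0f} GPU-hours "
          f"(~{hours / 24:.0f} days of continuous use)")
```

If your GPU would sit idle most of the day, the break-even point moves out accordingly, which is where the cloud's pay-as-you-go model tends to win.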

Choosing the Right Path: Cloud or Local?

So, how do you choose the right path? It all depends on your needs and priorities.

- For beginners and simple projects: The cloud is a great gateway to the world of LLMs.

- For high-demand, performance-critical applications: Local deployments, and specifically the L40S_48GB, can be a game-changer, offering greater cost-effectiveness and control.

The NVIDIA L40S_48GB: A Powerful Ally for Your AI Journey

The NVIDIA L40S_48GB is a powerful tool for developers and enthusiasts looking to take their AI projects to the next level. Its high memory capacity and optimized architecture make it a strong contender for handling the computational demands of advanced LLMs.

Whether you choose to explore the cloud or dive into the world of local LLMs, the L40S_48GB offers a compelling option for those seeking high-performance AI infrastructure. It's a solid choice for anyone who wants to run large LLMs locally and enjoy the benefits of customization and cost-effectiveness.

FAQ: Unveiling the Mysteries of LLMs and Hardware Choices

1. What are the best LLMs for local deployment?

Many open-source LLMs are well-suited for local deployments. Examples include Llama 3, GPT-NeoX, and others! It's really about matching the model size and your hardware capabilities.

2. How do I set up a local LLM infrastructure?

Setting up a local LLM infrastructure can involve building your own AI rig or finding a cloud provider offering custom configurations. The process involves deciding on your hardware components, software, and the LLM you want to run. Be sure to check out resources like the Hugging Face community and the NVIDIA Developer site for guidance.

3. What is the difference between token generation and processing?

Tokens are the small units of text (words or pieces of words) that an LLM reads and writes. Token processing, often called prompt processing, is the speed at which the model reads the tokens of your input prompt. Because the whole prompt is available up front, it can be handled in parallel, so this number is high. Token generation is the speed at which the model produces new output tokens. These are created one at a time, each depending on the ones before it, so this number is much lower.

4. How much memory does the L40S_48GB have?

The NVIDIA L40S_48GB features a whopping 48GB of GDDR6 memory. That's a lot of room for large LLMs!
