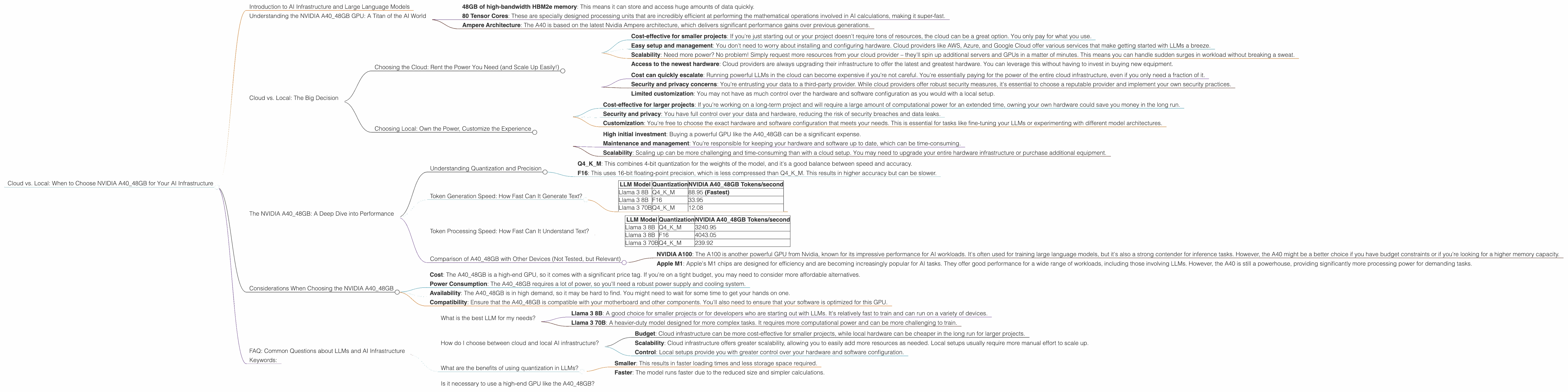

Cloud vs. Local: When to Choose NVIDIA A40 48GB for Your AI Infrastructure

Introduction to AI Infrastructure and Large Language Models

The world of Artificial Intelligence (AI) is rapidly evolving, and large language models (LLMs) are at the forefront of this revolution. LLMs are powerful AI systems capable of understanding and generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way. They're like the brainy kids in school who can learn anything and then teach it to others.

However, running these LLMs requires significant computational power. Think of it like a race car: it needs a powerful engine to go fast! For LLMs, that engine is specialized hardware, above all GPUs with enough fast memory to hold the model.

One of the big decisions you'll face when building your AI infrastructure is whether to run your LLMs on the cloud or locally, using your own hardware. This article focuses on one specific GPU, the Nvidia A40_48GB and its performance with various LLM configurations. We'll compare the benefits of each approach to help you make an informed decision for your project.

Understanding the NVIDIA A40_48GB GPU: A Titan of the AI World

The NVIDIA A40_48GB is a behemoth in the world of GPUs, a data center card designed for demanding visual computing and AI workloads. Imagine a supercomputer squeezed onto a single card - that's the A40! It comes with features that accelerate LLM training and inference, including:

- 48GB of GDDR6 memory with ECC: This lets the card hold large models entirely in GPU memory, with roughly 696 GB/s of memory bandwidth to move that data quickly.

- 336 third-generation Tensor Cores: These are specialized processing units that accelerate the matrix math at the heart of AI workloads.

- Ampere architecture: The A40 is built on NVIDIA's Ampere architecture, which brought significant performance gains over previous generations.

Cloud vs. Local: The Big Decision

Now, let's get into the meat of the matter: cloud vs. local. Both approaches have their pros and cons, but it all boils down to your specific needs and budget.

Choosing the Cloud: Rent the Power You Need (and Scale Up Easily!)

Think of the cloud like a giant, shared computer room that you can rent space in. You don't need to buy and manage the hardware, and you can easily scale up or down your resources as needed. Here's what makes cloud computing attractive:

- Cost-effective for smaller projects: If you're just starting out or your project doesn't require tons of resources, the cloud can be a great option. You only pay for what you use.

- Easy setup and management: You don't need to worry about installing and configuring hardware. Cloud providers like AWS, Azure, and Google Cloud offer various services that make getting started with LLMs a breeze.

- Scalability: Need more power? No problem! Simply request more resources from your cloud provider, and they'll spin up additional servers and GPUs, often within minutes (subject to your account quotas and GPU availability). This means you can handle sudden surges in workload without breaking a sweat.

- Access to the newest hardware: Cloud providers are always upgrading their infrastructure to offer the latest and greatest hardware. You can leverage this without having to invest in buying new equipment.

However, cloud computing also comes with its own set of drawbacks:

- Cost can quickly escalate: Running powerful LLMs in the cloud can become expensive if you're not careful. You pay for every hour a GPU instance is running, whether or not you are fully using it, and charges for storage and data transfer add up on top.

- Security and privacy concerns: You're entrusting your data to a third-party provider. While cloud providers offer robust security measures, it's essential to choose a reputable provider and implement your own security practices.

- Limited customization: You may not have as much control over the hardware and software configuration as you would with a local setup.

Choosing Local: Own the Power, Customize the Experience

A local setup gives you complete control over your hardware and software, allowing you to build a bespoke AI infrastructure tailored to your specific needs. Think of it like building your own custom car - it's more work, but you have the freedom to choose every single component.

- Cost-effective for larger projects: If you're working on a long-term project and will require a large amount of computational power for an extended time, owning your own hardware could save you money in the long run.

- Security and privacy: You have full control over your data and hardware, reducing the risk of security breaches and data leaks.

- Customization: You're free to choose the exact hardware and software configuration that meets your needs. This is essential for tasks like fine-tuning your LLMs or experimenting with different model architectures.

But owning your own hardware also comes with some downsides:

- High initial investment: Buying a powerful GPU like the A40_48GB can be a significant expense.

- Maintenance and management: You're responsible for keeping your hardware and software up to date, which can be time-consuming.

- Scalability: Scaling up can be more challenging and time-consuming than with a cloud setup. You may need to upgrade your entire hardware infrastructure or purchase additional equipment.

The NVIDIA A40_48GB: A Deep Dive into Performance

Now that you have a better understanding of the cloud vs. local debate, let's dive into the performance of the NVIDIA A40_48GB with different large language models (LLMs). We'll use the Llama family of models as our test subjects, specifically the Llama 3 8B and the Llama 3 70B models (note: we don't have data for Llama 3 70B in F16 precision; at 16 bits per weight, its roughly 140GB of weights would not fit in the A40's 48GB of memory on its own).

Understanding Quantization and Precision

Before we dive into the numbers, let's quickly define "quantization". Imagine building a model train layout. Quantization is like switching to smaller, less finely machined track pieces: the layout takes up much less space, at the cost of a slightly less smooth ride.

In LLMs, quantization means reducing the number of bits used to represent the model's weights. This makes the model smaller and faster, but it can also slightly reduce accuracy.
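To make the idea concrete, here is a minimal Python sketch of 4-bit block quantization, assuming a simple symmetric scheme with one scale factor per block of 32 weights. It is a simplified illustration, not the actual Q4_K_M format used by llama.cpp, and the function names are hypothetical.

```python
import random

def quantize_4bit(weights, block_size=32):
    """Map floats to signed 4-bit codes in [-8, 7], with one scale per block."""
    codes, scales = [], []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        # Choose the scale so the largest magnitude in the block maps to 7.
        scale = max(abs(x) for x in block) / 7 or 1.0  # avoid a zero scale
        scales.append(scale)
        codes.extend(max(-8, min(7, round(x / scale))) for x in block)
    return codes, scales

def dequantize_4bit(codes, scales, block_size=32):
    """Rebuild approximate floats: each code times its block's scale."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(4096)]  # fake layer weights
codes, scales = quantize_4bit(weights)
restored = dequantize_4bit(codes, scales)

max_error = max(abs(a - b) for a, b in zip(weights, restored))
# Conceptual storage cost: 4 bits per code plus one 16-bit scale per 32 weights.
bits_per_weight = 4 + 16 / 32
print(f"max reconstruction error: {max_error:.6f}")
print(f"approx. bits per weight: {bits_per_weight} (vs. 16 for F16)")
```

The reconstruction error per weight is at most half a block's scale, which is why quantization usually costs only a little accuracy while cutting storage to well under a third of F16.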

We'll be comparing two weight formats:

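- Q4_K_M: A 4-bit "k-quant" format from the llama.cpp project (the "M" denotes the medium variant, which keeps some tensors at higher precision). It's a popular balance between speed, size, and accuracy.

- F16: This stores weights as 16-bit floating-point numbers, with no quantization. It preserves more accuracy than Q4_K_M but needs more than three times as much memory, which makes text generation slower.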
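A quick way to see what these formats mean for the A40's 48GB is to estimate weight size as parameters × bits per weight ÷ 8. The sketch below assumes about 8.0 billion parameters for Llama 3 8B, about 70.6 billion for Llama 3 70B, and an average of roughly 4.9 bits per weight for Q4_K_M; these are approximations, and real model files differ slightly.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions (approximate, not from the benchmark): Llama 3 8B ~8.0e9 params,
# Llama 3 70B ~70.6e9 params, Q4_K_M averages ~4.9 bits per weight.
A40_MEMORY_GB = 48

MODELS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}
FORMATS = {"F16": 16.0, "Q4_K_M": 4.9}

def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

for model, params in MODELS.items():
    for fmt, bits in FORMATS.items():
        size = weight_gb(params, bits)
        verdict = "fits" if size < A40_MEMORY_GB else "does NOT fit"
        print(f"{model:12} {fmt:7} ~{size:6.1f} GB -> {verdict} in {A40_MEMORY_GB} GB")
```

Weights are only part of the picture: the KV cache, activations, and framework overhead also need memory, so a model that barely fits on paper may still not run in practice.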

Token Generation Speed: How Fast Can It Generate Text?

Let's start by looking at the speed of token generation. This is a key measure of an LLM's performance, as it tells us how quickly it can generate text. The higher the number, the faster the LLM can produce text.

| LLM Model | Quantization | NVIDIA A40_48GB (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 88.95 (fastest) |
| Llama 3 8B | F16 | 33.95 |
| Llama 3 70B | Q4_K_M | 12.08 |

Key Observations:

- The NVIDIA A40_48GB is a powerhouse for token generation. Llama 3 8B with Q4_K_M quantization reaches an impressive 88.95 tokens/second, making it very fast at generating text.

- Llama 3 8B in Q4_K_M generates text about 2.6 times faster than the F16 version. Generation is limited mainly by memory bandwidth: every new token requires reading all of the model's weights from GPU memory, and Q4_K_M weights are less than a third the size of F16 weights.

- The larger Llama 3 70B model is much slower than the 8B model, even with the same Q4_K_M quantization. With nearly nine times as many parameters, it has far more data to read for every token it generates.
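The bandwidth explanation can be checked with a back-of-envelope calculation. If generating one token means reading every weight once, then memory bandwidth divided by weight size gives a rough ceiling on single-stream tokens/second. The sketch assumes the A40's published 696 GB/s memory bandwidth and the approximate weight sizes from parameters × bits ÷ 8 (about 4.9 bits per weight for Q4_K_M); the measured rates are the benchmark numbers from the table above. It ignores KV-cache reads and other overheads, so it is only an upper bound.

```python
# Back-of-envelope ceiling for single-stream token generation on a
# memory-bandwidth-bound GPU: tokens/s <= bandwidth / bytes of weights read per token.
# Assumptions: A40 bandwidth 696 GB/s; weight sizes are rough estimates
# (params x bits / 8, Q4_K_M ~4.9 bits/weight). Measured rates come from the table.
A40_BANDWIDTH_GB_S = 696

runs = [
    # (label, approx. weight size in GB, measured tokens/s)
    ("Llama 3 8B Q4_K_M", 8.0e9 * 4.9 / 8 / 1e9, 88.95),
    ("Llama 3 8B F16", 8.0e9 * 16 / 8 / 1e9, 33.95),
    ("Llama 3 70B Q4_K_M", 70.6e9 * 4.9 / 8 / 1e9, 12.08),
]

def generation_ceiling(weight_gb: float, bandwidth_gb_s: float = A40_BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/s if reading the weights were the only cost."""
    return bandwidth_gb_s / weight_gb

for label, size_gb, measured in runs:
    ceiling = generation_ceiling(size_gb)
    print(f"{label:20} ceiling ~{ceiling:6.1f} tok/s, measured {measured:6.2f} "
          f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions, every measured rate sits below its ceiling, and the ordering of the three configurations follows directly from how many bytes must be read per token.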

Token Processing Speed: How Fast Can It Understand Text?

Next, we'll look at the speed of token processing: how quickly the model ingests the input text (the prompt) before it starts generating a reply. This step is often called prompt processing or prefill. Like token generation, a higher number means faster processing.

| LLM Model | Quantization | NVIDIA A40_48GB (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 3240.95 |
| Llama 3 8B | F16 | 4043.05 |
| Llama 3 70B | Q4_K_M | 239.92 |

Key Observations:

- The NVIDIA A40_48GB excels at token processing as well. The 8B model processes thousands of tokens per second in both Q4_K_M and F16.

- Interestingly, the 8B model processes prompts about 25% faster in F16 than in Q4_K_M. Unlike generation, prompt processing handles many tokens at once, so it is limited mainly by compute rather than memory bandwidth. F16 weights can feed the A40's Tensor Cores directly, while quantized weights need extra work to unpack (dequantize), which likely accounts for the gap.

- The 70B model, while still impressive, is significantly slower in processing than the 8B models, again due to its larger size.
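Because a real request involves both phases, the two tables can be combined into a rough latency estimate: prompt tokens divided by the processing rate, plus output tokens divided by the generation rate. The sketch below uses the measured A40 rates from the tables above; the 2,000-token prompt and 500-token reply are hypothetical, and actual rates vary with context length, batch size, and software settings.

```python
# Rough request latency from the two measured rates:
# latency ~= prompt_tokens / processing_rate + output_tokens / generation_rate.
# Rates are the A40 measurements from the tables; request sizes are hypothetical.
RATES = {
    # model: (prompt processing tok/s, generation tok/s)
    "Llama 3 8B Q4_K_M": (3240.95, 88.95),
    "Llama 3 8B F16": (4043.05, 33.95),
    "Llama 3 70B Q4_K_M": (239.92, 12.08),
}

def estimate_latency_s(model: str, prompt_tokens: int, output_tokens: int) -> float:
    """Seconds to read the prompt, then generate the reply, at the measured rates."""
    processing_rate, generation_rate = RATES[model]
    return prompt_tokens / processing_rate + output_tokens / generation_rate

for model in RATES:
    seconds = estimate_latency_s(model, prompt_tokens=2000, output_tokens=500)
    print(f"{model:20} ~{seconds:5.1f} s for a 2,000-token prompt + 500-token reply")
```

With these rates, the F16 model's faster prompt processing is outweighed by its slower generation: it only comes out ahead when the prompt is roughly 300 times longer than the reply, so for typical chat-style requests Q4_K_M is the faster choice.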

Comparison of A40_48GB with Other Devices (Not Tested, but Relevant)

While this article focuses on the A40_48GB, it's important to consider other powerful devices available for running LLMs. The A40 is a top-tier choice, but it's not the only option. Here's a quick comparison of other devices that might be relevant to your needs, even though we don't have data for those here:

- NVIDIA A100: The A100 is another Ampere-generation data center GPU, known for its impressive performance on AI workloads. It's often used for training large language models, but it's also a strong contender for inference tasks, and its high-bandwidth HBM memory helps token generation in particular. However, the A40 is usually the more budget-friendly choice, and its 48GB of memory exceeds the 40GB version of the A100 (an 80GB version also exists).

- Apple M1: Apple's M1 chips are designed for efficiency and are becoming increasingly popular for AI tasks. They offer good performance for a wide range of workloads, including those involving LLMs. However, the A40 is still a powerhouse, providing significantly more processing power for demanding tasks.

Considerations When Choosing the NVIDIA A40_48GB

While the A40_48GB is a powerful choice, it's essential to consider several factors before making a decision:

- Cost: The A40_48GB is a high-end GPU, so it comes with a significant price tag. If you're on a tight budget, you may need to consider more affordable alternatives.

- Power Consumption: The A40_48GB draws up to 300 W and is passively cooled, so it needs a robust power supply and a chassis with strong airflow across the card.

- Availability: The A40_48GB is in high demand, so it may be hard to find. You might need to wait for some time to get your hands on one.

- Compatibility: The A40_48GB is a dual-slot PCIe Gen 4 card, so make sure your motherboard has a suitable x16 slot and your case has room for it. You'll also need drivers and software that support this GPU.

FAQ: Common Questions about LLMs and AI Infrastructure

What is the best LLM for my needs?

That depends on your specific requirements. For example:

- Llama 3 8B: A good choice for smaller projects or for developers who are starting out with LLMs. It's relatively fast to train and can run on a variety of devices.

- Llama 3 70B: A heavier-duty model designed for more complex tasks. It requires more computational power and can be more challenging to train.

How do I choose between cloud and local AI infrastructure?

This depends on factors like:

- Budget: Cloud infrastructure can be more cost-effective for smaller projects, while local hardware can be cheaper in the long run for larger projects.

- Scalability: Cloud infrastructure offers greater scalability, allowing you to easily add more resources as needed. Local setups usually require more manual effort to scale up.

- Control: Local setups provide you with greater control over your hardware and software configuration.
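The budget question can be framed as a simple break-even calculation: hardware cost divided by the hourly savings of running locally instead of renting. Every price in this sketch is a hypothetical placeholder, not a quote; the only hardware fact used is the A40's maximum power draw of 300 W, and the extra power share for the host system is an assumption.

```python
# Hypothetical cloud-vs-local break-even calculation.
# All prices are illustrative placeholders: substitute your own figures.
def break_even_hours(hardware_cost: float, cloud_rate_per_hour: float,
                     local_cost_per_hour: float) -> float:
    """GPU-hours of use after which owning becomes cheaper than renting."""
    savings_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if savings_per_hour <= 0:
        return float("inf")  # owning never pays off at these rates
    return hardware_cost / savings_per_hour

# Placeholder inputs (hypothetical):
hardware_cost = 8000.0   # A40 card plus a share of the host server, USD
cloud_rate = 1.30        # USD per GPU-hour for a comparable cloud instance
power_kw = 0.45          # GPU (up to 300 W) plus an assumed host share, kW
electricity = 0.15       # USD per kWh
local_hourly = power_kw * electricity  # ignores admin time, cooling, networking

hours = break_even_hours(hardware_cost, cloud_rate, local_hourly)
print(f"Break-even after ~{hours:,.0f} GPU-hours "
      f"(~{hours / (24 * 30):.1f} months at 24/7 utilization)")
```

Higher utilization reaches the break-even point sooner, which is why owned hardware tends to pay off for sustained workloads while short or bursty projects favor the cloud. Costs left out of the sketch, such as staff time and cooling, push the break-even point later.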

What are the benefits of using quantization in LLMs?

Quantization reduces the number of bits used to represent the LLM's weights, making the model:

- Smaller: This results in faster loading times and less storage space required.

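- Faster: Fewer bytes have to be read from GPU memory for each generated token, which speeds up text generation (though not necessarily prompt processing, as the F16 results above show).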

Is it necessary to use a high-end GPU like the A40_48GB?

For many tasks, less-powerful GPUs can be perfectly adequate. Ultimately, the decision depends on your budget and the complexity of the tasks you're trying to accomplish.
