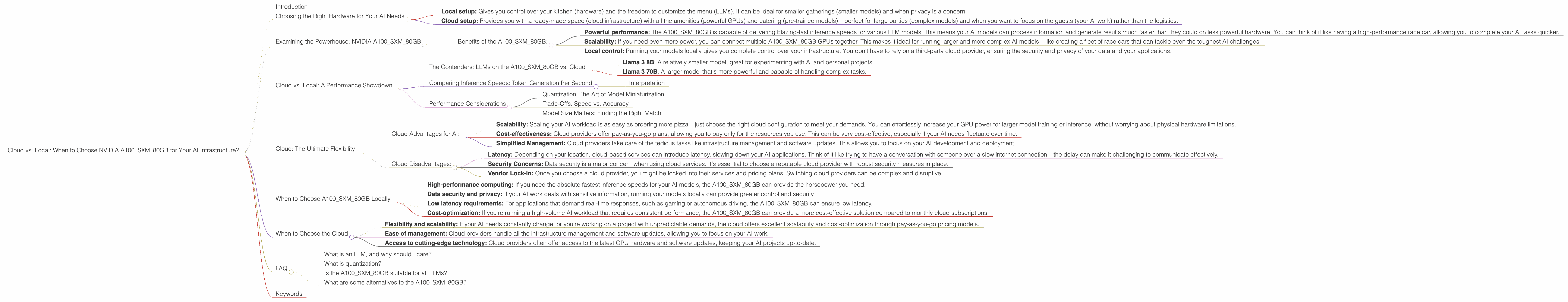

Cloud vs. Local: When to Choose NVIDIA A100 SXM 80GB for Your AI Infrastructure

Introduction

The world of Artificial Intelligence (AI) is booming, and Large Language Models (LLMs) are at the forefront of this revolution. These powerful AI models, capable of generating human-like text, translating languages, writing different kinds of creative content, and answering your questions in an informative way, are changing how we interact with technology.

But running these models is resource-intensive and demands powerful hardware. That's where the NVIDIA A100 SXM 80GB comes in: a powerhouse data-center GPU designed specifically for accelerating AI workloads.

This article takes a deep dive into LLMs on the A100 SXM 80GB, looking at how these models perform on this GPU and weighing a local deployment against cloud-based solutions. We'll explore the benefits and drawbacks of each approach, helping you make the best decision for your specific needs.

Let's get started!

Choosing the Right Hardware for Your AI Needs

The world of AI is as diverse as the people and companies using it. Some users need to run their models on-premise for security or latency reasons, while others prefer the flexibility and scalability of the cloud.

Here's a simple analogy: Imagine you're hosting a party. Would you rather prepare everything at your own place (local) or rent a venue (cloud)?

- Local setup: Gives you control over your kitchen (hardware) and the freedom to customize the menu (LLMs). It can be ideal for smaller gatherings (smaller models) and when privacy is a concern.

- Cloud setup: Provides you with a ready-made space (cloud infrastructure) with all the amenities (powerful GPUs) and catering (pre-trained models) – perfect for large parties (complex models) and when you want to focus on the guests (your AI work) rather than the logistics.

But how do you choose the right "kitchen" for your AI party?

Examining the Powerhouse: NVIDIA A100 SXM 80GB

The NVIDIA A100 SXM 80GB is a beast of a GPU, designed specifically for accelerating AI workloads. It's packed with 80GB of HBM2e memory (think of it as the workspace for the GPU) and 6,912 CUDA cores (like tiny processors working together), enough to make your AI models run faster than a cheetah chasing a gazelle.

Benefits of the A100 SXM 80GB:

- Powerful performance: The A100 SXM 80GB delivers fast inference speeds for a range of LLMs. This means your AI models can process information and generate results much faster than they could on less powerful hardware. You can think of it like having a high-performance race car, allowing you to complete your AI tasks quicker.

- Scalability: If you need even more power, you can connect multiple A100 SXM 80GB GPUs together. This makes it ideal for running larger and more complex AI models, like creating a fleet of race cars that can tackle even the toughest AI challenges.

- Local control: Running your models locally gives you complete control over your infrastructure. You don't have to rely on a third-party cloud provider, which makes it easier to keep your data and applications secure and private.

Cloud vs. Local: A Performance Showdown

Now that you know about the A100 SXM 80GB, let's look at how it performs on different LLMs before weighing a local deployment against the cloud.

The Contenders: LLMs on the A100 SXM 80GB vs. Cloud

We'll compare the A100 SXM 80GB performance on the following LLMs:

- Llama 3 8B: A relatively small model, great for experimenting with AI and personal projects.

- Llama 3 70B: A larger model that's more powerful and capable of handling complex tasks.

Note: We will focus only on the A100 SXM 80GB and its measured performance. Benchmarks for other devices or for specific cloud providers are not part of this analysis.

Comparing Inference Speeds: Token Generation Per Second

For our comparison, we'll look at Tokens Per Second (TPS), a measure of how quickly a model can generate text. Consider it the speed at which your AI model can type. The higher the TPS, the faster the model can create its output.
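To make the metric concrete, here is a minimal sketch of how TPS could be measured around any streaming generator. The `fake_generate` function is a hypothetical stand-in for a real model's streaming API (it is not part of any library), so the number it prints says nothing about actual GPU performance.

```python
import time
from typing import Callable, Iterable


def measure_tps(generate: Callable[[], Iterable[str]]) -> float:
    """Return tokens per second for one streaming generation call."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())  # consume the stream, counting tokens
    elapsed = time.perf_counter() - start
    return count / elapsed if elapsed > 0 else float("inf")


def fake_generate(n_tokens: int = 50, delay_s: float = 0.001):
    """Hypothetical stand-in for a model: yields one token per delay."""
    for i in range(n_tokens):
        time.sleep(delay_s)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{measure_tps(fake_generate):.1f} tokens/s")
```

In practice you would pass a function that wraps your own model's streaming call, and average over several runs, since a single measurement can be noisy.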

| Model | A100 SXM 80GB, Q4_K_M (tokens/s) | A100 SXM 80GB, F16 (tokens/s) |
|---|---|---|
| Llama 3 8B | 133.38 | 53.18 |
| Llama 3 70B | 24.33 | (No data) |

Interpretation

The A100 SXM 80GB shines when running the Llama 3 8B model. It can generate over 133 tokens per second using Q4_K_M quantization, a technique that reduces the size of the model while largely preserving output quality. The F16 setting, which uses half-precision floating-point numbers, generates about 53 tokens per second, roughly 2.5 times slower than the quantized run.

For the larger Llama 3 70B model, the A100 SXM 80GB achieves 24.33 tokens per second using Q4_K_M quantization.
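To put these figures in everyday terms, the short sketch below turns the throughput numbers from the table above into a speedup ratio and an approximate wait time. The 500-token response length is an arbitrary illustration, not something measured here.

```python
# Throughput figures (tokens/s) copied from the benchmark table above.
TPS = {
    ("Llama 3 8B", "Q4"): 133.38,
    ("Llama 3 8B", "F16"): 53.18,
    ("Llama 3 70B", "Q4"): 24.33,
}


def generation_seconds(tokens: int, tps: float) -> float:
    """Time needed to generate `tokens` tokens at a steady rate."""
    return tokens / tps


# How much faster the 4-bit run is than F16 for the 8B model.
speedup_8b = TPS[("Llama 3 8B", "Q4")] / TPS[("Llama 3 8B", "F16")]

if __name__ == "__main__":
    print(f"8B quantized vs F16 speedup: {speedup_8b:.2f}x")
    for (model, fmt), tps in TPS.items():
        secs = generation_seconds(500, tps)  # 500 tokens: illustrative length
        print(f"{model} {fmt}: {secs:.1f} s for 500 tokens")
```

Real responses will not arrive at a perfectly steady rate, so treat the results as rough estimates.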

Performance Considerations

Quantization: The Art of Model Miniaturization

Quantization is like shrinking a large model into a smaller, more manageable size. It stores the model's weights as lower-precision numbers, which allows you to run larger models on less powerful hardware.

For example, the A100 SXM 80GB can run Llama 3 8B in Q4_K_M format, a 4-bit representation that takes far less memory than the original F16 weights. This matters most for larger LLMs like Llama 3 70B, whose F16 weights alone (roughly 140 GB at 2 bytes per parameter) exceed the card's 80 GB.
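As a back-of-the-envelope check on why quantization matters for memory, here is a rough sketch that estimates the weight footprint of each model. The parameter counts are the nominal 8 and 70 billion, and the figure of about 4.8 effective bits per weight for the 4-bit format is an assumption (4-bit schemes store some scaling metadata alongside the weights). It ignores the KV cache and activations, so real usage will be higher.

```python
GPU_MEMORY_GB = 80  # capacity of one A100 SXM 80GB

# Nominal parameter counts (assumption: rounded model sizes).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}

# Bits per weight. The 4-bit value is an assumed effective average.
FORMATS = {"F16": 16.0, "4-bit": 4.8}


def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights only, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def fits_on_gpu(n_params: float, bits_per_weight: float) -> bool:
    """True if the weights alone fit in a single GPU's memory."""
    return weight_memory_gb(n_params, bits_per_weight) <= GPU_MEMORY_GB


if __name__ == "__main__":
    for model, n_params in MODELS.items():
        for fmt, bits in FORMATS.items():
            gb = weight_memory_gb(n_params, bits)
            verdict = "fits" if fits_on_gpu(n_params, bits) else "does not fit"
            print(f"{model} {fmt}: ~{gb:.0f} GB, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, the 70B model in F16 is the only combination that does not fit on a single card, which may be why the table has no F16 figure for it.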

Trade-Offs: Speed vs. Accuracy

While quantization offers significant advantages in performance, it's crucial to understand the trade-offs. Reducing the size of a model can sometimes lead to a slight drop in accuracy. This is like making a smaller version of a detailed painting – while you can see the general picture, some of the intricate details might be lost.
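The lost "intricate details" can be seen in a toy experiment. The sketch below applies plain symmetric uniform quantization to random numbers and measures how far the restored values drift from the originals. This is a deliberately simplified scheme for illustration, not the method used by any particular model format, and the random values are stand-ins for real weights.

```python
import random


def quantize_dequantize(weights, bits):
    """Round weights onto a signed `bits`-bit grid, then map back to floats."""
    levels = 2 ** (bits - 1) - 1  # e.g. 7 positive steps for 4 bits
    scale = max(abs(w) for w in weights) / levels
    if scale == 0:
        return list(weights)
    return [round(w / scale) * scale for w in weights]


def mean_abs_error(original, restored):
    """Average absolute difference between two equal-length lists."""
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


def errors_by_bits(bit_widths=(8, 4, 2), n=10_000, seed=0):
    """Quantization error for each bit width on the same random weights."""
    rng = random.Random(seed)
    weights = [rng.gauss(0.0, 1.0) for _ in range(n)]  # stand-in weights
    return {
        bits: mean_abs_error(weights, quantize_dequantize(weights, bits))
        for bits in bit_widths
    }


if __name__ == "__main__":
    for bits, err in errors_by_bits().items():
        print(f"{bits}-bit: mean absolute error {err:.4f}")
```

The error grows as the bit width shrinks. Production formats use more careful techniques to limit this loss, so the numbers here only show the direction of the trade-off.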

Model Size Matters: Finding the Right Match

Choosing the right model size depends on your specific needs. If speed matters most, Llama 3 8B with Q4_K_M quantization is the fastest option measured here. If you want a more capable model and are willing to sacrifice a bit of accuracy to quantization, the A100 SXM 80GB can also handle Llama 3 70B with Q4_K_M.

But if you need the highest accuracy possible from a 70B-class model, its unquantized weights will not fit on a single 80GB card, so you might need to consider multiple GPUs or more powerful cloud infrastructure.

Cloud: The Ultimate Flexibility

Cloud-based solutions offer incredible flexibility and scalability. You can easily access and use powerful GPUs, like the A100 SXM 80GB, without the hassle of setting up and maintaining your own hardware.

Cloud Advantages for AI:

- Scalability: Scaling your AI workload is as easy as ordering more pizza – just choose the right cloud configuration to meet your demands. You can effortlessly increase your GPU power for larger model training or inference, without worrying about physical hardware limitations.

- Cost-effectiveness: Cloud providers offer pay-as-you-go plans, allowing you to pay only for the resources you use. This can be very cost-effective, especially if your AI needs fluctuate over time.

- Simplified Management: Cloud providers take care of the tedious tasks like infrastructure management and software updates. This allows you to focus on your AI development and deployment.

Cloud Disadvantages:

- Latency: Depending on your location, cloud-based services can introduce latency, slowing down your AI applications. Think of it like trying to have a conversation with someone over a slow internet connection – the delay can make it challenging to communicate effectively.

- Security Concerns: Data security is a major concern when using cloud services. It's essential to choose a reputable cloud provider with robust security measures in place.

- Vendor Lock-in: Once you choose a cloud provider, you might be locked into their services and pricing plans. Switching cloud providers can be complex and disruptive.
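To get a feel for the latency point in the list above, here is a small sketch that adds a network round trip to the generation time. It assumes the cloud instance runs the same GPU at the same 133.38 tokens per second (the Llama 3 8B quantized figure from this article), and the 80 ms round trip is a hypothetical placeholder, not a measurement.

```python
TPS_8B_Q4 = 133.38         # tokens/s, from this article's benchmark table
HYPOTHETICAL_RTT_S = 0.08  # placeholder network round trip in seconds


def response_seconds(tokens: int, tps: float, network_rtt_s: float = 0.0) -> float:
    """Time to receive a full response: round trip plus generation."""
    return network_rtt_s + tokens / tps


def network_share(tokens: int, tps: float, network_rtt_s: float) -> float:
    """Fraction of the total response time spent on the network."""
    return network_rtt_s / response_seconds(tokens, tps, network_rtt_s)


if __name__ == "__main__":
    for tokens in (1, 20, 200):
        total = response_seconds(tokens, TPS_8B_Q4, HYPOTHETICAL_RTT_S)
        share = network_share(tokens, TPS_8B_Q4, HYPOTHETICAL_RTT_S)
        print(f"{tokens:>3} tokens: {total:.3f} s total, {share:.0%} network")
```

Under these assumptions the network delay dominates very short responses and fades into the background for long ones, so how much latency matters depends heavily on your application.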

When to Choose the A100 SXM 80GB Locally

So, how do you decide whether to use the A100 SXM 80GB locally or go with the cloud?

Here are some scenarios where a local A100 SXM 80GB might be the perfect choice for your AI journey:

- High-performance computing: If you need fast, dedicated inference for your AI models, the A100 SXM 80GB can provide the horsepower you need.

- Data security and privacy: If your AI work deals with sensitive information, running your models locally can provide greater control and security.

- Low latency requirements: For applications that demand real-time responses, such as gaming or autonomous driving, a local A100 SXM 80GB avoids the network round trip to a remote data center, which helps keep latency low.

- Cost-optimization: If you're running a high-volume AI workload that requires consistent performance, owning an A100 SXM 80GB can be more cost-effective over time than paying ongoing cloud rental fees.
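The cost argument comes down to a break-even point. The sketch below shows the arithmetic under simple assumptions: a one-time hardware cost, an hourly cloud rate, and an hourly local running cost for power and upkeep. All three dollar figures are made-up placeholders chosen as round numbers, not real quotes, so plug in your own.

```python
def break_even_hours(
    hardware_cost: float,
    cloud_rate_per_hour: float,
    local_rate_per_hour: float,
) -> float:
    """Hours of use after which buying becomes cheaper than renting."""
    saving_per_hour = cloud_rate_per_hour - local_rate_per_hour
    if saving_per_hour <= 0:
        return float("inf")  # local never catches up at these rates
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    hours = break_even_hours(
        hardware_cost=10_000.0,
        cloud_rate_per_hour=2.00,
        local_rate_per_hour=0.50,
    )
    print(f"Break-even after about {hours:,.0f} GPU-hours")
    print(f"That is about {hours / 24:,.0f} days of round-the-clock use")
```

The fewer hours per day the GPU is actually busy, the longer the payback takes, which is why fluctuating workloads tend to favor the cloud.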

When to Choose the Cloud

The cloud is a fantastic option when you prioritize:

- Flexibility and scalability: If your AI needs constantly change, or you're working on a project with unpredictable demands, the cloud offers excellent scalability and cost-optimization through pay-as-you-go pricing models.

- Ease of management: Cloud providers handle all the infrastructure management and software updates, allowing you to focus on your AI work.

- Access to cutting-edge technology: Cloud providers often offer access to the latest GPU hardware and software updates, keeping your AI projects up-to-date.

FAQ

What is an LLM, and why should I care?

An LLM, or Large Language Model, is like a super-smart AI that can understand and generate human-like text. Imagine having a conversation with a very knowledgeable friend who can summarize articles, translate languages, write many kinds of creative text, and answer your questions in a comprehensive and informative way. That's what LLMs are capable of doing. LLMs are revolutionizing various industries, from content creation and customer service to education and research.

What is quantization?

Quantization is a technique that reduces the size of a model, allowing it to run more efficiently on less powerful hardware. Think of it like compressing a large file into a smaller version, without losing too much quality.

Is the A100 SXM 80GB suitable for all LLMs?

The A100 SXM 80GB is a powerful GPU, but it's important to choose the right model based on your specific needs. For smaller LLMs like Llama 3 8B, it's a great choice in either Q4_K_M or F16. Larger models like Llama 3 70B run on it with Q4_K_M quantization, but for unquantized F16 weights you might need multiple GPUs or consider running them in the cloud.

What are some alternatives to the A100 SXM 80GB?

For local setup, the NVIDIA A100 PCIe and A40 GPUs are other strong contenders. For cloud-based solutions, AWS, Google Cloud, and Microsoft Azure offer various GPU options and pre-trained models.
