Cloud vs. Local: When to Choose NVIDIA 4080 16GB for Your AI Infrastructure

Introduction

The world of large language models (LLMs) is exploding, with new models popping up every day. These models can do amazing things, like generate coherent text, translate languages, and even write code, but they can also be resource-hungry beasts. That's where the question of where to run your LLM comes into play: in the cloud or locally?

For those of you who are serious about exploring the potential of LLMs locally, you may be wondering if the NVIDIA 4080_16GB, with its impressive specs, is the right GPU for you. This article will dive into the performance of the 4080_16GB on specific LLMs, helping you decide if it's a good fit for your needs.

We'll be exploring the performance of the 4080_16GB on Llama 3 models in both 8B and 70B sizes, with different quantization levels, and comparing it to running the same models in the cloud. But before we get into the nitty-gritty, let's clear up a few things.

What are LLMs?

LLMs, or large language models, are a type of artificial intelligence (AI) trained on massive amounts of text data. They are able to understand and generate human-like text, making them incredibly versatile. Think of it like a super-powered autocomplete that can write stories, poems, code, and even answer your questions.

Quantization: Making LLMs More Manageable

Imagine you're trying to store a massive library on a tiny flash drive. That's kind of what happens with large language models: they require enormous amounts of storage space. Quantization is a technique that helps us shrink that storage space, analogous to compressing those library books into smaller versions.

In the world of LLMs, quantization can be thought of as "compressing" the model, reducing its size and memory footprint. This makes running them on local devices, like that powerful NVIDIA 4080_16GB, more feasible.
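To make the "compression" idea concrete, here is a minimal sketch of the simplest form of 4-bit quantization: scale each weight so it fits a small integer range, round it, and multiply back when the value is needed. This is a toy illustration only. The function names and sample weights are made up for this example, and real schemes such as Q4_K_M work on blocks of weights with extra scaling data rather than on one global scale.

```python
def quantize_4bit(weights):
    """Map floats to signed 4-bit integers (-8..7) using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Largest magnitude maps to 7; fall back to 1.0 if all weights are zero.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    quantized = [max(-8, min(7, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the 4-bit integers."""
    return [q * scale for q in quantized]


# Hypothetical sample weights, chosen only for illustration.
weights = [0.12, -0.53, 0.98, -0.05, 0.31]
quantized, scale = quantize_4bit(weights)
restored = dequantize(quantized, scale)

# Worst-case rounding error is at most half of one quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print("4-bit values:", quantized)
print("restored:", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight now needs 4 bits instead of 16, at the cost of a small rounding error, which is the trade-off the benchmarks below are measuring.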

NVIDIA 4080_16GB: A Powerhouse for LLMs

The NVIDIA 4080_16GB is a beast of a graphics card, packing a punch with its advanced architecture and massive memory. But does it live up to the hype when it comes to running LLMs? Let's find out!

Comparison of 4080_16GB and Cloud for Llama 3 Model Inference

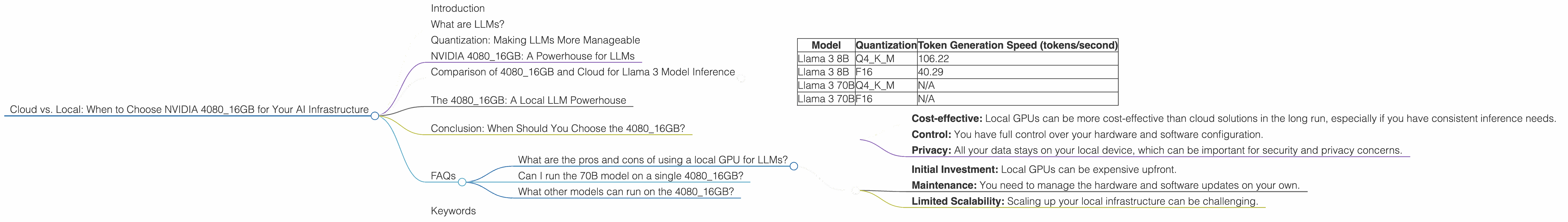

Let's cut to the chase - how does the 4080_16GB stack up against using the same models in the cloud? We'll be looking at the performance of the 4080_16GB on Llama 3 models, focusing on both 8B and 70B sizes.

Here's a table summarizing the token generation speed for Llama 3 models on the 4080_16GB. It's worth noting that data for Llama 3 70B models is currently unavailable, but we'll be updating the table as soon as more benchmarks become available.

| Model | Quantization | Token Generation Speed (tokens/second) |
|---|---|---|
| Llama 3 8B | Q4_K_M | 106.22 |
| Llama 3 8B | F16 | 40.29 |
| Llama 3 70B | Q4_K_M | N/A |
| Llama 3 70B | F16 | N/A |

This table shows that for Llama 3 8B, the 4080_16GB generates over 100 tokens per second with Q4_K_M quantization, roughly 2.6 times the F16 speed of about 40 tokens per second. That's pretty impressive: either rate is faster than most people can read, so responses feel immediate in interactive use.
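To put those figures in more familiar terms, here is a minimal sketch that converts the table's speeds into response times and approximate words per second. The 0.75 words-per-token ratio is a rough rule of thumb for English text and the 500-token response length is an arbitrary example; both are assumptions, not part of the benchmark data.

```python
# Token generation speeds for Llama 3 8B from the table (tokens/second).
SPEEDS = {"Q4_K_M": 106.22, "F16": 40.29}

# Assumption: about 0.75 English words per token; varies by tokenizer and text.
WORDS_PER_TOKEN = 0.75


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time to generate a response of num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


def words_per_second(tokens_per_second, words_per_token=WORDS_PER_TOKEN):
    """Approximate word throughput for a given token throughput."""
    return tokens_per_second * words_per_token


for quant, speed in SPEEDS.items():
    response_time = seconds_to_generate(500, speed)  # 500 tokens: example length
    print(f"{quant}: {response_time:.1f} s per 500 tokens, "
          f"about {words_per_second(speed):.0f} words/s")

speedup = SPEEDS["Q4_K_M"] / SPEEDS["F16"]
print(f"Q4_K_M vs F16 speedup: {speedup:.2f}x")
```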

The 4080_16GB: A Local LLM Powerhouse

The NVIDIA 4080_16GB is a powerful card for local LLM inference, especially for smaller models like Llama 3 8B. Q4_K_M quantization gives the best speed in these benchmarks, making local inference a credible alternative to the cloud for models of this size.

Conclusion: When Should You Choose the 4080_16GB?

If you're working with Llama 3 8B, the 4080_16GB is a solid option for local inference. It provides a balance between performance and affordability compared to cloud solutions. However, for larger models like Llama 3 70B, the limit is memory rather than raw compute: at F16 the weights alone take about 140 GB (70 billion parameters at 2 bytes each), far beyond the card's 16 GB, so you may need to leverage cloud resources.

Ultimately, the choice between cloud and local depends on your individual needs and budget.

FAQs

What are the pros and cons of using a local GPU for LLMs?

Pros:

- Cost-effective: Local GPUs can be more cost-effective than cloud solutions in the long run, especially if you have consistent inference needs.

- Control: You have full control over your hardware and software configuration.

- Privacy: All your data stays on your local device, which can be important for security and privacy concerns.

Cons:

- Initial Investment: Local GPUs can be expensive upfront.

- Maintenance: You need to manage the hardware and software updates on your own.

- Limited Scalability: Scaling up your local infrastructure can be challenging.
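The cost trade-off in the list above comes down to a break-even point: how many hours of use it takes before buying a card is cheaper than renting one. Here is a minimal sketch of that arithmetic. Every number in the example call (card price, cloud hourly rate, power draw, electricity price) is a hypothetical placeholder, so substitute your own quotes before drawing conclusions.

```python
def break_even_hours(gpu_price, cloud_rate_per_hour,
                     local_power_watts, electricity_price_per_kwh):
    """Hours of use after which a purchased GPU costs less than renting.

    Returns None when the cloud is cheaper per hour than the local
    electricity cost, since the purchase then never pays for itself.
    """
    local_rate_per_hour = local_power_watts / 1000 * electricity_price_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_rate_per_hour
    if saving_per_hour <= 0:
        return None
    return gpu_price / saving_per_hour


# Hypothetical placeholder values, for illustration only.
hours = break_even_hours(
    gpu_price=1200.0,                # purchase price of the card
    cloud_rate_per_hour=0.80,        # rented GPU instance, per hour
    local_power_watts=320,           # card power draw under load
    electricity_price_per_kwh=0.15,  # local electricity price
)
print(f"Break-even after about {hours:.0f} hours of inference")
```

This ignores the rest of the machine, resale value, and idle time, so treat the result as a rough lower bound on the hours needed rather than a precise figure.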

Can I run the 70B model on a single 4080_16GB?

Probably not in practice. No 70B benchmark results are available for a single 4080_16GB, and even with 4-bit quantization the 70B weights are well over the card's 16 GB of memory. We recommend exploring cloud solutions for models of that scale.
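A quick way to sanity-check this for any model is to estimate the size of the weights and compare it with the card's memory. The sketch below is hedged accordingly: parameter counts are rounded to 8 and 70 billion, the 4.8 bits per weight used for Q4_K_M is an approximate average and an assumption, and the check covers weights only, ignoring the KV cache and other runtime overhead.

```python
GIB = 1024 ** 3
VRAM_GIB = 16  # memory on the 4080_16GB

# Bits per weight. The Q4_K_M value is an approximate average (assumption);
# the exact figure depends on the model and the quantization tool.
BITS_PER_WEIGHT = {"F16": 16, "Q4_K_M": 4.8}

# Rounded parameter counts (assumption: nominal model sizes).
MODELS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_size_gib(num_params, bits_per_weight):
    """Approximate size of the model weights in GiB."""
    return num_params * bits_per_weight / 8 / GIB


def fits_in_vram(num_params, bits_per_weight, vram_gib=VRAM_GIB):
    """True if the weights alone fit; real usage needs extra headroom."""
    return weight_size_gib(num_params, bits_per_weight) < vram_gib


for model, params in MODELS.items():
    for quant, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gib(params, bits)
        verdict = "fits" if fits_in_vram(params, bits) else "does not fit"
        print(f"{model} {quant}: ~{size:.1f} GiB, {verdict} in {VRAM_GIB} GiB")
```

Under these assumptions both 8B variants fit and both 70B variants do not, which lines up with the benchmark table having results only for the 8B model.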

What other models can run on the 4080_16GB?

For other models, it's best to check the performance benchmarks for the specific model and device combination. Keep an eye on resources like the GPU Benchmarks on LLM Inference repository for the latest results.

Keywords

NVIDIA 4080_16GB, LLM, large language model, Llama 3, 8B, 70B, quantization, Q4_K_M, F16, token generation speed, cloud, local, GPU, inference, cost-effective, performance, benchmarks.