Cloud vs. Local: When to Choose NVIDIA 4070 Ti 12GB for Your AI Infrastructure

Introduction

The AI world is buzzing with excitement about Large Language Models (LLMs). These powerful models can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But running these AI models can be resource-intensive, and that's where the question of cloud vs. local hardware comes in.

Should you opt for the convenience and scalability of cloud-based computing, or go local with beefy hardware like an NVIDIA 4070 Ti 12GB? Let's dive into the pros and cons of each approach, then decide when the 4070 Ti is the right tool for your AI projects.

The Rise of Local LLMs

The conventional wisdom was that LLMs were the domain of cloud giants like Google and Microsoft. After all, training and running these models requires massive computational power. However, the recent emergence of smaller, lighter models and advances in GPU technology have paved the way for local AI inference.

This means you can run LLMs on your personal computer, without needing a powerful cloud server. It's like having a mini-AI lab in your own home, ready to do your bidding!

NVIDIA 4070 Ti 12GB: Powerhouse for Local AI

The NVIDIA 4070 Ti 12GB is a compelling option for local LLM inference. It’s a powerful graphics card designed for demanding tasks like gaming and video editing, but it also excels at AI computations. Let's break down its strengths:

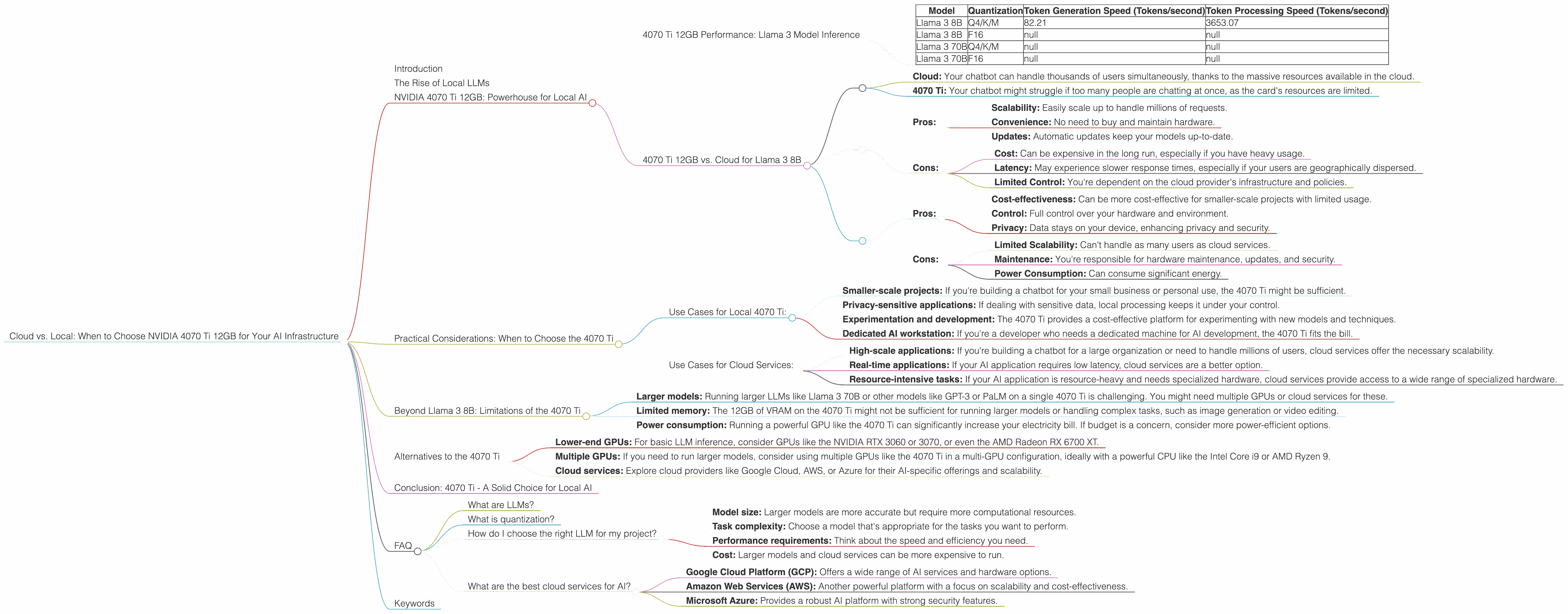

4070 Ti 12GB Performance: Llama 3 Model Inference

The 4070 Ti 12GB shines when running the Llama 3 8B model, especially with quantization. Quantization stores each of the model's weights with fewer bits, which shrinks the model and usually makes it faster to run. The 4070 Ti can handle the Llama 3 8B model quantized to the Q4_K_M format, achieving impressive performance while keeping the model small enough to fit in 12GB of VRAM.
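To make the size reduction concrete, here is a rough sketch of how weight memory scales with bits per weight. It counts weights only (no context cache or runtime overhead), and the 4.5 bits-per-weight figure is an assumed average for a 4-bit block format that stores scale factors next to the weights, not an official number for any specific format.

```python
def weight_memory_gb(n_params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    return n_params_billion * 1e9 * bits_per_weight / 8 / 1e9


VRAM_GB = 12.0  # RTX 4070 Ti

# F16 is exactly 16 bits per weight; 4.5 is an ASSUMED average for 4-bit
# block quantization, used here for illustration only.
candidates = [
    ("Llama 3 8B, F16", 8.0, 16.0),
    ("Llama 3 8B, ~4-bit", 8.0, 4.5),
    ("Llama 3 70B, ~4-bit", 70.0, 4.5),
]

for name, params_b, bits in candidates:
    gb = weight_memory_gb(params_b, bits)
    verdict = "fits" if gb < VRAM_GB else "does not fit"
    print(f"{name}: ~{gb:.1f} GB of weights, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions only the 4-bit 8B model leaves room on a 12GB card for anything besides the weights themselves.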

Here’s a table summarizing the 4070 Ti's performance with the Llama 3 8B model (numbers are tokens per second):

| Model | Quantization | Token Generation Speed (tokens/second) | Token Processing Speed (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 82.21 | 3653.07 |
| Llama 3 8B | F16 | no data | no data |
| Llama 3 70B | Q4_K_M | no data | no data |
| Llama 3 70B | F16 | no data | no data |

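Note: Only the 4-bit quantized 8B model has a measured result here. The other rows have no data, which is consistent with the card's memory limit: Llama 3 8B at F16 needs roughly 16GB for its weights alone, and Llama 3 70B needs far more than 12GB even at 4-bit. Keep this limitation in mind when evaluating the 4070 Ti's performance.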
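The two measured speeds can be turned into a rough response-time estimate. The sketch below assumes that "token processing" means reading the prompt and "token generation" means producing the reply, and that both rates stay constant regardless of prompt length. Real runs will vary, so treat the result as a ballpark figure.

```python
# Measured values from the one populated row of the table above.
PROCESSING_TOKENS_PER_S = 3653.07
GENERATION_TOKENS_PER_S = 82.21


def response_seconds(prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read a prompt and then generate a reply."""
    read_time = prompt_tokens / PROCESSING_TOKENS_PER_S
    write_time = output_tokens / GENERATION_TOKENS_PER_S
    return read_time + write_time


# Hypothetical request: a 1000-token prompt and a 300-token answer.
print(f"{response_seconds(1000, 300):.1f} s")
```

Generation dominates the total: the reply takes several seconds to produce, while reading the prompt takes a fraction of a second.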

4070 Ti 12GB vs. Cloud for Llama 3 8B

When comparing the 4070 Ti with cloud services, the picture becomes more nuanced. While the 4070 Ti provides impressive performance for the Llama 3 8B model, cloud services offer a level of scalability and convenience that's hard to match locally.

Imagine you're building a chatbot:

- Cloud: Your chatbot can handle thousands of users simultaneously, thanks to the massive resources available in the cloud.

- 4070 Ti: Your chatbot might struggle if too many people are chatting at once, as the card's resources are limited.
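How many is "too many"? A back-of-the-envelope sketch, assuming the card's measured generation speed (82.21 tokens/second for Llama 3 8B at 4-bit) is split evenly between active conversations and ignoring any batching gains an inference server might add. The per-user target of 10 tokens/second is a hypothetical comfort threshold, not a measured requirement.

```python
import math


def max_concurrent_streams(total_tokens_per_s: float,
                           per_user_tokens_per_s: float) -> int:
    """Number of simultaneous replies the card can stream at the target speed."""
    return math.floor(total_tokens_per_s / per_user_tokens_per_s)


CARD_TOKENS_PER_S = 82.21      # measured generation speed from this article
TARGET_PER_USER = 10.0         # ASSUMED minimum for a responsive chat

print(max_concurrent_streams(CARD_TOKENS_PER_S, TARGET_PER_USER))  # 8
```

A handful of simultaneous chats is realistic under this simple model. Thousands are not.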

Here's a breakdown of the pros and cons:

Cloud:

Pros:

- Scalability: Easily scale up to handle millions of requests.

- Convenience: No need to buy and maintain hardware.

- Updates: Automatic updates keep your models up-to-date.

Cons:

- Cost: Can be expensive in the long run, especially if you have heavy usage.

- Latency: May experience slower response times, especially if your users are geographically dispersed.

- Limited Control: You're dependent on the cloud provider's infrastructure and policies.

4070 Ti:

Pros:

- Cost-effectiveness: Can be more cost-effective for smaller-scale projects with limited usage.

- Control: Full control over your hardware and environment.

- Privacy: Data stays on your device, enhancing privacy and security.

Cons:

- Limited Scalability: Can't handle as many users as cloud services.

- Maintenance: You're responsible for hardware maintenance, updates, and security.

- Power Consumption: Can consume significant energy.
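Whether local hardware is actually cheaper depends on how many hours you use it. The sketch below estimates the break-even point between buying a card and renting a cloud GPU. Every price in it is a hypothetical placeholder, so substitute your own quotes and electricity rate.

```python
def break_even_hours(hardware_cost: float,
                     cloud_rate_per_hour: float,
                     local_power_kw: float,
                     electricity_per_kwh: float) -> float:
    """Hours of use after which owning the card costs less than renting."""
    local_rate_per_hour = local_power_kw * electricity_per_kwh
    savings_per_hour = cloud_rate_per_hour - local_rate_per_hour
    if savings_per_hour <= 0:
        # Renting is never more expensive per hour, so owning never pays off.
        return float("inf")
    return hardware_cost / savings_per_hour


# All four inputs are HYPOTHETICAL values for illustration only.
hours = break_even_hours(
    hardware_cost=800.0,        # purchase price of the card
    cloud_rate_per_hour=0.50,   # rental price of a comparable cloud GPU
    local_power_kw=0.3,         # power draw of the card under load
    electricity_per_kwh=0.15,   # local electricity price
)
print(f"Break-even after about {hours:.0f} hours of use")
```

With light, occasional usage the break-even point may never arrive, which is why the cost argument cuts both ways.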

Practical Considerations: When to Choose the 4070 Ti

Ultimately, the choice between cloud and local AI comes down to your specific needs and priorities. Here's a framework to guide your decision:

Use Cases for Local 4070 Ti:

- Smaller-scale projects: If you're building a chatbot for your small business or personal use, the 4070 Ti might be sufficient.

- Privacy-sensitive applications: If dealing with sensitive data, local processing keeps it under your control.

- Experimentation and development: The 4070 Ti provides a cost-effective platform for experimenting with new models and techniques.

- Dedicated AI workstation: If you're a developer who needs a dedicated machine for AI development, the 4070 Ti fits the bill.

Use Cases for Cloud Services:

- High-scale applications: If you're building a chatbot for a large organization or need to handle millions of users, cloud services offer the necessary scalability.

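- Real-time applications at scale: If your AI application must keep latency low for many simultaneous or geographically dispersed users, cloud services with regional deployments are a better option. For a single local user, the 4070 Ti avoids the network round trip entirely.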

- Resource-intensive tasks: If your AI application is resource-heavy and needs specialized hardware, cloud services provide access to a wide range of specialized hardware.
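The framework above can be written down as a small decision helper. This is only a sketch of the reasoning in this article: the `Workload` fields, the 12GB limit, and the local user limit of 8 are illustrative assumptions, not thresholds from any benchmark.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    peak_concurrent_users: int
    handles_sensitive_data: bool
    model_weights_gb: float


def suggest_deployment(w: Workload,
                       vram_gb: float = 12.0,
                       local_user_limit: int = 8) -> tuple[str, str]:
    """Return a (choice, reason) pair following the use cases listed above."""
    if w.model_weights_gb >= vram_gb:
        return "cloud", "model weights do not fit in local VRAM"
    if w.peak_concurrent_users > local_user_limit:
        return "cloud", "more concurrent users than one card can serve"
    if w.handles_sensitive_data:
        return "local", "fits locally and keeps data on your own hardware"
    return "local", "fits locally at this scale"


# Hypothetical workloads for illustration.
print(suggest_deployment(Workload(3, True, 4.5)))
print(suggest_deployment(Workload(5000, False, 4.5)))
print(suggest_deployment(Workload(1, True, 39.4)))
```

The third case shows the tension the helper cannot resolve on its own: sensitive data with a model too large for the card calls for either more local hardware or a carefully vetted provider.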

Beyond Llama 3 8B: Limitations of the 4070 Ti

While the 4070 Ti is a workhorse for the Llama 3 8B model, keep in mind that its capabilities are limited:

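- Larger models: Running larger LLMs like Llama 3 70B on a single 4070 Ti is challenging, because the weights alone exceed 12GB even when quantized. You might need multiple GPUs or cloud services for these. Proprietary models like GPT-3 or PaLM are not distributed for local use at all and are only reachable through their providers' hosted services.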

- Limited memory: The 12GB of VRAM on the 4070 Ti might not be sufficient for larger models, long context windows, or other memory-hungry workloads such as high-resolution image generation.

- Power consumption: Running a powerful GPU like the 4070 Ti can significantly increase your electricity bill. If budget is a concern, consider more power-efficient options.
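The memory limit is not only about weights. During inference the model also keeps a key/value cache that grows with the length of the conversation. The sketch below estimates that cache for Llama 3 8B, assuming 32 layers, 8 key/value heads, a head dimension of 128, and 16-bit cache values. These architecture numbers are my understanding of the published model configuration, so verify them against the model card before relying on them.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2) -> int:
    """Size of the key/value cache for one sequence of the given length."""
    # The leading 2 accounts for one key tensor and one value tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * context_tokens * bytes_per_value


# ASSUMED Llama 3 8B architecture values (check the model card).
LLAMA3_8B = dict(n_layers=32, n_kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(**LLAMA3_8B, context_tokens=1)
full_context = kv_cache_bytes(**LLAMA3_8B, context_tokens=8192)

print(f"{per_token / 1024:.0f} KiB per token")            # 128 KiB per token
print(f"{full_context / 2**30:.2f} GiB at 8192 tokens")   # 1.00 GiB at 8192 tokens
```

That cache is per conversation, so several long chats running at once can consume VRAM that the weight estimate alone does not account for.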

Alternatives to the 4070 Ti

If the NVIDIA 4070 Ti doesn't meet your needs, there are other options available:

- Lower-end GPUs: For basic LLM inference, consider GPUs like the NVIDIA RTX 3060 or 3070, or even the AMD Radeon RX 6700 XT.

- Multiple GPUs: If you need to run larger models, consider using multiple GPUs like the 4070 Ti in a multi-GPU configuration, ideally with a powerful CPU like the Intel Core i9 or AMD Ryzen 9.

- Cloud services: Explore cloud providers like Google Cloud, AWS, or Azure for their AI-specific offerings and scalability.

Conclusion: 4070 Ti - A Solid Choice for Local AI

The NVIDIA 4070 Ti 12GB is a powerful GPU capable of running smaller LLMs like the Llama 3 8B with impressive speed and efficiency. However, it's not a one-size-fits-all solution.

For smaller-scale projects with privacy concerns, or for developers who need a dedicated AI workstation, the 4070 Ti is a great choice. But for large-scale applications or those requiring extreme performance, cloud services might be a better fit.

Ultimately, the best choice depends on your specific needs, budget, and technical expertise. Weigh the pros and cons carefully before making your decision.

FAQ

What are LLMs?

Large Language Models (LLMs) are powerful AI models trained on massive amounts of text data. They can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way.

What is quantization?

Quantization is like compressing an LLM. Instead of using 16-bit or 32-bit floating-point numbers to represent the model's weights, we use fewer bits, like 8-bit or 4-bit, to store the information. This makes the model smaller and faster to run, but might result in a slight decrease in accuracy.
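A toy example may help. The sketch below applies simple symmetric quantization to a handful of made-up weights: it maps each float to a small signed integer that shares one scale factor, then converts back and measures the error. Production formats used by LLM runtimes are block-wise and more elaborate, so this illustrates the idea rather than reproducing any specific format.

```python
def quantize(values: list[float], bits: int) -> tuple[list[int], float]:
    """Symmetric quantization: floats become signed integers sharing one scale."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8-bit, 7 for 4-bit
    scale = max(abs(v) for v in values) / qmax
    if scale == 0:
        scale = 1.0                             # all-zero input; any scale works
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the stored integers."""
    return [x * scale for x in q]


weights = [0.12, -0.53, 0.98, -0.07, 0.31]      # made-up example weights

for bits in (8, 4):
    q, scale = quantize(weights, bits)
    restored = dequantize(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"{bits}-bit: integers={q}, max error={max_err:.4f}")
```

Fewer bits mean a coarser grid and a larger rounding error, which is the accuracy trade-off described above.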

How do I choose the right LLM for my project?

Consider the following factors:

- Model size: Larger models are generally more capable but require more memory and compute.

- Task complexity: Choose a model that's appropriate for the tasks you want to perform.

- Performance requirements: Think about the speed and efficiency you need.

- Cost: Larger models and cloud services can be more expensive to run.

What are the best cloud services for AI?

Some popular cloud services for AI include:

- Google Cloud Platform (GCP): Offers a wide range of AI services and hardware options.

- Amazon Web Services (AWS): Another powerful platform with a focus on scalability and cost-effectiveness.

- Microsoft Azure: Provides a robust AI platform with strong security features.
