Cloud vs. Local: When to Choose NVIDIA 3090 24GB for Your AI Infrastructure

Introduction

The world of large language models (LLMs) is buzzing with excitement. These AI marvels can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. But when it comes to running these powerful models, a big question arises: should you go with the cloud or set up your own local AI infrastructure?

Choosing the right setup depends on your specific needs and how much you’re willing to invest. In this article, we’ll delve into the pros and cons of local AI infrastructure using the mighty NVIDIA 3090_24GB GPU, comparing it to cloud-based options for running LLMs. We’ll particularly focus on the popular Llama 3 model family and explore its performance on the 3090_24GB, helping you determine if this powerful GPU is the right choice for your AI adventures.

The Appeal of Local AI: Embracing the NVIDIA 3090_24GB

The NVIDIA 3090_24GB is a beast of a GPU, boasting a massive 24GB of GDDR6X memory and an impressive 10,496 CUDA cores. This translates to serious processing power, making it a tempting choice for running LLMs locally. But is it a practical solution for everyone?

Benefits of Local AI with NVIDIA 3090_24GB:

- Complete Control: With a local setup, you’re the master of your own domain. No more relying on third-party services or worrying about network latency. You have complete control over your hardware and software, allowing you to customize your AI environment to your exact specifications.

- Privacy: If data privacy is a top priority, a local setup offers an extra layer of security. Your data stays on your own system, reducing the risks associated with storing it on remote servers.

- Cost-Effectiveness: While the initial hardware investment might be significant, a local setup can save you money in the long run, especially if you regularly use LLMs for demanding tasks. You avoid paying for cloud services on an hourly or per-use basis, giving you more flexibility and potentially lower overall costs.

- Faster Inference Speeds: For models that fit entirely in its 24GB of VRAM, the NVIDIA 3090_24GB can deliver fast inference, because its high memory bandwidth (936 GB/s) largely determines how quickly tokens are generated. This makes it well suited to real-time applications and interactive tasks.
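Why memory bandwidth matters: when generating text one request at a time, each new token requires reading essentially all of the model's weights from VRAM, so bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below is a back-of-envelope estimate, assuming the RTX 3090's published 936 GB/s bandwidth and approximate model sizes; it ignores KV-cache reads, compute time, and software overheads, so real throughput will be lower.

```python
# Rough upper bound on single-stream decode speed for a memory-bandwidth-bound LLM.
# Assumption: every generated token reads all weights once from VRAM;
# KV-cache traffic, compute time, and kernel overheads are ignored.

RTX_3090_BANDWIDTH_GBPS = 936.0  # published peak memory bandwidth, GB/s


def max_tokens_per_second(model_size_gb: float,
                          bandwidth_gbps: float = RTX_3090_BANDWIDTH_GBPS) -> float:
    """Return an approximate ceiling on tokens/second for a model of the given size."""
    if model_size_gb <= 0:
        raise ValueError("model size must be positive")
    return bandwidth_gbps / model_size_gb


if __name__ == "__main__":
    # Approximate weight sizes (assumed): ~16 GB for an 8B model at F16,
    # ~4.9 GB for the same model at 4-bit quantization.
    for label, size_gb in [("Llama 3 8B F16", 16.0), ("Llama 3 8B ~4-bit", 4.9)]:
        print(f"{label}: <= {max_tokens_per_second(size_gb):.0f} tokens/s")
```

With these assumed sizes the ceilings are roughly 58 tokens/s at F16 and about 190 tokens/s at 4-bit. Treat them as upper bounds for comparison, not predictions.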

Challenges of Local AI with NVIDIA 3090_24GB:

- Setup and Maintenance: Setting up and maintaining a local AI infrastructure requires technical expertise. You need to navigate hardware, software, and configuration, which can be challenging for someone without a background in computer science and AI.

- Initial Investment: The NVIDIA 3090_24GB is a high-end GPU with a hefty price tag. Investing in a local setup also includes the cost of a compatible PC, a powerful PSU, and other essential components.

- Scalability: While the 3090_24GB is a potent workhorse, scaling your local system for more demanding LLMs or increased workload can be challenging. It might require additional GPUs, a more robust PC, or even a custom-built AI server, adding to the expense and complexity.

Comparison of NVIDIA 3090_24GB and Cloud Options for Llama 3 Models

Now, let's get down to brass tacks and see how the NVIDIA 3090_24GB fares against cloud solutions.

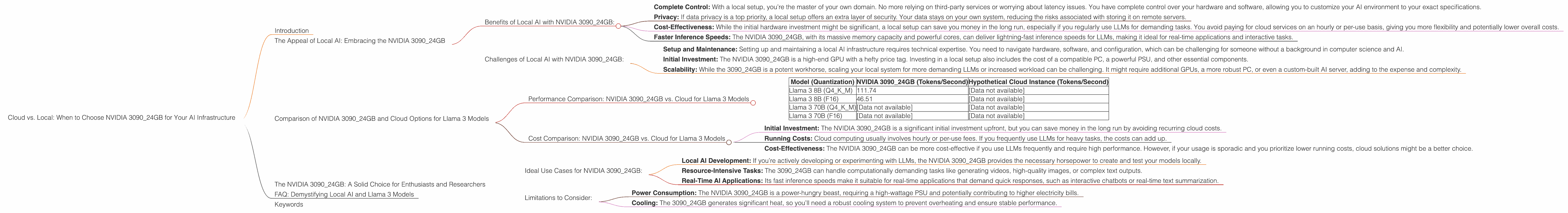

Performance Comparison: NVIDIA 3090_24GB vs. Cloud for Llama 3 Models

We’ll compare the performance of the NVIDIA 3090_24GB with hypothetical cloud instances with similar capabilities. For clarity, we’ll focus on the Llama 3 family, specifically the popular 8B and 70B models.

We will use different quantization levels of the model to explore the impact on performance:

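- Quantization: This technique represents the model’s weights with fewer bits (for example, 4-bit integers instead of 16-bit floats), effectively compressing the model. Smaller weights mean less memory to read for every generated token, which typically speeds up inference at the cost of a small loss in accuracy.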
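To make the idea concrete, here is a minimal sketch of block-wise symmetric 4-bit quantization: a block of weights shares one floating-point scale, and each weight is stored as a small integer. This is a simplified illustration chosen for clarity; it is not the Q4_K_M format used in the benchmarks, which groups weights into larger super-blocks with additional per-sub-block scales and offsets.

```python
# Minimal sketch of block-wise symmetric 4-bit quantization.
# Simplified illustration only; NOT llama.cpp's Q4_K_M format.
from typing import List, Tuple


def quantize_block(values: List[float]) -> Tuple[float, List[int]]:
    """Map floats to 4-bit signed integers in [-8, 7] that share one scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # The largest magnitude maps to +/-7; an all-zero block keeps scale 1.0.
    scale = max_abs / 7.0 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(v / scale))) for v in values]
    return scale, codes


def dequantize_block(scale: float, codes: List[int]) -> List[float]:
    """Reconstruct approximate floats from the shared scale and integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("codes:", codes)
    print(f"max reconstruction error: {max_err:.4f} (scale {scale:.4f})")
```

Each weight now takes 4 bits plus a small share of the block’s scale, versus 16 bits at F16, so the weights shrink roughly fourfold, and rounding keeps each reconstruction error within half a scale step.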

Here’s a breakdown of the performance data for different scenarios.

| Model (Quantization) | NVIDIA 3090_24GB (Tokens/Second) | Hypothetical Cloud Instance (Tokens/Second) |
|---|---|---|
| Llama 3 8B (Q4_K_M) | 111.74 | [Data not available] |
| Llama 3 8B (F16) | 46.51 | [Data not available] |
| Llama 3 70B (Q4_K_M) | [Data not available] | [Data not available] |
| Llama 3 70B (F16) | [Data not available] | [Data not available] |
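Tokens per second is simply the number of tokens produced divided by the wall-clock time taken. The sketch below shows one common way to measure it; `generate_tokens` is a hypothetical stand-in for a real model’s streaming output, not part of any particular library. Dedicated benchmark tools such as llama.cpp’s llama-bench usually report prompt processing and token generation speeds separately, because the two behave very differently.

```python
# Minimal sketch of measuring generation throughput (tokens/second).
# `generate_tokens` is a hypothetical stand-in for a real model's streaming API.
import time
from typing import Callable, Iterable


def tokens_per_second(generate: Callable[[], Iterable[str]]) -> float:
    """Consume a token stream and return tokens produced per wall-clock second."""
    start = time.perf_counter()
    count = sum(1 for _ in generate())
    elapsed = time.perf_counter() - start
    if elapsed <= 0:
        raise ValueError("elapsed time too small to measure")
    return count / elapsed


def generate_tokens() -> Iterable[str]:
    """Hypothetical generator that 'emits' 50 tokens, sleeping ~1 ms per token."""
    for i in range(50):
        time.sleep(0.001)
        yield f"tok{i}"


if __name__ == "__main__":
    print(f"{tokens_per_second(generate_tokens):.1f} tokens/s")
```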

Key Observations:

- Llama 3 8B: The NVIDIA 3090_24GB performs well with the 8B model. Q4_K_M quantization generates tokens about 2.4 times faster than F16 (111.74 vs. 46.51 tokens/second), showing how effectively quantization improves performance.

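- Llama 3 70B: No measurements are available for the 70B model on either the NVIDIA 3090_24GB or the hypothetical cloud instances. For a single 3090 this is unsurprising: the 70B weights need roughly 140GB at F16 and around 40GB even with 4-bit quantization, both well beyond 24GB of VRAM. Running it locally requires multiple GPUs or offloading part of the model to system RAM at a large speed cost, while cloud providers offer instances with more GPU memory.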
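A quick way to check whether a model can run on a single 24GB card is to estimate its weight memory as parameters × bits per weight ÷ 8. The sketch below assumes an average of about 4.8 bits per weight for a 4-bit K-quant and a hypothetical 2GB allowance for the KV cache, activations, and CUDA context; actual overhead grows with context length and varies by runtime.

```python
# Back-of-envelope check: do a model's weights fit in a single 24 GB GPU?
# Assumptions: weight memory = parameters x bits-per-weight / 8, and a fixed
# placeholder overhead for KV cache, activations, and CUDA context.

GPU_VRAM_GB = 24.0
OVERHEAD_GB = 2.0  # hypothetical allowance; varies with context length and runtime


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits_on_gpu(params_billion: float, bits_per_weight: float,
                vram_gb: float = GPU_VRAM_GB, overhead_gb: float = OVERHEAD_GB) -> bool:
    """True if weights plus the assumed overhead fit in the available VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) + overhead_gb <= vram_gb


if __name__ == "__main__":
    # ~4.8 bits/weight is an assumed average for a 4-bit K-quant; F16 is 16 bits.
    for name, params, bits in [("8B F16", 8.0, 16), ("8B ~4-bit", 8.0, 4.8),
                               ("70B F16", 70.0, 16), ("70B ~4-bit", 70.0, 4.8)]:
        need = weight_memory_gb(params, bits)
        print(f"{name}: ~{need:.0f} GB weights, fits: {fits_on_gpu(params, bits)}")
```

Under these assumptions the 8B model fits at either precision, while the 70B model needs about 140GB at F16 and about 42GB at roughly 4.8 bits per weight, so neither variant fits on one card.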

Cost Comparison: NVIDIA 3090_24GB vs. Cloud for Llama 3 Models

It’s hard to provide a definitive price comparison without knowing the specific cloud instance you're using. However, we can make some generalizations:

- Initial Investment: The NVIDIA 3090_24GB requires a significant upfront investment, but it can save you money in the long run by avoiding recurring cloud fees.

- Running Costs: Cloud computing usually involves hourly or per-use fees. If you frequently use LLMs for heavy tasks, the costs can add up.

- Cost-Effectiveness: The NVIDIA 3090_24GB can be more cost-effective if you use LLMs frequently and require high performance. However, if your usage is sporadic, cloud solutions are likely the better choice, since you pay only for the hours you actually use and avoid the upfront hardware cost.
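The trade-off above comes down to a break-even point: the number of usage hours after which the upfront hardware cost is paid back by not renting cloud GPU time. The sketch below models local running cost as electricity only; every price in it is a hypothetical placeholder rather than a real quote, so substitute your own hardware cost, cloud rate, and electricity tariff.

```python
# Hypothetical break-even estimate: local GPU vs. renting a cloud GPU by the hour.
# All prices below are placeholders, not quotes; substitute your own numbers.


def break_even_hours(hardware_cost: float, cloud_rate_per_hour: float,
                     system_power_watts: float, electricity_per_kwh: float) -> float:
    """Hours of use after which owning becomes cheaper than renting.

    Local running cost per hour is modeled as electricity only; maintenance,
    resale value, and cloud storage/egress fees are ignored.
    """
    local_rate_per_hour = system_power_watts / 1000.0 * electricity_per_kwh
    saving_per_hour = cloud_rate_per_hour - local_rate_per_hour
    if saving_per_hour <= 0:
        return float("inf")  # renting never costs more per hour than running locally
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    hours = break_even_hours(hardware_cost=1500.0,       # hypothetical GPU + PC cost
                             cloud_rate_per_hour=0.50,   # hypothetical hourly rate
                             system_power_watts=450.0,   # assumed whole-system draw
                             electricity_per_kwh=0.15)   # hypothetical tariff
    print(f"break-even after ~{hours:.0f} hours (~{hours / 8:.0f} 8-hour days)")
```

If your expected usage over the hardware’s lifetime falls well short of the break-even figure, renting is likely cheaper; if it comfortably exceeds it, owning starts to pay off.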

The NVIDIA 3090_24GB: A Solid Choice for Enthusiasts and Researchers

The NVIDIA 3090_24GB shines in the realm of local AI infrastructure, particularly for enthusiasts and researchers. It offers a powerful platform for exploring and experimenting with LLMs like Llama 3, allowing you to dive deep into the world of AI without relying on cloud services.

Ideal Use Cases for NVIDIA 3090_24GB:

- Local AI Development: If you’re actively developing or experimenting with LLMs, the NVIDIA 3090_24GB provides the horsepower to run, test, and fine-tune smaller models locally.

- Resource-Intensive Tasks: The 3090_24GB can handle computationally demanding tasks like generating videos, high-quality images, or complex text outputs.

- Real-Time AI Applications: Its fast inference speeds make it suitable for real-time applications that demand quick responses, such as interactive chatbots or real-time text summarization.

Limitations to Consider:

- Power Consumption: The NVIDIA 3090_24GB is a power-hungry beast, rated at 350 W of board power. NVIDIA recommends at least a 750 W power supply, and sustained workloads can noticeably raise your electricity bills.

- Cooling: The 3090_24GB generates significant heat, so you’ll need a robust cooling system to prevent overheating and ensure stable performance.

FAQ: Demystifying Local AI and Llama 3 Models

1. What are LLMs, and why are they so popular?

LLMs are AI systems trained on massive datasets of text and code. They can understand and generate human-like text, which lets them translate, write, summarize, and answer questions. They're popular because they can perform tasks that were previously thought to be exclusive to human intelligence.

2. What is quantization, and how does it impact performance?

Quantization is a technique used to compress LLMs by reducing the number of bits used to represent their weights. For example, storing weights in 4 bits instead of 16 shrinks them roughly fourfold without sacrificing too much accuracy. This leads to faster processing speeds and lower memory requirements, making LLMs more efficient.

3. Are there any other GPUs that can be used to run local AI?

Absolutely! There are many other GPUs available, ranging from mid-range options like the NVIDIA RTX 3060 to high-end cards like the NVIDIA RTX 4090. The choice depends on your budget, the complexity of the LLMs you plan to run, and your overall AI workload.

4. How do I choose the right LLM for my needs?

Choosing the right LLM depends on your goals. If you need a model for specific tasks like translation or code generation, consider finding a specialized LLM designed for that purpose. Larger models like the Llama 3 70B offer more versatility but might require more powerful hardware.

5. What are the benefits of using the NVIDIA 3090_24GB over cloud solutions?

The NVIDIA 3090_24GB offers complete control over your AI environment, increased privacy, and potential cost savings in the long run. However, it requires a significant initial investment, technical expertise, and careful maintenance.

Keywords

NVIDIA 3090_24GB, AI infrastructure, cloud vs. local, Llama 3, LLM, GPU, tokens/second, quantization, performance, cost, privacy, scalability, AI development, real-time applications, enthusiasts, researchers, FAQ