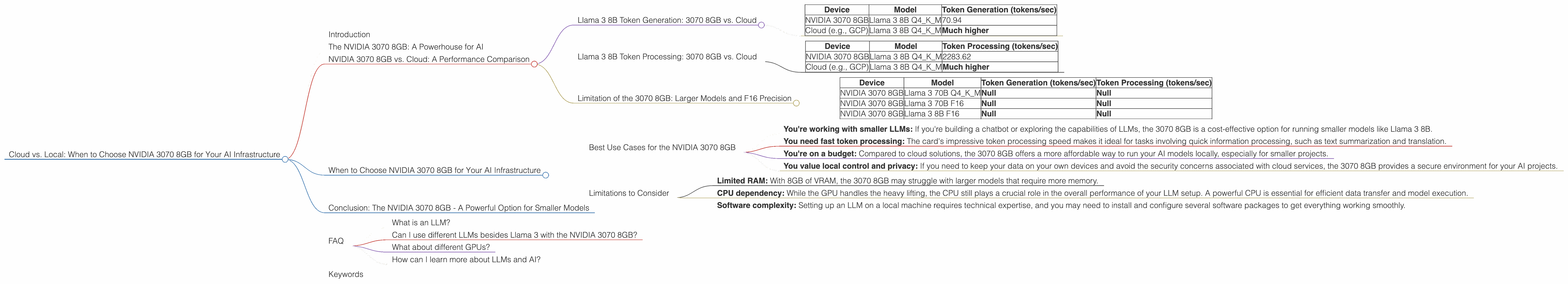

Cloud vs. Local: When to Choose NVIDIA 3070 8GB for Your AI Infrastructure

Introduction

The world of Large Language Models (LLMs) is booming, and with it the demand for hardware that can run these models locally. With so many options available, choosing the right hardware for your AI infrastructure can be challenging. Today we'll look at the NVIDIA GeForce RTX 3070 8GB graphics card: how well it runs LLMs locally, and how that compares with running them in the cloud.

Whether you are a developer looking to build your own AI projects or a tech enthusiast wanting to explore the potential of LLMs, this article will provide valuable insights to help you make an informed decision.

The NVIDIA 3070 8GB: A Powerhouse for AI

The NVIDIA GeForce RTX 3070 8GB is a powerful graphics card that can handle demanding tasks like gaming and video editing. But did you know it's also a capable tool for running smaller, quantized LLMs locally?

Let's dive into the details and see how this card stacks up against the cloud and other potential local options.

NVIDIA 3070 8GB vs. Cloud: A Performance Comparison

Llama 3 8B Token Generation: 3070 8GB vs. Cloud

The NVIDIA 3070 8GB achieves impressive performance on smaller LLMs like Llama 3 8B when they are quantized to 4-bit precision (here Q4_K_M, a 4-bit quantization format used by llama.cpp). As the table below shows, the 3070 8GB generates about 71 tokens per second, which is comfortably fast for interactive chat.

| Device | Model | Token Generation (tokens/sec) |
|---|---|---|
| NVIDIA 3070 8GB | Llama 3 8B Q4_K_M | 70.94 |
| Cloud (e.g., GCP) | Llama 3 8B Q4_K_M | Higher on data-center GPUs (not measured here) |

Note: Cloud providers like Google Cloud Platform (GCP), Amazon Web Services (AWS), and Microsoft Azure offer a vast array of resources, including dedicated GPUs and specialized AI hardware, so actual cloud throughput depends on the instance you rent. For larger LLMs such as Llama 3 70B, the cloud is the practical choice, because a single 8GB card cannot hold the model.

Quantization Explained: Imagine you want to store a number like "123.456". Writing it exactly takes six digits, while rounding it to "123" takes only three and is still close to the original. Quantization does something similar for LLMs: we reduce the number of bits used to store each weight, for example from 16 bits to 4. This makes the model smaller and faster, but it may slightly decrease accuracy.
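To make the idea concrete, here is a minimal sketch of symmetric 4-bit quantization in plain Python. It is an illustration only: the function names and sample weights are made up for this example, and real formats such as Q4_K_M quantize weights in blocks with their own scales rather than using one scale for the whole list.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-7, 7] using a single shared scale.

    Simplified illustration, not the actual Q4_K_M scheme.
    """
    max_abs = max(abs(v) for v in values)
    # One step of the integer grid corresponds to `scale` in float units.
    scale = max_abs / 7 if max_abs else 1.0
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for demonstration.
weights = [0.12, -0.53, 0.98, -0.05, 0.40]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print("max abs error:", round(max_error, 4))
```

Each code fits in 4 bits instead of 16 or 32, and the rounding error per weight is at most half of `scale`. That small error is the accuracy cost mentioned above.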

Llama 3 8B Token Processing: 3070 8GB vs. Cloud

The 3070 8GB also performs well in token processing, that is, reading the input prompt before generation starts. It processes 2283.62 tokens per second for Llama 3 8B using 4-bit quantization.

| Device | Model | Token Processing (tokens/sec) |
|---|---|---|
| NVIDIA 3070 8GB | Llama 3 8B Q4_K_M | 2283.62 |
| Cloud (e.g., GCP) | Llama 3 8B Q4_K_M | Higher on data-center GPUs (not measured here) |

Think of it this way: token processing is the model reading your prompt, and token generation is the model writing its reply. Reading is much faster because all prompt tokens can be processed in parallel, while the reply is produced one token at a time. A high processing speed therefore matters most when the input is long, such as a document you want summarized.
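The two benchmark figures can be combined into a rough response-time estimate. The sketch below assumes throughput stays constant regardless of prompt length, which is a simplification, and the prompt and reply sizes are hypothetical examples.

```python
# Throughput figures for Llama 3 8B Q4_K_M on the 3070 8GB, from the tables above.
PROCESSING_TOKENS_PER_SEC = 2283.62  # reading the prompt
GENERATION_TOKENS_PER_SEC = 70.94    # writing the reply


def estimate_latency(prompt_tokens, output_tokens):
    """Return (prompt_seconds, generation_seconds), assuming constant throughput."""
    prompt_seconds = prompt_tokens / PROCESSING_TOKENS_PER_SEC
    generation_seconds = output_tokens / GENERATION_TOKENS_PER_SEC
    return prompt_seconds, generation_seconds


# Hypothetical request: a 2,000-token prompt and a 500-token reply.
prompt_s, gen_s = estimate_latency(2000, 500)
print(f"prompt: {prompt_s:.2f} s, generation: {gen_s:.2f} s")
```

For this example the prompt is read in under a second, while the reply takes about seven seconds, so generation speed dominates the wait for typical chat use.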

Limitation of the 3070 8GB: Larger Models and F16 Precision

While the 3070 8GB performs admirably for smaller, quantized LLMs, it struggles with larger models like Llama 3 70B and higher precision options like F16. The available data contains no result (Null) for these configurations, which suggests they could not be run on this card.

| Device | Model | Token Generation (tokens/sec) | Token Processing (tokens/sec) |
|---|---|---|---|
| NVIDIA 3070 8GB | Llama 3 70B Q4_K_M | Null | Null |
| NVIDIA 3070 8GB | Llama 3 70B F16 | Null | Null |
| NVIDIA 3070 8GB | Llama 3 8B F16 | Null | Null |

Why this matters: The main constraint is memory. At F16, every weight takes 2 bytes, so even Llama 3 8B needs roughly 16 GB for its weights alone, twice what the 3070 8GB offers. Llama 3 70B needs about 35 GB even at 4 bits per weight. F16 avoids the small accuracy loss of quantization, but on this card neither it nor a 70B model fits in VRAM.
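A back-of-the-envelope check shows why only one of these configurations fits. The sketch below is a lower bound under stated assumptions: parameter counts are rounded to 8 and 70 billion, 4-bit formats are treated as exactly 4 bits per weight (Q4_K_M averages slightly more), and the extra memory for the KV cache and activations is ignored.

```python
VRAM_GB = 8  # RTX 3070 8GB


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


# (label, parameters in billions, bits per weight); rounded, illustrative values.
configs = [
    ("Llama 3 8B, 4-bit", 8, 4),
    ("Llama 3 8B, F16", 8, 16),
    ("Llama 3 70B, 4-bit", 70, 4),
    ("Llama 3 70B, F16", 70, 16),
]

for label, params, bits in configs:
    needed = weight_memory_gb(params, bits)
    verdict = "fits" if needed < VRAM_GB else "does not fit"
    print(f"{label}: ~{needed:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Only the 4-bit 8B model stays under 8 GB, which matches the pattern of Null results in the table.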

When to Choose NVIDIA 3070 8GB for Your AI Infrastructure

Best Use Cases for the NVIDIA 3070 8GB

The NVIDIA 3070 8GB is a solid choice when:

- You're working with smaller LLMs: If you're building a chatbot or exploring the capabilities of LLMs, the 3070 8GB is a cost-effective option for running smaller models like Llama 3 8B.

- You need fast token processing: The card's impressive token processing speed suits tasks with long inputs and short outputs, such as text summarization.

- You're on a budget: If you run models regularly, a one-time hardware purchase can work out cheaper than paying hourly cloud GPU rates, especially for smaller projects.

- You value local control and privacy: If you need to keep your data on your own devices, running on the 3070 8GB means your prompts and outputs never leave your machine.
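Whether local hardware is actually cheaper depends on how many hours you use it. The following sketch computes a break-even point; the function name is made up for this example, and all three prices are hypothetical placeholders, not quotes from any provider or retailer, so substitute your own numbers.

```python
def break_even_hours(hardware_cost, cloud_rate_per_hour, local_power_cost_per_hour=0.0):
    """Hours of use after which buying the card costs less than renting.

    Returns None if renting is never more expensive per hour than running locally.
    """
    saving_per_hour = cloud_rate_per_hour - local_power_cost_per_hour
    if saving_per_hour <= 0:
        return None
    return hardware_cost / saving_per_hour


# Placeholder prices for illustration only (assumptions, not real quotes).
hours = break_even_hours(
    hardware_cost=400.0,             # assumed purchase price of the card
    cloud_rate_per_hour=0.50,        # assumed hourly rate for a cloud GPU
    local_power_cost_per_hour=0.05,  # assumed electricity cost while running
)
print(f"break-even after about {hours:.0f} hours of use")
```

If you expect to use the GPU for fewer hours than the break-even figure, renting may be the cheaper option.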

Limitations to Consider

However, it's important to be aware of the limitations:

- Limited VRAM: With 8GB of VRAM, the 3070 8GB cannot hold larger models, or smaller models at higher precision, entirely on the GPU.

- CPU dependency: While the GPU handles the heavy lifting, the CPU still loads the model, prepares the input, and moves data to and from the GPU. If part of a model has to run on the CPU because it does not fit in VRAM, performance drops sharply, so a reasonably capable CPU still matters.

- Software complexity: Setting up an LLM on a local machine requires technical expertise, and you may need to install and configure several software packages to get everything working smoothly.

Conclusion: The NVIDIA 3070 8GB - A Powerful Option for Smaller Models

The NVIDIA 3070 8GB is a potent tool for running smaller LLMs locally. Its fast token processing speed and affordable price make it an attractive choice for those on a budget and desiring local control over their AI projects. However, when dealing with larger models or requiring high precision, the cloud offers unmatched scalability and performance.

FAQ

What is an LLM?

LLMs, or Large Language Models, are complex AI models trained on massive datasets to understand and generate human-like text. They can be used for various tasks, including translation, summarization, question answering, and even creative writing.

Can I use different LLMs besides Llama 3 with the NVIDIA 3070 8GB?

Yes, you can experiment with other open-source LLMs such as GPT-Neo and LLaMA. Keep in mind that performance varies with the model's size and quantization, and that the model has to fit within the card's 8GB of VRAM.

What about different GPUs?

While we focused on the NVIDIA 3070 8GB, other GPUs such as the RTX 3080 and 3090 offer more compute and more VRAM (24GB on the 3090), which gives them headroom for larger models.

How can I learn more about LLMs and AI?

There are tons of resources available online! Websites like Google AI, Hugging Face, and OpenAI offer excellent documentation and tutorials.

Keywords

NVIDIA 3070 8GB, GPU, LLM, Llama 3, Token Generation, Token Processing, AI, Cloud, Cloud Computing, Local, AI Infrastructure, Quantization, Inference, CPU, RAM, OpenAI, Hugging Face, Google AI