Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA RTX A6000 48GB Benchmark Analysis

Introduction

The world of large language models (LLMs) is rapidly evolving, with new models and applications popping up every day. If you're a developer or enthusiast eager to explore the fascinating world of LLMs, running them locally offers unparalleled control and flexibility. However, choosing the right GPU is crucial for optimal performance. This article dives deep into the capabilities of the NVIDIA RTX A6000 48GB, a powerhouse GPU designed to handle the demanding computational requirements of LLMs. We'll analyze how it performs with the latest Llama 3 models, providing insights into its suitability for various LLM workloads.

Understanding the NVIDIA RTX A6000 48GB

The NVIDIA RTX A6000 is a top-of-the-line workstation GPU designed for demanding tasks like deep learning and scientific computing. Its key features make it a compelling choice for local LLM enthusiasts:

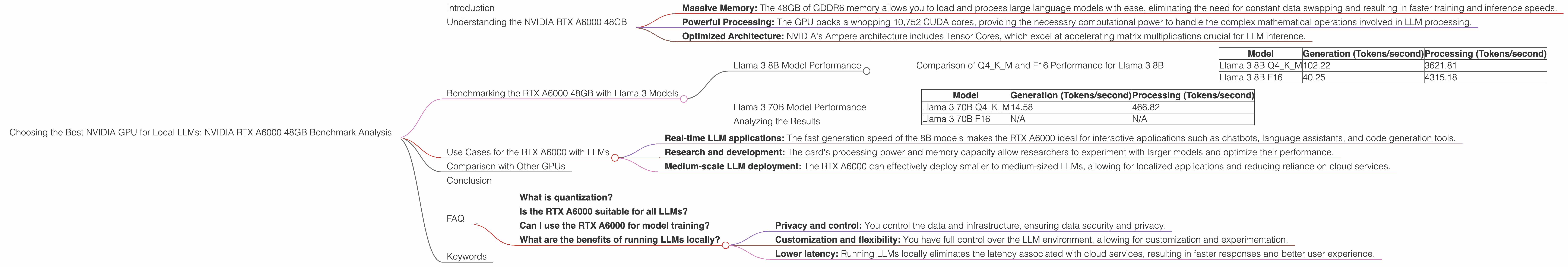

- Massive Memory: The 48GB of GDDR6 memory lets you keep a large language model entirely in GPU memory, avoiding the slowdown that comes from offloading layers to system RAM.

- Powerful Processing: The GPU packs a whopping 10,752 CUDA cores, providing the necessary computational power to handle the complex mathematical operations involved in LLM processing.

- Optimized Architecture: NVIDIA's Ampere architecture includes Tensor Cores, which excel at accelerating matrix multiplications crucial for LLM inference.

Benchmarking the RTX A6000 48GB with Llama 3 Models

We'll focus on Llama 3 models since they represent the current state-of-the-art in open-source LLMs. Before we dive into the results, let's clarify some important terminology:

- Q4KM: Shorthand for Q4_K_M, a 4-bit quantization format used by llama.cpp. Quantization is like a data diet for LLMs: it stores model weights at reduced precision, which shrinks the model and speeds up generation. The name breaks down as Q4 (roughly 4 bits per weight), K (the "k-quant" family, which quantizes weights in blocks with their own scale factors), and M (the medium variant, which keeps some tensors at higher precision than the small variant).

- F16: This represents 16-bit floating-point precision for the model weights. F16 preserves more precision than Q4KM, but the model takes more than three times the memory, which generally makes generation slower.

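- Generation: The speed at which the LLM produces new output tokens, one token at a time.

- Processing: The speed at which the LLM reads the input prompt before it starts generating. In benchmarks of this kind, the figure usually measures prompt processing (sometimes called prefill). Because prompt tokens are handled in parallel, it is far higher than generation speed.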
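To see how the two speed metrics combine, here is a minimal sketch that estimates the response time for a single request. It assumes the prompt is read at the processing rate and the reply is written at the generation rate, with no other overhead. The function name and the workload numbers (a 2,000-token prompt, a 500-token reply, and round rates of 3,600 and 100 tokens per second) are illustrative assumptions, not measurements.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens,
                             processing_tps, generation_tps):
    """Rough request time: read the prompt, then write the reply."""
    prefill_seconds = prompt_tokens / processing_tps   # reading the prompt
    decode_seconds = output_tokens / generation_tps    # writing the reply
    return prefill_seconds + decode_seconds


# Hypothetical workload with round, illustrative rates.
latency = estimate_latency_seconds(
    prompt_tokens=2000,
    output_tokens=500,
    processing_tps=3600.0,
    generation_tps=100.0,
)
print(f"Estimated response time: {latency:.2f} s")
```

With numbers like these, almost all of the time goes to generation, which is why generation speed dominates how responsive a chat-style application feels.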

Llama 3 8B Model Performance

Comparison of Q4KM and F16 Performance for Llama 3 8B

| Model | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|
| Llama 3 8B Q4KM | 102.22 | 3621.81 |
| Llama 3 8B F16 | 40.25 | 4315.18 |

The RTX A6000 handles both variants of the 8B model comfortably. As expected, the Q4KM model has a significant advantage in generation: at 102.22 tokens per second it is about 2.5 times faster than F16 at 40.25. This performance translates to a notably snappy user experience, making it a good fit for real-time applications. The F16 model, on the other hand, leads in processing, reading prompts at 4,315.18 tokens per second versus 3,621.81 for Q4KM, roughly 19% faster. That advantage matters most for workloads dominated by long inputs, such as batch processing of documents.
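The relative differences can be checked directly from the table. The short sketch below uses only the four benchmark figures above; the dictionary layout and variable names are just one convenient way to organize them.

```python
# Benchmark figures for Llama 3 8B, copied from the table (tokens/second).
results = {
    "Q4KM": {"generation": 102.22, "processing": 3621.81},
    "F16": {"generation": 40.25, "processing": 4315.18},
}

# How much faster Q4KM generates, and how much faster F16 processes prompts.
gen_speedup = results["Q4KM"]["generation"] / results["F16"]["generation"]
proc_speedup = results["F16"]["processing"] / results["Q4KM"]["processing"]

print(f"Q4KM generates {gen_speedup:.2f}x faster than F16")        # 2.54x
print(f"F16 processes prompts {proc_speedup:.2f}x faster than Q4KM")  # 1.19x
```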

Llama 3 70B Model Performance

| Model | Generation (tokens/second) | Processing (tokens/second) |
|---|---|---|
| Llama 3 70B Q4KM | 14.58 | 466.82 |
| Llama 3 70B F16 | N/A | N/A |

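The RTX A6000 runs the Llama 3 70B Q4KM model at 14.58 tokens per second, but there are no results for the F16 variant. At 16 bits per weight, a 70B-parameter model needs about 140 GB for its weights alone, far more than the card's 48 GB. This limitation highlights the importance of considering memory requirements when selecting a GPU for LLMs.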
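A back-of-the-envelope estimate makes the memory limit concrete. The sketch below multiplies parameter count by bits per weight to approximate the size of the weights. It rests on several assumptions: nominal parameter counts taken from the model names, an average of about 4.85 bits per weight for Q4KM (an approximation, since the format mixes precisions), and no allowance for the KV cache, activations, or runtime overhead, all of which need additional memory.

```python
VRAM_GB = 48  # RTX A6000 memory capacity

# Assumed average bits per weight; the Q4KM value is an approximation.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4KM": 4.85}

# Nominal parameter counts taken from the model names.
PARAMS = {"Llama 3 8B": 8e9, "Llama 3 70B": 70e9}


def weight_memory_gb(n_params, bits_per_weight):
    """Approximate size of the weights alone, in gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


for model, n_params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size_gb = weight_memory_gb(n_params, bits)
        verdict = "fits" if size_gb < VRAM_GB else "does not fit"
        print(f"{model} {fmt}: ~{size_gb:.1f} GB of weights, "
              f"{verdict} in {VRAM_GB} GB")
```

Under these assumptions, the 70B Q4KM weights come to a little over 42 GB, which leaves only a few gigabytes of headroom for context on a 48 GB card.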

Analyzing the Results

The RTX A6000 excels at running smaller LLMs like the 8B Llama 3, delivering high speeds in both generation and processing. Its memory capacity holds either 8B variant with plenty of room to spare. With the significantly larger 70B model, the card remains usable with the Q4KM variant, although generation drops to 14.58 tokens per second, about one seventh of the 8B Q4KM speed. The F16 variant of the 70B model does not fit in 48 GB at all.
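One plausible explanation for this pattern, offered as a rule of thumb rather than something measured here, is that single-stream generation tends to be limited by memory bandwidth: producing each token requires reading roughly all of the weights once. The sketch below divides the RTX A6000's spec-sheet bandwidth of 768 GB/s by an estimated weight size to get a rough ceiling on generation speed. The parameter counts and the 4.85 bits per weight for Q4KM are the same kind of approximations as before, and the function name is hypothetical.

```python
BANDWIDTH_GB_S = 768.0  # RTX A6000 spec-sheet memory bandwidth

# Assumed average bits per weight; the Q4KM value is an approximation.
BITS = {"F16": 16.0, "Q4KM": 4.85}
# Nominal parameter counts taken from the model names.
PARAMS = {"8B": 8e9, "70B": 70e9}

# Measured generation speeds from the tables above (tokens/second).
measured = {("8B", "Q4KM"): 102.22, ("8B", "F16"): 40.25, ("70B", "Q4KM"): 14.58}


def ceiling_tps(n_params, bits_per_weight):
    """Rough upper bound: one full read of the weights per generated token."""
    weight_gb = n_params * bits_per_weight / 8 / 1e9
    return BANDWIDTH_GB_S / weight_gb


for (size, fmt), tps in measured.items():
    ceiling = ceiling_tps(PARAMS[size], BITS[fmt])
    print(f"Llama 3 {size} {fmt}: measured {tps:.2f} tok/s, "
          f"rough ceiling {ceiling:.1f} tok/s ({tps / ceiling:.0%} of ceiling)")
```

If this model is roughly right, generation speed falls in proportion to the size of the weights, which is consistent with the 70B model being several times slower than the 8B model in the same format.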

Use Cases for the RTX A6000 with LLMs

The RTX A6000 is well-suited for various LLM use cases:

- Real-time LLM applications: The fast generation speed of the 8B models makes the RTX A6000 ideal for interactive applications such as chatbots, language assistants, and code generation tools.

- Research and development: The card's processing power and memory capacity allow researchers to experiment with larger models and optimize their performance.

- Medium-scale LLM deployment: The RTX A6000 can effectively deploy smaller to medium-sized LLMs, allowing for localized applications and reducing reliance on cloud services.

Comparison with Other GPUs

While the RTX A6000's 48 GB is more memory than consumer cards offer, other GPUs may deliver competitive performance for specific LLM models and use cases. For example, the RTX 4090 offers impressive processing speeds for smaller models but has only 24 GB of memory.

Conclusion

The NVIDIA RTX A6000 48GB is a powerful GPU for local LLM deployment, particularly for smaller to medium-sized models. Its combination of memory capacity, processing power, and Tensor Core architecture delivers strong performance across generation and processing tasks. While it cannot fit the largest configurations, such as the F16 variant of the 70B Llama 3, its performance with smaller and quantized models makes it an excellent choice for real-time applications, research, and medium-scale deployments.

FAQ

What is quantization?

Quantization is a technique used to reduce the precision of model weights and activations, resulting in smaller model sizes and faster inference. Think of it like a data diet for LLMs. You're essentially reducing the "calories" of the model without significantly impacting its ability to perform.
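To illustrate the idea, here is a deliberately simplified sketch of 4-bit quantization for one small block of weights. It is not the actual Q4KM scheme, which is more elaborate. It only shows the core trade: each weight becomes a small integer plus a shared scale factor, at the cost of a small rounding error. The function names and sample weights are made up for illustration.

```python
def quantize_block(weights, bits=4):
    """Symmetric quantization: signed integers plus one shared float scale."""
    qmax = 2 ** (bits - 1) - 1                 # 7 for 4-bit values
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round each weight to the nearest integer step and clamp to the range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate weights from the integers and the scale."""
    return [v * scale for v in quantized]


# Made-up sample weights for one block.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]

q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("quantized integers:", q)
print(f"scale: {scale:.4f}, largest rounding error: {max_error:.4f}")
```

Each restored weight lands within half a step (half the scale) of the original, which is the "small loss" that buys the large reduction in size.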

Is the RTX A6000 suitable for all LLMs?

While the RTX A6000 is powerful, it's not suitable for all LLMs. Its memory capacity might be a bottleneck for the larger models, especially those using full precision. For larger models, you might need to explore GPUs with even more memory or utilize model quantization techniques.

Can I use the RTX A6000 for model training?

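Yes, within limits. The RTX A6000's processing power and memory capacity make it a good choice for fine-tuning and for training smaller models. Training needs considerably more memory than inference, because gradients and optimizer state must be stored alongside the weights, so training larger models typically requires multiple GPUs or memory-saving techniques.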

What are the benefits of running LLMs locally?

Running LLMs locally offers numerous benefits:

- Privacy and control: You control the data and infrastructure, ensuring data security and privacy.

- Customization and flexibility: You have full control over the LLM environment, allowing for customization and experimentation.

- Lower latency: Running LLMs locally removes the network round trip to a cloud service, which can mean faster responses and a better user experience.

Keywords

NVIDIA RTX A6000, local LLMs, Llama 3, GPU, quantization, Q4KM, F16, generation, token speed, processing, performance benchmark, memory capacity, deep learning, AI, machine learning.