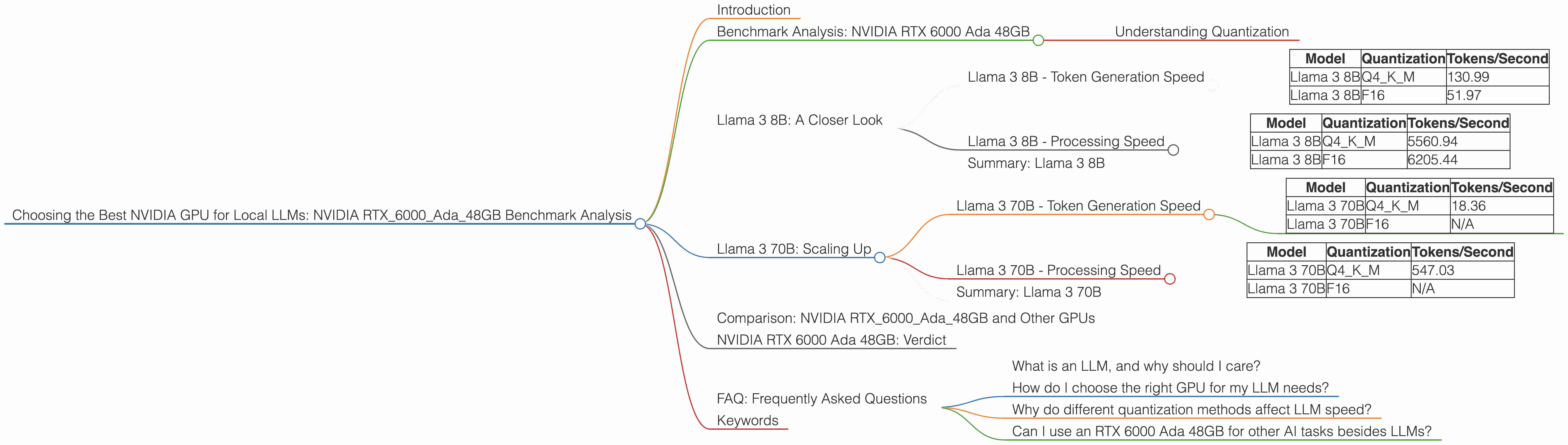

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA RTX 6000 Ada 48GB Benchmark Analysis

Introduction

The world of large language models (LLMs) is buzzing with excitement, and running these models on your own hardware has become a popular pursuit. But getting the right GPU for the job can be tricky, especially considering the vast array of options. Today, we’ll dive into the performance of the NVIDIA RTX 6000 Ada 48GB when it comes to running local LLMs. This powerhouse card is known for its impressive memory and processing capabilities, making it a potential candidate for tackling the demanding task of running LLMs locally.

Think of LLMs like super-smart chatbots that can understand and generate human-like text. They're used in everything from writing emails to creating art, and they're constantly evolving. Running these models locally means you're not relying on cloud services, giving you more control and potentially faster responses.

We'll be exploring the RTX 6000 Ada's performance when running models from the Llama 3 family, and examining how that performance varies with model size and quantization. This benchmark analysis will provide you with the information needed to decide if this GPU is the right choice for your local LLM journey.

Benchmark Analysis: NVIDIA RTX 6000 Ada 48GB

Our focus is on the NVIDIA RTX 6000 Ada 48GB, and we will be examining its performance with the Llama 3 model family. This family offers a range of sizes, from the compact 8B to the massive 70B, giving us a chance to see how the GPU tackles different workloads.

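We'll be looking at two key metrics, both measured in tokens per second: token generation speed and processing speed. Token generation speed is the rate at which the LLM produces new output tokens (roughly, word pieces), while processing speed is the rate at which it reads the input prompt before it starts answering. Both matter for a smooth user experience: the first determines how quickly the answer streams out, the second how long you wait for it to begin.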

Understanding Quantization

Before we dive into the numbers, let's talk about quantization. It's a technique used to reduce the size of LLM models and make them more efficient. Imagine squeezing a giant file into a smaller suitcase – you lose some detail, but you get something more manageable.

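In our case, we'll be comparing two formats: Q4_K_M and F16. Q4_K_M is the "extra compact" suitcase: it stores each weight in roughly 4 to 5 bits, so it's very efficient, but you might lose some accuracy. F16 keeps the weights as 16-bit floating-point numbers, so strictly speaking it is the unquantized baseline rather than a quantization method; it offers better accuracy but needs far more memory.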
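To make the "you lose some detail" idea concrete, here is a minimal sketch of block-wise 4-bit quantization. It is a simplified illustration, not the actual Q4_K_M algorithm: the function names, the block of 32 random weights, and the symmetric absmax scheme are all assumptions chosen for clarity.

```python
import random

def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block to small signed integers."""
    qmax = 2 ** (bits - 1) - 1                       # 7 for 4 bits
    scale = max(abs(v) for v in values) / qmax or 1.0  # avoid a zero scale
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate weights from the stored scale and integer codes."""
    return [scale * c for c in codes]

random.seed(0)
# Hypothetical block of 32 weights; real model weights are not used here.
weights = [random.gauss(0.0, 0.02) for _ in range(32)]

scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(f"scale={scale:.6f}  max abs error={max_error:.6f}")
```

Each weight is stored as a small integer plus one shared scale per block, which is where the size savings come from. The rounding error is bounded by half the scale, which is the "lost detail" in the suitcase analogy.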

Llama 3 8B: A Closer Look

Let's start with the Llama 3 8B model, a good starting point for exploring LLMs locally. It’s a manageable size, offering a good balance between capability and efficiency.

Llama 3 8B - Token Generation Speed

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 130.99 |
| Llama 3 8B | F16 | 51.97 |

As you can see, Q4_K_M quantization significantly boosts token generation speed, to about 2.5 times the F16 rate (130.99 vs. 51.97 tokens/second). This means the model can spit out words much faster when using the more compact Q4_K_M format.

Think of it like this: Using Q4_K_M is like having a turbocharged engine for your LLM. It's smaller and more efficient, and it zooms through your requests much faster.

Llama 3 8B - Processing Speed

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 8B | Q4_K_M | 5560.94 |
| Llama 3 8B | F16 | 6205.44 |

In terms of processing speed, the RTX 6000 Ada 48GB is a beast. Interestingly, the ranking flips here: F16 edges out the more compact Q4_K_M, at 6205.44 vs. 5560.94 tokens/second. The difference is only about 12%, but it's worth noting that the smaller format speeds up token generation without speeding up processing.

Summary: Llama 3 8B

Overall, the RTX 6000 Ada 48GB performs exceptionally well with the Llama 3 8B model. It achieves excellent token generation speed with Q4_K_M, making it well suited to interactive sessions. Processing speed is also high in both formats, at more than 5,500 tokens/second, so a prompt of a couple of thousand tokens is read in well under a second.
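The two metrics can be combined into a rough estimate of how long one chat turn takes. The sketch below uses the Llama 3 8B figures from the tables above; the function name, the 1,000-token prompt, and the 300-token reply are hypothetical values chosen for illustration, and the estimate ignores model loading and other overhead.

```python
def estimate_latency_seconds(prompt_tokens, output_tokens,
                             processing_tps, generation_tps):
    """Time to read the prompt plus time to generate the reply, in seconds."""
    return prompt_tokens / processing_tps + output_tokens / generation_tps

# Llama 3 8B figures from the tables above (tokens/second).
LLAMA3_8B = {
    "4-bit": {"processing": 5560.94, "generation": 130.99},
    "F16": {"processing": 6205.44, "generation": 51.97},
}

# Hypothetical chat turn: 1,000-token prompt, 300-token reply.
latencies = {
    fmt: estimate_latency_seconds(1000, 300, r["processing"], r["generation"])
    for fmt, r in LLAMA3_8B.items()
}

for fmt, seconds in latencies.items():
    print(f"{fmt}: {seconds:.2f} s")
```

Under these assumptions the 4-bit format finishes the turn in roughly 2.5 seconds, against roughly 5.9 seconds for F16. Generation time dominates in both cases, which is why the small processing-speed advantage of F16 barely matters for interactive use.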

Llama 3 70B: Scaling Up

Next, we venture into the realm of larger LLMs with the Llama 3 70B model. This behemoth brings significantly greater capabilities, but also demands more computational resources.

Llama 3 70B - Token Generation Speed

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 70B | Q4_K_M | 18.36 |
| Llama 3 70B | F16 | N/A |

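Here we see a pronounced drop in token generation speed compared to the smaller 8B model: 18.36 tokens/second with Q4_K_M, roughly one-seventh of the 8B figure. This is expected, since the larger model has far more weights to read for every token. The RTX 6000 Ada 48GB still manages a usable speed with Q4_K_M. No F16 result is available, most likely because the F16 weights of a 70B model (about 140 GB at 2 bytes per parameter) do not fit in 48 GB of VRAM.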
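A quick back-of-the-envelope check shows which configurations can fit on the card at all. This is a sketch under stated assumptions: it counts only the weights (the KV cache and activations need additional memory), uses the nominal 8B and 70B parameter counts, and assumes an average of about 4.8 bits per weight for the 4-bit format, which is an illustrative figure rather than a measured one.

```python
VRAM_GB = 48  # memory on the RTX 6000 Ada 48GB

# Assumption: average bits per weight for the 4-bit format, including
# per-block scales. Illustrative value, not taken from the benchmark.
ASSUMED_4BIT_BPW = 4.8

def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate size of the weights alone, in GB (1 GB = 10^9 bytes)."""
    return params_billion * bits_per_weight / 8

def fits(params_billion, bits_per_weight, vram_gb=VRAM_GB):
    """True if the weights alone are smaller than the available VRAM."""
    return weight_memory_gb(params_billion, bits_per_weight) < vram_gb

configs = [
    ("Llama 3 8B, F16", 8, 16),
    ("Llama 3 8B, 4-bit", 8, ASSUMED_4BIT_BPW),
    ("Llama 3 70B, F16", 70, 16),
    ("Llama 3 70B, 4-bit", 70, ASSUMED_4BIT_BPW),
]

for label, params, bits in configs:
    size = weight_memory_gb(params, bits)
    verdict = "fits" if fits(params, bits) else "does not fit"
    print(f"{label}: ~{size:.0f} GB of weights, {verdict} in {VRAM_GB} GB")
```

With these numbers, the 70B model in F16 needs about 140 GB for weights alone, while the 4-bit version comes in at around 42 GB, which fits but leaves limited headroom for context.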

Llama 3 70B - Processing Speed

| Model | Quantization | Tokens/Second |
|---|---|---|
| Llama 3 70B | Q4_K_M | 547.03 |
| Llama 3 70B | F16 | N/A |

While the token generation speed is slower, processing speed holds up reasonably well: 547.03 tokens/second with Q4_K_M is about one-tenth of the 8B figure, but still fast enough to read a 1,000-token prompt in under two seconds. The GPU's processing power and its 48 GB of memory make it suitable for handling the heavy lifting required by larger models.

Summary: Llama 3 70B

The RTX 6000 Ada 48GB does a commendable job with the Llama 3 70B, showing that it can run a model of this size with Q4_K_M quantization. Token generation and processing are roughly 7 and 10 times slower than with the 8B model, respectively, but both remain usable.
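The cost of scaling up can be quantified directly from the 4-bit results in the tables above. The short sketch below compares the slowdown in each metric with the nominal size ratio of the two models; the variable and function names are illustrative.

```python
# 4-bit results from the tables above (tokens/second).
GENERATION_TPS = {"8B": 130.99, "70B": 18.36}
PROCESSING_TPS = {"8B": 5560.94, "70B": 547.03}

def slowdown(tps_by_model):
    """How many times slower the 70B model is than the 8B model."""
    return tps_by_model["8B"] / tps_by_model["70B"]

nominal_size_ratio = 70 / 8  # based on the model names, not exact counts
generation_slowdown = slowdown(GENERATION_TPS)
processing_slowdown = slowdown(PROCESSING_TPS)

print(f"nominal size ratio:  {nominal_size_ratio:.2f}x")
print(f"generation slowdown: {generation_slowdown:.2f}x")
print(f"processing slowdown: {processing_slowdown:.2f}x")
```

Generation slows down by about 7.1x, somewhat less than the 8.75x nominal size ratio, while processing slows down by about 10.2x, somewhat more.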

Comparison: NVIDIA RTX 6000 Ada 48GB and Other GPUs

Unfortunately, we lack data for other GPU models, so we can't make a comprehensive comparison. What we can say is that the RTX 6000 Ada 48GB delivers solid results across the Llama 3 configurations tested above.

NVIDIA RTX 6000 Ada 48GB: Verdict

The NVIDIA RTX 6000 Ada 48GB proves itself a capable GPU for local LLMs, especially for smaller models like the Llama 3 8B. Its strong performance with Q4_K_M quantization makes it ideal for interactive sessions and workloads where speed is critical. While the performance with the larger Llama 3 70B model is still good, it's a reminder that bigger LLMs require more resources.

FAQ: Frequently Asked Questions

What is an LLM, and why should I care?

An LLM, or large language model, is a type of AI that excels at understanding and generating human-like text. Think of them as super-smart chatbots that can write stories, translate languages, and more. Running LLMs locally gives you more control and potentially faster responses.

How do I choose the right GPU for my LLM needs?

Consider the size of the LLM you want to run, the level of performance you need, and the type of tasks you'll be performing. For smaller models, the RTX 6000 Ada 48GB might be a good option, offering a great balance of speed and efficiency.

Why do different quantization methods affect LLM speed?

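Quantization compresses the model's weights, so there is less data to store and to read from memory. Token generation has to read the weights for every new token, so smaller weights usually mean faster generation; in the benchmarks above, Q4_K_M generates about 2.5 times faster than F16 on Llama 3 8B. Processing speed benefits much less (F16 was actually slightly faster there). Different methods use different levels of compression, leading to different trade-offs between speed, memory use, and accuracy.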
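One common way to reason about this is a memory-bandwidth bound: if generating each token requires reading all the weights once, generation speed cannot exceed bandwidth divided by model size. The sketch below applies that rule of thumb. It rests on several assumptions that are not part of the benchmark data: a peak memory bandwidth of 960 GB/s (NVIDIA's published figure for this card, to the best of our knowledge), nominal parameter counts, and roughly 4.8 bits per weight for the 4-bit format.

```python
# Assumptions, not benchmark data:
ASSUMED_BANDWIDTH_GB_S = 960  # published peak memory bandwidth (assumed)
ASSUMED_4BIT_BPW = 4.8        # illustrative average bits per weight

def bandwidth_bound_tps(bandwidth_gb_s, params_billion, bits_per_weight):
    """Upper bound on tokens/second if each token reads all weights once."""
    weights_gb = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / weights_gb

# (label, parameters in billions, bits per weight, measured tokens/second)
cases = [
    ("Llama 3 8B, F16", 8, 16, 51.97),
    ("Llama 3 8B, 4-bit", 8, ASSUMED_4BIT_BPW, 130.99),
    ("Llama 3 70B, 4-bit", 70, ASSUMED_4BIT_BPW, 18.36),
]

for label, params, bits, measured in cases:
    bound = bandwidth_bound_tps(ASSUMED_BANDWIDTH_GB_S, params, bits)
    print(f"{label}: bound ~{bound:.0f}, measured {measured} tokens/s")
```

Under these assumptions every measured generation speed sits below its bound, and the smaller the weights, the higher the ceiling. That is consistent with the idea that generation is limited mainly by how fast the weights can be read, though this simple model ignores the KV cache and compute costs.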

Can I use an RTX 6000 Ada 48GB for other AI tasks besides LLMs?

Yes, the RTX 6000 Ada 48GB is designed as a powerful GPU suitable for a wide range of AI tasks, including machine learning, deep learning, and computer vision.

Keywords

NVIDIA RTX 6000 Ada 48GB, GPU, LLM, Llama 3, benchmark, performance, token generation, processing speed, quantization, Q4_K_M, F16, local AI, AI inference, deep learning, machine learning, computer vision