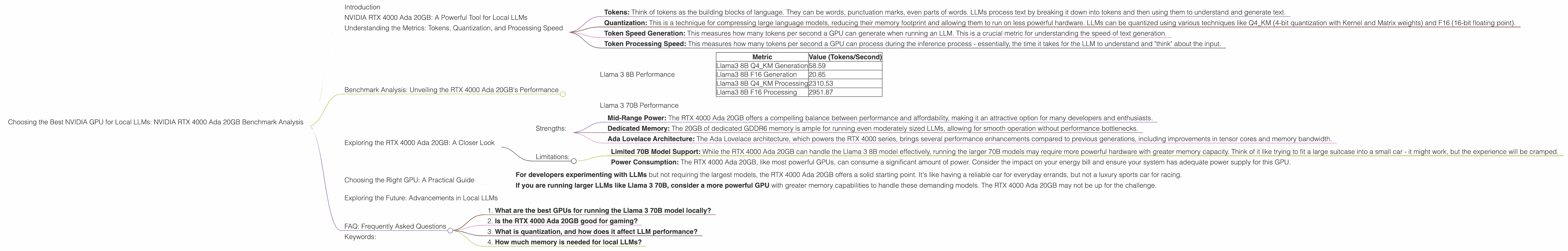

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA RTX 4000 Ada 20GB Benchmark Analysis

Introduction

Have you ever wanted to run a large language model (LLM) like Llama 3 directly on your own computer, rather than relying on cloud services? You're not alone! Local LLMs offer the potential for faster response times, greater privacy, and even offline access to these powerful AI models. But with so many different GPUs available, choosing the right one for optimal performance can feel like wading through a sea of technical jargon.

This article dives deep into the performance of the NVIDIA RTX 4000 Ada 20GB graphics card, specifically for running local LLMs. We'll analyze its strengths and limitations, highlighting the key metrics you should consider when making your purchasing decision.

NVIDIA RTX 4000 Ada 20GB: A Powerful Tool for Local LLMs

The RTX 4000 Ada 20GB is a mid-range professional workstation GPU aimed at workloads such as 3D design, video editing, and AI, which makes it a natural candidate for running local LLMs. Let's examine its LLM performance in detail.

Understanding the Metrics: Tokens, Quantization, and Processing Speed

Before we dive into the benchmarks, let's clarify a few key terms:

- Tokens: Think of tokens as the building blocks of language. They can be words, punctuation marks, even parts of words. LLMs process text by breaking it down into tokens and then using them to understand and generate text.

- Quantization: This is a technique for compressing large language models by storing their weights at lower numeric precision, reducing their memory footprint and allowing them to run on less powerful hardware. The two formats benchmarked here are Q4_KM (written Q4_K_M in llama.cpp: a 4-bit "k-quant" scheme in its medium-size variant) and F16 (16-bit floating point, which is effectively the uncompressed baseline rather than a quantized format).

- Token Speed Generation: This measures how many tokens per second a GPU can generate when running an LLM. This is a crucial metric for understanding the speed of text generation.

- Token Processing Speed: This measures how many tokens per second a GPU can process when reading the input prompt, before any output is generated. It determines how long you wait for the first token of a response, especially with long prompts.

Benchmark Analysis: Unveiling the RTX 4000 Ada 20GB's Performance

Let's examine the performance of the RTX 4000 Ada 20GB, specifically for running the Llama 3 8B and Llama 3 70B models. Remember, we're focusing on the RTX 4000 Ada 20GB, so no other GPUs are included in the analysis.

Llama 3 8B Performance

The RTX 4000 Ada 20GB performs admirably with the Llama 3 8B model, running it entirely in GPU memory in both Q4_KM and F16 formats.

| Metric | Value (Tokens/Second) |
|---|---|
| Llama3 8B Q4_KM Generation | 58.59 |
| Llama3 8B F16 Generation | 20.85 |
| Llama3 8B Q4_KM Processing | 2310.53 |
| Llama3 8B F16 Processing | 2951.87 |
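To make these figures more concrete, here is a rough sketch that turns the table's rates into an estimated response time. The workload (a 1,000-token prompt and a 300-token reply) and the function name `estimate_latency_seconds` are illustrative assumptions, and the model ignores startup cost and any slowdown at long context lengths.

```python
# Figures copied from the benchmark table (tokens/second).
BENCHMARKS = {
    "Q4_KM": {"processing": 2310.53, "generation": 58.59},
    "F16": {"processing": 2951.87, "generation": 20.85},
}


def estimate_latency_seconds(prompt_tokens, output_tokens, fmt):
    """Rough end-to-end time: prompt processing plus token generation."""
    rates = BENCHMARKS[fmt]
    prompt_time = prompt_tokens / rates["processing"]
    generation_time = output_tokens / rates["generation"]
    return prompt_time + generation_time


# Hypothetical workload: a 1,000-token prompt and a 300-token reply.
for fmt in BENCHMARKS:
    total = estimate_latency_seconds(1000, 300, fmt)
    print(f"{fmt}: about {total:.1f} s")
```

Under these assumptions the total comes to roughly 5.6 seconds for Q4_KM and 14.7 seconds for F16. Generation dominates in both cases, which is why the generation rate matters most for interactive use.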

Decoding the Results:

- Faster Text Generation: The RTX 4000 Ada 20GB can generate text at a rate of 58.59 tokens/second with the Llama 3 8B model using the Q4_KM quantization technique. Think of this like typing at a phenomenal speed - much faster than what you could ever achieve manually.

- Processing Power: The RTX 4000 Ada 20GB handles 2951.87 tokens/second when processing prompts for the Llama 3 8B model in F16 (16-bit floating point). This speed determines how quickly the model can read a long prompt or document before it starts answering.

Understanding Quantization Impact:

As you can see, the performance varies depending on the format used. Q4_KM, the more aggressive compression, generates text about 2.8 times faster than F16 (58.59 vs. 20.85 tokens/second) but processes prompts about 22% slower (2310.53 vs. 2951.87 tokens/second). The choice will depend on your priorities: Q4_KM for faster generation and a smaller memory footprint, or F16 for faster prompt processing and uncompressed weights.
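The trade-off can be checked with a few lines of arithmetic on the benchmark table. This is only a sketch, and the variable names are illustrative.

```python
# Tokens/second from the benchmark table.
generation = {"Q4_KM": 58.59, "F16": 20.85}
processing = {"Q4_KM": 2310.53, "F16": 2951.87}

# How many times faster Q4_KM generates text than F16.
generation_speedup = generation["Q4_KM"] / generation["F16"]

# Q4_KM prompt-processing rate as a fraction of the F16 rate.
processing_ratio = processing["Q4_KM"] / processing["F16"]

print(f"Q4_KM generation speedup over F16: {generation_speedup:.2f}x")
print(f"Q4_KM prompt processing relative to F16: {processing_ratio:.0%}")
```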

Llama 3 70B Performance

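Unfortunately, we lack benchmark data for the RTX 4000 Ada 20GB running the Llama 3 70B model. This larger model demands hardware with more memory: even at 4-bit quantization its weights come to roughly 40GB, about twice this card's 20GB, so it cannot run entirely in GPU memory.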

Exploring the RTX 4000 Ada 20GB: A Closer Look

While the RTX 4000 Ada 20GB exhibits strong performance with the Llama 3 8B model, let's dive deeper into its features and limitations.

Strengths:

- Mid-Range Power: The RTX 4000 Ada 20GB offers a compelling balance between performance and affordability, making it an attractive option for many developers and enthusiasts.

- Dedicated Memory: The 20GB of dedicated GDDR6 memory is enough to hold the Llama 3 8B model entirely on the GPU, even in F16 (about 16GB of weights), so no part of the model has to be offloaded to slower system memory.

- Ada Lovelace Architecture: The Ada Lovelace architecture, which powers the RTX 4000 Ada, brings several performance enhancements compared to previous generations, including fourth-generation Tensor Cores and a larger L2 cache.

Limitations:

- Limited 70B Model Support: While the RTX 4000 Ada 20GB can handle the Llama 3 8B model effectively, running the larger 70B model requires hardware with considerably more memory. Think of it like trying to fit a large suitcase into a small car: part of the load has to go somewhere else (here, slower system RAM), and speed suffers badly.

- Power Consumption: The RTX 4000 Ada 20GB is rated at roughly 130W, which is modest for a GPU of this class, but it still adds to your energy bill under sustained load. Make sure your system has an adequate power supply and cooling for this GPU.

Choosing the Right GPU: A Practical Guide

The RTX 4000 Ada 20GB presents a compelling option for running local LLMs, particularly models in the size class of Llama 3 8B. However, the choice ultimately depends on your specific needs and budget.

- For developers experimenting with LLMs but not requiring the largest models, the RTX 4000 Ada 20GB offers a solid starting point. It's like having a reliable car for everyday errands, but not a luxury sports car for racing.

- If you are running larger LLMs like Llama 3 70B, consider a more powerful GPU with greater memory capabilities to handle these demanding models. The RTX 4000 Ada 20GB may not be up for the challenge.

Exploring the Future: Advancements in Local LLMs

The field of local LLMs is constantly evolving, with exciting developments happening all the time. We're witnessing improvements in quantization techniques, new hardware architectures, and more efficient software implementations. These advances will make running LLMs locally even more accessible and performant in the future.

FAQ: Frequently Asked Questions

1. What are the best GPUs for running the Llama 3 70B model locally?

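No single consumer GPU holds Llama 3 70B comfortably. At 4-bit quantization the weights alone are roughly 40GB, which exceeds the 24GB of the NVIDIA RTX 4090, the 16GB of the RTX 4080, and the 20GB of the AMD Radeon RX 7900 XT. Practical options include splitting the model across multiple GPUs, using a workstation card with 48GB of memory, or offloading part of the model to system RAM at a substantial cost in speed.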

2. Is the RTX 4000 Ada 20GB good for gaming?

It can run modern games and supports features like ray tracing and DLSS, but it is sold as a professional workstation card, with pricing and drivers aimed at that market. If gaming is your main goal, a GeForce card is usually the better value.

3. What is quantization, and how does it affect LLM performance?

Quantization is essentially a process of compressing the large language model, reducing its memory footprint and allowing it to run on less powerful hardware. Think of it like compressing a large file so it takes up less space on your computer. By compressing the model, you can run it on GPUs with less memory. However, quantization can sometimes lead to some loss in accuracy or performance.
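To show where the accuracy loss comes from, here is a minimal sketch of 4-bit quantization using one shared scale per block of weights. This is a simplified scheme chosen for illustration and is not the Q4_KM algorithm itself; the function names and sample weights are made up.

```python
def quantize_block(weights, bits=4):
    """Map floats to small signed integers that share a single scale factor."""
    qmax = 2 ** (bits - 1) - 1  # 7 for 4-bit values
    scale = max(abs(w) for w in weights) / qmax
    if scale == 0.0:
        return [0] * len(weights), 0.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale


def dequantize_block(quantized, scale):
    """Recover approximate floats from the integers and the shared scale."""
    return [q * scale for q in quantized]


# Made-up sample weights for illustration.
weights = [0.12, -0.53, 0.91, -0.07, 0.33, -0.88, 0.05, 0.64]
q, scale = quantize_block(weights)
restored = dequantize_block(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)
print(f"largest rounding error: {max_error:.3f}")
```

Each weight is stored as an integer between -7 and 7 plus one shared scale per block, so storage shrinks to roughly a quarter of F16, while every restored value can be off by up to half the scale.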

4. How much memory is needed for local LLMs?

The memory required for local LLMs varies based on the model size and quantization technique. For the Llama 3 8B model with Q4_KM quantization, the weights take roughly 5GB, so around 8GB of GPU memory leaves room for the context cache and runtime overhead. For the Llama 3 70B model, you'll require significantly more: the 4-bit weights alone are roughly 40GB, well beyond any single 24GB card.
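As a back-of-the-envelope check, weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below assumes nominal parameter counts of 8 and 70 billion and an average of about 4.8 bits per weight for Q4_KM; real model files vary, and the estimate excludes the context cache and runtime overhead.

```python
GPU_MEMORY_GB = 20  # RTX 4000 Ada 20GB

# Assumed average bits per weight; real model files vary by format and version.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_KM": 4.8}


def weight_memory_gb(params_billion, bits_per_weight):
    """Weights-only size in GB (10**9 bytes); excludes KV cache and overhead."""
    return params_billion * bits_per_weight / 8


for name, params_billion in [("Llama 3 8B", 8), ("Llama 3 70B", 70)]:
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_memory_gb(params_billion, bits)
        verdict = "fits" if size < GPU_MEMORY_GB else "does not fit"
        print(f"{name} {fmt}: ~{size:.1f} GB of weights, {verdict} in {GPU_MEMORY_GB} GB")
```

With these assumptions, both 8B formats fit within 20GB (F16 with little headroom), while the 70B model does not fit in either format.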

Keywords:

NVIDIA RTX 4000 Ada 20GB, Local LLMs, Llama 3 8B, Llama 3 70B, GPU Benchmark, Tokens, Quantization, Q4_KM, F16, Token Speed Generation, Token Processing Speed, Mid-Range GPU, Performance Analysis, AI, Machine Learning, Deep Learning, Natural Language Processing, NLP, GPU Memory, Ada Lovelace Architecture, Local LLM Performance, GPU Choice, Local LLM Development.