Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA A40 48GB Benchmark Analysis

Introduction

Large language models (LLMs) are developing quickly, with new models and applications appearing all the time. These AI systems can generate text, translate languages, write creative content, and answer questions, including open-ended, challenging, or unusual ones.

But running these LLMs locally on your own machine can be a challenge. LLMs are computationally intensive: they need enough memory to hold billions of weights and enough compute to work through them for every token. This is where dedicated GPUs come into play, offering the memory and processing power that local LLMs need.

In this article, we look at running LLMs locally on the NVIDIA A40 48GB GPU. We analyze its performance with the Llama 3 models, covering its strengths, its limitations, and what they mean for your local LLM experience.

NVIDIA A40 48GB: A Powerhouse for LLMs

The NVIDIA A40 48GB is a high-end data center GPU designed for demanding workloads like AI training and inference. It carries 48GB of GDDR6 memory with ECC, enough to hold large models entirely on the card, particularly when they are quantized.

This GPU is built on the NVIDIA Ampere architecture, which is well suited to AI workloads. But how does it perform in the real world? Let's put the A40 48GB to the test with some popular LLMs.

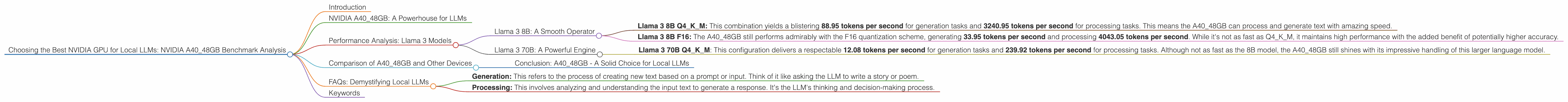

Performance Analysis: Llama 3 Models

Let's start with the Llama 3 family of LLMs, known for their strong performance on complex language tasks. We'll analyze the A40 48GB's performance with both the smaller 8B and the larger 70B models, using two different quantization schemes:

- Q4_K_M: This quantization scheme uses roughly 4 bits to represent each weight of the model, taking up much less memory but potentially sacrificing some accuracy. Think of it like packing a backpack with compressed, lightweight versions of every item - everything fits in a smaller bag, but some detail is lost.

- F16: This uses 16 bits (half-precision floating point) to represent each weight, offering higher precision but requiring several times more memory than a 4-bit scheme. Imagine packing the same items at full size - nothing is lost, but you need a much bigger backpack.
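To make the memory trade-off concrete, the sketch below estimates weight size as parameters times bits per weight, divided by 8. It is a rough approximation, not a measurement: the 4.85 bits-per-weight figure for the 4-bit scheme is an assumed effective value, the helper names are made up for illustration, and the estimate ignores the KV cache and other runtime overhead.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumption: ~4.85 effective bits per weight for the 4-bit scheme.
# This value is approximate and does not come from the article.
GPU_MEMORY_GB = 48  # memory capacity of the A40


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_on_gpu(params_billion: float, bits_per_weight: float,
                gpu_gb: float = GPU_MEMORY_GB) -> bool:
    """True if the weights alone fit; ignores KV cache and activations."""
    return weight_memory_gb(params_billion, bits_per_weight) < gpu_gb


for params in (8, 70):
    for label, bits in (("4-bit", 4.85), ("F16", 16)):
        size = weight_memory_gb(params, bits)
        verdict = "fits" if fits_on_gpu(params, bits) else "does not fit"
        print(f"{params}B {label}: ~{size:.1f} GB, {verdict} in {GPU_MEMORY_GB} GB")
```

Under these assumptions, the 70B model fits on a 48GB card only in its 4-bit form, and only with a few gigabytes left over for everything else.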

Llama 3 8B: A Smooth Operator

The A40 48GB handles the Llama 3 8B model comfortably, delivering high token generation speeds.

- Llama 3 8B Q4_K_M: This combination yields 88.95 tokens per second for generation and 3240.95 tokens per second for prompt processing. This means the A40 48GB both reads prompts and writes responses quickly with this model.

- Llama 3 8B F16: The A40 48GB still performs well with the F16 format, generating 33.95 tokens per second and processing 4043.05 tokens per second. Generation is slower than with Q4_K_M, but prompt processing is actually faster, and F16 has the added benefit of potentially higher accuracy.
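The two results above can be compared directly. The short sketch below computes the ratio between the Q4_K_M and F16 rates for each phase, using only the figures quoted in this section; the dictionary layout and function name are illustrative.

```python
# Llama 3 8B results on the A40 quoted above, in tokens per second.
results = {
    "Q4_K_M": {"generation": 88.95, "processing": 3240.95},
    "F16": {"generation": 33.95, "processing": 4043.05},
}


def ratio(metric: str, a: str = "Q4_K_M", b: str = "F16") -> float:
    """How many times faster (or slower) format a is than format b."""
    return results[a][metric] / results[b][metric]


gen_ratio = ratio("generation")
proc_ratio = ratio("processing")
print(f"Generation: Q4_K_M runs at {gen_ratio:.2f}x the F16 rate")
print(f"Processing: Q4_K_M runs at {proc_ratio:.2f}x the F16 rate")
```

A ratio above 1 means Q4_K_M is faster for that phase; a ratio below 1 means F16 is faster.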

Think of it this way: the A40 48GB is like a Formula 1 race car, effortlessly zipping through the track with the 8B model. Even when carrying the extra weight of the F16 quantization, it still manages to maintain a respectable speed.

Llama 3 70B: A Powerful Engine

The A40 48GB also handles the larger Llama 3 70B model, demonstrating its ability to take on demanding workloads. We'll analyze the results using the Q4_K_M quantization scheme, as F16 data is not available. That is likely because 70 billion weights at 16 bits each need about 140GB, far more than the card's 48GB.

- Llama 3 70B Q4_K_M: This configuration delivers a respectable 12.08 tokens per second for generation and 239.92 tokens per second for prompt processing. That is far slower than the 8B model, but the A40 48GB still runs this much larger language model on a single card.

Imagine the A40 48GB as a powerful freight train, hauling a massive cargo of data (the 70B model) across the tracks. While it might not be as nimble as a race car, it demonstrates its incredible strength and capacity.
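One way to read the gap between the 8B and 70B generation speeds is through memory bandwidth. A common rule of thumb is that generating one token requires reading every weight once, so bandwidth divided by weight size gives a rough ceiling on tokens per second. The sketch below applies that rule under stated assumptions: the 696 GB/s figure is NVIDIA's published A40 memory bandwidth rather than anything measured in this article, and the 4.85 bits per weight for Q4_K_M is an approximate value chosen for illustration.

```python
# Assumption: published A40 memory bandwidth, not a figure from the article.
A40_BANDWIDTH_GB_S = 696


def generation_ceiling(weight_gb: float,
                       bandwidth_gb_s: float = A40_BANDWIDTH_GB_S) -> float:
    """Rough upper bound on tokens/s if every token reads all weights once."""
    return bandwidth_gb_s / weight_gb


# name -> (parameters in billions, assumed bits per weight, measured tokens/s)
configs = {
    "8B Q4_K_M": (8, 4.85, 88.95),
    "8B F16": (8, 16, 33.95),
    "70B Q4_K_M": (70, 4.85, 12.08),
}

ceilings = {}
for name, (params_b, bits, measured) in configs.items():
    weight_gb = params_b * bits / 8  # billions of params x bytes per param
    ceilings[name] = generation_ceiling(weight_gb)
    print(f"{name}: ~{weight_gb:.1f} GB of weights, "
          f"ceiling ~{ceilings[name]:.0f} tok/s, measured {measured} tok/s")
```

The measured rates all sit below these rough ceilings and follow the same ordering, which is consistent with generation speed being limited largely by how much weight data has to be read per token.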

Comparison of A40 48GB and Other Devices

While this article focuses on the A40 48GB, it's worth comparing it with other popular hardware for local LLMs. Unfortunately, specific data for other devices with Llama 3 models is not readily available here, so the notes below rest on general trends and published specifications rather than on measurements.

- NVIDIA RTX 4090: This consumer-level GPU is often favored for its performance and value. It is a newer and very fast card, but its 24GB of memory is half the A40 48GB's capacity, which limits the model sizes and precisions it can hold on a single card.

- AMD Radeon RX 7900 XTX: AMD's contender, the RX 7900 XTX offers strong performance for local LLMs and has the same 24GB of memory as the RTX 4090. How close it comes to the RTX 4090 in practice depends on the model and the software stack.

- Apple M1 Ultra: While not directly comparable to dedicated GPUs due to its different architecture, the M1 Ultra is a capable chip for local LLM inference. Its unified memory, configurable up to 128GB, can hold large models, although its speed will not necessarily match a dedicated GPU like the A40 48GB.

Conclusion: A40 48GB - A Solid Choice for Local LLMs

The A40 48GB is a formidable GPU for running local LLMs. Its 48GB of memory lets it run a 4-bit Llama 3 70B model on a single card and run the 8B model at full F16 precision with room to spare. While other GPUs like the RTX 4090 and RX 7900 XTX offer compelling performance, the A40 48GB stands out for the model sizes it can hold, and for smaller models it leaves F16 available as an option for potentially higher accuracy.

FAQs: Demystifying Local LLMs

Q: What is an LLM and why are they so popular?

LLMs are AI systems trained on massive amounts of text data. They've become popular because they can generate creative and informative text, translate languages, and answer questions in a comprehensive way. In practice, they work like a very capable chatbot that can understand and respond to complex prompts.

Q: What is quantization and how does it impact LLM performance?

Quantization is a technique for reducing the size of LLMs by using fewer bits to represent each weight. Think of it like compressing a file - you reduce the file size, but you might lose some information in the process.

In the context of LLMs, quantization reduces memory use and usually speeds up generation, at the cost of some potential accuracy loss. In the benchmarks above, the 4-bit Llama 3 8B model generates tokens about 2.6 times faster than the F16 version.
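The idea can be illustrated with a toy example. The sketch below rounds a handful of made-up weights to 4-bit integers with a single shared scale and then converts them back, showing the small error that the compression introduces. This is a deliberately simplified scheme for illustration; it is not the Q4_K_M algorithm, which works on blocks of weights and is more elaborate.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    # Round to the nearest code and clamp to the signed 4-bit range.
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


# Made-up example weights, not taken from any real model.
weights = [0.12, -0.53, 0.98, -0.05, 0.33, -0.88]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:   ", codes)
print("restored:", [round(r, 3) for r in restored])
print(f"largest round-trip error: {max_error:.4f}")
```

Each weight now needs only 4 bits plus a share of the scale, and the round-trip error is bounded by half the scale. That bounded error is the accuracy cost the compression analogy refers to.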

Q: What do generation and processing mean in the context of LLMs?

- Generation: This refers to the process of creating new text based on a prompt or input. Think of it like asking the LLM to write a story or poem.

- Processing: This refers to reading the input prompt before the first output token is produced. The model can work through the prompt tokens in parallel, which is why processing speeds are much higher than generation speeds.
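Together, the two rates give a rough estimate of how long a response takes: the prompt length divided by the processing speed, plus the output length divided by the generation speed. The sketch below applies this to the Llama 3 70B Q4_K_M figures reported in this article. The function name and the workload (a 1,000-token prompt and a 200-token answer) are hypothetical, and the estimate ignores model loading and other overhead.

```python
def response_time_s(prompt_tokens: int, output_tokens: int,
                    processing_tps: float, generation_tps: float) -> float:
    """Estimated seconds to read the prompt and then write the answer."""
    processing_s = prompt_tokens / processing_tps
    generation_s = output_tokens / generation_tps
    return processing_s + generation_s


# Llama 3 70B Q4_K_M rates on the A40 from this article (tokens per second).
PROCESSING_TPS = 239.92
GENERATION_TPS = 12.08

# Hypothetical workload, chosen only for illustration.
total_s = response_time_s(prompt_tokens=1000, output_tokens=200,
                          processing_tps=PROCESSING_TPS,
                          generation_tps=GENERATION_TPS)
print(f"Estimated response time: {total_s:.1f} s")
```

For a workload like this one, generation accounts for most of the wait even though the prompt is five times longer than the answer, because generation runs at a far lower rate than processing.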

Q: Is the A40 48GB a good option for everyone?

The A40 48GB is a high-end GPU, and its price reflects that. If you're simply experimenting with smaller LLMs or don't need the absolute top performance, other GPUs might be a better fit for your budget.

Keywords

Local LLMs, NVIDIA A40 48GB, GPU, Llama 3, 8B, 70B, Q4_K_M, F16, Token Generation, Processing, Quantization, Performance, Benchmark, Inference