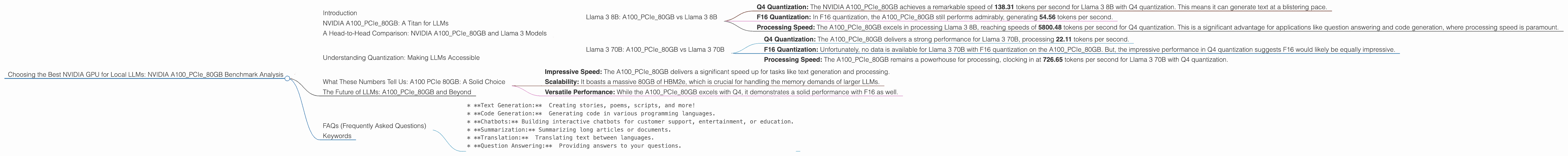

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA A100 PCIe 80GB Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is exploding, and so is the need for powerful hardware to run them locally. With so many GPUs on the market, how do you choose the best one for your needs? Today, we're diving into the NVIDIA A100 PCIe 80GB, a data-center GPU that has become a popular choice for LLM work. We'll dissect its performance on Llama 3 8B and 70B using benchmark data, and see whether it's the king of the hill or a pretender to the throne.

NVIDIA A100 PCIe 80GB: A Titan for LLMs

The NVIDIA A100 PCIe 80GB is a data-center GPU built for compute-heavy workloads. It packs 80GB of HBM2e memory, enough to hold many large LLMs once they are quantized. But how does that translate to real-world performance? Let's dive into the data!

Benchmark Results: NVIDIA A100 PCIe 80GB with Llama 3 Models

Llama 3 8B on the A100 PCIe 80GB

- Q4 Quantization: The NVIDIA A100 PCIe 80GB generates 138.31 tokens per second for Llama 3 8B with Q4 quantization, far faster than anyone can read the output.

- F16 (16-bit floating point, no quantization): At full F16 precision, the A100 PCIe 80GB still performs admirably, generating 54.56 tokens per second.

- Prompt Processing: The A100 PCIe 80GB reads input (prompt) tokens for Llama 3 8B at 5800.48 tokens per second with Q4 quantization. This speed matters most when prompts are long, such as summarizing a document or answering questions about a large block of code, because the whole prompt has to be processed before the first output token appears.
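To see what these throughput figures mean for a single request, here is a rough back-of-the-envelope sketch in Python. It assumes a request takes prompt tokens divided by prompt processing speed plus output tokens divided by generation speed, and that both speeds stay constant as the context grows, which is optimistic. The function name `estimate_latency` and the 2000-token prompt / 500-token answer are illustrative choices, not part of the benchmark.

```python
# Throughput reported above for Llama 3 8B on the A100 PCIe 80GB (Q4 quantization).
PROMPT_TPS_Q4 = 5800.48  # prompt processing, tokens per second
GEN_TPS_Q4 = 138.31      # text generation, tokens per second


def estimate_latency(prompt_tokens: int, output_tokens: int,
                     prompt_tps: float, gen_tps: float) -> float:
    """Rough end-to-end time in seconds for one request.

    Time to read the prompt plus time to generate the answer token by token.
    Assumes both speeds stay constant as the context grows (optimistic for long prompts).
    """
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


if __name__ == "__main__":
    # Illustrative request size: a 2000-token prompt and a 500-token answer.
    seconds = estimate_latency(2000, 500, PROMPT_TPS_Q4, GEN_TPS_Q4)
    print(f"Estimated time: {seconds:.2f} s")
    print(f"  reading the prompt: {2000 / PROMPT_TPS_Q4:.2f} s")
    print(f"  generating the answer: {500 / GEN_TPS_Q4:.2f} s")
```

With the Q4 figures above, that request comes out at roughly 4 seconds, and almost all of it is spent generating the answer rather than reading the prompt.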

Llama 3 70B on the A100 PCIe 80GB

- Q4 Quantization: The A100 PCIe 80GB generates 22.11 tokens per second for Llama 3 70B with Q4 quantization. That is roughly a sixth of the 8B model's speed, but still comfortably usable for interactive chat.

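- F16: No F16 result is available for Llama 3 70B, and that is expected rather than a gap in testing. At 2 bytes per parameter, the 70B model's weights alone take about 140GB, well beyond the card's 80GB, so it cannot run in F16 on a single A100 PCIe 80GB without offloading part of the model or splitting it across GPUs.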

- Prompt Processing: The A100 PCIe 80GB processes prompts for Llama 3 70B at 726.65 tokens per second with Q4 quantization.
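A quick way to check whether a model fits on the card is to multiply its parameter count by the bits stored per weight. The sketch below does that for the weights only (no KV cache, activations, or runtime overhead) using the nominal 8B and 70B parameter counts. It assumes about 4.5 bits per weight for Q4 once per-block scaling factors are included; the exact figure depends on the Q4 variant.

```python
GPU_MEMORY_GB = 80  # A100 PCIe 80GB

# Approximate storage per weight. 16 bits for F16; about 4.5 bits for a typical
# 4-bit format once per-block scales are counted (an assumption; varies by Q4 variant).
BITS_PER_WEIGHT = {"F16": 16.0, "Q4": 4.5}

# Nominal parameter counts, in billions.
MODELS = {"Llama 3 8B": 8.0, "Llama 3 70B": 70.0}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """GB (1e9 bytes) needed for the weights alone.

    (params_billion * 1e9 weights) * (bits / 8 bytes) / 1e9 simplifies to params_billion * bits / 8.
    Excludes the KV cache, activations and runtime overhead, so real usage is higher.
    """
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for model, params in MODELS.items():
        for fmt, bits in BITS_PER_WEIGHT.items():
            need = weight_memory_gb(params, bits)
            verdict = "fits" if need < GPU_MEMORY_GB else "does NOT fit"
            print(f"{model} {fmt}: ~{need:.1f} GB of weights -> {verdict} in {GPU_MEMORY_GB} GB")
```

The 70B model in F16 needs about 140GB for its weights, which rules out a single 80GB card, while the Q4 version at roughly 40GB fits with room left over for the KV cache.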

Understanding Quantization: Making LLMs Accessible

Quantization is a technique that reduces the memory footprint and computational demands of LLMs. Think of it like compressing an image: you lose some detail, but the image retains its essence and is much smaller and easier to share. Q4 stores each weight in roughly 4 bits instead of the 16 bits used by F16, which shrinks the model to a little over a quarter of its F16 size and makes it faster to run, at the cost of a slight drop in output quality compared with F16.
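Here is a simplified, illustrative sketch of 4-bit block quantization in plain Python. It is not the exact scheme that any particular Q4 file format uses. Each block of 32 weights (an assumed block size) shares one floating-point scale, and every weight is rounded to a signed 4-bit integer in [-8, 7]. The helper names `quantize_block` and `dequantize_block` are hypothetical.

```python
import random

BLOCK_SIZE = 32  # weights that share one scale (an illustrative choice)


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map each weight to a signed 4-bit integer in [-8, 7] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Scale so the largest magnitude maps to 7; an all-zero block gets a dummy scale.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights: each 4-bit code times the block's scale."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.02) for _ in range(BLOCK_SIZE)]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(f"scale={scale:.5f}, max abs error={max_err:.5f}")
    # Storage: 2 bytes per weight in F16 versus half a byte per 4-bit code
    # plus the scale, assumed here to be stored as a 2-byte (16-bit) float.
    print(f"F16 bytes: {BLOCK_SIZE * 2}, 4-bit bytes: {BLOCK_SIZE // 2 + 2}")
```

The rounding error for each weight is at most half the block's scale, which is the "lost detail" in the image analogy. Storing 32 weights as 4-bit codes plus a 16-bit scale takes 18 bytes instead of 64, or about 4.5 bits per weight.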

What These Numbers Tell Us: The A100 PCIe 80GB Is a Solid Choice

The A100 PCIe 80GB shines when it comes to running Llama models locally. Even with the much larger Llama 3 70B, it holds its own and delivers usable generation speeds, provided the model is quantized to fit in its 80GB of memory.

Let’s break down specific takeaways:

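- Impressive Speed: With Q4 quantization, the A100 PCIe 80GB generates 138.31 tokens per second for Llama 3 8B and 22.11 tokens per second for Llama 3 70B, and processes prompts at 5800.48 and 726.65 tokens per second respectively.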

- Memory Capacity: Its 80GB of HBM2e is enough to hold Llama 3 70B in Q4 (roughly 40GB of weights), which is what makes running larger LLMs on a single card possible.

- Versatile Performance: The A100 PCIe 80GB is fastest with Q4, but for Llama 3 8B it also delivers a solid 54.56 tokens per second in F16 when you want full precision.
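To put the speed differences into numbers, the short Python snippet below computes ratios from the generation figures reported above. The dictionary layout and the `speedup` helper are only for illustration.

```python
# Generation speed (tokens per second) reported above for the A100 PCIe 80GB.
GENERATION_TPS = {
    ("Llama 3 8B", "Q4"): 138.31,
    ("Llama 3 8B", "F16"): 54.56,
    ("Llama 3 70B", "Q4"): 22.11,
}


def speedup(fast_tps: float, slow_tps: float) -> float:
    """How many times faster the first configuration generates tokens than the second."""
    return fast_tps / slow_tps


q4_vs_f16 = speedup(GENERATION_TPS[("Llama 3 8B", "Q4")],
                    GENERATION_TPS[("Llama 3 8B", "F16")])
small_vs_large = speedup(GENERATION_TPS[("Llama 3 8B", "Q4")],
                         GENERATION_TPS[("Llama 3 70B", "Q4")])

if __name__ == "__main__":
    print(f"Llama 3 8B: Q4 is {q4_vs_f16:.2f}x faster than F16")
    print(f"Q4: Llama 3 8B is {small_vs_large:.2f}x faster than Llama 3 70B")
```

Q4 makes Llama 3 8B generate roughly 2.5 times faster than F16, and at the same Q4 setting the 8B model generates roughly 6 times faster than the 70B model. In both cases the faster configuration has far less weight data to read for each generated token.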

The Future of LLMs: A100 PCIe 80GB and Beyond

The world of LLMs is constantly evolving, and the A100 PCIe 80GB is well positioned to power the next generation. As new models are released with even more parameters, GPUs like the A100 PCIe 80GB will be crucial for local experimentation and development.

FAQs (Frequently Asked Questions)

- Q: What is an LLM?

An LLM, or Large Language Model, is a type of artificial intelligence (AI) that can understand and generate human-like text. Think of it as a super-intelligent chatbot that can write stories, translate languages, and answer your questions.

- Q: What is quantization?

Quantization is a technique used to reduce the size and complexity of LLMs. It stores the model's numbers at lower precision (for example, roughly 4 bits per weight in Q4 instead of 16 bits in F16), which makes the model smaller and faster to run. Like a compressed image, the quantized model loses some detail but keeps its essence.

- Q: What are the benefits of running LLMs locally?

Running an LLM locally means you have full control over your data and can use the model without relying on an internet connection. This is particularly beneficial for applications that require privacy or have limited internet access.

- Q: What are some use cases for local LLMs?

Local LLMs can be used for a wide range of applications, including:

* **Text Generation:** Creating stories, poems, scripts, and more!

* **Code Generation:** Generating code in various programming languages.

* **Chatbots:** Building interactive chatbots for customer support, entertainment, or education.

* **Summarization:** Summarizing long articles or documents.

* **Translation:** Translating text between languages.

* **Question Answering:** Providing answers to your questions.
