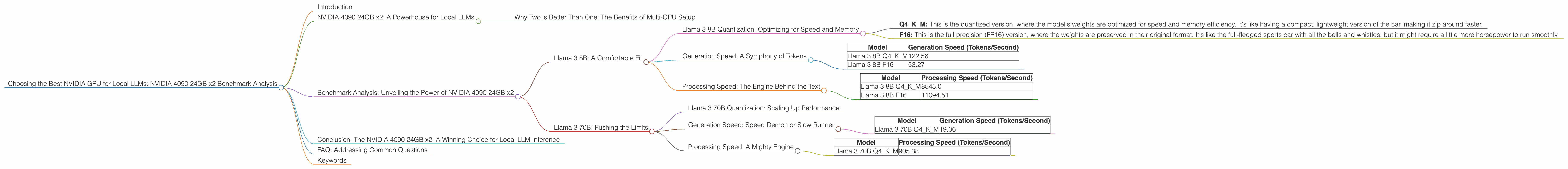

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 4090 24GB x2 Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is buzzing with excitement. These powerful AI models can generate human-like text, translate languages, write creative content, and answer questions. But running LLMs locally on your own machine can be a computational challenge, especially for the larger models, which need large amounts of fast memory. This is where powerful GPUs like the NVIDIA 4090 come into play.

In this article, we're diving deep into the performance of two NVIDIA 4090 24GB GPUs, analyzing their capabilities for running local LLMs. We'll benchmark them using two Llama 3 models, focusing on generation speed (how fast new text is written out) and prompt processing speed (how fast the input prompt is read in). Let's find out if this beastly setup is truly the dream team for local LLM enthusiasts!

NVIDIA 4090 24GB x2: A Powerhouse for Local LLMs

The NVIDIA 4090 with 24GB of GDDR6X memory is currently considered the top-tier consumer GPU, known for its exceptional performance across various tasks, including gaming and AI workloads. Using two of these behemoths in tandem gives you 48GB of combined VRAM, making for a truly insane setup for local LLM inference.

But before we get into the nitty-gritty of the benchmarks, let's understand why two GPUs are so beneficial.

Why Two is Better Than One: The Benefits of a Multi-GPU Setup

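Imagine you have a massive puzzle to complete. On your own, you might not even have a table big enough to lay out all the pieces. With a second person and a second table, each of you can assemble one half. For LLMs, the table is GPU memory: the model's weights have to fit in VRAM, and two 24GB cards give you 48GB to hold them. In the common layer-split setup, the first GPU holds the first half of the model's layers and the second GPU holds the rest, and each token passes through one GPU and then the other. The cards take turns rather than working on the same token at once, so the main benefit of a second card is running models too big for one card, not doubling the speed of models that already fit.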
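One common way to divide a model between two GPUs is a layer (pipeline) split, where each card holds a contiguous block of the model's layers. Here is a minimal Python sketch, assuming layers are handed out in proportion to a per-GPU ratio. The helpers `split_layers` and `token_path` are hypothetical illustrations, not any runtime's actual code; the 80-layer count is that of Llama 3 70B.

```python
# Minimal sketch of a layer (pipeline) split across GPUs.
# Hypothetical helpers for illustration only, not taken from any runtime.


def split_layers(n_layers: int, ratios: list[float]) -> list[int]:
    """Return how many consecutive layers each GPU holds, proportional to ratios."""
    total = sum(ratios)
    counts = []
    assigned = 0
    cumulative = 0.0
    for r in ratios:
        cumulative += r
        # Boundary of this GPU's block, rounded to a whole layer.
        end = round(n_layers * cumulative / total)
        counts.append(end - assigned)
        assigned = end
    return counts


def token_path(counts: list[int]) -> list[tuple[int, int, int]]:
    """(gpu, first_layer, last_layer) in the order each token visits them.

    A token must finish GPU 0's layers before GPU 1 can start on it,
    which is why the cards take turns instead of sharing one token.
    """
    path = []
    start = 0
    for gpu, count in enumerate(counts):
        path.append((gpu, start, start + count - 1))
        start += count
    return path


if __name__ == "__main__":
    counts = split_layers(80, [1, 1])  # Llama 3 70B has 80 layers; even split
    for gpu, first, last in token_path(counts):
        print(f"GPU {gpu}: layers {first}-{last}")
```

With an even split, GPU 0 holds layers 0-39 and GPU 1 holds layers 40-79. An uneven ratio such as `[3, 1]` (60 and 20 layers) is one way to balance cards with different amounts of free memory.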

Benchmark Analysis: Unveiling the Power of NVIDIA 4090 24GB x2

We're putting the NVIDIA 4090 24GB x2 to the test with two popular Llama 3 models: Llama 3 8B and Llama 3 70B. These models are known for their impressive language capabilities, but require a considerable amount of computational horsepower to run effectively. However, the NVIDIA 4090 24GB x2 is more than ready to handle the challenge.

We'll explore their performance in two key aspects:

- Generation Speed: How fast the GPUs produce new tokens of output text (tokens per second)

- Processing Speed: How fast the GPUs read in the input prompt before generation starts, also called prompt processing (tokens per second)

Llama 3 8B: A Comfortable Fit

Let's start with the smaller model, Llama 3 8B. This model is a great choice for those new to local LLM inference, offering a balance between performance and resource requirements. It's like the entry-level sports car: fast enough to be thrilling, but still manageable for everyday use.

Llama 3 8B Quantization: Optimizing for Speed and Memory

The Llama 3 8B model is tested in two formats:

- Q4_K_M: This is a quantized version, where each weight is stored with about 4 to 5 bits instead of 16, shrinking the model to roughly a third of its F16 size. It's like having a compact, lightweight version of the car, making it zip around faster.

- F16: This is the unquantized 16-bit floating-point (half-precision) version, which keeps essentially all of the original weights' accuracy. It's like the full-fledged sports car with all the bells and whistles, but it needs more memory (about 16GB for the 8B model) and more horsepower to run smoothly.
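To show what quantization does to the weights, here is a toy Python sketch of block-wise 4-bit quantization: each block of weights shares one scale, and each weight is stored as a small integer. This is a simplified illustration, not the actual Q4_K_M format (the real k-quant formats add refinements such as super-blocks and quantized per-block scales); the function names are hypothetical.

```python
# Toy block-wise 4-bit quantization. NOT the real Q4_K_M format; it only
# shows the core idea: one shared scale per block plus small integer codes.


def quantize_block(weights: list[float]) -> tuple[float, list[int]]:
    """Map floats to signed 4-bit integers in [-8, 7] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Choose the scale so the largest weight maps to +/-7 (no clipping).
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale: float, codes: list[int]) -> list[float]:
    """Recover approximate weights from the scale and integer codes."""
    return [scale * c for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.5, 0.33, 0.07, -0.21, 0.45, -0.03, 0.28]
    scale, codes = quantize_block(weights)
    restored = dequantize_block(scale, codes)
    print("4-bit codes:", codes)
    print("max error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Storing a 32-weight block this way takes 32 × 4 bits plus a 16-bit scale, 144 bits in total (4.5 bits per weight), versus 512 bits at F16. The rounding error per weight is at most half a scale step, which is the small accuracy loss quantization trades for size.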

Generation Speed: A Symphony of Tokens

Here's how the NVIDIA 4090 24GB x2 performs with the Llama 3 8B model:

| Model | Generation Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 122.56 |
| Llama 3 8B F16 | 53.27 |

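Analysis: The Q4_K_M version of Llama 3 8B generates text about 2.3 times faster than the F16 version (122.56 vs. 53.27 tokens/second). Generating text is limited mostly by memory bandwidth: for every new token, the GPUs have to read all of the model's weights from VRAM. Q4_K_M weights take roughly a third of the space of F16 weights, so there is far less data to move per token, at the cost of a small loss in accuracy. Think of it as running with a lighter backpack: same route, less weight to haul on every step!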
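A back-of-the-envelope way to see why smaller weights mean faster generation: if every new token requires reading all the weights from VRAM once, generation speed cannot exceed memory bandwidth divided by the size of the weights. The Python sketch below assumes about 1,008 GB/s for one RTX 4090 (its published memory bandwidth), weight sizes of 16 GB for F16 and about 4.8 GB for Q4_K_M (nominal 8 billion parameters at 16 and roughly 4.8 bits per weight), and a layer split in which the two cards take turns, so each token still streams the whole model at one card's bandwidth. It ignores compute, KV-cache reads, and transfers between the GPUs.

```python
# Bandwidth-bound ceiling on generation speed (tokens/second).
# Assumptions: ~1008 GB/s per RTX 4090; weights split by layers, so the two
# cards read their halves one after the other and the effective bandwidth for
# one token is that of a single card. Compute and other overheads are ignored.

BANDWIDTH_GB_PER_S = 1008.0

# Approximate weight sizes in GB (nominal 8e9 parameters x bits / 8).
WEIGHT_GB = {"Llama 3 8B F16": 16.0, "Llama 3 8B Q4_K_M": 4.8}

# Measured generation speeds from the table above.
MEASURED_TOKENS_PER_S = {"Llama 3 8B F16": 53.27, "Llama 3 8B Q4_K_M": 122.56}


def generation_ceiling(weight_gb: float, bandwidth: float = BANDWIDTH_GB_PER_S) -> float:
    """Max tokens/s if streaming the weights once per token were the only cost."""
    return bandwidth / weight_gb


if __name__ == "__main__":
    for name, size in WEIGHT_GB.items():
        ceiling = generation_ceiling(size)
        measured = MEASURED_TOKENS_PER_S[name]
        print(f"{name}: ceiling ~{ceiling:.0f} t/s, measured {measured} t/s "
              f"({measured / ceiling:.0%} of ceiling)")
```

Under these assumptions the F16 run reaches roughly 85% of its ceiling and the Q4_K_M run under 60%, which suggests that once the weights are small, other costs (such as dequantization and moving activations between the cards) take a larger share of each token's time. That is one plausible reason the measured speedup is about 2.3x rather than the roughly 3.3x the size ratio alone would predict.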

Processing Speed: The Engine Behind the Text

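Now, let's look at the processing speed, which measures how quickly the model reads in your prompt before it starts writing. Unlike generation, which produces one token at a time, prompt processing handles the prompt's tokens in large batches, which is why these numbers are so much higher. This is like the car's acceleration off the line: it determines how long you wait before the ride really gets going.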

| Model | Processing Speed (Tokens/Second) |
|---|---|
| Llama 3 8B Q4_K_M | 8545.0 |
| Llama 3 8B F16 | 11094.51 |

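Analysis: Interestingly, the F16 version of Llama 3 8B processes prompts about 30% faster than the Q4_K_M version (11,094.51 vs. 8,545.0 tokens/second). This is not because F16 computations are "more accurate". Prompt processing is limited by raw compute rather than memory bandwidth: the GPU runs large matrix multiplications over the whole prompt, and quantized weights add extra unpacking (dequantization) work to every one of them, while F16 weights can be used directly. Even so, generation usually dominates the total time of a response, so the Q4_K_M version's 2.3x generation advantage makes it the more attractive option for most users. It's like having slightly slower acceleration but a much higher cruising speed!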
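To check the claim that generation usually dominates, here is a minimal Python sketch of a simple time model for one request: time to read the prompt plus time to generate the reply. It uses the measured Llama 3 8B speeds from the tables above; the 1,000-token prompt and 500-token reply are hypothetical request sizes, and real runs add fixed overheads this model ignores.

```python
# Simple end-to-end time model for one request:
#   seconds = prompt_tokens / prompt_speed + output_tokens / generation_speed
# Speeds are the Llama 3 8B results from the tables above. The request sizes
# used below are hypothetical; real runs also have fixed overheads.

SPEEDS = {
    # format: (prompt processing tokens/s, generation tokens/s)
    "Q4_K_M": (8545.0, 122.56),
    "F16": (11094.51, 53.27),
}


def request_seconds(fmt: str, prompt_tokens: int, output_tokens: int) -> float:
    """Estimated time to read the prompt and then generate the reply."""
    prompt_speed, gen_speed = SPEEDS[fmt]
    return prompt_tokens / prompt_speed + output_tokens / gen_speed


def break_even_ratio() -> float:
    """Prompt tokens per output token above which F16 would finish first."""
    q_pp, q_tg = SPEEDS["Q4_K_M"]
    f_pp, f_tg = SPEEDS["F16"]
    # F16 saves (1/q_pp - 1/f_pp) s per prompt token but loses
    # (1/f_tg - 1/q_tg) s per output token.
    return (1 / f_tg - 1 / q_tg) / (1 / q_pp - 1 / f_pp)


if __name__ == "__main__":
    for fmt in SPEEDS:
        secs = request_seconds(fmt, 1000, 500)
        print(f"{fmt}: {secs:.2f} s for a 1,000-token prompt and 500-token reply")
    print(f"F16 only wins above ~{break_even_ratio():.0f} prompt tokens per output token")
```

With these numbers, the hypothetical request takes about 4.2 seconds with Q4_K_M versus about 9.5 seconds with F16, and F16 only comes out ahead when the prompt is roughly 395 times longer than the reply.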

Llama 3 70B: Pushing the Limits

Now, let's step up the game and explore the Llama 3 70B model. This is a seriously powerful LLM, capable of generating highly sophisticated text, but it demands a lot of memory: even quantized to Q4_K_M, its weights are too large for a single 24GB card. Think of it as a supercar: it's incredible, but it requires a skilled driver and a dedicated track to shine.

Llama 3 70B Quantization: Scaling Up Performance

Similar to the Llama 3 8B model, the Llama 3 70B also benefits from quantization. The Q4_K_M version optimizes the model for speed and memory usage, while the F16 version requires far more resources.

Generation Speed: Speed Demon or Slow Runner

In terms of generation speed, we only have data for the Q4_K_M version of Llama 3 70B. The F16 version simply does not fit: at 2 bytes per weight, 70 billion parameters take about 140GB, nearly three times the 48GB of combined VRAM on two 4090s. The Q4_K_M version needs a little over 40GB for its weights, which fits across the two cards but would not fit on one.
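The arithmetic behind whether a model fits is simple: weight memory is roughly parameters times bits per weight, divided by 8. The Python sketch below assumes nominal counts of 8 and 70 billion parameters and an average of about 4.8 bits per weight for Q4_K_M (an approximation), and treats two 24GB cards as a 48 GB budget. It counts weights only; the KV cache and runtime buffers need extra room on top.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8.
# Assumptions (not from the benchmark): nominal parameter counts of 8e9 and
# 70e9, and ~4.8 bits per weight on average for Q4_K_M. KV cache, activations,
# and runtime overhead are ignored, so real usage is higher.

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.8,  # approximate average; varies slightly by model
}

VRAM_BUDGET_GB = 2 * 24  # two 24GB cards, in decimal GB for simplicity


def weight_gb(n_params: float, fmt: str) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    return n_params * BITS_PER_WEIGHT[fmt] / 8 / 1e9


def fits(n_params: float, fmt: str, budget_gb: float = VRAM_BUDGET_GB) -> bool:
    """True if the weights alone fit in the given VRAM budget."""
    return weight_gb(n_params, fmt) <= budget_gb


if __name__ == "__main__":
    for name, n in [("Llama 3 8B", 8e9), ("Llama 3 70B", 70e9)]:
        for fmt in BITS_PER_WEIGHT:
            size = weight_gb(n, fmt)
            print(f"{name} {fmt}: ~{size:.1f} GB, fits in 48 GB: {fits(n, fmt)}")
```

That gives about 140 GB for 70B in F16, far beyond 48 GB, and about 42 GB for 70B in Q4_K_M, which fits across two cards with a few gigabytes left for the KV cache but would not fit on a single 24GB card.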

| Model | Generation Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M | 19.06 |

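Analysis: At 19.06 tokens/second, the 70B model generates text about 6.4 times slower than Llama 3 8B Q4_K_M. That is expected: it has nearly nine times as many parameters, and generation speed is limited by how fast those weights can be read from memory. For interactive use, this is still a respectable rate, comfortably faster than most people read.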

Processing Speed: A Mighty Engine

The Llama 3 70B model, like its 8B counterpart, demonstrates a strong processing speed:

| Model | Processing Speed (Tokens/Second) |
|---|---|
| Llama 3 70B Q4_K_M | 905.38 |

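Analysis: At 905.38 tokens/second, the two 4090s would read a 4,000-token prompt for Llama 3 70B Q4_K_M in roughly 4.4 seconds. That is about 9.4 times slower than the 8B Q4_K_M model's 8,545.0 tokens/second, roughly in line with the difference in model size. This is a testament to the power of this GPU setup: a model that cannot run on a single 4090 at all becomes practical for everyday use.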

Conclusion: The NVIDIA 4090 24GB x2: A Winning Choice for Local LLM Inference

The NVIDIA 4090 24GB x2 setup proves to be a formidable combination for local LLM inference. It delivers impressive performance with both the Llama 3 8B and Llama 3 70B models. The Q4_K_M versions generate text much faster and need far less memory than F16, and the 48GB of combined VRAM is what makes running Llama 3 70B Q4_K_M possible in the first place.

Whether you're experimenting with smaller models or tackling the complexities of larger ones, the NVIDIA 4090 24GB x2 offers the raw power to unlock the potential of local LLMs. It's like having a race car in your garage, ready to take you on an exciting journey through the world of AI!

FAQ: Addressing Common Questions

1. Why is quantization so important?

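Quantization stores each of the model's weights with fewer bits, for example about 4 to 5 bits instead of 16. This shrinks the model, lowers memory use, and speeds up text generation, at the cost of a small loss in accuracy. It's like saving a photo as a high-quality JPEG: the file gets much smaller, and most of what matters is still there.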

2. What's the difference between generation and processing speed?

Generation speed is how fast the LLM writes out new tokens, which determines how quickly the reply streams onto your screen. Processing speed (prompt processing) is how fast it reads in your prompt before the first word of the reply appears, which matters most for long prompts or documents. Think of it as the difference between a car's acceleration and its cruising speed: both contribute to the overall trip time.

3. Can I run LLMs on my CPU?

Yes, but the performance will be significantly slower compared to using a GPU. Think of it as driving a car with a very small engine: it might get you there, but it's not going to be a thrilling ride.

4. What about other GPUs besides NVIDIA 4090?

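The NVIDIA 4090 is currently the top-tier consumer GPU for local LLM inference, and pairing two of them is what gives this setup its 48GB of VRAM. Other options can also provide solid performance, depending on your specific needs and budget: the NVIDIA 4080 is cheaper but has 16GB of memory, while the data-center A100 offers 40GB or 80GB of memory at a much higher price.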

5. Should I use a Q4KM or F16 model?

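It depends on your priorities. The Q4_K_M version generates text faster and uses far less memory, at the cost of a small loss in accuracy. The F16 version preserves the most accuracy and processes prompts faster, but needs roughly three times the memory; for Llama 3 70B it does not fit on this setup at all. Think of it like choosing between a compact car for speed and a luxury car for comfort!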

Keywords

LLMs, Local LLM, NVIDIA 4090, NVIDIA 4090 24GB x2, GPU, Benchmark, Llama 3, Llama 3 8B, Llama 3 70B, Quantization, Q4_K_M, F16, Generation Speed, Processing Speed, Tokens/Second, AI, Deep Learning, Machine Learning, Inference, NLP, Natural Language Processing