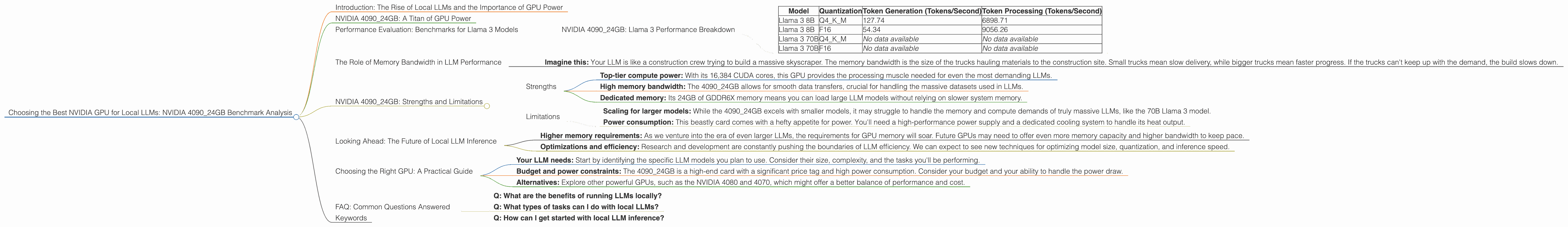

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 4090 24GB Benchmark Analysis

Introduction: The Rise of Local LLMs and the Importance of GPU Power

The world of large language models (LLMs) is exploding, with new models popping up like mushrooms after a rainstorm. We're seeing LLMs used for everything from writing code to generating art, and the potential applications are only just beginning to be explored. With the rise in popularity of these powerful AI models, the need for efficient and powerful hardware to run them locally is becoming increasingly critical.

This article dives deep into the performance of the NVIDIA 4090_24GB GPU – a beastly card known to handle the most demanding tasks – in the context of local LLM inference. We'll dissect benchmarks for popular LLM models like Llama 3 and highlight its strengths and limitations, giving you a detailed picture of how this GPU stacks up for local LLM adventures.

NVIDIA 4090_24GB: A Titan of GPU Power

The NVIDIA 4090_24GB is no ordinary GPU. It's a powerhouse engineered for extreme performance, boasting a whopping 24GB of GDDR6X memory and 16,384 CUDA cores. This makes it an ideal candidate for running large, complex LLMs locally, where memory bandwidth and compute power are paramount.

Performance Evaluation: Benchmarks for Llama 3 Models

Let's delve into the heart of the matter – the benchmarks! We'll focus on Llama 3 models, a top choice for local LLM deployments known for their impressive capabilities.

Key Considerations for Benchmark Analysis:

Quantization: LLMs can be "quantized" to reduce their memory footprint and processing demands. Think of quantization like lossy compression of a file: you lose some detail, but the file becomes much smaller and faster to process. We'll be examining the results for two weight formats:

- Q4_K_M: A mostly 4-bit "k-quant" format from llama.cpp, where the "M" marks the medium variant. Weights are stored in small blocks that share scale factors, which shrinks the model to under a third of its F16 size.

- F16: 16-bit floating-point weights. This is the unquantized half-precision baseline, which preserves the most accuracy but needs the most memory.
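
To make the compression analogy concrete, here is a minimal sketch of block-wise 4-bit quantization in plain Python. It is a simplified symmetric scheme chosen for illustration, not the actual Q4_K_M layout used by llama.cpp, and the function names and sample weights are made up for this example.

```python
def quantize_block(values, bits=4):
    """Symmetric quantization of one block: one float scale plus small integers."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for signed 4-bit values
    scale = max(abs(v) for v in values) / qmax or 1.0  # fall back to 1.0 for an all-zero block
    q = [max(-qmax - 1, min(qmax, round(v / scale))) for v in values]
    return scale, q


def dequantize_block(scale, q):
    """Recover approximate floats from the stored scale and integers."""
    return [scale * x for x in q]


# Illustrative weights only; real blocks typically hold 32 or more values.
weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.45, 0.02]
scale, q = quantize_block(weights)
restored = dequantize_block(scale, q)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("4-bit codes:", q)
print(f"max abs error: {max_err:.4f}")
```

Each weight is stored as a 4-bit integer plus a share of one scale factor, and the rounding error per weight stays within half a quantization step. That is the "lost detail" the file-compression analogy refers to.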

Generation vs. Processing: We'll look at two key metrics:

- Token Generation: Measures how many tokens (the building blocks of text) the model can generate per second. This reflects the speed at which it can produce outputs like responses to prompts.

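- Token Processing: Measures how many input tokens the model can process per second. This most likely corresponds to the prompt-ingestion stage that runs before the first output token appears. Input tokens can be handled in parallel, which is why these figures are far higher than the generation rates.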

NVIDIA 4090_24GB: Llama 3 Performance Breakdown

| Model | Quantization | Token Generation (tokens/second) | Token Processing (tokens/second) |
|---|---|---|---|
| Llama 3 8B | Q4_K_M | 127.74 | 6898.71 |
| Llama 3 8B | F16 | 54.34 | 9056.26 |
| Llama 3 70B | Q4_K_M | No data available | No data available |
| Llama 3 70B | F16 | No data available | No data available |

Analysis:

- Llama 3 8B: The 4090_24GB performs remarkably well with the Llama 3 8B model, reaching 127.74 tokens/second for generation with Q4_K_M quantization, which makes for a snappy, responsive experience. Switching to unquantized F16 weights cuts generation speed to 54.34 tokens/second but raises token processing throughput to 9056.26 tokens/second.

- Llama 3 70B: Unfortunately, we don't have benchmarks for the larger Llama 3 70B model on the 4090_24GB. This is a crucial gap in the data, as it's essential to know how this GPU handles the demands of larger, more complex models.
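
To see what these throughput numbers mean for a single request, the sketch below combines both metrics into a rough end-to-end time. It assumes that token processing covers the prompt, that throughput stays constant regardless of context length, and that the workload (a 2,000-token prompt and a 500-token reply) is a hypothetical example.

```python
# Throughput figures (tokens/second) copied from the benchmark table.
BENCHMARKS = {
    "Q4_K_M": {"generation": 127.74, "processing": 6898.71},
    "F16": {"generation": 54.34, "processing": 9056.26},
}


def estimate_latency(fmt, prompt_tokens, output_tokens):
    """Rough seconds to ingest a prompt and then generate a reply."""
    rates = BENCHMARKS[fmt]
    prompt_time = prompt_tokens / rates["processing"]
    generation_time = output_tokens / rates["generation"]
    return prompt_time + generation_time


# Hypothetical workload: 2,000-token prompt, 500-token answer.
for fmt in BENCHMARKS:
    seconds = estimate_latency(fmt, prompt_tokens=2000, output_tokens=500)
    print(f"{fmt}: about {seconds:.1f} s")
```

Under these assumptions the Q4_K_M run takes about 4.2 seconds and the F16 run about 9.4 seconds. Generation dominates the total in both cases, so the faster processing rate of F16 does little to offset its slower generation.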

Key Observations:

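- Quantization trade-off: Quantization is a double-edged sword. Q4_K_M generates tokens roughly 2.35x faster than F16 (127.74 vs. 54.34 tokens/second), while F16 processes tokens about 31% faster (9056.26 vs. 6898.71 tokens/second) and avoids quantization error. Choosing between them depends on your priorities: fast output and a small memory footprint, or maximum fidelity and processing throughput.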

- Model size matters: The table has no 70B results to compare against, but simple arithmetic suggests why. At F16, 70 billion parameters need roughly 140 GB for the weights alone, and even a 4-bit quantization needs around 40 GB, so neither fits in the card's 24GB of memory. Running the 70B model on a single 4090_24GB would mean offloading part of it to system memory, which typically slows generation sharply.
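
The memory argument can be checked with a few lines of arithmetic. This is a back-of-the-envelope sketch: the parameter counts are nominal (8 and 70 billion), the 4.85 bits per weight for Q4_K_M is an assumed average that varies by model, and the 2 GB overhead allowance for the KV cache and buffers is a guess rather than a measured value.

```python
VRAM_GB = 24.0      # memory on the 4090_24GB
OVERHEAD_GB = 2.0   # assumed allowance for KV cache and buffers (a guess)

# Approximate bits stored per weight. 16 is exact for F16; 4.85 is an
# assumed average for Q4_K_M, and real model files vary.
BITS_PER_WEIGHT = {"F16": 16.0, "Q4_K_M": 4.85}


def weight_size_gb(params_billion, fmt):
    """Weight size in GB: billions of parameters times bytes per parameter."""
    return params_billion * BITS_PER_WEIGHT[fmt] / 8


def fits_in_vram(params_billion, fmt):
    """True if weights plus the assumed overhead fit in GPU memory."""
    return weight_size_gb(params_billion, fmt) + OVERHEAD_GB <= VRAM_GB


for params in (8, 70):
    for fmt in ("Q4_K_M", "F16"):
        size = weight_size_gb(params, fmt)
        verdict = "fits" if fits_in_vram(params, fmt) else "does not fit"
        print(f"Llama 3 {params}B {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

With these assumptions both 8B variants fit on the card, while both 70B variants exceed it by a wide margin.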

The Role of Memory Bandwidth in LLM Performance

You might be wondering why we're so focused on memory bandwidth. Well, think of it like a highway for data. LLMs are data-hungry beasts: to generate each token, they need to read the model's weights from memory. Memory bandwidth represents how much data can be moved along this highway per second.

- Imagine this: Your LLM is like a construction crew trying to build a massive skyscraper. The memory bandwidth is the size of the trucks hauling materials to the construction site. Small trucks mean slow delivery, while bigger trucks mean faster progress. If the trucks can't keep up with the demand, the build slows down.

The 4090_24GB's generous 24GB of GDDR6X memory, coupled with its roughly 1,008 GB/s of bandwidth, makes it a strong contender for handling the data demands of LLMs. However, when you scale to larger models like Llama 3 70B, there is far more data to move per token and, on this card, not enough memory to hold it all, so memory becomes the bottleneck.
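
A common rule of thumb is that single-stream generation cannot run faster than the memory bandwidth divided by the size of the weights, since every weight is read once per token. The sketch below applies that rule to the 8B results. It assumes a bandwidth of about 1,008 GB/s for the RTX 4090 and weight sizes derived from a nominal 8 billion parameters (16 bits per weight for F16, an assumed 4.85 for Q4_K_M); it ignores caches, KV-cache traffic, and compute limits.

```python
BANDWIDTH_GB_S = 1008.0  # assumed memory bandwidth of the RTX 4090


def max_tokens_per_second(weight_gb, bandwidth_gb_s=BANDWIDTH_GB_S):
    """Upper bound on generation speed if all weights are read once per token."""
    return bandwidth_gb_s / weight_gb


# (format, assumed weight size in GB for 8B parameters, measured tokens/second)
cases = [
    ("Q4_K_M", 8 * 4.85 / 8, 127.74),
    ("F16", 8 * 16.0 / 8, 54.34),
]

for fmt, weight_gb, measured in cases:
    ceiling = max_tokens_per_second(weight_gb)
    print(f"{fmt}: ceiling ~{ceiling:.0f} tok/s, "
          f"measured {measured} tok/s ({measured / ceiling:.0%} of ceiling)")
```

The measured F16 figure lands fairly close to this ceiling, which is consistent with generation being limited mainly by memory bandwidth. The Q4_K_M figure sits further below its ceiling, plausibly because unpacking the quantized weights adds compute work.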

NVIDIA 4090_24GB: Strengths and Limitations

Strengths

- Top-tier compute power: With its 16,384 CUDA cores, this GPU provides the processing muscle needed for even the most demanding LLMs.

- High memory bandwidth: Fast GDDR6X memory keeps the compute units fed with model weights, which is crucial for token generation speed.

- Dedicated memory: Its 24GB of GDDR6X memory means you can load large LLM models without relying on slower system memory.

Limitations

- Scaling for larger models: While the 4090_24GB excels with smaller models, it may struggle to handle the memory and compute demands of truly massive LLMs, like the 70B Llama 3 model.

- Power consumption: This beastly card comes with a hefty appetite for power. NVIDIA rates it at 450 W and recommends at least an 850 W power supply, and you'll need a case with good airflow to handle its heat output.
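
To put the power draw in perspective, the following sketch converts the generation rates from the benchmark table into energy per token. It assumes the card draws its full rated 450 W during inference, which is a pessimistic assumption, so the results should be read as upper bounds rather than measurements.

```python
RATED_POWER_W = 450.0  # assumed: NVIDIA's rated board power for the RTX 4090


def joules_per_token(tokens_per_second, power_watts=RATED_POWER_W):
    """Energy per generated token if the card draws the given power throughout."""
    return power_watts / tokens_per_second


# Generation rates (tokens/second) for Llama 3 8B from the benchmark table.
for fmt, rate in (("Q4_K_M", 127.74), ("F16", 54.34)):
    print(f"{fmt}: up to {joules_per_token(rate):.2f} J per generated token")
```

Because the quantized model generates more tokens per second at the same assumed power, it also uses less energy per token.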

Looking Ahead: The Future of Local LLM Inference

The world of LLMs continues to evolve rapidly, with new models becoming increasingly powerful and complex. The NVIDIA 4090_24GB is a formidable tool for local LLM inference, but it's essential to stay aware of the evolving landscape.

- Higher memory requirements: As we venture into the era of even larger LLMs, the requirements for GPU memory will soar. Future GPUs may need to offer even more memory capacity and higher bandwidth to keep pace.

- Optimizations and efficiency: Research and development are constantly pushing the boundaries of LLM efficiency. We can expect to see new techniques for optimizing model size, quantization, and inference speed.

Choosing the Right GPU: A Practical Guide

- Your LLM needs: Start by identifying the specific LLM models you plan to use. Consider their size, complexity, and the tasks you'll be performing.

- Budget and power constraints: The 4090_24GB is a high-end card with a significant price tag and high power consumption. Consider your budget and your ability to handle the power draw.

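- Alternatives: Explore other powerful GPUs, such as the NVIDIA RTX 4080 (16GB) and RTX 4070 (12GB), which might offer a better balance of performance and cost. Keep in mind that their smaller memory limits the model sizes and precisions they can hold.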

FAQ: Common Questions Answered

- Q: What are the benefits of running LLMs locally?

A: Running LLMs locally offers several advantages:

  * Privacy: Your data stays on your device, improving security and privacy.
  * Offline access: Run LLMs even if you're offline or have a poor internet connection.
  * Lower latency: Requests never leave your machine, so there is no network round trip or shared-service queue, which can make responses feel faster than cloud-based solutions.

- Q: What types of tasks can I do with local LLMs?

A: Local LLMs open up a world of possibilities:

  * Code generation: Generate code in various programming languages.
  * Text summarization: Summarize lengthy documents.
  * Translation: Translate text between languages.
  * Creative writing: Generate stories, poems, or articles.
  * Conversational AI: Build chatbots that can hold engaging conversations.
  * Image generation: Generate images based on text prompts.

- Q: How can I get started with local LLM inference?

A: There are several ways to start:

  * llama.cpp: This is a popular open-source library for running LLMs locally.
  * Hugging Face Transformers: This library provides tools for loading, fine-tuning, and deploying LLMs.
  * Google Colab: This cloud-based platform allows you to experiment with LLMs without requiring a powerful GPU.
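
If you go the llama.cpp route, one way to measure generation and processing throughput on your own hardware is the `llama-bench` tool that ships with it. The sketch below only assembles and prints the command, then runs it if the binary is found on your PATH. The model path is hypothetical, the helper name is made up for this example, and nothing here implies that the figures in this article were produced with these exact settings.

```python
import shutil
import subprocess


def build_bench_command(model_path, prompt_tokens=512, gen_tokens=128, gpu_layers=99):
    """Assemble a llama-bench invocation as an argument list."""
    return [
        "llama-bench",
        "-m", model_path,           # path to a GGUF model file
        "-p", str(prompt_tokens),   # prompt length for the processing test
        "-n", str(gen_tokens),      # tokens to generate for the generation test
        "-ngl", str(gpu_layers),    # layers to offload to the GPU
    ]


# Hypothetical model path; point this at a GGUF file you have downloaded.
command = build_bench_command("models/llama-3-8b.Q4_K_M.gguf")
print(" ".join(command))

# Only run the benchmark if llama-bench is actually installed.
if shutil.which("llama-bench"):
    subprocess.run(command, check=False)
```

Setting the GPU layer count to a large value such as 99 is a common way to ask for the whole model to be offloaded, which matters because any layers left on the CPU will drag the numbers down.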

Keywords

Large Language Models, LLM, NVIDIA, GPU, 4090_24GB, Llama 3, Token Generation, Token Processing, Quantization, Q4_K_M, F16, Memory Bandwidth, Local Inference, GPU Performance, Benchmark, Power Consumption, Alternatives, Hugging Face Transformers, llama.cpp, Google Colab.