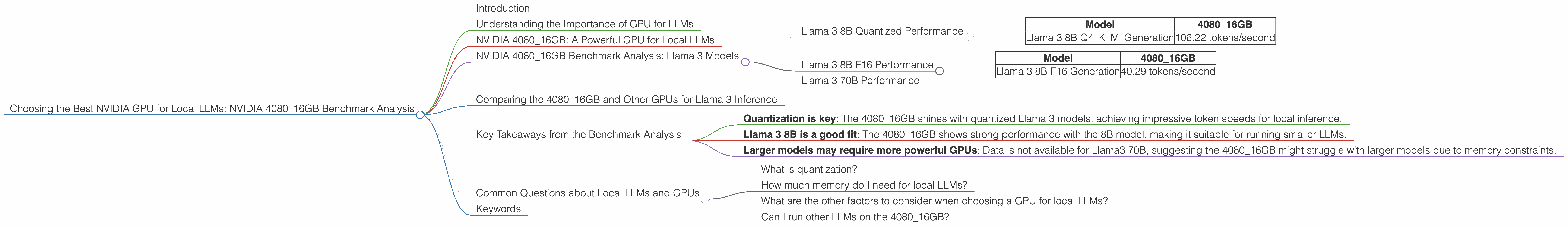

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 4080 16GB Benchmark Analysis

Introduction

The world of Large Language Models (LLMs) is booming, with new models like Llama 2, Falcon, and Mistral constantly pushing the boundaries of AI. These models, trained on massive datasets, can generate human-like text, translate languages, write creative content, and answer questions informatively. Running them locally on your own computer can be a challenge, though, especially with larger models such as the 70B Llama variants. The compute and memory requirements are substantial and call for a high-performance graphics card. Enter NVIDIA, a giant in the GPU space, offering a wide range of GPUs.

In this deep dive, we're focusing on one of NVIDIA's top contenders, the NVIDIA 4080_16GB, and analyzing its performance with popular LLMs like Llama 3. The goal is to show what this GPU can do for local LLM inference and help you decide whether it's the right fit for your needs.

Understanding the Importance of GPU for LLMs

Think of a large language model like a giant puzzle. Each piece of the puzzle represents a piece of text, and the model tries to figure out how the pieces fit together to create a coherent message. This process involves massive calculations. Your CPU, the brain of your computer, is great for general tasks, but it struggles with these complex calculations. That's where a GPU comes in. GPUs are designed for parallel processing, capable of tackling these calculations with lightning speed, letting your LLM run smoothly and efficiently.

NVIDIA 4080_16GB: A Powerful GPU for Local LLMs

The NVIDIA 4080_16GB is a powerful GPU with 16GB of dedicated memory, designed for demanding tasks like gaming, video editing, and yes, running local LLMs. But how does it perform specifically with LLMs? Let's dive into the benchmarks!

NVIDIA 4080_16GB Benchmark Analysis: Llama 3 Models

Llama 3 8B Quantized Performance

The 4080_16GB shows impressive results with the Llama 3 8B model, especially with quantization, a technique that stores the model's weights at lower precision to shrink its size and memory footprint. With Q4_K_M quantization, the 4080_16GB generates 106.22 tokens/second, a strong figure for a local setup.

| Model | 4080_16GB |
|---|---|
| Llama 3 8B Q4_K_M Generation | 106.22 tokens/second |

Llama 3 8B F16 Performance

The 4080_16GB also performs well with the Llama 3 8B model in F16 precision, which stores each weight as a 16-bit float, half the width of FP32, and so halves memory use compared with FP32. In this configuration, the 4080_16GB generates 40.29 tokens/second.

| Model | 4080_16GB |
|---|---|
| Llama 3 8B F16 Generation | 40.29 tokens/second |
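The two results above are easier to compare with a little arithmetic. The sketch below uses only the reported throughput figures; the 500-token response length is an arbitrary value picked for illustration, and the function names are made up for this example.

```python
# Throughput figures reported in the tables above (tokens/second).
Q4_K_M_TOKENS_PER_S = 106.22
F16_TOKENS_PER_S = 40.29


def speedup(fast: float, slow: float) -> float:
    """Return how many times faster `fast` is than `slow`."""
    return fast / slow


def seconds_for(tokens: int, tokens_per_s: float) -> float:
    """Return the time needed to generate `tokens` at a steady rate."""
    return tokens / tokens_per_s


if __name__ == "__main__":
    ratio = speedup(Q4_K_M_TOKENS_PER_S, F16_TOKENS_PER_S)
    print(f"Q4_K_M is about {ratio:.2f}x faster than F16")

    # 500 tokens is a hypothetical response length, not a benchmark setting.
    for name, rate in [("Q4_K_M", Q4_K_M_TOKENS_PER_S), ("F16", F16_TOKENS_PER_S)]:
        print(f"{name}: {seconds_for(500, rate):.1f} s for 500 tokens")
```

On these numbers, the quantized model is roughly 2.6 times faster at generation, which is the difference between a reply arriving in about five seconds and about twelve.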

Llama 3 70B Performance

Unfortunately, we lack data for the 4080_16GB's performance with the larger Llama 3 70B model, in either quantized or F16 precision. This is likely due to the 16GB memory limit of the 4080_16GB, which the larger model's weights would exceed.

Comparing the 4080_16GB and Other GPUs for Llama 3 Inference

It would be useful to see how the 4080_16GB stacks up against other popular GPUs for Llama 3 inference. However, the data available here covers only this card, so we cannot make that comparison.

Key Takeaways from the Benchmark Analysis

- Quantization is key: The 4080_16GB shines with quantized Llama 3 models, achieving impressive token speeds for local inference.

- Llama 3 8B is a good fit: The 4080_16GB shows strong performance with the 8B model, making it suitable for running smaller LLMs.

- Larger models may require more powerful GPUs: Data is not available for Llama 3 70B, suggesting the 4080_16GB might struggle with larger models due to memory constraints.

Common Questions about Local LLMs and GPUs

What is quantization?

Quantization reduces the size of a large language model by storing each of its weights with fewer bits, for example 4 bits instead of 16. Imagine a whole library of books. Quantization is like replacing those books with abridged editions that keep only the essential information, making the library much smaller and quicker to search, at the cost of some detail.
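To make the idea concrete, here is a minimal sketch of 4-bit quantization in plain Python. It is a deliberately simplified scheme (one shared scale for all values, symmetric rounding) and not the Q4_K_M format used in the benchmark, which works on blocks of weights with more elaborate scaling. The function names and sample weights are invented for illustration.

```python
def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integer codes in [-8, 7] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Choose the scale so the largest magnitude lands on code 7.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    weights = [0.12, -0.53, 0.91, -0.07, 0.33]  # made-up example values
    codes, scale = quantize_4bit(weights)
    restored = dequantize(codes, scale)
    for w, c, r in zip(weights, codes, restored):
        print(f"{w:+.2f} -> code {c:+d} -> {r:+.3f}")
```

Each weight is now a small integer that fits in 4 bits, plus one shared scale. The restored values are close to the originals but not identical, which is the accuracy trade-off quantization makes for the smaller size.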

How much memory do I need for local LLMs?

The required memory depends on the size of the LLM you want to run. Smaller models like Llama 3 8B might be feasible on a 16GB GPU, but larger models like Llama 3 70B will likely require a GPU with more memory.
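A rough way to size this up is to multiply the parameter count by the bits stored per weight. The sketch below does that for the weights alone; the 4.5 bits-per-weight figure is an assumed ballpark for 4-bit quantization (formats differ), and real usage will be higher once the KV cache and runtime overhead are added.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    VRAM_GB = 16.0  # memory on the 4080_16GB

    # (label, parameters in billions, bits per weight)
    # 4.5 bits is an assumed average for a 4-bit format, not a measured value.
    configs = [
        ("Llama 3 8B, 4-bit", 8, 4.5),
        ("Llama 3 8B, F16", 8, 16),
        ("Llama 3 70B, 4-bit", 70, 4.5),
        ("Llama 3 70B, F16", 70, 16),
    ]
    for label, params, bits in configs:
        need = weight_memory_gb(params, bits)
        print(f"{label}: ~{need:.1f} GB of weights vs {VRAM_GB:.0f} GB of VRAM")
```

Under these assumptions, the 70B model needs tens of gigabytes for its weights even at 4 bits, well beyond a 16GB card, while the quantized 8B model leaves plenty of headroom.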

What are the other factors to consider when choosing a GPU for local LLMs?

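Besides memory size, consider the GPU's memory bandwidth and compute power. Token generation tends to be limited by how quickly the weights can be read from VRAM, so higher memory bandwidth generally means more tokens per second. A higher number of CUDA cores mainly helps with prompt processing and other compute-heavy work.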
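One reason core count is not the whole story: when generating one token at a time, the GPU typically has to read every weight once per token, so memory bandwidth divided by model size gives a rough ceiling on tokens per second. The sketch below illustrates that rule of thumb. The bandwidth value is an assumption to be checked against the card's spec sheet, the model size is a weights-only estimate, and the function name is made up for this example.

```python
def max_tokens_per_s(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Rough upper bound on generation speed if every token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


if __name__ == "__main__":
    # Assumed memory bandwidth in GB/s; verify against the official specification.
    ASSUMED_BANDWIDTH_GB_S = 716.8

    # Weights-only estimate for an 8B model in F16 (8e9 params * 2 bytes).
    f16_size_gb = 16.0

    ceiling = max_tokens_per_s(ASSUMED_BANDWIDTH_GB_S, f16_size_gb)
    print(f"Estimated F16 ceiling: ~{ceiling:.1f} tokens/second")
    print("Measured F16 result:    40.29 tokens/second")
```

If the assumed bandwidth is right, the measured F16 figure sits just below this ceiling, which is consistent with generation being bandwidth-bound rather than limited by raw compute.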

Can I run other LLMs on the 4080_16GB?

The 4080_16GB is capable of running other LLMs besides Llama 3. The performance will vary depending on the model size and the chosen precision settings.

Keywords

NVIDIA 4080_16GB, GPU, LLM, Llama 3, benchmark, inference, quantization, token speed, CUDA cores, local LLMs, AI, machine learning, performance.