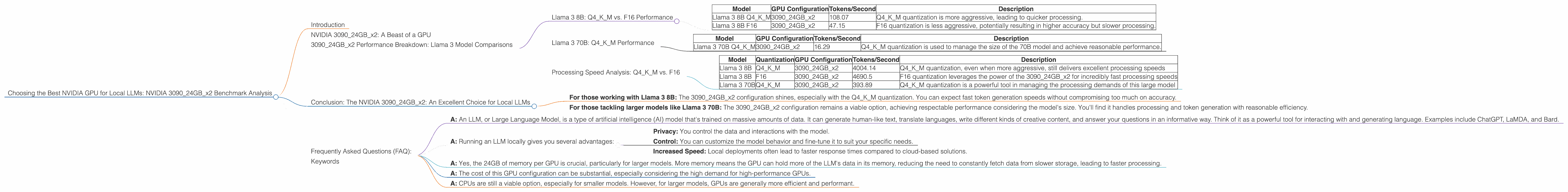

Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 3090 24GB x2 Benchmark Analysis

Introduction

Welcome, fellow language model enthusiasts! Today we're delving into the world of local LLMs (Large Language Models) and dissecting the performance of the mighty NVIDIA 3090 24GB x2 configuration. This dual-GPU setup is a popular choice for running LLMs locally, offering significant speed boosts compared to CPUs, especially for larger models.

Our goal is to shed light on how this specific GPU setup performs when running different LLMs. We will analyze the speeds achieved for various models and quantization configurations.

Think of it as a performance review for a GPU that's been training hard to handle the demands of your favorite LLMs! Let's dive in and see what this beast can do!

NVIDIA 3090 24GB x2: A Beast of a GPU

Let's get our bearings straight: the NVIDIA 3090 24GB x2 is a dual-GPU configuration made up of two 3090 cards, each with 24GB of dedicated memory, for 48GB in total. This means you have a significant pool of memory for storing and processing large language models.

But why are we focusing on this specific configuration?

Well, it's a common choice for developers and enthusiasts who want to run LLMs locally, especially those working with larger models like Llama 3 70B. The main advantage of the dual configuration over a single-GPU setup is memory capacity: a model too large for one 24GB card can be split across both, which keeps the whole model on the GPUs and makes inference (and therefore response times) far quicker than falling back to system memory.

3090 24GB x2 Performance Breakdown: Llama 3 Model Comparisons

Let's dive into the nitty-gritty and see how the 3090 24GB x2 performs with different Llama 3 models.

Llama 3 8B: Q4_K_M vs. F16 Performance

The first model we'll analyze is Llama 3 8B. We're comparing two different weight formats: Q4_K_M and F16. Quantization is a technique that stores the model's weights with fewer bits to reduce its size and increase its efficiency. Think of it as compressing a video file to make it smaller without sacrificing too much quality.

Q4_K_M is a 4-bit quantization format, so it produces a much smaller model that is faster to run, though it may slightly reduce accuracy. F16, strictly speaking, is not quantization at all: it stores the weights as 16-bit floating-point numbers, so the model is larger and slower to generate with but stays closest to the original accuracy.
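To make the idea of quantization concrete, here is a minimal sketch of 4-bit block quantization in plain Python. It is a simplified illustration, not the actual Q4_K_M format, which uses a more elaborate block layout; the function names and sample weights are made up for this example.

```python
# Toy 4-bit block quantization: an illustrative sketch, NOT the real Q4_K_M layout.

def quantize_block(weights):
    """Quantize a block of floats to signed 4-bit integers plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude onto 7, the largest positive signed 4-bit value.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from the 4-bit codes and the block scale."""
    return [scale * c for c in codes]


# Hypothetical weights for one block.
block = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88, 0.44, 0.02]
scale, codes = quantize_block(block)
restored = dequantize_block(scale, codes)
max_error = max(abs(a - b) for a, b in zip(block, restored))

print("4-bit codes:", codes)
print("max round-trip error:", round(max_error, 4))
```

Each weight now needs only 4 bits plus a share of the block's scale, and the round-trip error is the small accuracy cost that quantization trades for size.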

Here's a breakdown of the performance numbers:

| Model | GPU Configuration | Token Generation (Tokens/Second) | Description |
|---|---|---|---|
| Llama 3 8B Q4_K_M | 3090 24GB x2 | 108.07 | 4-bit quantization shrinks the model, leading to quicker generation. |
| Llama 3 8B F16 | 3090 24GB x2 | 47.15 | 16-bit weights preserve accuracy but generate more slowly. |

Observations:

- Faster Generation with Q4_K_M: As expected, the Llama 3 8B model with Q4_K_M quantization shines when it comes to token generation speed. The 3090 24GB x2 configuration generates over 100 tokens per second, which is impressive.

- Trade-offs: While Q4_K_M is faster, keep in mind that the trade-off might be slightly lower accuracy. F16 keeps the full 16-bit weights, so it preserves accuracy at the cost of generating at less than half the speed (47.15 vs. 108.07 tokens per second).
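To put the two generation speeds in perspective, the short sketch below computes the speed ratio and the time each format would need for a reply. The 500-token reply length is a hypothetical value chosen for illustration; the speeds are the ones reported in the table.

```python
# Token generation speeds (tokens/second) for Llama 3 8B on the dual-3090 setup.
GEN_SPEED = {"Q4_K_M": 108.07, "F16": 47.15}


def seconds_to_generate(num_tokens, tokens_per_second):
    """Time needed to generate num_tokens at a steady rate."""
    return num_tokens / tokens_per_second


speedup = GEN_SPEED["Q4_K_M"] / GEN_SPEED["F16"]
print(f"Q4_K_M generates about {speedup:.2f}x faster than F16")

reply_tokens = 500  # hypothetical reply length
for name, tps in GEN_SPEED.items():
    secs = seconds_to_generate(reply_tokens, tps)
    print(f"{name}: about {secs:.1f} s for {reply_tokens} tokens")
```

This assumes a steady generation rate, which is a simplification; real throughput can vary with context length.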

Llama 3 70B: Q4_K_M Performance

Now let's move on to a larger model: Llama 3 70B. We don't have F16 performance data for this model, so we'll only look at the Q4_K_M configuration.

| Model | GPU Configuration | Token Generation (Tokens/Second) | Description |
|---|---|---|---|
| Llama 3 70B Q4_K_M | 3090 24GB x2 | 16.29 | Q4_K_M quantization is used to manage the size of the 70B model and achieve reasonable performance. |

Observations:

- Slower, but Usable: At 16.29 tokens per second, Llama 3 70B generates roughly 6.6 times slower than the 8B model with the same quantization (108.07 tokens per second), but that is still impressive for a model of this size. The 3090 24GB x2 configuration proves its mettle by handling the large model entirely on the GPUs.

- Memory Considerations: Keep in mind that the 70B model demands far more memory than the 8B model. Even at Q4_K_M, its weights are too large for a single 24GB card, so this is where having 24GB on each of two GPUs (48GB combined) really pays off.
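A rough back-of-the-envelope calculation shows why the second GPU matters. The sketch below estimates the size of the weights alone; the parameter counts and the average bits per weight are approximate values assumed for illustration, not figures from the benchmark, and the estimate ignores the KV cache and runtime overhead.

```python
GB = 1e9  # decimal gigabytes; treating a 24GB card as 24e9 bytes is a simplification

# Assumed, approximate values (not from the benchmark data).
PARAMS = {"Llama 3 8B": 8.0e9, "Llama 3 70B": 70.6e9}
BITS_PER_WEIGHT = {"Q4_K_M": 4.85, "F16": 16.0}


def weight_size_gb(num_params, bits_per_weight):
    """Approximate size of the weights alone, ignoring KV cache and overhead."""
    return num_params * bits_per_weight / 8 / GB


def fits(size_gb, num_gpus, gb_per_gpu=24):
    """True if the weights fit in the combined memory of the given GPUs."""
    return size_gb <= num_gpus * gb_per_gpu


for model, params in PARAMS.items():
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        print(f"{model} {fmt}: ~{size:.1f} GB | "
              f"one GPU: {fits(size, 1)} | two GPUs: {fits(size, 2)}")
```

Under these assumptions, the 70B model at Q4_K_M needs both cards, while at F16 it would exceed even the combined 48GB, which may be one reason no F16 figure is available for it.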

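Processing Speed Analysis: Q4_K_M vs. F16

Now, let's shift our focus to processing speed. Token generation measures how fast the model produces output; processing speed, by contrast, measures how quickly the GPU works through tokens it is given, most likely the input prompt before generation starts (often called prompt processing or prefill). It matters most when you feed the model long prompts or large contexts.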

| Model | Quantization | GPU Configuration | Processing (Tokens/Second) | Description |
|---|---|---|---|---|
| Llama 3 8B | Q4_K_M | 3090 24GB x2 | 4004.14 | Q4_K_M, despite the more aggressive quantization, still delivers excellent processing speeds. |
| Llama 3 8B | F16 | 3090 24GB x2 | 4690.5 | F16 posts the fastest processing speed on this setup. |
| Llama 3 70B | Q4_K_M | 3090 24GB x2 | 393.89 | Q4_K_M keeps the processing demands of this large model manageable. |

Observations:

- Q4_K_M & F16: Processing Powerhouse: Both configurations process the 8B model at more than 4,000 tokens per second. F16 is actually the faster of the two here (4690.5 vs. 4004.14 tokens per second), the reverse of the token generation result.

- 70B Model Performance: Llama 3 70B drops to 393.89 tokens per second, roughly a tenth of the 8B rate, which is still an impressive processing speed for a model of its size. This highlights the power of the 3090 24GB x2 configuration for local LLM deployment.
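Processing and generation speeds can be combined into a rough end-to-end estimate of how long a response takes. The sketch below assumes the processing figures measure prompt ingestion (prefill), as is typical for llama.cpp-style benchmarks; the prompt and reply lengths are hypothetical.

```python
# (processing tok/s, generation tok/s) as reported in this article's tables.
SPEEDS = {
    "Llama 3 8B Q4_K_M": (4004.14, 108.07),
    "Llama 3 8B F16": (4690.5, 47.15),
    "Llama 3 70B Q4_K_M": (393.89, 16.29),
}


def response_time(prompt_tokens, output_tokens, prompt_tps, gen_tps):
    """Estimated seconds to ingest the prompt and then generate the reply."""
    return prompt_tokens / prompt_tps + output_tokens / gen_tps


prompt_tokens, output_tokens = 1000, 300  # hypothetical request size
for name, (prompt_tps, gen_tps) in SPEEDS.items():
    total = response_time(prompt_tokens, output_tokens, prompt_tps, gen_tps)
    print(f"{name}: about {total:.1f} s")
```

For a request like this, generation dominates the total time, so the slower generation rate of F16 and of the 70B model matters more than their processing speeds.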

Conclusion: The NVIDIA 3090 24GB x2: An Excellent Choice for Local LLMs

Based on the analysis, the NVIDIA 3090 24GB x2 configuration emerges as a strong contender for local LLM deployment. It offers impressive performance with both smaller (8B) and larger (70B) models, making it a versatile choice for developers and enthusiasts.

- For those working with Llama 3 8B: The 3090 24GB x2 configuration shines, especially with Q4_K_M quantization. You can expect fast token generation speeds without compromising too much on accuracy.

- For those tackling larger models like Llama 3 70B: The 3090 24GB x2 configuration remains a viable option, achieving respectable performance considering the model's size. You'll find it handles processing and token generation with reasonable efficiency.

The 3090 24GB x2 is a strong choice for individuals working with LLMs, especially those who demand speed and efficiency, but be prepared for the high cost.

Frequently Asked Questions (FAQ):

Q: What is an LLM?

- A: An LLM, or Large Language Model, is a type of artificial intelligence (AI) model that's trained on massive amounts of data. It can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in an informative way. Think of it as a powerful tool for interacting with and generating language. Examples include ChatGPT, LaMDA, and Bard.

Q: Why would I want to have an LLM locally?

- A: Running an LLM locally gives you several advantages:

- Privacy: You control the data and interactions with the model.

- Control: You can customize the model behavior and fine-tune it to suit your specific needs.

- Increased Speed: Local deployments avoid network round trips and shared-service queues, which can lead to faster response times compared to cloud-based solutions.

Q: Does a 24GB GPU really make that much of a difference?

- A: Yes, the 24GB of memory per GPU is crucial, particularly for larger models. More memory means the GPU can hold more of the LLM's weights in its own memory, reducing the need to constantly fetch data from slower system RAM or storage, which leads to faster processing.

Q: Is this configuration affordable?

- A: The cost of this GPU configuration can be substantial, especially considering the high demand for high-performance GPUs.

Q: Are there any other ways to run LLMs locally besides using GPUs?

- A: CPUs are still a viable option, especially for smaller models. However, for larger models, GPUs are generally more efficient and performant.

Keywords

NVIDIA, GPU, LLMs, Large Language Models, Llama 3, 8B, 70B, Q4_K_M, F16, Token Generation, Processing Speed, 3090 24GB x2, local, performance, benchmark, quantization, inference