Choosing the Best NVIDIA GPU for Local LLMs: NVIDIA 3090 24GB Benchmark Analysis

Introduction

The world of large language models (LLMs) is exploding, with new models popping up like mushrooms after a spring rain. These models can generate text, translate languages, write creative content, and answer questions. But running them locally is resource-intensive, especially with bigger models like Llama 3 70B. That's where powerful GPUs like the NVIDIA 3090_24GB come in.

This article dives into the performance of the NVIDIA 3090_24GB for running local LLMs, specifically focusing on the Llama 3 model with different sizes and quantization levels. We'll analyze benchmark data to understand its capabilities and limitations, helping you decide if the NVIDIA 3090_24GB is the right GPU for your LLM needs.

NVIDIA 3090_24GB - A Beast of a GPU

The NVIDIA 3090_24GB is a high-end graphics card built for demanding tasks, including gaming, video editing, and, you guessed it, AI workloads! It packs 24GB of GDDR6X memory, and that matters in two ways. The capacity decides which models fit on the card at all, since the weights and the context cache have to sit in VRAM. The memory bandwidth (about 936 GB/s on the spec sheet) largely decides how fast tokens are generated, because producing each new token means reading the model's weights from memory.
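To make the link between memory bandwidth and generation speed concrete, here is a minimal sketch of a common rule of thumb: if every generated token has to read all the weights once, bandwidth divided by model size gives a rough ceiling on tokens per second. The bandwidth figure and the model sizes below are assumptions used for illustration, not measurements, and real throughput will land below the ceiling.

```python
# Rough ceiling on generation speed for a memory-bandwidth-bound GPU.
# Assumption: each generated token reads every model weight from VRAM once.

BANDWIDTH_GB_S = 936.0  # spec-sheet memory bandwidth, used here as an assumed input


def max_tokens_per_second(model_size_gb: float,
                          bandwidth_gb_s: float = BANDWIDTH_GB_S) -> float:
    """Upper bound on tokens/second if weight reads are the only cost."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_s / model_size_gb


# Approximate weight sizes for an 8B-parameter model (assumed, not measured here).
approx_sizes_gb = {"8B at 16-bit": 16.0, "8B at roughly 4-bit": 5.0}

for name, size_gb in approx_sizes_gb.items():
    print(f"{name}: at most ~{max_tokens_per_second(size_gb):.0f} tokens/s")
```

The point of the sketch is the shape of the relationship: a smaller model file raises the ceiling, which is why quantized models tend to generate faster on the same card.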

Benchmarking the NVIDIA 3090_24GB with Llama 3

We're using data from two sources, "Performance of llama.cpp on various devices" by ggerganov and "GPU Benchmarks on LLM Inference" by XiongjieDai, to see how the NVIDIA 3090_24GB performs with the Llama 3 model.

Here's the breakdown of the benchmark data, focusing on the NVIDIA 3090_24GB:

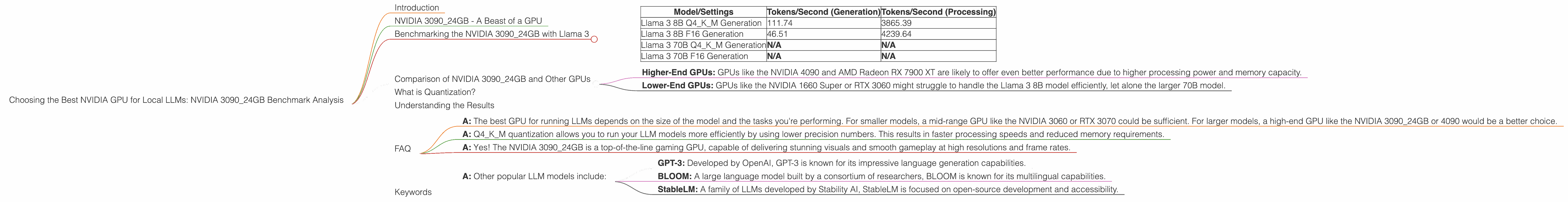

| Model / Quantization | Generation (tokens/second) | Prompt processing (tokens/second) |
|---|---|---|
| Llama 3 8B Q4_K_M | 111.74 | 3865.39 |
| Llama 3 8B F16 | 46.51 | 4239.64 |
| Llama 3 70B Q4_K_M | N/A | N/A |
| Llama 3 70B F16 | N/A | N/A |
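The relationship between the two 8B rows is easier to see as a ratio. The short sketch below divides the Q4_K_M figures by the F16 figures using the numbers from the table above; the dictionary layout and the `ratio` helper are just illustrative names.

```python
# Figures copied from the benchmark table above (tokens/second, Llama 3 8B).
results = {
    "Q4_K_M": {"generation": 111.74, "processing": 3865.39},
    "F16": {"generation": 46.51, "processing": 4239.64},
}


def ratio(metric: str) -> float:
    """Q4_K_M throughput divided by F16 throughput for one metric."""
    return results["Q4_K_M"][metric] / results["F16"][metric]


for metric in ("generation", "processing"):
    print(f"{metric}: Q4_K_M runs at {ratio(metric):.2f}x the F16 rate")
```

Generation comes out at roughly 2.4 times the F16 rate, while prompt processing is slightly below it at about 0.91 times, so the benefit of quantization shows up mainly when generating tokens.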

Key Takeaways:

- Llama 3 8B: The 3090_24GB handles the Llama 3 8B model comfortably, generating about 112 tokens/second with Q4_K_M and about 47 tokens/second with F16, and processing prompts at roughly 3,900 to 4,200 tokens/second.

- Quantization: With Q4_K_M quantization, the 3090_24GB generates tokens about 2.4 times faster than with F16 (111.74 vs. 46.51 tokens/second), while prompt processing is slightly slower (3865.39 vs. 4239.64 tokens/second). Lower precision shrinks the weights that have to be read for every generated token, which speeds up generation, usually at the cost of a small loss in output quality.

- No Llama 3 70B Data: We have no benchmark results for the 3090_24GB with the Llama 3 70B model. The most likely reason is memory: at F16, 70 billion parameters need roughly 140GB for the weights alone, and even a 4-bit quantization needs around 40GB, both well beyond the card's 24GB.
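The memory explanation for the missing 70B results can be checked with a back-of-the-envelope calculation: parameters times bits per weight. The sketch below is an estimate under stated assumptions. The 4.85 bits per weight used for Q4_K_M is an approximate average, not an exact figure, and the calculation ignores the context cache and runtime overhead, so real requirements are somewhat higher.

```python
# Back-of-the-envelope weight-memory estimate: parameters x bits per weight.
# Bits-per-weight values are approximations (assumptions); the estimate
# ignores the KV cache, activations, and runtime overhead.

VRAM_GB = 24.0  # memory capacity of the card discussed in this article

BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q4_K_M": 4.85,  # assumed average; the real figure varies by model
}


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in gigabytes (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8.0


for params in (8.0, 70.0):
    for fmt, bits in BITS_PER_WEIGHT.items():
        size = weight_size_gb(params, bits)
        verdict = "fits" if size < VRAM_GB else "does not fit"
        print(f"{params:.0f}B {fmt}: ~{size:.1f} GB, {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions both 8B variants fit in 24GB and both 70B variants do not, which is consistent with the N/A rows in the table.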

Comparison of NVIDIA 3090_24GB and Other GPUs

We don't have the necessary data to compare the NVIDIA 3090_24GB with other GPUs. However, based on general observations and benchmarks of other models, we can make some assumptions:

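- Higher-End GPUs: The NVIDIA 4090 is likely to be faster thanks to its higher compute throughput and memory bandwidth. It has the same 24GB of memory, though, so it would not fit larger models than the 3090_24GB. Cards like the AMD Radeon RX 7900 XT are also capable, but their LLM performance depends heavily on software support, so we can't rank them without data.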

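- Lower-End GPUs: Cards with less memory, like the NVIDIA 1660 Super or RTX 3060, are more constrained. Llama 3 8B at F16 needs roughly 16GB for its weights alone, so these cards would have to rely on a quantized version, and the larger 70B model is out of reach.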

What is Quantization?

Imagine you want to describe a painting in detail. You can use a super detailed description with every tiny detail, like the brushstrokes, or you can use a simplified description focusing on the main elements.

Quantization is like using a simplified description for your LLM model. It's a technique that reduces the precision of the numbers used in the model, making it smaller and faster.

Think about it this way: your model uses a lot of numbers to learn and understand language. Quantization is like using less precise numbers, but still good enough to get the job done. This lets you run your model faster, like using a simplified description to quickly grasp the essence of the painting.
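To see the idea in numbers, here is a toy sketch of 4-bit quantization using simple absolute-maximum scaling. It is illustrative only: the function names and example weights are made up, and the Q4_K_M format used in the benchmarks relies on a more elaborate block-based scheme than this.

```python
# Toy symmetric 4-bit quantization of a block of weights (absmax scaling).
# Illustrative only: Q4_K_M in llama.cpp uses a more elaborate block format.


def quantize_4bit(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-7, 7] plus one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7.0 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.07, 0.31, -0.88]  # made-up example values
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("codes:", codes)
print("restored:", [round(r, 3) for r in restored])
print("max error:", round(max_error, 4))
```

Each weight is stored as a small integer plus one shared scale, so it takes far fewer bits than a 16-bit float. The restored values are close to the originals but not identical, which is the precision trade-off described above.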

Understanding the Results

The NVIDIA 3090_24GB performs well with the Llama 3 8B model, especially with Q4_K_M quantization, where it generates more than 100 tokens/second. This suggests it's a viable option for local LLM development and experimentation with smaller models.

However, the lack of data for the Llama 3 70B model raises questions about its suitability for larger-scale applications. It is highly likely that a GPU with a larger memory capacity and higher performance would be required for running such massive models locally.

## FAQ

Q: What is a good GPU for running local LLMs?

A: The best GPU for running LLMs depends on the size of the model and the tasks you're performing. For smaller models, a mid-range GPU like the RTX 3060 or RTX 3070 could be sufficient. For larger models that need more memory, a high-end GPU like the NVIDIA 3090_24GB or 4090 would be a better choice.

Q: What is the benefit of using Q4_K_M quantization?

A: Q4_K_M quantization allows you to run your LLMs more efficiently by using lower-precision numbers. This results in faster token generation and reduced memory requirements.

Q: Is the NVIDIA 3090_24GB good for gaming?

A: Yes! The NVIDIA 3090_24GB is a top-of-the-line gaming GPU, capable of delivering stunning visuals and smooth gameplay at high resolutions and frame rates.

Q: What are some other popular LLMs?

A: Other popular LLMs include:

- GPT-3: Developed by OpenAI, GPT-3 is known for its impressive language generation capabilities.
- BLOOM: A large language model built by a consortium of researchers, BLOOM is known for its multilingual capabilities.
- StableLM: A family of LLMs developed by Stability AI, StableLM is focused on open-source development and accessibility.

Keywords

NVIDIA 3090_24GB, GPU, LLM, Llama 3, Llama 3 8B, Llama 3 70B, Benchmark, performance, token speed, quantization, Q4_K_M, F16, local LLM, inference, generation, processing, AI, deep learning, machine learning, natural language processing, NLP